

Most tools count what you can see in an AI response.

We count what AI actually reads to write it.

The standard approach: count mentions in the response.

Most AI visibility tools work like this:

This produces a clean-looking visibility percentage. It's also misleading.

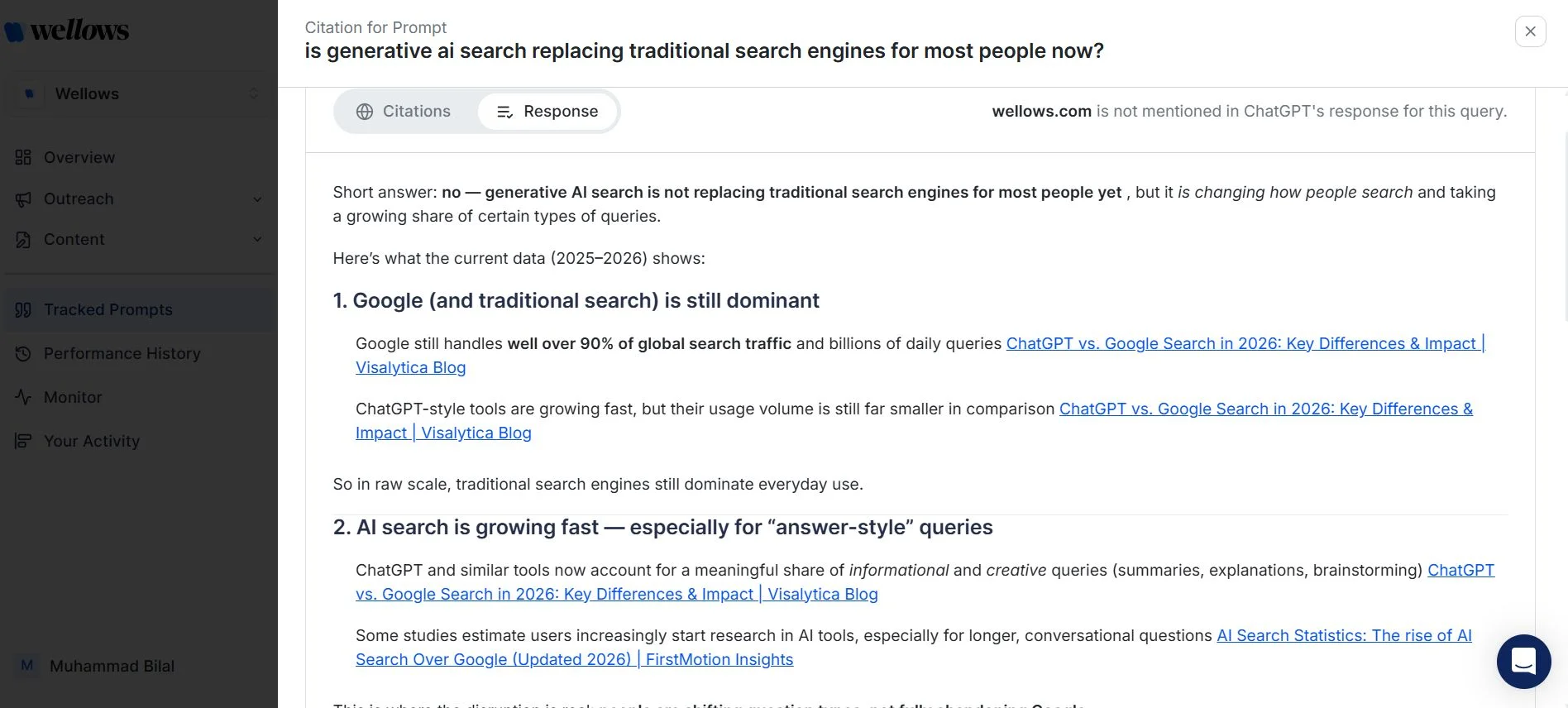

Why? Because mentions in a response are a lag metric. By the time AI is naming you in answers, the work that earned that name happened weeks or months earlier — across a network of sources AI was already reading and trusting.

If you only measure mentions, you can only react. You're always looking in the rear-view mirror.

The Wellows approach: measure the sources AI actually pulls from.

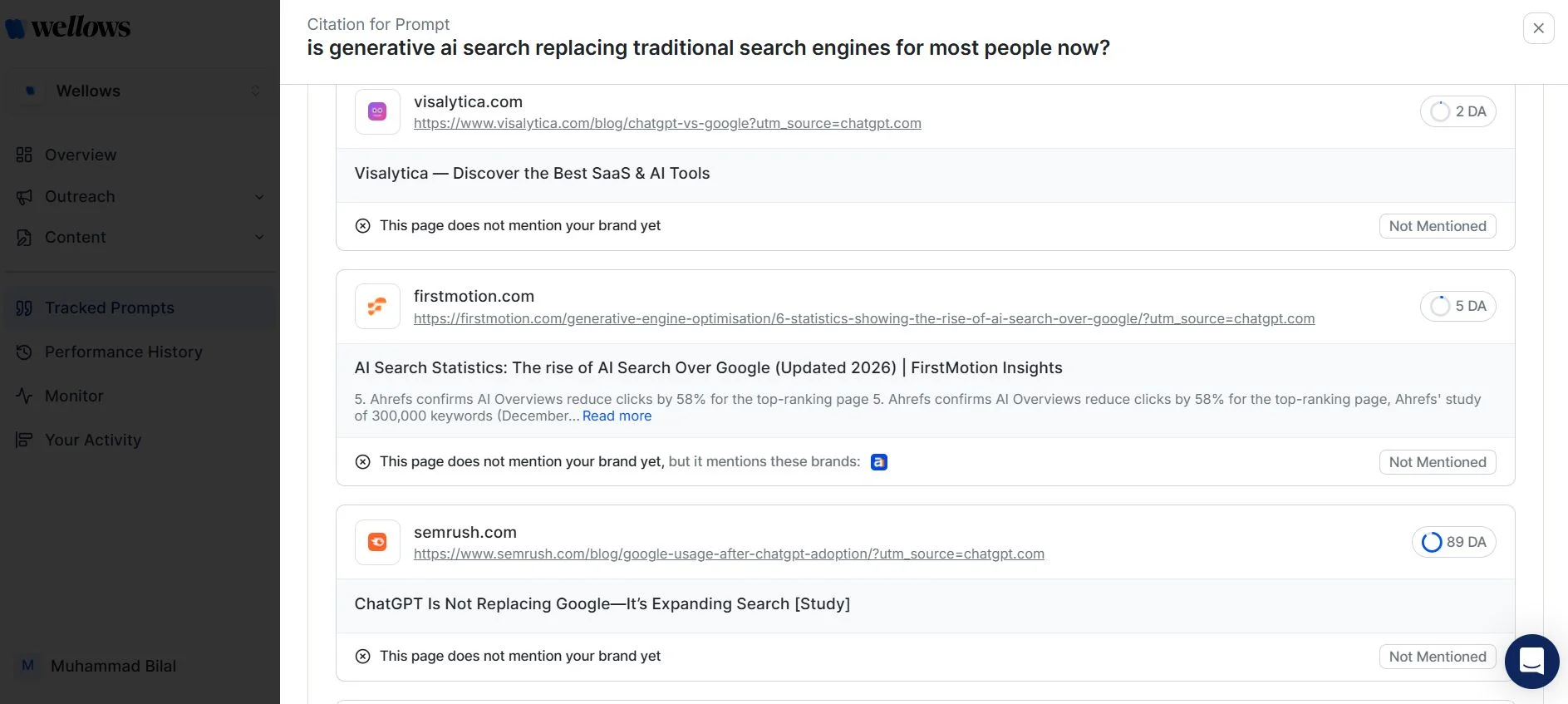

When AI generates an answer, it doesn't pull from thin air. It pulls from a finite set of sources — articles, listicles, comparison pages, reviews, Reddit threads, LinkedIn posts, forums, news sites. Those sources are listed in the citation footer of every modern LLM (ChatGPT, Gemini, Perplexity, AI Overviews, AI Mode).

That citation list is the real signal. It's the leading metric. It's where visibility originates — long before your name shows up in a generated answer.

So Wellows measures two things:

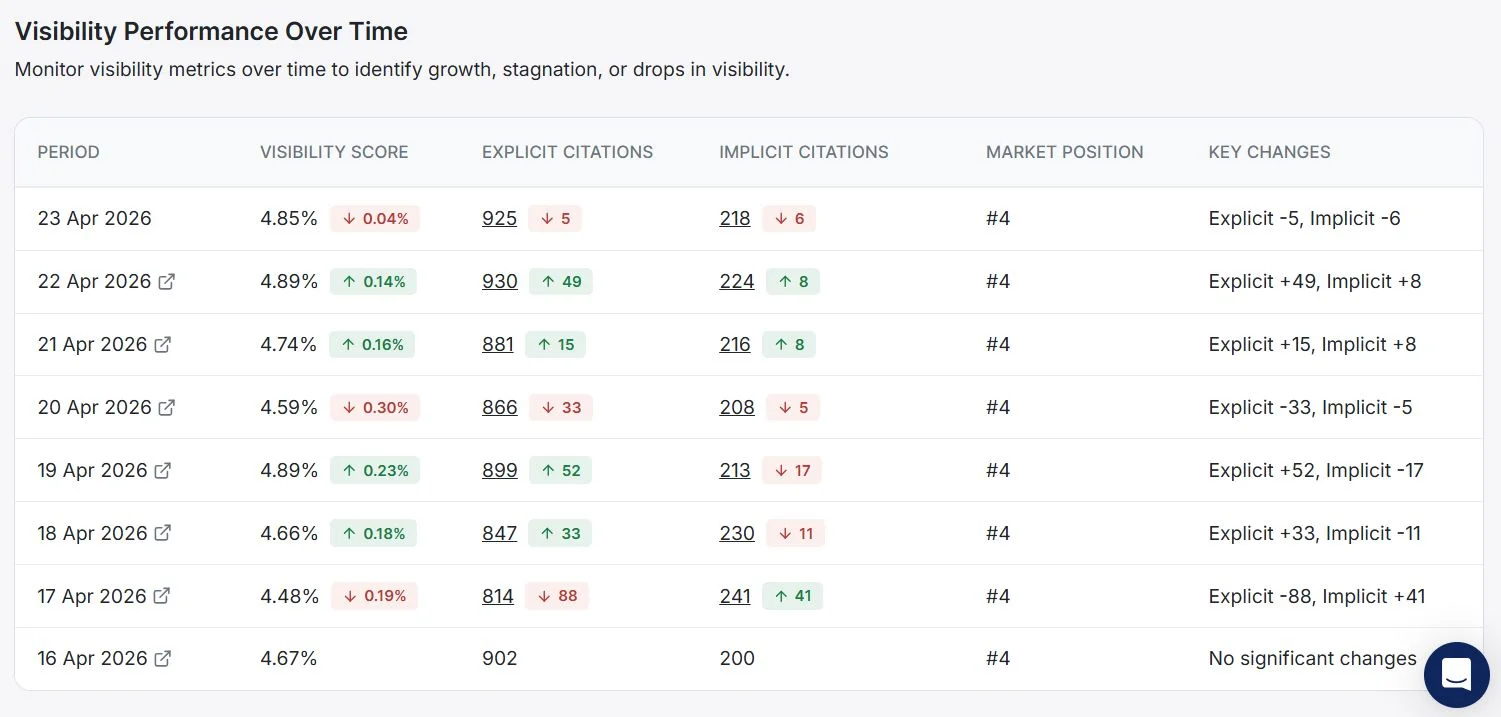

A query is asked. AI generates an answer. The citation footer lists your domain as a source. We count it, by query, by region, by LLM, every day.

The same query runs. AI cites a TechRadar article, a PCMag review, a Forbes feature, a Reddit thread, or a LinkedIn post — and your brand is mentioned inside that content. We index the source, find your mention, and count it.
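As a rough illustration of the two cases above, the sketch below labels one entry from a citation footer as a direct citation, an indirect citation, or neither. The `Citation` record, the `classify` function, and the matching rules (hostname comparison, whole-word brand match) are assumptions made for illustration, not Wellows's documented implementation.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Citation:
    """One entry from an LLM's citation footer (hypothetical record shape)."""
    query: str
    region: str
    llm: str
    url: str
    page_text: str  # text of the cited page, assumed to be fetched separately


def classify(citation: Citation, domain: str, brand: str) -> str:
    """Return 'direct', 'indirect', or 'none' for a single cited source."""
    host = (urlparse(citation.url).hostname or "").lower()
    domain = domain.lower()
    # Direct: the cited URL sits on the brand's own domain or a subdomain of it.
    if host == domain or host.endswith("." + domain):
        return "direct"
    # Indirect: a third-party page whose text mentions the brand as a whole word.
    if re.search(rf"\b{re.escape(brand)}\b", citation.page_text, re.IGNORECASE):
        return "indirect"
    return "none"


# Example with made-up values: a Reddit thread that mentions the brand.
thread = Citation("best crm", "US", "ChatGPT",
                  "https://www.reddit.com/r/saas/", "We moved to Acme last year.")
print(classify(thread, "example.com", "Acme"))  # indirect
```

Matching on the hostname rather than on a substring of the URL avoids counting look-alike domains (for example `notexample.com`) as direct citations.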

| What's measured | Standard tools | Wellows |
|---|---|---|
| Brand name in AI response | ✓ | ✓ |
| Your domain cited as a source | — | ✓ |
| 3rd-party pages cited that mention you | — | ✓ |
| UGC sources (Reddit, LinkedIn, forums) | — | ✓ |
| Tracked across 5 LLMs daily | Sometimes | ✓ |
| Lag metric vs. leading metric | Lag | Leading |
| What it tells you | Where you are | Where to act |

The Wellows score combines all of the above. That's why it correlates with LLM traffic. Most "visibility scores" don't.

One number. Built from three signals.

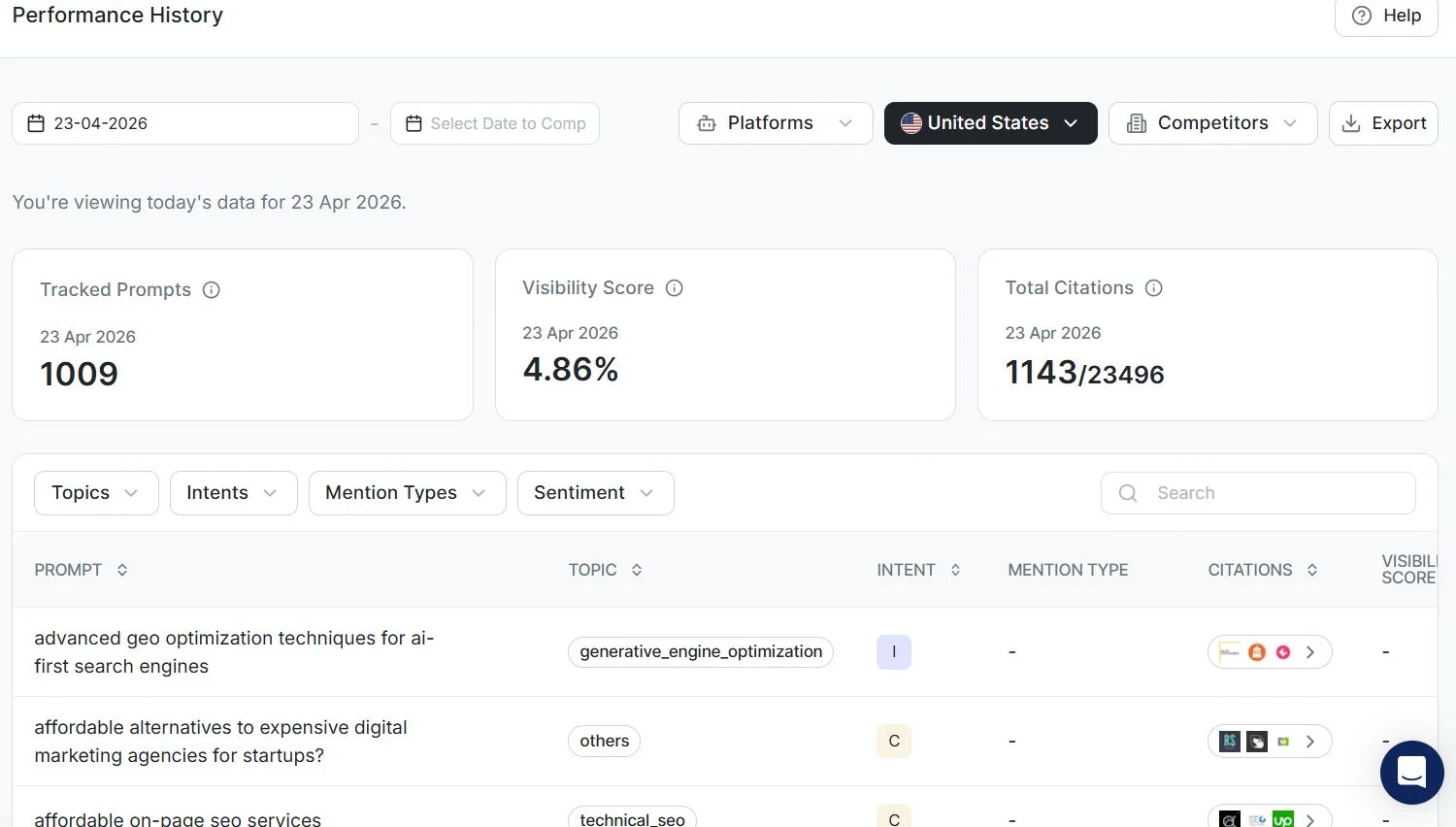

Your Citation Score is calculated from:

- How often AI cites your domain as a source for queries in your category.
- How often AI cites third-party and UGC sources that mention your brand.

It's built from observable, verifiable LLM citations — not modeled, not estimated.

It's tracked daily, so movement is real, not a sampling artifact.

It scales with your category, not against an arbitrary benchmark.
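One way to read the two "how often" inputs is as rates over tracked query runs. The sketch below computes both under that assumption. It deliberately stops short of combining them into one number, because this page does not state how the Citation Score weights its inputs. The function name `citation_rates` and the label strings are hypothetical.

```python
from typing import Iterable, Sequence


def citation_rates(runs: Iterable[Sequence[str]]) -> dict[str, float]:
    """Compute the share of query runs with a direct or an indirect citation.

    Each run is the list of labels ('direct', 'indirect', 'none') assigned to
    the sources in one answer's citation footer.
    """
    runs = list(runs)
    if not runs:
        # No tracked runs: report zero rather than dividing by zero.
        return {"direct_rate": 0.0, "indirect_rate": 0.0}
    direct = sum(1 for labels in runs if "direct" in labels)
    indirect = sum(1 for labels in runs if "indirect" in labels)
    return {
        "direct_rate": direct / len(runs),
        "indirect_rate": indirect / len(runs),
    }


# Four made-up runs: two contain a direct citation, two an indirect one.
sample_runs = [["direct", "none"], ["indirect"], ["none"], ["direct", "indirect"]]
print(citation_rates(sample_runs))  # {'direct_rate': 0.5, 'indirect_rate': 0.5}
```

A run counts once per signal no matter how many of its sources qualify, so a single answer with many citations cannot inflate either rate.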

Built on real, observable LLM behavior.

Every Wellows score is built from:

Across ChatGPT, Gemini, Perplexity, Google AI Overviews and AI Mode

We capture every source the LLM lists, not just what's named in the answer

We crawl the cited pages to identify mentions of your brand, your competitors, and your category

So movement on your dashboard reflects actual change in LLM behavior, not weekly sampling lag

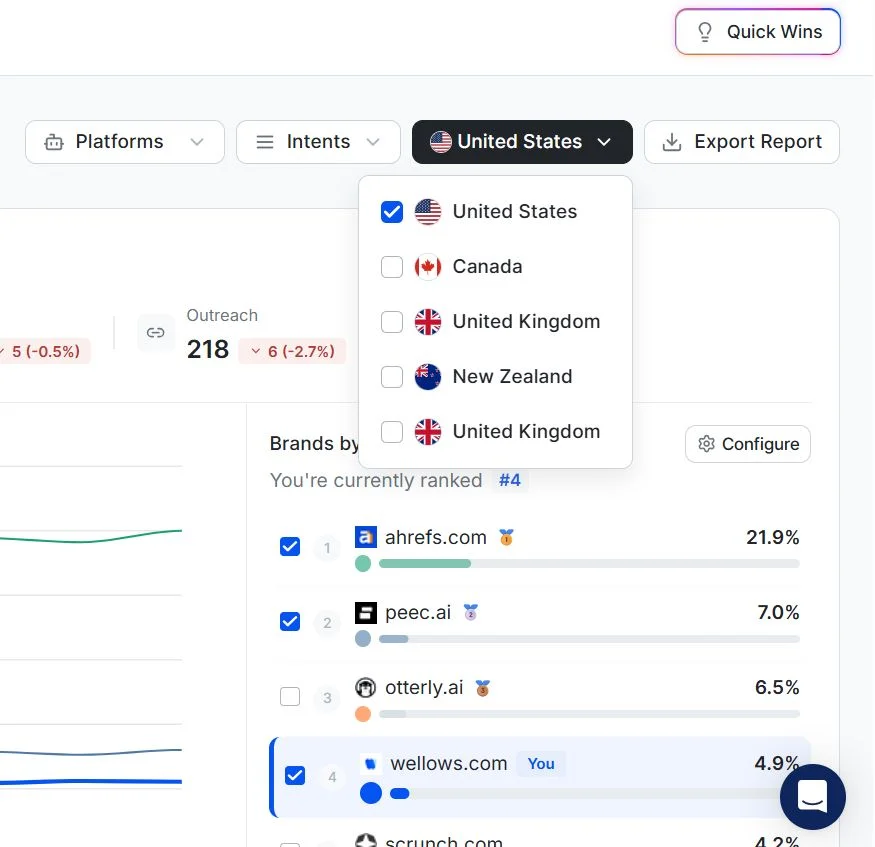

Visibility differs by market, so we measure each region separately
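The per-LLM, per-region, daily breakdown described above amounts to grouping citation observations by a composite key. A minimal sketch, assuming each observation is a `(day, region, llm, cited_domain)` tuple; the tuple layout and the name `tally_citations` are hypothetical.

```python
from collections import Counter
from datetime import date


def tally_citations(observations, domain):
    """Count citations of `domain`, keyed by (day, region, llm)."""
    counts = Counter()
    for day, region, llm, cited_domain in observations:
        if cited_domain == domain:
            counts[(day, region, llm)] += 1
    return counts


# Made-up observations for one day in two regions.
observations = [
    (date(2025, 1, 6), "US", "ChatGPT", "example.com"),
    (date(2025, 1, 6), "US", "ChatGPT", "example.com"),
    (date(2025, 1, 6), "UK", "Gemini", "example.com"),
    (date(2025, 1, 6), "UK", "Gemini", "techradar.com"),
]
counts = tally_citations(observations, "example.com")
print(counts[(date(2025, 1, 6), "US", "ChatGPT")])  # 2
```

Keeping region in the key means a strong result in one market never masks a weak one in another.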

Methodology, sample sizes, and refresh cadence are documented and consistent across customers. Nothing is modeled. Nothing is extrapolated.

Don't take our word for it. Run the math on your own brand.

Connect your domain. Within 20 minutes, you'll see:


Related, but more rigorous. GEO and AEO are emerging fields focused on optimizing for AI search. Most measurement in those fields is still based on response-level mentions. Wellows is a methodology and a platform — built specifically to measure the upstream citation signal that makes any GEO/AEO work successful.

We do — for context. But we don't score you on it. Mentions in a response are downstream of citations. By the time AI is naming you, the citation work that earned that mention happened weeks earlier. Optimizing on a lag metric means you're always behind.

Every citation in your dashboard is observable — you can click it, see the original LLM query, see the citation footer, and visit the cited URL. Nothing is modeled. Nothing is extrapolated. We document our methodology publicly (this page) so it's verifiable.

Any source an LLM cites in the citation footer. That includes major publications (TechRadar, PCMag, Forbes, etc.), category review sites, UGC platforms (Reddit, Quora, LinkedIn), Stack Overflow, GitHub, news outlets, and niche industry sites. We follow the LLMs' citations — we don't pre-select sources.
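To separate the UGC platforms named above from other cited sources, a cited URL can be bucketed by hostname. This is a minimal sketch: the set below contains only the three UGC platforms listed in this answer and is illustrative, not an exhaustive or official list, and `source_type` is a hypothetical helper.

```python
from urllib.parse import urlparse

# Illustrative only: the UGC platforms named in the text above.
UGC_DOMAINS = {"reddit.com", "quora.com", "linkedin.com"}


def source_type(url: str) -> str:
    """Return 'ugc' if the URL is on a known UGC platform, else 'other'."""
    host = (urlparse(url).hostname or "").lower()
    for ugc_domain in UGC_DOMAINS:
        # Match the platform itself and any of its subdomains.
        if host == ugc_domain or host.endswith("." + ugc_domain):
            return "ugc"
    return "other"


print(source_type("https://www.reddit.com/r/saas/"))  # ugc
```

Because the sources are taken from whatever the LLM cites, a bucket like this would only label citations after the fact; it would not decide which sources get collected.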

The principles are identical. The mechanics differ slightly because each LLM exposes its citation footer differently. We document the per-LLM extraction approach in our research paper.