You followed the SEO playbook: you added a title tag, a meta description, keywords, and internal links. The page ranked.

Then came the harder questions: Why isn’t it generating leads? Why are competitors cited in ChatGPT while your page is ignored? Why does it still feel off after multiple rewrites?

That’s not an SEO issue. It’s a content optimization issue. SEO helps you rank, while content optimization helps you get chosen, cited, trusted, and acted on. It has no fixed checklist, score, or finish line. The shift is clear:

Ranking is no longer the same as being found.

This guide explains why content optimization feels way harder than SEO, where it breaks down, and what a realistic framework looks like for agencies, startups, SMEs, and teams that need content to do more than rank.

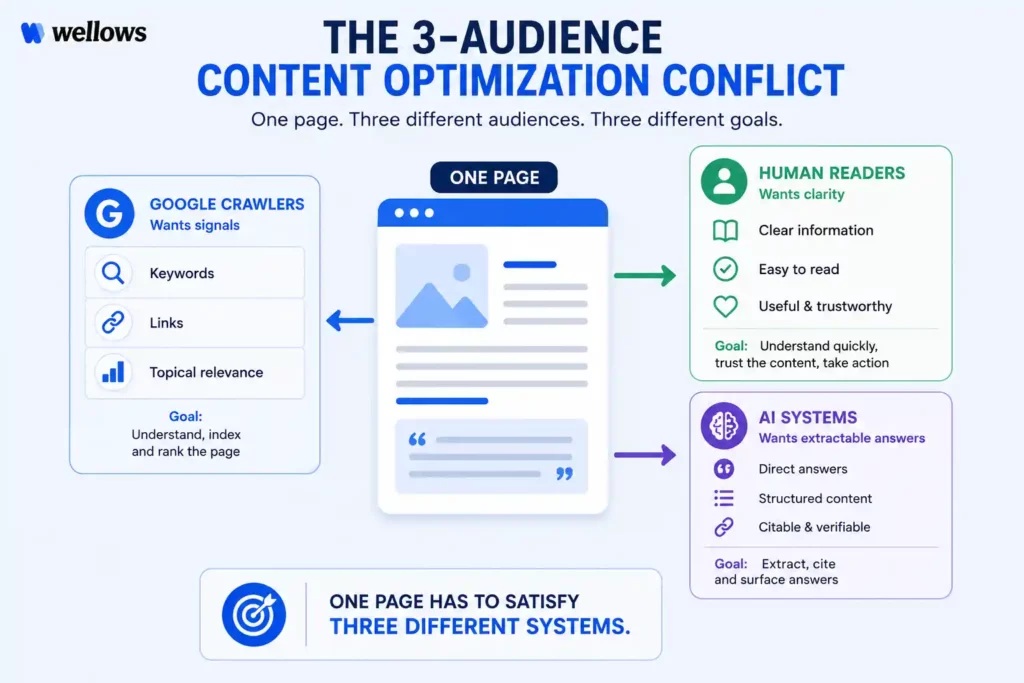

- SEO has rules you can verify. Content optimization has judgment calls you make fresh every time because “good” means something different to Google crawlers, human readers, and AI retrieval systems, and they don’t all want the same thing from the same page.

- Good content does not automatically get cited in AI answers. Structure matters as much as accuracy. A page that buries its answer behind a long introduction will lose a citation to a shorter, cleaner page even if it’s more thorough.

- Optimizing every page equally is the most expensive mistake on this list. Two pages chasing the same prompt don’t double your citation chances. They split them. Decide which page owns each topic before editing begins.

- Content optimization has no completion date. A page that earned citations six months ago may not earn them today because the competitive landscape around that prompt changed. Build a review system that responds to signals, not a calendar.

- Before touching any page, define which audience matters most for that specific piece. The answer changes every editing decision that follows, and skipping this step is why most rewrites produce marginal improvements instead of measurable ones.

The Core Problem: SEO Gives You Rules While Content Optimization Gives You Judgment

Content optimization feels harder than SEO because SEO is mostly rule-based, while content optimization is mostly judgment-based.

SEO rules usually look like this:

- An H1 is either present or missing.

- A meta description is either within range or too long.

- Canonical tags either point to the right URL or they don’t.

These are hard rules: clear, fixable, and verifiable.
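To show how mechanical these checks are, here is a minimal sketch that tests the three rules above on a single HTML document using only Python's standard library. The 160-character limit, the function names, and the example URL are assumptions for illustration; the text only says a description is "within range or too long".

```python
from html.parser import HTMLParser

# Assumed upper bound for a meta description. The article does not give
# a number, so 160 characters is an illustrative choice.
MAX_META_DESCRIPTION = 160


class SeoRuleParser(HTMLParser):
    """Collects the H1 count, meta description, and canonical URL."""

    def __init__(self):
        super().__init__()
        self.h1_count = 0
        self.meta_description = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.meta_description = attr.get("content") or ""
        elif tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")


def check_seo_rules(html, expected_url):
    """Return a pass/fail result for each hard rule."""
    parser = SeoRuleParser()
    parser.feed(html)
    description = parser.meta_description
    return {
        "h1_present": parser.h1_count >= 1,
        "meta_description_in_range": (
            description is not None
            and 0 < len(description) <= MAX_META_DESCRIPTION
        ),
        "canonical_correct": parser.canonical == expected_url,
    }


# Hypothetical page used only to exercise the checks.
page = """
<html><head>
<meta name="description" content="Why content optimization is harder than SEO.">
<link rel="canonical" href="https://example.com/guide">
</head><body><h1>Guide</h1></body></html>
"""
print(check_seo_rules(page, "https://example.com/guide"))
```

Every result is a boolean, which is the point: each rule can be verified without reading the page.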

Content optimization is different. Its questions look more like this:

- Does the section flow?

- Is the answer clear?

- Does the opening match search intent?

- Is the explanation too thin or too dense?

None of these have a simple pass/fail answer.

Hard rules create checklists. Soft rules create drafts that need judgment, revision, and context. That is why the hardest part is not knowing what to improve. It is knowing when you have done enough. SEO has a finish line. Content optimization does not. (Source)

No content scorer can fully judge whether an explanation works for your exact audience. That still requires human judgment, which is slower, costlier, and harder to delegate than a checklist.

Here is what this looks like in practice:

Why Content Optimization Feels Way Harder than SEO: The Three-Audience Problem

Content optimization got harder because one page now has to satisfy three audiences at once: Google’s keyword signals, human readability, and AI retrieval systems.

Pain Point 1: Google Still Wants Keyword Signals

Search crawlers haven’t abandoned keywords. Google’s systems still rely on keyword presence, internal linking structure, and topic clustering to understand what a page covers and where to surface it.

Keyword signals still need to appear in headings. Use too few keywords and rankings can suffer. Use too many and the content starts sounding unnatural.

Here is what getting this wrong looks like:

The first version repeats a keyword several times, while the second explains value and still gives search engines clear context.

Pain Point 2: Human Readers Punish Over-Optimization

What helps Google can hurt the reader. Keyword repetition, long intros, and heavy topic coverage may signal depth, but they can also make content feel slow, robotic, or hard to use.

This is the SEO-readability conflict. Content has to be discoverable, but it also has to help the reader quickly.

Good optimization does not just add more information. It removes friction. Optimizing content for crawlers and optimizing it for humans requires different editing decisions. The mistake is treating them as the same job.

Pain Point 3: AI Retrieval Systems Operate on a Third Rulebook

AI platforms like ChatGPT, Perplexity, Gemini, and Google AI Overviews do not rank pages the same way Google does. They extract answers.

That means content must give a clear answer early. If the answer is buried under background context, AI systems may skip it and cite a clearer competitor instead.

The second version is easier for readers and AI systems to understand, extract, and cite. These structural decisions follow specific AI content optimization strategies built around extraction logic, not keyword logic.

AI retrieval systems reward content that gives the answer first. If your page buries the answer, a clearer competitor can win the citation.

That is why content optimization now means making content clear for humans, structured for Google, and direct enough for AI systems to cite.

Practitioners moving from SEO to GEO workflows echo the same point: AI optimization is not just keyword optimization with a new name. Generative search follows different logic, and teams that miss this often end up rebuilding content that already ranks. (Source)

The 5 Specific Places Where Content Optimization Actually Breaks Down

Understanding the three-audience problem is useful. But the real damage happens during execution.

Most content does not fail because the team ignored SEO. It fails because the page gets pulled in too many directions at once. It tries to satisfy crawlers, readers, and AI systems, but ends up serving none of them well.

Here are the five places where content optimization usually breaks down, with examples that show the difference clearly.

1: Over-Optimization Makes the Page Sound Written for Machines

Keyword stuffing is easy to spot, but over-optimization is more subtle. It happens when every paragraph feels shaped around a target phrase instead of the reader’s actual question.

A section on “content optimization tips” does not need that exact phrase in every third sentence. After a point, repetition stops helping Google and starts weakening trust.

That version is technically on-topic, but it sounds forced. The keyword is controlling the sentence instead of supporting the idea.

The fix is to optimize for semantic coverage, not keyword density. Use the primary keyword naturally, then support it with variations like “editorial improvements,” “on-page revisions,” “content quality upgrades,” and “reader intent.”

2: Strong Content Still Gets Ignored When AI Cannot Extract the Answer

A page can be accurate, detailed, and well-written, yet still fail to appear in AI citations. The reason is usually structure.

AI systems look for clean, direct, reusable answers. If your page spends four paragraphs setting the scene before saying anything useful, it gives the model very little to extract.

AI-friendly content answers first, then explains. A strong opening should define the concept or answer the query before adding any context.

This sounds polished, but it delays the answer. A reader has to keep going, and an AI system has no clear sentence to cite.

That sentence works because it gives the answer before the context. It is useful for humans and easy for AI systems to extract.

For teams diagnosing why a page is missing from citation pools, Wellows’ AI content optimization workflow compares your page against the URLs AI engines already cite. Instead of guessing, you can see which structural elements your competitors use and where your page falls short.

3: “Quality” Means Different Things to Google, Readers, and AI

This is where many audits go wrong. A page can score 91 out of 100 in a content tool and still fail because it answers the wrong question.

Quality is not one thing. For Google, it often means topical depth and clear relevance. For readers, it means clarity and usefulness. For AI systems, it means directness, structure, and extractability.

That page may pass the audit, but still fail the reader.

A content score can support optimization, but it should not define quality on its own. The real test is whether the page solves the user’s problem quickly and clearly.

4: Generative AI Competitor Analysis Needs a New Method

Traditional competitor analysis looks at keywords, backlinks, and domain authority. That still matters for classic SEO, but it does not fully explain why a page gets cited in ChatGPT, Perplexity, Gemini, or AI Overviews.

In generative search, the better question is not “Who ranks above us?” It is “Which URL is the AI using to answer this prompt, and why?”

That approach may help SEO, but it misses the reason the AI system chose the page.

What matters is not just who the competitor is. It is what their cited page does better than yours.

5: Content Maintenance Has No Permanent Finish Line

Technical SEO fixes can stay fixed for a long time. A corrected canonical tag or an added alt attribute may not need constant attention.

Content optimization is different. Every content decision has a shelf life.

Statistics expire. Search intent shifts. Competitors improve their pages. AI systems change what they retrieve. A page that earned citations in Q1 may disappear by Q3 if it has not been reviewed.

By then, the page may have lost rankings, citations, and reader trust at the same time.

This is the maintenance problem most teams underestimate. Content performance rarely collapses overnight. It erodes slowly, page by page, until rankings, engagement, and AI visibility all start telling the same story.

HubSpot research found that roughly 1 in 10 blog posts generates more traffic month over month rather than declining over time. The consistent pattern across those posts: they are regularly updated, clearly structured, and directly answer questions people keep searching. Decay is not inevitable. It is a maintenance decision.

Is Content Optimization Harder Than SEO for Businesses?

For individual creators, content optimization is frustrating. For agencies, startups, and SMEs, it becomes a business problem because stakeholders expect proof, timelines, and measurable progress.

What Businesses Are Being Asked Right Now

Every team is now facing some version of the same question: “Why isn’t our brand showing up when people ask ChatGPT about our category?”

For startups, this comes up in investor conversations. For SMEs, it starts when a founder sees a competitor recommended in Perplexity. For agencies, the question turns into a reporting problem: clients want to know why competitors appear in AI answers and what can be done about it.

AI search optimization reporting for agencies is the process of tracking client visibility across AI search platforms and showing which prompts mention the brand, which URLs get cited, which competitors own citation share, and what content changes can improve visibility across ChatGPT, Perplexity, and Google AI Overviews.

The problem is that AI search visibility is not a fixed ranking. It is a citation probability that can be influenced through structure, clarity, and answer quality.

That distinction matters. McKinsey research found that 50% of consumers now use AI-powered search for buying decisions. If a brand is missing from those answers, it may lose consideration before a search results page ever appears.

Businesses need more than “better content.” They need a way to show which prompts matter, which competitors are being cited, and what structural gaps are keeping their pages out of AI answers.

How to Run a Generative AI Visibility Analysis

The brands cited in ChatGPT, Perplexity, and Google AI Overviews are not always the brands with the most backlinks. They are often the brands with content that AI systems can extract from easily.

Here is a practical process any team can run regardless of size.

- Step 1: Identify buyer prompts. List 10 to 15 real questions buyers ask about your category, written as full-sentence prompts.

- Step 2: Track cited sources. Run those prompts in ChatGPT, Perplexity, and Google AI Overviews. Record which URLs and brands appear.

- Step 3: Audit cited pages. Check whether those pages answer quickly, define terms clearly, and place key information in the first 150 words.

- Step 4: Compare against your page. Find where your content delays the answer, buries definitions, or lacks clear structure.
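The tracking in Step 2 can be tallied with a short script. This is a sketch under stated assumptions: the observations are entered by hand after running each prompt, and the prompts, domains, and function names are hypothetical placeholders, not real data.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical observations recorded manually in Step 2:
# (prompt, engine, list of cited URLs).
observations = [
    ("What is the best CRM for a five-person startup?", "ChatGPT",
     ["https://rival.example/crm-guide", "https://you.example/crm"]),
    ("What is the best CRM for a five-person startup?", "Perplexity",
     ["https://rival.example/crm-guide"]),
    ("How should a small team choose a CRM?", "ChatGPT",
     ["https://rival.example/crm-guide", "https://other.example/pick"]),
]


def citation_share(rows):
    """Share of all recorded citations that each domain received."""
    counts = Counter()
    for _prompt, _engine, urls in rows:
        for url in urls:
            counts[urlparse(url).netloc] += 1
    total = sum(counts.values())
    return {domain: count / total for domain, count in counts.items()}


def uncited_prompts(rows, own_domain):
    """Prompts where own_domain was not cited by any engine."""
    seen, cited = set(), set()
    for prompt, _engine, urls in rows:
        seen.add(prompt)
        if any(urlparse(url).netloc == own_domain for url in urls):
            cited.add(prompt)
    return sorted(seen - cited)


print(citation_share(observations))
print(uncited_prompts(observations, "you.example"))
```

The list of uncited prompts is a reasonable starting point for Steps 3 and 4, since those are the prompts where a competitor's page is being extracted and yours is not.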

This is where traditional content audits often miss the real issue.

That gap only appeared after tracking visibility engine by engine and topic by topic. A standard SEO audit would not have surfaced it.

Manual analysis works for 10 prompts. But real businesses often need to monitor 40 to 60 high-value prompts across multiple AI engines. Wellows makes this repeatable by scraping cited URLs per query and showing the structural gaps between cited pages and your content.

Will Choosing an Affordable On-Page Content Optimization Service or a Premium Agency Make It Easier?

Many teams ask this when content optimization starts feeling harder than expected. Neither a platform nor an agency removes the need for strategic judgment, but the right choice can make the process much easier to manage.

When an affordable on-page content optimization service makes sense

An affordable service fits small businesses, startups, and single-site projects that need focused page-level fixes, not a full growth program.

It works best when your team can handle strategy and publishing internally but needs help with structure, readability, AI citation gaps, and optimization recommendations.

This is the right choice when budget matters and you can implement changes without external support.

When a premium agency is worth it

A premium agency makes sense for competitive niches, larger sites, or businesses where organic and AI search traffic directly drives revenue.

Agencies add value through custom strategy, competitor analysis, content production, and performance accountability across many pages.

That depth matters most when execution capacity becomes the bottleneck.

A simple decision rule: Start with an affordable service for specific, measurable fixes across a defined set of pages. Move to a premium agency when strategy, scale, or hands-on execution becomes the constraint.

Red flags worth knowing before you commit:

Avoid providers that promise guaranteed rankings, use vague deliverables, or cannot explain exactly what they will optimize and why.

Good on-page content optimization should clearly define the changes, target pages, and expected outcomes.

If an affordable service only offers automated keyword edits without structural reasoning, it will not close the gap between ranking and being cited in AI answers.

For teams that want agency-level decision-making without the retainer cost, Wellows sits in the middle.

It runs domain-level audits, identifies which pages have the strongest claim to each target prompt, surfaces competitor citation gaps, and routes work toward optimization or new content creation before editing begins.

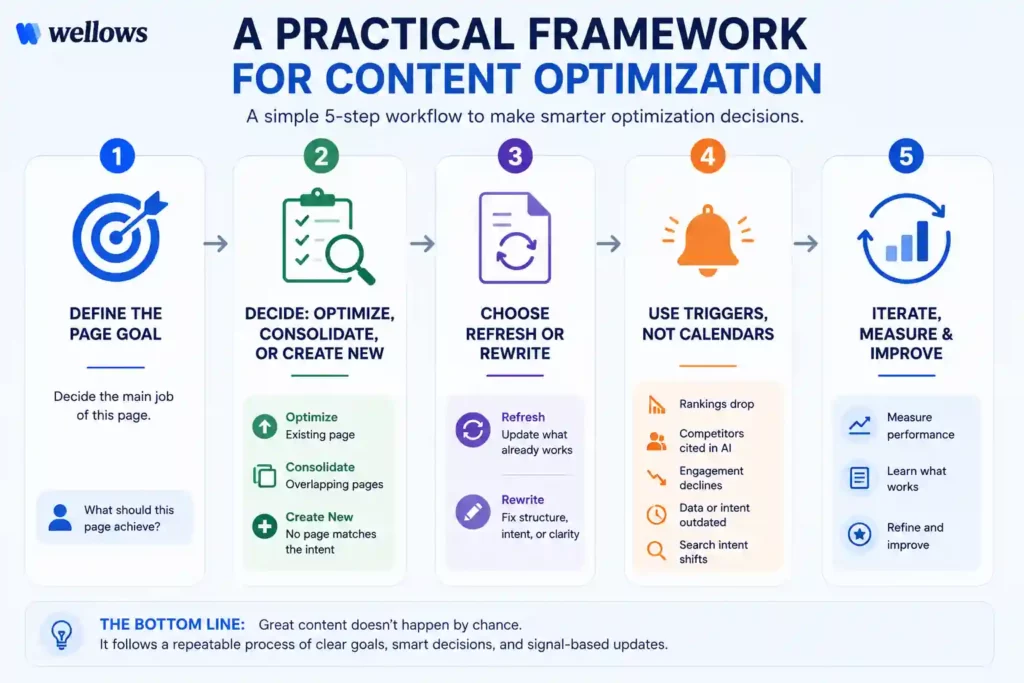

A Practical Framework for Making Content Optimization Manageable

Content optimization becomes easier when you stop editing every page the same way. Before rewriting anything, decide what the page is supposed to win: Google rankings, AI citations, reader trust, or conversions.

Once that goal is clear, the edits become sharper, faster, and easier to measure.

Step 1: Decide the Page’s Main Job Before Editing

The biggest mistake teams make is editing without a target. One person improves readability. Another adds keywords. A third pushes CTAs. The page gets “better,” but not more effective.

Before touching the draft, write one sentence at the top of the brief:

That one line changes the whole workflow. If the goal is AI citations, the opening needs a direct answer. If the goal is conversion, the page needs stronger proof, objections, and CTAs. If the goal is Google rankings, the page needs better topical coverage and internal links.

A useful rule: assign one primary goal per page. Secondary goals can exist, but they should not control the edit.

Step 2: Check Whether You Should Optimize This Page at All

Not every weak page deserves an update. Sometimes the better move is to consolidate, redirect, or improve a stronger page that already exists.

Before editing, search your own site for the target topic. Look for pages that overlap in intent, rankings, backlinks, or AI citation potential.

Use this quick decision rule:

- Optimize if the page already matches the intent but needs clearer structure, fresher data, or stronger examples.

- Consolidate if two pages answer the same question and split authority.

- Create new if no existing page satisfies the query or prompt properly.
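The decision rule above can be written down as a small function, which may help when applying it across a long topic list. This is only a sketch: deciding whether a page "matches the intent" is still a human judgment, and the inventory, URLs, and function name here are hypothetical.

```python
def page_action(matching_pages):
    """Apply the optimize / consolidate / create rule to one topic.

    matching_pages: URLs on your own site that a reviewer judged to
    answer the target query or prompt.
    """
    if not matching_pages:
        return "create new"
    if len(matching_pages) == 1:
        return "optimize"
    return "consolidate"


# Hypothetical inventory: topic -> own pages that already answer it.
inventory = {
    "content optimization framework": ["/blog/framework"],
    "ai citation tracking": ["/blog/ai-citations-2021", "/guides/ai-citations"],
    "review triggers": [],
}

for topic, pages in inventory.items():
    print(f"{topic}: {page_action(pages)}")
```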

Here is the common failure: a team expands a weak 2021 blog post to 3,000 words while a stronger guide on the same topic already exists elsewhere on the site. Now both pages compete for the same ranking or AI citation.

The better move is to strengthen the best existing asset, then redirect or merge the weaker one.

For tool selection, keep it simple. AI content optimization tools can help, but choose based on the job. Surfer SEO is useful for on-page scoring. Clearscope helps with semantic gaps. MarketMuse works for larger topic maps. Wellows is built for AI citation gaps and prompt-level competitive analysis, including surfacing whether any existing page is cannibalizing another before a single edit is made.

For teams with no prior SEO background, Surfer SEO and Hemingway Editor are the easiest on-page editors to start with. For teams whose main concern is understanding why competitors appear in AI answers, Wellows surfaces those structural gaps directly without requiring SEO knowledge to act on the output.

Step 3: Choose Between a Refresh and a Rewrite

A refresh is enough when the page is mostly right but outdated. A rewrite is needed when the page answers the wrong intent or is too hard for readers and AI systems to extract from.

Start with the first 150 words. That is where most optimization problems show up.

Ask three questions:

- Does the opening answer the main query directly?

- Can a reader understand the page’s value without scrolling?

- Could an AI system extract a clean definition or answer from it?

If the answer to all three is yes, refresh the page. Update stats, examples, screenshots, internal links, and missing sections.

If the answer to any of them is no, rewrite the structure first. Do not waste time adding new data to a page with a weak opening or outdated angle.

For small businesses asking which content optimization service is best for improving readability scores, the better question is whether readability alone is enough. Wellows helps answer that by checking if a page is not only easy to read, but also structured for AI extraction, LLM citations, and ranking opportunities.

Hemingway Editor is useful for sentence-level readability, and Grammarly helps with grammar and clarity, but both stop at improving the text itself.

Step 4: Use Triggers Instead of Random Review Cycles

Reviewing every page on a fixed schedule sounds organized, but it wastes time. Some pages need attention sooner. Others can wait.

Build review triggers into your content workflow. A page should be reviewed when performance signals change, not just because the calendar says so.

Use these triggers:

- A priority keyword drops meaningfully.

- A competitor appears in ChatGPT, Perplexity, or AI Overviews for your target prompt.

- Engagement falls by 20% or more.

- Stats, screenshots, pricing, or product details become outdated.

- Search intent shifts and the page no longer matches what users want.

This keeps optimization focused. You are not editing for the sake of editing. You are responding to clear signals that the page is losing relevance, visibility, or usefulness.
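The triggers above can be sketched as a simple check that runs over whatever metrics a team already collects. The 20% engagement threshold comes from the list; the five-position rank threshold is an assumption, since "meaningfully" is not defined in the text, and the field names are hypothetical.

```python
from dataclasses import dataclass

ENGAGEMENT_DROP_THRESHOLD = 0.20  # from the trigger list: "20% or more"
RANK_DROP_THRESHOLD = 5           # assumption: what counts as "meaningfully"


@dataclass
class PageSignals:
    url: str
    rank_before: int          # position for the priority keyword, 1 = top
    rank_now: int
    engagement_before: float  # any consistent engagement metric
    engagement_now: float
    competitor_cited_in_ai: bool
    has_outdated_facts: bool
    intent_shifted: bool


def review_triggers(page):
    """Return the reasons this page should be reviewed, if any."""
    reasons = []
    if page.rank_now - page.rank_before >= RANK_DROP_THRESHOLD:
        reasons.append("priority keyword dropped")
    if page.competitor_cited_in_ai:
        reasons.append("competitor cited for target prompt")
    if page.engagement_before > 0:
        drop = (page.engagement_before - page.engagement_now) / page.engagement_before
        if drop >= ENGAGEMENT_DROP_THRESHOLD:
            reasons.append("engagement fell by 20% or more")
    if page.has_outdated_facts:
        reasons.append("stats or product details outdated")
    if page.intent_shifted:
        reasons.append("search intent shifted")
    return reasons


# Hypothetical page: rank is roughly stable, engagement fell from 100 to 75.
page = PageSignals(
    url="https://example.com/guide",
    rank_before=3, rank_now=4,
    engagement_before=100.0, engagement_now=75.0,
    competitor_cited_in_ai=False,
    has_outdated_facts=False,
    intent_shifted=False,
)
print(review_triggers(page))
```

An empty list means the page can be left alone, which is as much the point of a trigger system as knowing when to act.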

Content Optimization Mistakes That Make It Feel Harder than SEO

SEO feels easier because most mistakes are visible. A missing title tag, broken canonical, or weak internal link can be found and fixed.

Content optimization is harder because the mistakes are quieter. A page can look polished, score well in a tool, and still fail because it answers the wrong intent, competes with another page, or is hard for AI systems to extract.

1: Optimizing Every Page Like It Deserves Equal Attention

Not every page should be optimized with the same effort. Some pages support the topic. Others should be the main answer.

When every page gets equal treatment, authority spreads thin. In AI search, this is especially risky because two similar pages from your own site can compete for the same prompt.

This is the decision Wellows’ cannibalization-aware engine was built for. Before any optimization begins, it scans the entire domain to identify which page has the strongest claim to each target prompt and routes the work accordingly. Two pages never end up competing for the same citation when the decision gets made upfront.

This is one reason content optimization feels harder than SEO. You are not just fixing pages. You are deciding which pages deserve to win.

2: Improving Readability While Breaking AI Extractability

A cleaner reading experience is important, but it should not remove the structure AI systems use to understand and cite the page.

Editors often combine short sections, remove repeated headings, or turn Q&A blocks into smoother prose. The page may read better, but it can lose the clear answer blocks that made it useful for AI retrieval.

The goal is not to choose between humans and AI. The goal is to make the page easy to read and easy to extract.

3: Using Keyword Density as a Quality Score

Keyword density is one of the fastest ways to make content feel optimized while making it worse.

Modern search and AI systems understand context better than exact-match repetition. If a page repeats the same phrase too often, it can sound written for crawlers instead of people.

The better signal is topical completeness. A strong page answers the main question, covers the next obvious questions, and leaves fewer gaps than competing pages.
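For teams that still want a number, a density figure is better used as a warning light for over-repetition than as a quality score. Here is a minimal sketch of how such a figure might be computed; the tokenization, the function name, and both sample passages are made up for illustration.

```python
import re


def keyword_density(text, phrase):
    """Fraction of words in text that belong to exact occurrences of phrase."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    hits = sum(
        1 for i in range(len(words) - n + 1) if words[i:i + n] == target
    )
    return hits * n / len(words)


# Invented sample passages, not taken from any real page.
stuffed = (
    "Content optimization tips help. These content optimization tips "
    "are the best content optimization tips."
)
natural = (
    "Content optimization tips work best when each edit answers a "
    "reader question, closes a gap, or removes friction."
)

print(round(keyword_density(stuffed, "content optimization tips"), 2))
print(round(keyword_density(natural, "content optimization tips"), 2))
```

A high value flags a passage worth rereading aloud. A low value says nothing about whether the page covers the topic, which is why topical completeness still needs a human check.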

4: Treating Content Optimization Like a One-Time Task

Technical SEO fixes can often stay fixed. Content does not work that way.

Search intent changes. AI retrieval behavior shifts. Competitors update their pages. Statistics expire. A page that worked six months ago may now be outdated without looking broken.

Bottom line:

Content optimization feels harder than SEO because it has fewer fixed rules. The solution is not more random edits. It is clearer intent, stronger page selection, cleaner structure, and review triggers that catch performance drift early.

The teams that win are not the ones that update everything constantly. They are the ones that know which pages to improve, what to preserve, and when a page actually needs attention.

The brands that compound content performance over time aren’t the ones who publish the most. They’re the ones who built a system that knows when to act and when to leave a page alone.

FAQs

SEO refers to technical and structural signals that help search engines crawl and rank pages: title tags, backlinks, site speed, and internal linking. Content optimization focuses on making the actual content more useful, clear, and satisfying for readers and AI systems. SEO gets the page found. Content optimization determines whether it performs after it is found. Both are necessary. Neither replaces the other.

Wellows is the easiest starting point for beginners who want to understand why a page is not ranking or getting cited in AI answers, because it turns content gaps into clear, prompt-level actions. For sentence-level readability, Hemingway Editor is still useful, while Surfer SEO’s Content Editor helps with basic on-page guidance. The difference is that Wellows connects optimization to AI visibility, content structure, and ranking opportunities, not just writing scores.

There is no universal schedule that works across topics. Fast-moving topics in AI, finance, or technology should be reviewed every three to six months. More stable topics can hold for twelve months. The more reliable approach is behavioral. If a page drops in rankings, loses AI citations, or shows significant engagement decline, that signals a review regardless of when it was last edited.

Prioritize services that include readability analysis, on-page content review, and specific recommendations, not just a score delivered without an action path. The most useful services explain what to change and why it matters for your specific audience. For small businesses with tight budgets, self-serve platforms with guided workflows tend to deliver better ROI at entry-level price points than fully managed services.

The best content optimization platform for agencies depends on whether they need AI citation tracking, content scoring, or client reporting.

Wellows is best for agencies focused on AI search visibility across ChatGPT, Perplexity, and Google AI Overviews. It tracks citation share by prompt, detects cannibalization, supports multi-domain workspaces, and helps show clients measurable AI visibility progress.

Semrush is best for agencies that need an all-in-one SEO, content, and reporting platform. Its client reporting tools make it useful for full-service SEO campaigns.

Surfer SEO is best for optimizing and refreshing content across multiple client sites. It helps identify underperforming pages and improve page-level content scores.

Clearscope is useful for agencies focused on content quality and writing consistency, but it is less suited for AI visibility tracking or agency-level reporting.

For most agencies, start with Wellows for AI citation visibility, add Surfer SEO for page-level content optimization, and use Semrush if broader SEO reporting, keyword, and backlink tools are needed.

Conclusion

Content optimization feels way harder than SEO because one page now has to serve three audiences: search crawlers, human readers, and AI retrieval systems. Each one values something different.

The solution is not to optimize everything for everyone. It is to decide what each page should win, then edit around that goal.

SEO helps search engines find your content. Content optimization helps that content get read, trusted, cited, and acted on.

That is why SEO has checklists, while content optimization needs judgment, structure, and regular review.

Wellows helps make this process more measurable by showing where your content falls short for AI citations, answer clarity, and ranking opportunities, so your optimization decisions are based on evidence instead of guesswork.