AI search engines no longer surface information by ranking pages alone. They generate answers by selecting sources they trust, reuse, and validate across multiple related queries. If your content is not trusted at the model level, it can be left out of AI answers regardless of how well it ranks organically.

That is also why many “visibility” playbooks now start with how to rank in ChatGPT: in LLM answers, selection depends on trust and reuse, not blue-link position.

This shift matters because AI-generated summaries sit above traditional results, answer queries directly, and often replace clicks to websites. Google AI Overviews now appear on 13.14% of U.S. searches, up from 6.49% just two months earlier. That is more than a doubling in two months, signaling a rapid shift toward AI-first results. (Search Engine Land)

Being a trusted source in AI search now determines whether AI engines reference your content, include it in answers, or overlook it entirely, which makes trust and citation the new cornerstone of visibility in generative search.

- AI search engines prioritize trust signals, not traditional rankings, when selecting sources for answers.

- Being cited in AI answers depends on clarity, consistency, and alignment with established entities and facts.

- Trusted sources are reinforced through repeated usefulness across similar queries, not one-time performance.

- Content structure, factual grounding, and reduced ambiguity directly influence whether AI systems reuse information.

- Trust in AI search compounds over time, making visibility a result of sustained credibility rather than short-term optimization.

What Is Meant by a Trusted Source in AI Search?

AI search engines no longer evaluate pages only by rank or recency. They select trusted sources based on how consistently a source is reused, validated, and aligned with consensus knowledge across multiple queries. If a source reduces uncertainty for the model, it is reused. If it introduces ambiguity, it is ignored.

A trusted source in AI search is therefore a citation anchor, not just a publisher. These sources are repeatedly referenced when AI systems generate answers because they provide clear explanations, stable facts, and entity-aligned context, not just indexed content.

The Ranking vs. Trust Gap in AI Search

| | Then: Traditional Google Search | Now: AI Search & Overviews |
|---|---|---|
| Visibility | Position in the SERP | Citation or reuse |
| Success measured by | Clicks | Mentions |
| What gets rewarded | SEO rewards optimization | GEO rewards reliability |

What Makes a Source Trusted in AI Search?

A source becomes trusted in AI search when it consistently helps AI systems reduce uncertainty while generating answers. Large language models don’t look for popularity signals alone; they evaluate whether a source explains a topic clearly, aligns with known entities, and can be reused safely across similar queries. Trust forms when content behaves predictably under repeated AI interpretation.

You can think of trust as model confidence. If a source repeatedly delivers accurate, structured, and context-aware explanations, AI systems learn that citing it leads to fewer contradictions and better answers. Over time, these sources are favored, even if they don’t dominate traditional rankings.

AI systems evaluate trust signals such as:

- Topical consistency → Sources that explain the same concept clearly across multiple related queries are reused more often.

- Entity alignment → Content that matches established entities (brands, standards, institutions) is easier for AI to ground.

- Clarity and structure → Well-organized explanations reduce hallucination risk during answer generation.

- Consensus reinforcement → Sources that align with broader web consensus are seen as safer to cite.

These signals directly map to Generative Engine Visibility Factors, which explain why some pages become citation anchors while others remain invisible in AI answers.

How Do AI Answer Engines Select Which Sources to Trust?

AI answer engines assemble responses by evaluating patterns, not one-off performance. They reinforce sources that repeatedly help generate accurate answers.

- Interpret intent and expand the query space: AI engines map the query to an intent (informational, transactional, research) and generate close variants to reduce ambiguity and cover edge cases.

- Pull candidate sources from retrieval layers: They retrieve a set of potential sources from indexed web pages, knowledge graphs, and other trusted corpora to ground the answer in evidence.

- Score sources for clarity and extractability: Sources that explain the concept cleanly, define terms, and provide scannable passages are easier to quote and reuse, so they rank higher in selection.

- Validate against entities and consensus signals: AI checks whether claims align with known entities (brands, institutions, standards) and whether the explanation matches broader consensus patterns.

- Favor sources with repeatable usefulness: If a source has helped answer similar questions without contradictions, the model leans on it again because it lowers the risk of errors.

- De-prioritize ambiguity and weak grounding: Pages with vague claims, missing context, outdated details, or inconsistent framing get filtered out because they increase hallucination risk.

- Generate the answer with grounded references: The final response is assembled from the most reliable passages, often blending multiple sources to support coverage and confidence.

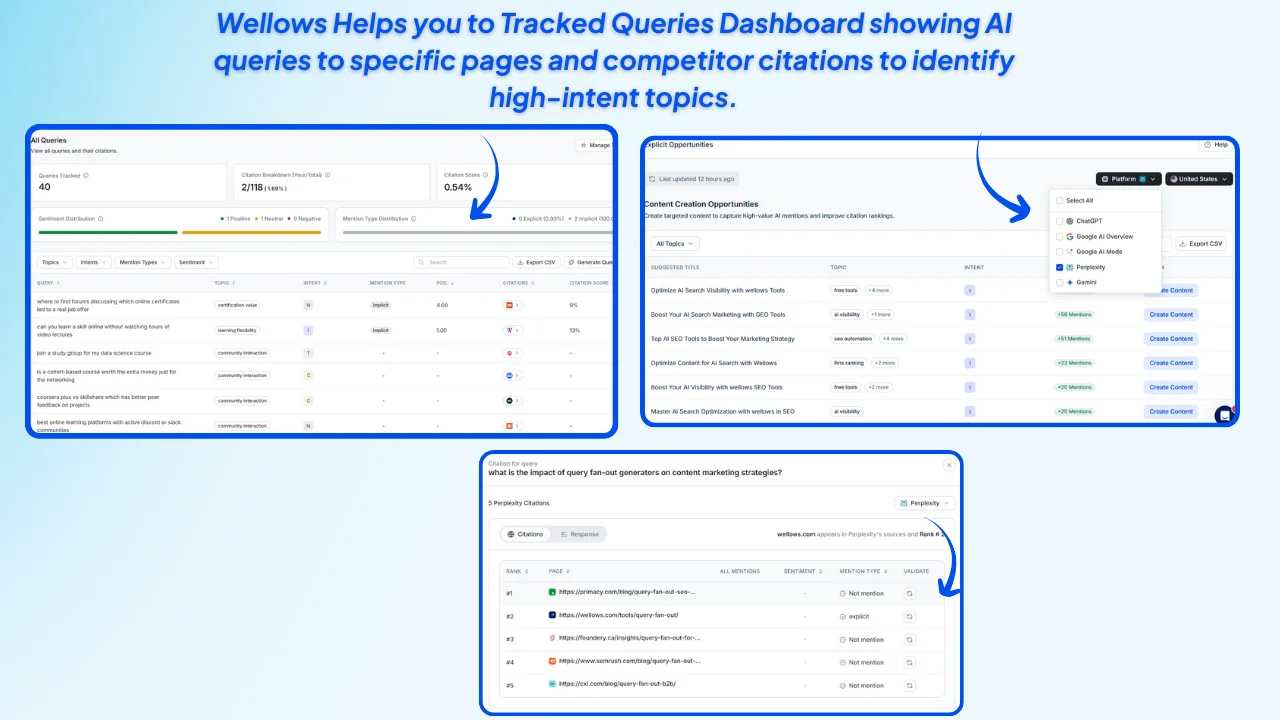
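The middle steps above (scoring, validation, favoring repeat usefulness, and filtering out weak grounding) can be approximated with a toy scoring model. This is an illustrative sketch under stated assumptions, not how any specific AI engine works: the `Candidate` fields, the weights, the 0.6 threshold, and the smoothed reuse prior are all hypothetical values chosen for readability. Query expansion and retrieval are assumed to have already produced the candidate list.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A retrieved source. All scores are hypothetical, in the range 0..1."""
    url: str
    clarity: float             # how cleanly a passage can be extracted and quoted
    entity_match: float        # agreement with known entities (brands, standards)
    consensus: float           # agreement with the other retrieved sources
    past_successes: int = 0    # similar queries it helped answer without contradiction
    past_contradictions: int = 0


def reuse_prior(c: Candidate) -> float:
    # Laplace-smoothed success rate: a source with no history starts at a neutral 0.5.
    return (c.past_successes + 1) / (c.past_successes + c.past_contradictions + 2)


# Hypothetical weights for clarity, entity match, consensus and reuse history.
WEIGHTS = (0.3, 0.25, 0.25, 0.2)


def trust_score(c: Candidate) -> float:
    w_clarity, w_entity, w_consensus, w_reuse = WEIGHTS
    return (w_clarity * c.clarity
            + w_entity * c.entity_match
            + w_consensus * c.consensus
            + w_reuse * reuse_prior(c))


def select_sources(candidates: list[Candidate], min_score: float = 0.6, k: int = 3) -> list[str]:
    scored = [(trust_score(c), c) for c in candidates]
    # De-prioritize weakly grounded candidates, then keep the top-k by score.
    kept = [(s, c) for s, c in scored if s >= min_score]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [c.url for _, c in kept[:k]]


candidates = [
    Candidate("https://example.com/guide", 0.9, 0.8, 0.85, past_successes=8),
    Candidate("https://example.org/vague-post", 0.4, 0.3, 0.5),
    Candidate("https://example.net/reference", 0.7, 0.9, 0.8,
              past_successes=3, past_contradictions=1),
]
print(select_sources(candidates))
# ['https://example.com/guide', 'https://example.net/reference']
```

In this example the vague post scores 0.42 and is filtered out, while the two well-grounded pages are kept. The smoothed prior mirrors the "repeatable usefulness" step: a clean track record nudges a source up, and a source with no history starts at a neutral midpoint instead of being rewarded or punished.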

How Does Wellows Help You Operationalize This Trust Logic?

AI trust signals are real but invisible without the right lens. Wellows turns abstract trust patterns into measurable inputs by showing where, how, and why AI systems reuse certain sources. Instead of guessing what AI prefers, teams can observe citation behavior, validate credibility signals, and act on gaps with confidence.

- Track repeat citations across AI answers to identify which pages AI consistently trusts for similar queries.

- Analyze citation context and sentiment to see whether AI positions your brand as authoritative, neutral, or secondary.

- Benchmark against competitors using Citation Score to understand who AI prefers and where you’re being replaced.

- Surface implicit mentions where your brand influences answers but isn’t credited, then convert them into explicit citations.

- Monitor trust volatility over time to catch shifts after updates, content changes, or competitor moves.

By unifying tracking, monitoring, and validation, Wellows makes AI trust observable, comparable, and improvable, so teams can earn citations by design, not by chance.
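At its simplest, tracking repeat citations comes down to counting how often each domain is cited across answers to a cluster of similar queries, then comparing those rates over time. The sketch below is a generic illustration, not Wellows' implementation or its Citation Score formula; the log format (a list of dicts with `query` and `cited_urls`) and the example domains are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_rates(answers: list[dict]) -> dict[str, float]:
    """Share of answers that cite each domain at least once.

    `answers` uses a hypothetical log format:
    [{"query": str, "cited_urls": [str, ...]}, ...]
    """
    counts = Counter()
    for answer in answers:
        # Count each domain once per answer, even if several of its pages are cited.
        domains = {urlparse(url).netloc for url in answer["cited_urls"]}
        counts.update(domains)
    total = len(answers)
    return {domain: n / total for domain, n in counts.items()} if total else {}


def rate_changes(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Per-domain change in citation rate between two snapshots (positive = gained)."""
    domains = set(before) | set(after)
    return {d: after.get(d, 0.0) - before.get(d, 0.0) for d in sorted(domains)}


week_1 = [
    {"query": "what is a trusted source in ai search",
     "cited_urls": ["https://a.example/guide", "https://b.example/faq"]},
    {"query": "how do ai engines pick sources",
     "cited_urls": ["https://a.example/pipeline"]},
    {"query": "ai citation trust signals",
     "cited_urls": ["https://b.example/faq", "https://a.example/guide"]},
    {"query": "trusted source meaning",
     "cited_urls": ["https://c.example/post"]},
]
rates = citation_rates(week_1)
print(sorted(rates.items()))
# [('a.example', 0.75), ('b.example', 0.5), ('c.example', 0.25)]

# A later (hypothetical) snapshot, compared against week 1.
print(rate_changes(rates, {"a.example": 0.5, "b.example": 0.75}))
# {'a.example': -0.25, 'b.example': 0.25, 'c.example': -0.25}
```

Counting a domain once per answer keeps a page cited twice in one response from inflating its rate, and comparing snapshots gives a rough view of the trust volatility described above.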

What Types of Content Do AI Models Trust Most?

AI models favor content that is clear, repeatable, and grounded in consensus because their goal is to reduce uncertainty when generating answers. Instead of rewarding novelty or opinion, AI systems prioritize formats that can be reliably extracted, verified, and reused across similar queries.

| Content Type AI Prefers | Why AI Trusts It | Impact on AI Citations |
|---|---|---|
| Explanatory guides | Provide step-by-step logic and stable definitions | High reuse across informational queries |
| Structured comparisons | Reduce ambiguity by contrasting options clearly | Strong grounding for decision-style answers |
| Entity-led content | Aligns with known people, brands, standards, or concepts | Easier validation against knowledge graphs |
| Data-backed analysis | Anchors claims in verifiable numbers or studies | Higher credibility signals for AI models |
| Evergreen reference pages | Remain accurate over time with minimal volatility | Preferred for repeated citation cycles |

Compared to opinion-driven or promotional pages, these formats perform better because AI systems can consistently extract the same meaning without reinterpreting intent each time.

To understand how different AI platforms reinforce these preferences over time, review AI citation patterns, which show that platforms such as ChatGPT, Gemini, Google AI Overviews, and others repeatedly favor specific content structures and well-validated sources.

What Are Trusted Information Sources in Technology?

In AI search, trusted technology sources are those that consistently explain complex systems clearly, align with widely accepted standards, and demonstrate long-term accuracy. AI models favor these sources because they reduce interpretive risk and help generate stable, defensible answers across fast-changing tech topics.

- Official documentation & standards bodies → Sources like W3C, NIST, and IEEE are frequently referenced because they define protocols, frameworks, and compliance benchmarks used across the industry.

- Established research publishers → Peer-reviewed outlets and institutional research (e.g., ACM, arXiv preprints with high reuse) signal methodological rigor and factual grounding.

- Authoritative product & platform blogs → First-party engineering blogs from companies like Google, Microsoft, and OpenAI provide direct explanations of how systems work, which AI models treat as primary references.

- Recognized expert-led publications → Consistently authored content with clear credentials and topical depth builds repeat citation patterns, especially in AI, cloud, and cybersecurity domains.

How Can Brands Improve Data Platform Citations in AI Answers?

For agencies and growth teams, AI citations create a new problem: they are happening, but they are invisible and unmanaged. This is where AI search visibility for agencies becomes critical. Wellows for Agencies fits naturally by helping teams move from guessing to measuring how AI platforms recognize, reuse, and trust brand content across Google AI Overviews and other LLM-based platforms. Instead of relying on rankings, agencies can now manage AI citations as a first-class visibility metric.

Together, measuring and managing citations lets brands systematically improve how AI platforms recognize and reuse their content, turning citations from an accident into a strategy.

How Does Content Structure Influence AI Citations?

AI systems don’t “read” content the way humans do. They extract, compare, and reuse information based on recognizable structural patterns. This is why pattern recognition in AI-generated answers plays such a critical role in citation eligibility: AI models consistently favor content that follows repeatable, predictable formats over creatively written but structurally inconsistent pages.

- Direct answers placed immediately under clear, descriptive headings

- Lists and tables with explicit labels that reduce interpretation effort

- One concept per section, avoiding mixed or overlapping explanations

- Consistent terminology across pages so entities and meanings stay stable

Because AI models learn through repetition and reinforcement, structure drives trust more reliably than keyword variation.

How Do You Get Cited in AI Health and Science Answers?

Health and science answers operate under higher trust thresholds. AI systems prioritize evidence density over visibility.

What increases citation likelihood

- Peer-reviewed or institutional references

- Clear author credentials

- Conservative language and disclaimers

- Alignment with clinical or scientific consensus

Why Are Health and Science AI Answers Harder to Influence?

Health and science answers carry higher risk, which makes AI systems far more selective about the sources they trust. Models apply conservative filters, prioritize consensus-driven information, and often rely on a narrow set of established publishers to reduce the chance of misinformation. As a result, visibility in these domains is earned slowly and reinforced through repeated credibility signals rather than isolated content wins.

This is where digital PR for generative engine visibility becomes essential. Authoritative citations, third-party mentions, and expert-backed references help AI systems validate trust beyond on-site content alone. In regulated industries, Wellows helps teams monitor whether AI systems recognize their content as credible or consistently default to established competitors instead, so trust gaps can be addressed deliberately rather than guessed at.

Who Benefits Most From Becoming a Trusted Source in AI Search?

Brands operating in high-consideration and research-driven markets benefit the most because AI answers increasingly replace early discovery steps. Over 60% of B2B buyers now rely on AI-generated summaries before engaging with a brand directly, making early trust signals decisive rather than optional.

Content-led industries such as SaaS, healthcare, finance, and professional services gain compounded visibility when AI systems repeatedly reuse their explanations. In these categories, absence from AI answers often signals a credibility gap rather than a ranking issue, and that gap directly affects perceived authority and consideration.

Which Teams Gain the Most From AI Trust Signals?

Marketing, content, and SEO teams benefit the most from AI trust signals because AI search prioritizes sources that consistently explain topics clearly and reduce uncertainty. As AI-generated answers increasingly replace early-stage clicks, teams that understand how AI systems select and reuse sources can adapt content structure, topic depth, and authority signals to stay visible where decisions now begin (McKinsey, 2025).

Analytics and strategy teams gain value by shifting measurement from rankings to trust and citation patterns. AI trust signals reveal whether a brand is becoming a repeat reference or being ignored, helping teams identify credibility gaps earlier and align content, PR, and SEO efforts with how AI search actually works today (Semrush, 2026).

What Are the Limitations of Becoming a Trusted Source in AI Search?

Becoming a trusted source in AI search is not a switch you flip; it is a cumulative outcome of consistency, clarity, and credibility over time. AI systems reward patterns they can validate repeatedly, not short-term optimizations. This means even strong content may be ignored if trust signals are inconsistent, fragmented, or contradicted across the web.

Key limitations teams must account for:

- No guaranteed citations: AI models decide independently; even authoritative content may be summarized without attribution.

- Delayed feedback loops: Trust builds gradually, making improvements harder to tie to immediate actions.

- Model opacity: AI engines do not expose ranking or trust formulas, limiting direct control.

- Domain-level bias: Some industries face higher trust thresholds due to risk or regulation.

- External signal dependency: Trust is influenced by third-party references, not just owned content.

Wellows helps teams understand why trust is earned or withheld by revealing citation patterns, source repetition, and competitive trust gaps across AI platforms. This visibility turns trust from a black box into a measurable signal, which is the foundation for meaningful, long-term improvement.


FAQs

What is a trusted source in AI search?

A trusted source is one that AI systems consistently reuse and ground answers in because its content is clear, fact-aligned, and repeatedly validated across similar queries.

Are trusted sources always large publishers?

Not always. AI may trust smaller or niche sources if their explanations are precise, consistent, and better aligned with user intent than those of large publishers.

Can AI trust in a source change over time?

Yes. AI trust evolves as new information, updated data, and stronger explanations emerge, which can replace previously favored sources.

What are common citation issues in AI answers?

Common issues include misattributed sources, outdated references, synthesized explanations without direct citations, and overgeneralized summaries.

Which sources are most reliable for AI systems?

Peer-reviewed research, government datasets, recognized standards bodies, and consistently cited expert publications are the most reliable inputs for AI systems.

Final Thoughts: Trust Is the New Ranking Signal in AI Search

Trust in AI search is no longer earned through rankings alone, but through repeatable clarity, credibility, and consistency across answers.

Brands that understand how AI systems select, reuse, and validate sources gain durable visibility even when clicks disappear.

In AI-driven search, trust is the signal that compounds, and those who measure and reinforce it early shape how they are remembered, cited, and relied on.