Startups move fast, but their visibility across Google, Gemini, and AI assistants often lags behind. Most early teams don’t have the time, staff, or deep SEO knowledge to keep up with constant algorithm and GenAI shifts, so organic growth ends up inconsistent or reactive.

That’s where an AI search visibility platform for startups becomes crucial. Instead of juggling disconnected tools, founders can track how their brand shows up across SERPs and AI answers in one place, reduce manual reporting, and turn visibility into a measurable growth lever.

This guide breaks down how AI visibility works for new businesses—the operational gaps that hurt discoverability, the AI-driven strategies that streamline execution, and the benefits of building a GenAI visibility stack for startups from day one. Each section focuses on helping lean teams ship faster while staying visible in both search engines and AI systems. Running a structured technical SEO audit early ensures crawl paths, indexation, and site performance don’t silently limit AI search visibility from the start.

As AI-powered search platforms grow—AI assistants already account for around 5.6% of U.S. desktop browser search traffic—startups are beginning to treat AI search engine visibility as seriously as rankings and clicks. Visibility inside ChatGPT, Gemini, Perplexity, and similar tools now shapes how users perceive brands, compare options, and make decisions.

Why Do Startups Need an AI Search Visibility Platform?

Most early-stage companies don’t begin with a full marketing or SEO team. Many founders rely on generalists who juggle product, sales, and growth, so AI visibility rarely gets prioritized and strong startup ideas stay underexposed. The result is simple: content ships, but nobody really knows how the brand shows up across Google and AI assistants.

Here’s where things get even more challenging for founders:

These findings point to a clear pattern: interest in AI and SEO is high, but execution and measurement are fragmented. Without a dedicated AI visibility solution, teams struggle to connect their content investments with visibility outcomes across both SERPs and LLMs.

What Is a GenAI Visibility Stack, and Why Is It Important for Startups?

A GenAI visibility stack is the set of models, data layers, and analytics tools that show how your startup appears across search engines and AI assistants. Instead of only looking at rankings, it reveals where your brand is mentioned, cited, and trusted inside systems like ChatGPT, Gemini, and Perplexity.

For AI startups, this matters because GenAI usage is exploding, but visibility is uneven. Many teams ship AI features and content, yet have no way to see whether those investments improve discovery, credibility, or demand. A structured stack turns GenAI from experiments into measurable visibility and growth.

- Models and orchestration: LLMs such as GPT-4, Claude, and Gemini, plus orchestration frameworks that route prompts, manage guardrails, and connect to external tools. Cloud platforms like Azure OpenAI Service make it easier for startups to deploy these capabilities at scale.

- Retrieval and memory: Retrieval-augmented generation (RAG) tools and vector databases store your content, product docs, and knowledge as embeddings, so models can answer with accurate, brand-safe context instead of generic web data. Pinecone, Weaviate, and Chroma are common choices for this layer.

- Analytics and AI visibility: This is where a GenAI visibility stack for startups becomes actionable. An AI search visibility platform like Wellows shows how your brand and entities appear across SERPs and AI assistants, tracks Citation Score, and makes AI visibility part of the same KPI set as traffic and conversions.

Without this top visibility layer, founders have little control over how GenAI systems represent their brand. With it, they can monitor AI-driven mentions, spot gaps, and make targeted changes to content and entities—improving brand control, compliance, and measurable visibility over time.
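To make the retrieval and memory layer described above a little more concrete, here is a minimal sketch of similarity-based retrieval. It is an illustration only: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory dictionary stands in for a vector database such as Pinecone, Weaviate, or Chroma, and all function names and sample documents are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (assumption: stands in for a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(docs[d])), reverse=True)
    return ranked[:k]


# Hypothetical brand content that a model could be grounded on.
docs = {
    "pricing": "pricing plans for startups and small teams",
    "security": "security and compliance overview for enterprise buyers",
    "onboarding": "getting started guide for new startups",
}
top = retrieve("pricing for startups", docs, k=1)
```

A production stack would swap the toy vectors for model-generated embeddings and a managed index, but the ranking step follows the same idea: score stored content against the question and pass the best matches to the model as context.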

Which Tools Are Recommended for Building a GenAI Visibility Stack?

A practical GenAI visibility stack maps each of these layers to specific tools so teams don’t reinvent the wheel for every use case.

- Models and orchestration: Hosted LLMs like GPT-4, Gemini, and Claude, wired through orchestration frameworks such as LangChain-style libraries that make it easier to chain prompts, tools, and APIs.

- Retrieval and memory: RAG frameworks and vector databases do most of the heavy lifting. Tools like LlamaIndex handle document ingestion and retrieval, while Pinecone, Weaviate, and Chroma store embeddings and power semantic search.

- Observability and monitoring: GenAI observability platforms trace prompts, log outputs, and spot anomalies so startups can detect quality or safety issues before they affect users.

- AI visibility and analytics: A dedicated AI visibility solution translates all this activity into marketing and growth insights. This is where Wellows fits: instead of tracking only usage and latency, it focuses on how often your brand appears in AI answers, where it is cited, and how that overlaps with search demand and SEO performance.

How Does an AI Search Visibility Platform Fit Inside the GenAI Stack?

An AI search visibility platform sits in the top layer of the GenAI stack, above models, retrieval, and application logic. It doesn’t replace those components; it reads their outputs and the wider search landscape to show how your brand is actually represented across SERPs and AI assistants, including cases where an AI system misunderstands what your startup does and repeats that misunderstanding at scale.

It does this by:

- Connecting search data, AI answer data, and entity data into one view.

- Ingesting how your brand appears across Google, ChatGPT, Gemini, and Perplexity.

- Turning those signals into metrics like Citation Score, AI search visibility, and entity coverage for lean teams.

Because it operates as an analytics and decision layer, an AI search visibility platform for startups is stack-agnostic. Whether you use Azure OpenAI, open-source models, Pinecone, or Weaviate underneath, the role of Wellows is to answer a simple question: “Where, and how, are we visible in search and AI systems?”

As a result, the GenAI visibility stack becomes more than infrastructure. With an AI visibility platform on top, it behaves like an autonomous marketing platform for discoverability—highlighting gaps, surfacing opportunities, and guiding what to improve next across SEO, content, and product pages.

How Startups Can Use AI for Growth and Visibility

Artificial Intelligence (AI) is reshaping how startups think about SEO by extending it beyond rankings into AI search visibility. Instead of only tracking positions in Google, lean teams can now see how often their brand appears inside AI answers on ChatGPT, Gemini, and Perplexity, and how that visibility changes over time.

1. AI-Powered SEO and Visibility Tools

AI tools automate core SEO workflows, including keyword research, content optimization with strong on-page SEO, and detection of technical SEO issues, while also revealing how content performs across AI-driven experiences.

They analyze search intent, detect issues affecting site performance, and highlight which pages are actually being cited or surfaced by AI systems. This allows startups to prioritize fixes and opportunities with the highest visibility impact.

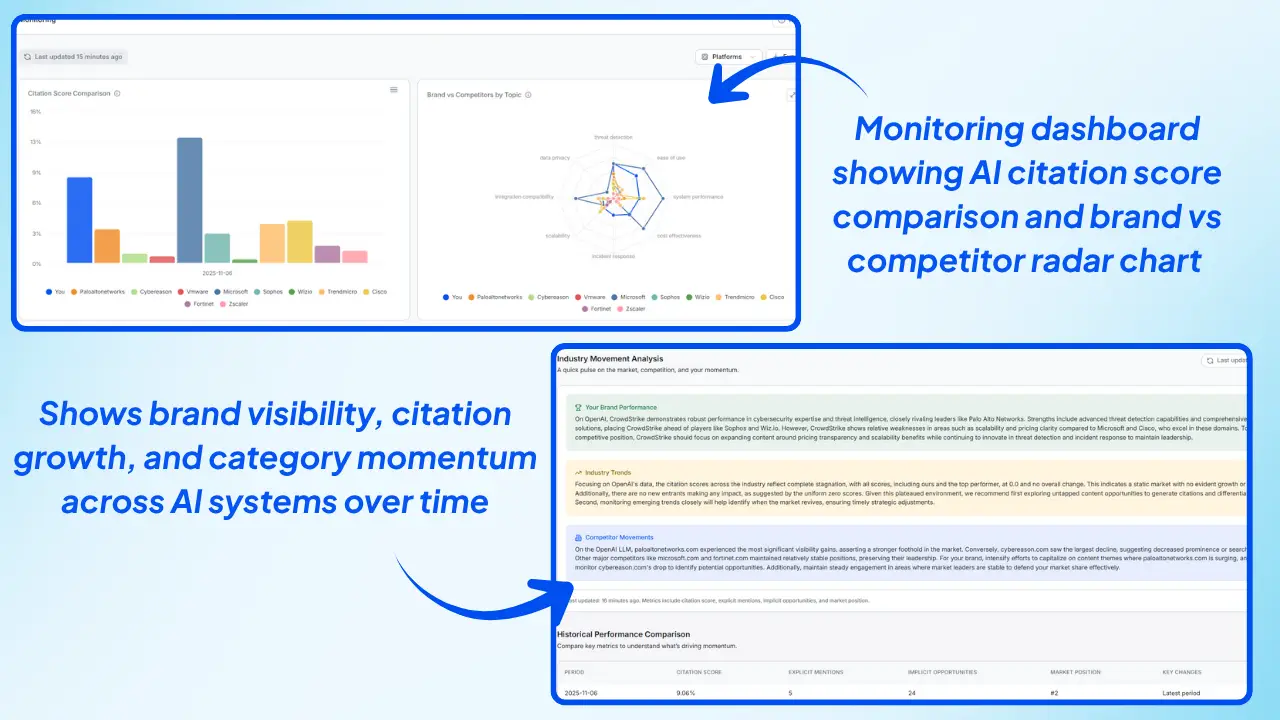

Wellows, an AI search visibility platform for startups, connects these signals in one place. It unifies search, AI answer, and entity data, and turns scattered SEO inputs into structured, decision-ready insights for lean teams.

2. Generative Engine Optimization (GEO)

As AI-powered search engines like Gemini, ChatGPT, and Perplexity become more common, startups need Generative Engine Optimization (GEO) alongside classic SEO. GEO focuses on optimizing for AI-generated answers by:

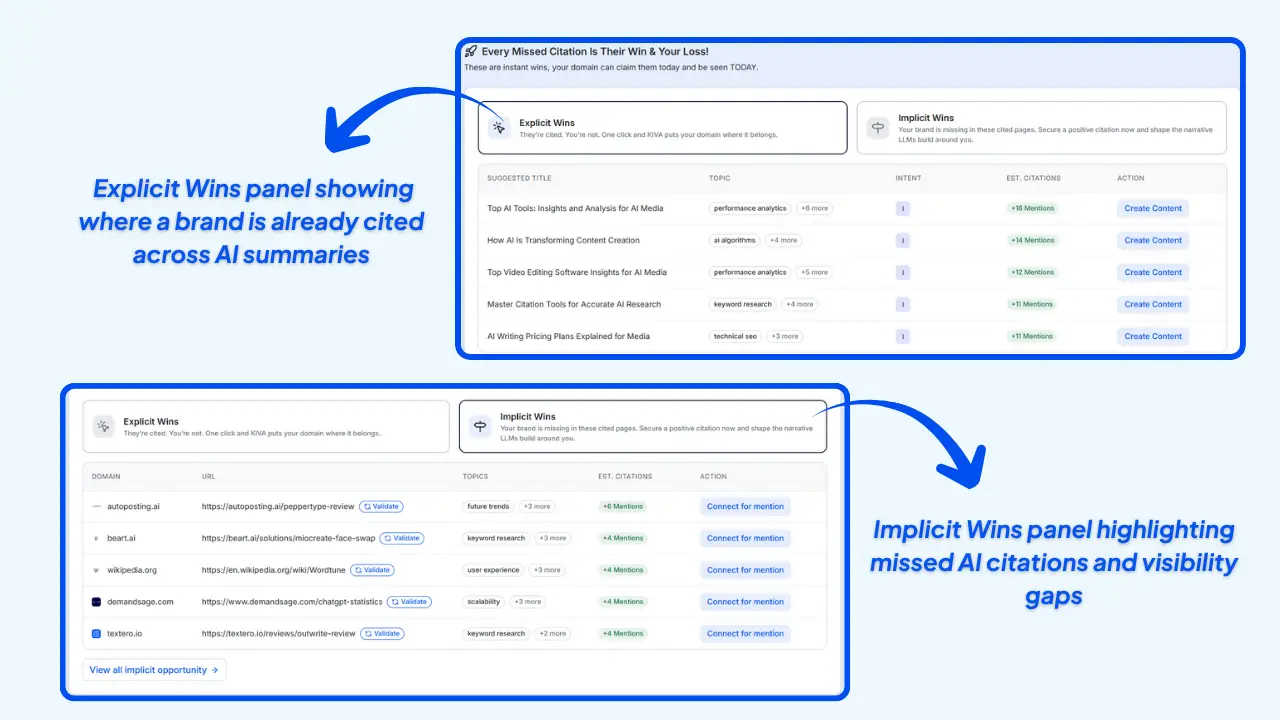

- Tracking how your brand and entities show up in AI-generated summaries.

- Structuring content so AI models can easily interpret and reuse it.

- Improving citation potential in AI-generated responses.

Wellows helps brands monitor and measure how they appear across AI-powered search and answer engines such as ChatGPT, Gemini, and Perplexity—giving marketers a clearer picture of where they win or lose attention in AI-first environments.

3. AI Search Visibility Tracking

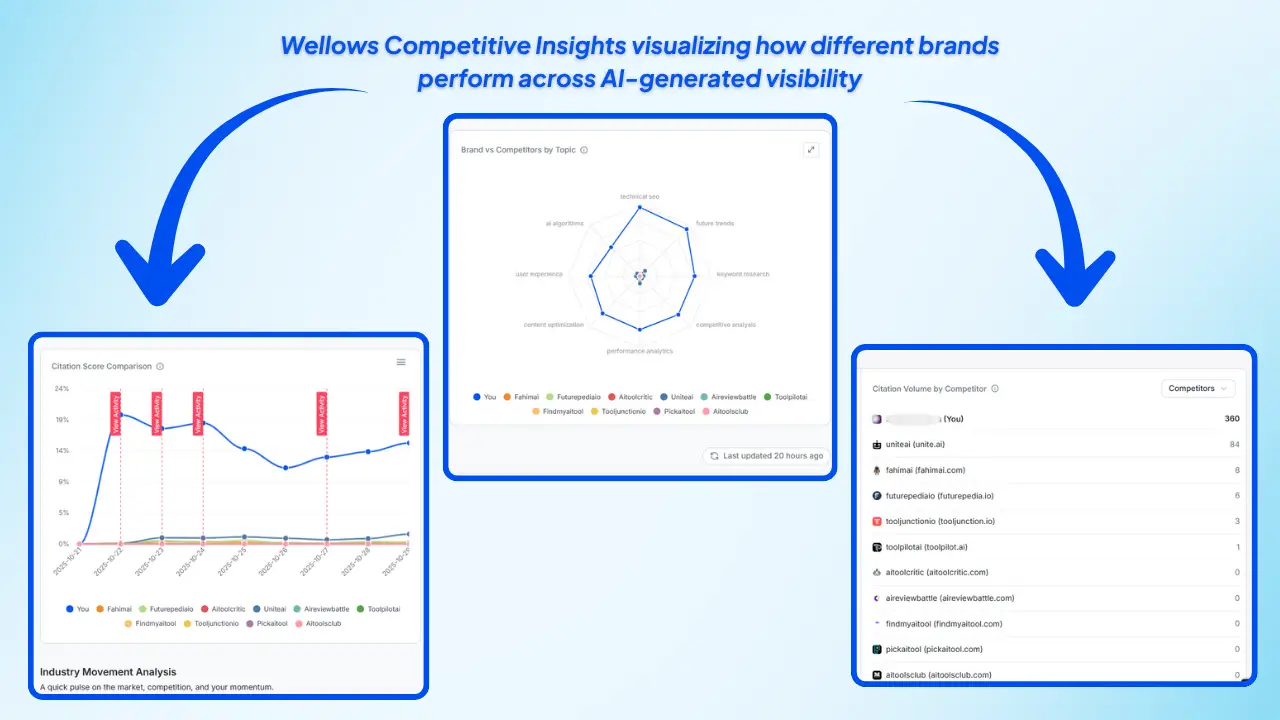

AI visibility tracking goes beyond rankings: it covers brand mentions, sentiment, and AI visibility across both traditional and AI search platforms. Startups can finally see which topics, pages, and entities drive the most exposure, and benchmark that performance against competitors and category leaders.

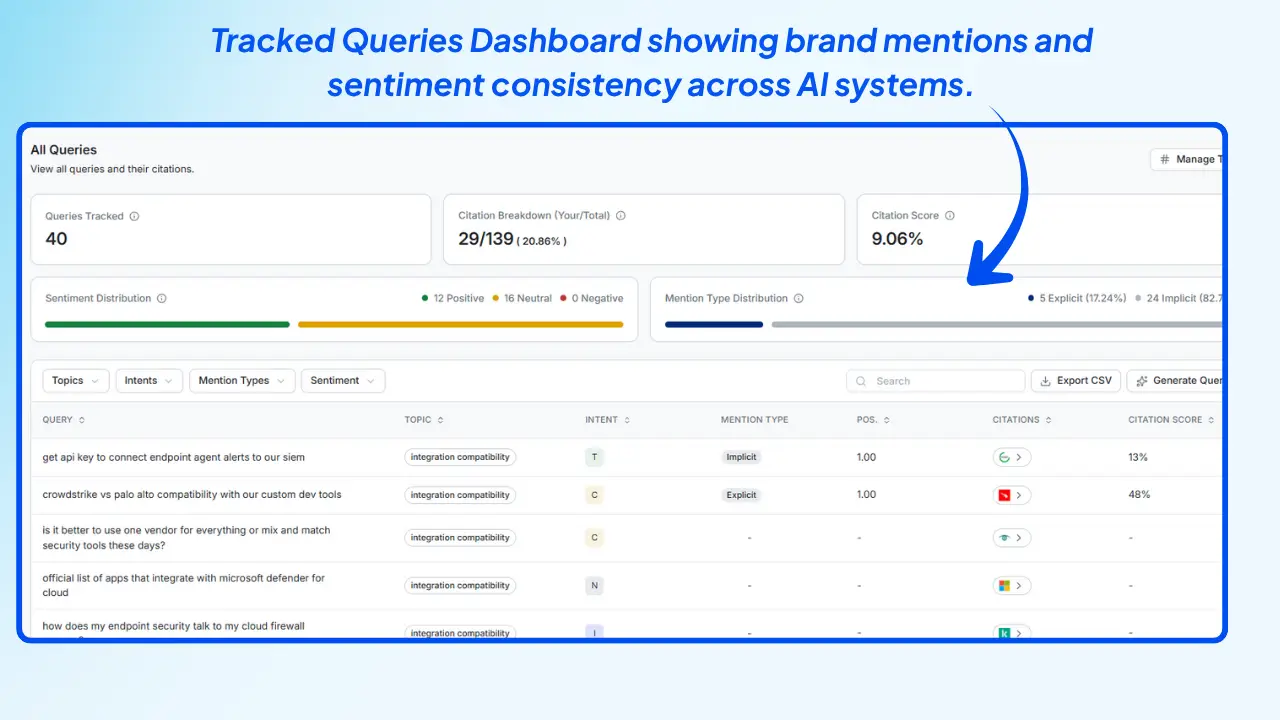

Wellows provides a Citation Score that shows how often your brand and key entities are cited in AI-generated answers across ChatGPT, Gemini, and Perplexity. It analyzes mentions and visibility trends, helping startups identify where they’re underrepresented and where authority is already compounding.

Together, these capabilities reflect the rise of AI visibility platforms designed to help lean teams understand how their brand presence evolves across both search engines and generative AI systems.

4. Answer Engine Optimization (AEO)

With AI-driven search shifting toward direct answers, Answer Engine Optimization (AEO) is becoming a core part of startup SEO. AEO focuses on crafting content that answers questions clearly and conversationally, so brands are more likely to be cited in AI-generated snippets.

- Structured content: Mirrors natural language and aligns with user intent.

- Concise messaging: Delivers quick, context-rich answers that AI models can lift safely.

By combining GEO and AEO with an AI search visibility solution for startups, early teams move from guessing to testing. They can see which topics win citations, which pages need restructuring, and why leads may have dropped, which shows how AI-driven visibility influences both pipeline performance and brand preference.

How Startups Can Use AI to Power Smarter Content Marketing

AI helps startups turn scattered ideas and search data into a focused content engine. Most marketers already use AI for content creation, and teams using AI publish around 42% more content each month than those who don’t. (Ahrefs, 2025)

The challenge is not just producing more content—it’s making sure that content is discoverable in both search engines and AI assistants. This is where an AI search visibility platform for startups and a structured GenAI visibility stack turn content into measurable visibility, not just output.

How Can an Autonomous Marketing Platform Support Lean Teams?

For lean teams, an autonomous marketing platform handles the heavy lifting of research, planning, and performance feedback. Instead of juggling separate tools for SEO, content, and analytics, founders get one system that turns intent data into topics and topics into measurable visibility.

In practice, such a platform can:

- Cluster keywords and group intent into coherent topic themes.

- Surface topics that match how users actually search across channels.

- Turn search data, forum questions, and LLM queries into structured topic maps that are easier to brief, assign, and ship.
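As a rough illustration of the clustering step in the list above, the sketch below groups queries by word overlap (Jaccard similarity). It is a deliberately simple stand-in, not how any particular platform does it: real systems typically use embeddings and intent signals, and the threshold, function names, and sample queries here are assumptions.

```python
def tokens(query: str) -> set[str]:
    """Lowercased word set for a query."""
    return set(query.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two queries have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_queries(queries: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedy clustering: attach each query to the first cluster whose
    seed (first member) is similar enough, otherwise start a new cluster."""
    clusters: list[list[str]] = []
    for q in queries:
        for cluster in clusters:
            if jaccard(tokens(q), tokens(cluster[0])) >= threshold:
                cluster.append(q)
                break
        else:
            clusters.append([q])
    return clusters


# Hypothetical queries pulled from search data, forums, or LLM prompts.
queries = [
    "ai search visibility tools",
    "best ai search visibility platform",
    "vector database pricing",
    "vector database comparison",
]
topic_map = cluster_queries(queries)
```

With these inputs, the first two queries land in one theme and the last two in another, which is the kind of grouping a brief or content assignment can be built around.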

An autonomous marketing platform like Wellows then acts as the decision layer. It connects search data, AI answers, and entity-level signals to show which topics generate visibility, which URLs win citations, and where your brand is invisible or misrepresented.

Legacy workflows such as KIVA under Wellows are best reserved for cases that need explicit citations and structured briefs.

Wellows itself focuses on AI search visibility, Citation Score, and entity coverage, so ideas come from user intent, content ships faster, and visibility data feeds back into the roadmap.

That feedback loop helps by:

- Nudging teams toward topics that compound visibility.

- Flagging content that underperforms across SERPs and AI assistants.

- Keeping lean startups aligned with what their market is actually asking.

How Should Startups Align Content With GEO and LLM Optimization?

To make AI-powered content truly work, startups need to align it with both Generative Engine Optimization (GEO) and LLM-focused practices. GEO treats AI assistants like new discovery channels, not just side effects of SEO, and asks: “What would make an AI system confident enough to cite us?”

First, build topical structure:

- Cluster related queries into clear topic themes.

- Build pillar pages with question-led H2/H3 headings.

- Support them with deep, well-cited subpages to create semantic depth.

Next, shape content so it’s easy for LLMs to parse. Use short paragraphs, question-focused headings, and explicit definitions of key entities such as your product, category, and ICP. Resources like llms.txt and llms-full.txt files help make that context machine-readable across your site.

Schema markup and FAQs also play a critical role. Adding structured data and clear Q&A sections supports both Answer Engine Optimization (AEO) and GEO, making your pages more likely to be surfaced as concise, copyable answers inside ChatGPT, Gemini, and Perplexity.
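For teams that generate pages programmatically, a small helper can produce FAQ structured data. The sketch below builds schema.org `FAQPage` JSON-LD from question and answer pairs; the function name and sample content are hypothetical, and the output would normally be placed in a `<script type="application/ld+json">` tag on the page.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ content for illustration.
markup = faq_jsonld([
    ("What is a GenAI visibility stack?",
     "The set of models, data layers, and analytics tools that show how a "
     "startup appears across search engines and AI assistants."),
])
```

Keeping the answers short and self-contained matches the AEO guidance above: each answer should make sense when lifted out of the page.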

Finally, connect these practices back to AI visibility. Wellows tracks how GEO-optimized content affects Citation Score, LLM mentions, and overlap between SERP and AI visibility. This turns GEO and LLM optimization into an ongoing feedback loop rather than a one-off checklist.

What Are the Challenges in Integrating a GenAI Visibility Stack, and How Can They Be Addressed?

Bringing a GenAI visibility stack into an existing environment looks simple on paper but gets messy fast. Most issues fall into a few predictable buckets: data, legacy systems, risk, performance, and people.

1. Data quality and availability

If your data is messy, stale, or scattered, GenAI models and visibility metrics will simply amplify that confusion. Dashboards will look impressive but won’t be trustworthy.

How to fix it: Put basic data governance in place first: clear owners, approved sources of truth, and regular checks for accuracy and freshness. Your GenAI visibility stack should sit on top of these curated sources, not raw, unvetted data.

2. Integration with legacy systems

Older CRMs, analytics tools, or content systems rarely plug cleanly into modern GenAI workflows. That’s how you end up with brittle scripts and one-off integrations that break whenever something changes.

How to fix it: Use a phased approach. Start with one or two high-impact use cases and connect them via APIs or middleware. Prove the value, then standardize patterns for how GenAI services talk to your legacy stack.

3. Security, privacy, and compliance

A visibility stack touches search data, content, logs, and sometimes user information. Without guardrails, you risk exposing sensitive data to models or third-party tools that shouldn’t see it.

How to fix it: Treat security as a design constraint, not an afterthought. Enforce least-privilege access, encrypt data in transit and at rest, and log how data flows through your GenAI components. Map these controls to your compliance requirements so legal and security teams stay comfortable.

4. Scalability and performance

Once more teams rely on the stack, latency and cost can spike. Dashboards slow down, evaluations back up, and experiments get blocked by infrastructure limits.

How to fix it: Design for modular scaling early. Separate storage, retrieval, and visibility analytics so each can scale independently. Run load tests and watch usage patterns so you can right-size compute before bottlenecks hit production.

5. Trust and explainability

If stakeholders don’t understand how GenAI-driven insights are produced, they won’t use them to make decisions. “The model says so” is not enough when budgets and roadmaps are on the line.

How to fix it: Pair your stack with simple explainability: show which sources fed an answer, what prompts or rules were used, and how confidence is scored. Document these patterns and train teams so they trust what the visibility layer is telling them.

6. Talent and change management

Even a well-architected GenAI visibility stack fails if nobody knows how to use it or if teams cling to old spreadsheets and manual reports.

How to fix it: Invest in training for existing teams instead of assuming you’ll hire your way out. Start with a small group of champions, give them clear wins using the new stack, and let them evangelize internally. Make the new dashboards and workflows the default, not an optional side project.

Done right, integrating a GenAI visibility stack becomes less about fighting tools and more about building a stable foundation where AI visibility, brand safety, and growth move in the same direction.

How Should Startups Handle Compliance, Security, and Data Quality in Their Stack?

- Keep data boundaries clear: Store customer and product data in controlled systems of record, and let GenAI apps access it via governed APIs and retrieval layers instead of raw database access.

- Treat visibility as read-only: Use platforms like Wellows as a lightweight, read-only analytics layer that ingests just enough search, content, and AI-answer data to calculate Citation Score, entity coverage, and AI search visibility.

- Define canonical sources: Decide which docs and systems count as your source of truth for products, messaging, and brand language, and make sure those are the ones exposed to LLMs and visibility tools.

How Can Startups Monitor and Evaluate the Outputs of Their GenAI Models?

Startups can monitor and evaluate GenAI outputs by combining model-level observability with an AI search visibility layer. In practice, that means tracking how well models answer, where they fail, and how often those answers actually surface your brand across search and AI assistants.

Most teams start with evaluations and tracing. Modern GenAIOps practices use automated evaluations, LLM-as-a-judge scoring, and prompt-flow analytics to assess response quality, safety, and relevance over time—instead of relying only on ad-hoc human review.

From there, observability tools capture metrics like hallucination rate, faithfulness to source content, latency, and user feedback. Frameworks such as OpenTelemetry for generative AI and GenAI-aware monitoring in Amazon CloudWatch make it easier to standardize these signals across apps and agents.

Evaluation doesn’t stop at launch. Industry guidance now recommends continuous GenAI evaluation—testing prompts, comparing versions, and running rubric-based checks on live traffic—to keep quality aligned with business goals as models, data, and user behavior change.
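A rubric-based check of the kind mentioned above can start very small. The sketch below is a crude lexical proxy, not an LLM-as-a-judge setup: it tests whether an answer mentions the brand and what share of its words also appear in the source content, as a rough stand-in for faithfulness. The names, the 0.6 threshold, and the sample strings are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    mentions_brand: bool
    grounded_ratio: float  # share of answer words also found in the source
    passed: bool


def _words(text: str) -> list[str]:
    """Lowercased words with basic trailing punctuation stripped."""
    cleaned = (t.strip(".,!?").lower() for t in text.split())
    return [t for t in cleaned if t]


def evaluate_answer(answer: str, source: str, brand: str,
                    min_grounded: float = 0.6) -> EvalResult:
    """Apply two simple rubric rules to a generated answer."""
    answer_words = _words(answer)
    source_words = set(_words(source))
    grounded = sum(w in source_words for w in answer_words)
    ratio = grounded / len(answer_words) if answer_words else 0.0
    mentions = brand.lower() in answer.lower()
    return EvalResult(mentions, ratio, mentions and ratio >= min_grounded)


# Hypothetical source content and model answers.
source = "Acme tracks brand citations in AI answers."
good = evaluate_answer("Acme tracks citations in AI answers.", source, "Acme")
bad = evaluate_answer("Acme sells shoes.", source, "Acme")
```

Running a check like this on a sample of live answers after every prompt or model change gives a cheap regression signal; teams that need more nuance can replace the lexical rule with a model-based judge while keeping the same pass/fail interface.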

But even with good model metrics, many startups still don’t know how generative answers represent their brand in the wild. That’s where a GenAI visibility stack for startups and an AI visibility platform like Wellows come in—connecting model behavior with real-world AI search visibility, Citation Score, and entity coverage across ChatGPT, Gemini, and Perplexity.

By pairing GenAI observability with AI visibility analytics, founders can answer two critical questions at once: “Are our models performing well?” and “Are they helping us show up, accurately, where our customers search and ask questions?”

Which Metrics and Dashboards Should Founders Track for AI Search Visibility?

For AI search visibility, the most useful dashboards sit above raw model logs. They show how often your brand is surfaced, how it is described, and how that overlaps with the topics your team is investing in across SEO and content.

A core metric is Citation Score—how frequently your brand and key entities are cited in AI-generated answers across ChatGPT, Gemini, and Perplexity.

If your Citation Score is stuck, an automated SEO outreach strategy using Wellows is one of the most direct ways to earn fresh mentions and links that AI systems can pick up as trusted references.

Tracking this over time reveals whether new content, docs, and campaigns are actually increasing your presence in AI assistants.
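The article does not spell out how Wellows computes Citation Score, so the sketch below should be read as one plausible simplification rather than the platform's formula: the share of sampled AI answers, per assistant, that mention the brand. The function name, the record format, and the sample answers are hypothetical.

```python
from collections import defaultdict


def citation_rate(answers: list[dict], brand: str) -> dict[str, float]:
    """Share of sampled answers per assistant that mention the brand.

    Each record is assumed to look like {"assistant": ..., "text": ...}.
    """
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for row in answers:
        totals[row["assistant"]] += 1
        if brand.lower() in row["text"].lower():
            hits[row["assistant"]] += 1
    return {name: hits[name] / totals[name] for name in totals}


# Hypothetical sample of AI answers collected for a fixed set of prompts.
answers = [
    {"assistant": "ChatGPT", "text": "Acme and Beta are popular options."},
    {"assistant": "ChatGPT", "text": "Beta is a common choice."},
    {"assistant": "Gemini", "text": "Many teams use Acme."},
]
rates = citation_rate(answers, "Acme")
```

Re-running the same prompt set on a schedule and plotting the rates is what turns a one-off check into a trend line.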

Next is LLM mentions by surface and query theme. A useful dashboard shows which questions, intents, and categories trigger your brand, and where competitors dominate instead. This helps prioritize new content, product pages, and knowledge assets that can close specific AI visibility gaps.
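A theme-level view can be sketched in the same spirit. Assuming each sampled answer is already tagged with a query theme, the hypothetical helpers below count brand mentions per theme and flag themes where a competitor is mentioned more often than you are. Brand names, themes, and simple substring matching are all simplifying assumptions.

```python
from collections import Counter


def mentions_by_theme(rows: list[tuple[str, str]],
                      brands: list[str]) -> dict[str, Counter]:
    """Count brand mentions per theme from (theme, answer_text) rows."""
    result: dict[str, Counter] = {}
    for theme, text in rows:
        counts = result.setdefault(theme, Counter())
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    return result


def visibility_gaps(result: dict[str, Counter], our_brand: str) -> list[str]:
    """Themes where some other brand is mentioned more often than ours."""
    gaps = []
    for theme, counts in result.items():
        ours = counts[our_brand]
        if any(n > ours for b, n in counts.items() if b != our_brand):
            gaps.append(theme)
    return sorted(gaps)


# Hypothetical tagged answers.
rows = [
    ("pricing", "Acme is affordable."),
    ("pricing", "Acme and Beta both offer free tiers."),
    ("security", "Beta is SOC 2 compliant."),
    ("security", "Beta leads here."),
]
by_theme = mentions_by_theme(rows, ["Acme", "Beta"])
gaps = visibility_gaps(by_theme, "Acme")
```

In this sample the gap list points at the security theme, which is where new content or documentation would be prioritized.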

Founders should also watch entity coverage and accuracy: which products, features, and use cases are consistently referenced, and whether those descriptions are on-brand. A GenAI visibility stack turns this into a structured view instead of one-off screenshots from ChatGPT.

Another high-value lens is SERP + LLM overlap—where you rank in Google, where you appear in AI answers, and where the two diverge. Platforms like Wellows help teams connect classic SEO metrics with AI visibility, so they can see which pages drive both traffic and citations, not just clicks.
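At its simplest, the overlap view is a set comparison. The sketch below assumes you already have two sets of URLs, one for pages that rank in search and one for pages cited in AI answers; how those sets are collected is outside its scope, and the names and paths are hypothetical.

```python
def serp_llm_overlap(serp_urls: set[str],
                     cited_urls: set[str]) -> dict[str, list[str]]:
    """Split pages into those visible in both channels or only one."""
    return {
        "both": sorted(serp_urls & cited_urls),
        "serp_only": sorted(serp_urls - cited_urls),
        "llm_only": sorted(cited_urls - serp_urls),
    }


# Hypothetical URL sets.
overlap = serp_llm_overlap(
    serp_urls={"/pricing", "/blog/geo-guide", "/docs/api"},
    cited_urls={"/blog/geo-guide", "/docs/quickstart"},
)
```

Pages in `serp_only` rank but are not being cited, which often makes them the first candidates for restructuring; pages in `llm_only` are cited without ranking and may deserve more classic SEO attention.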

Finally, founders should monitor how quickly new assets gain AI visibility. Time-to-visibility for new articles, docs, or landing pages becomes a leading indicator of whether your GenAI visibility stack for startups is working end-to-end—from content planning to search to AI assistants.
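Time-to-visibility can be computed from two dates per page. The sketch below assumes you record a publish date and the date a page was first seen cited in an AI answer; both inputs, the function name, and the sample data are hypothetical.

```python
from datetime import date
from statistics import median


def time_to_visibility(published: dict[str, date],
                       first_cited: dict[str, date]) -> dict[str, int]:
    """Days from publication to first observed AI citation, per URL.

    Pages that have not been cited yet are left out of the result.
    """
    return {
        url: (first_cited[url] - published[url]).days
        for url in published
        if url in first_cited
    }


# Hypothetical publish and first-citation dates.
published = {
    "/a": date(2025, 1, 1),
    "/b": date(2025, 1, 10),
    "/c": date(2025, 2, 1),  # not cited yet
}
first_cited = {"/a": date(2025, 1, 15), "/b": date(2025, 2, 9)}

days = time_to_visibility(published, first_cited)
typical = median(days.values())
```

Tracking the median rather than the mean keeps one slow page from hiding an otherwise improving trend, and the pages missing from the result are a useful list in their own right.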

What Are the Best Practices for Deploying a Scalable GenAI Visibility Stack?

The best GenAI visibility stacks start small and get sharper over time. Rather than wiring every tool at once, high-performing teams begin with a few clear use cases and then standardize the stack around what actually works.

- Start with focused use cases: Begin with 1–2 concrete workflows (e.g., SEO visibility, product docs) and expand only after you see clear signal.

- Use one shared stack across teams: Standardize on a common layer for models and retrieval, with a single analytics and AI search visibility layer on top.

- Avoid tool sprawl: Prevent every team from running its own disconnected GenAI experiment by centralizing models, RAG, and visibility tools.

- Assign clear ownership: Decide who owns data quality, who owns model behavior, and who owns visibility metrics so dashboards don’t turn into noise.

- Tie to real growth KPIs: Connect your GenAI visibility stack to metrics like qualified traffic, assisted pipeline, and AI visibility on core topics—not just usage or latency.

- Treat content and visibility as one system: Use the same stack to plan topics, ship content, and see how often that content appears in SERPs and AI answers.

- Leverage SERP + LLM alignment: Use frameworks like Wellows’ SERP + LLM views so ranking signals and Citation Score reinforce each other instead of working in silos.

How Should Startups Phase Adoption from MVP to Series B?

From MVP to Series B, adoption can be phased like this:

- MVP stage: Start with a lightweight GenAI visibility stack for startups that combines one LLM, a basic RAG layer, and a simple AI visibility dashboard to see where your brand appears today.

- Approaching product–market fit: Add a few repeatable workflows—e.g., one pipeline for SEO content, one for product docs, and one for support content—each feeding into the same visibility and AI search visibility metrics.

- Series A–B scale-up: Aim for consistency across teams so marketing, product, and RevOps share the same stack, entities, and visibility KPIs.

- Add an autonomous marketing layer: At growth stage, introduce an autonomous marketing platform layer to turn recommendations into regular action instead of one-off projects.

- Keep the core principle stable: Fewer tools, clearer roles, and one place to see how GenAI, content, and visibility are performing together at every stage.

Final Takeaway for Startups Building a GenAI Visibility Stack

- Visibility for fast-moving startups now goes beyond blue links on Google to include how often AI assistants mention your brand, which pages they trust, and how your story appears in open-ended queries.

- A thoughtful GenAI visibility stack for startups centralizes this view by layering models, retrieval, and content workflows under an AI visibility solution that turns signals into AI search visibility, Citation Score, and entity coverage.

- Teams can use this stack to focus on proven topics, close visibility gaps, and ship content that both search engines and LLMs can interpret reliably.

- Wellows acts as an AI search visibility platform on top of your existing tools, showing whether your SEO and content investments are actually converting into visibility across SERPs and AI systems.