Want to create content for SERP and LLM trends that ranks on Google and appears in answers from ChatGPT, Claude, or Gemini? As search evolves across both engines and AI models, the rules of visibility are shifting fast.

Many still view this only through search engine optimization (SEO), but visibility now means showing up in both Google’s search engine results pages (SERP) and large language models (LLMs).

A frequent question is: “What is SERP in digital marketing?” It’s the page of results Google shows—snippets, FAQs, videos, and now AI summaries. Another is: “What are the characteristics of LLM?” These models rely on semantic understanding and contextual reasoning, not just ranking signals.

This raises a third question: “How is LLM used in technology?” Beyond chat, LLMs are shaping search, discovery, and decision-making by selecting which brands appear in AI-powered answers.

To stay visible, brands must align with both SERP optimization and LLM visibility principles. Emerging autonomous marketing platforms like Wellows show how structure, trust, and formatting decide what content gets surfaced.

For lean teams, the AI search visibility for Startups solution offers a direct path to scale with SERP + LLM optimization built-in.

Semrush research shows that 13% of U.S. searches display AI summaries, and 88% of those summaries appear on informational queries. Structured content is no longer optional; it is essential.

TL;DR

- Content must work for both SERPs and LLM answers.

- Use question-based keywords, FAQs, schema, and answer-first writing.

- AI Overviews are replacing snippets — structure content for easy extraction.

- Map conversational queries to user intent and choose the right format.

- Use SERP-winning styles: how-tos, lists, comparisons, UGC.

- Keep sections short, clear, and LLM-readable.

- Tailor workflows for agencies, consultants, startups, and freelancers.

Why SERP and LLM Trends Now Matter for Modern Content Teams

Search is no longer driven only by blue links and ranking factors. With Large Language Models (LLMs) now integrated directly into Search Engine Results Pages (SERPs), the way users discover information has fundamentally changed.

Content teams must adapt to a world where visibility depends on performance across both Google’s search interface and AI-generated answer engines—something modern platforms like Wellows are built to support.

To stay competitive, teams must rethink how they create content for SERP and LLM, ensuring every page is structured to perform across Google, AI Overviews, and LLM responses.

AI-Generated Summaries Are Taking Prime Real Estate

AI Overviews and other LLM-generated summaries frequently appear at the top of SERPs, answering user questions instantly before they scroll.

This shift reduces click-through rates for traditional organic listings, pushing websites further down the page and reshaping how visibility is earned. Industry analyses show that AI summaries now occupy a significant portion of the SERP layout and materially influence ad CTRs.

The Shift From SEO to LLM Optimization (LLMO)

Content teams are moving beyond traditional SEO—focusing not just on ranking in Google but also on being recognized, trusted, and cited by LLMs.

LLM Optimization (LLMO) involves creating structured, authoritative, semantically rich content that aligns with how AI models consume and reorganize information. Teams adopting LLMO frameworks have seen up to a 40% increase in presence across AI-generated responses (IDC, 2025).

The Rise of Generative Engine Optimization (GEO)

Generative Engine Optimization (GEO) is emerging as the next evolution of content strategy, emphasizing topic clusters, related entities, and adjacent queries instead of single keywords.

This semantic-first approach increases the likelihood that content will surface inside AI summaries and answer boxes. Understanding how LLMs evaluate and extract AI content is becoming essential for modern visibility workflows.

User-Generated Content Is Becoming a Visibility Driver

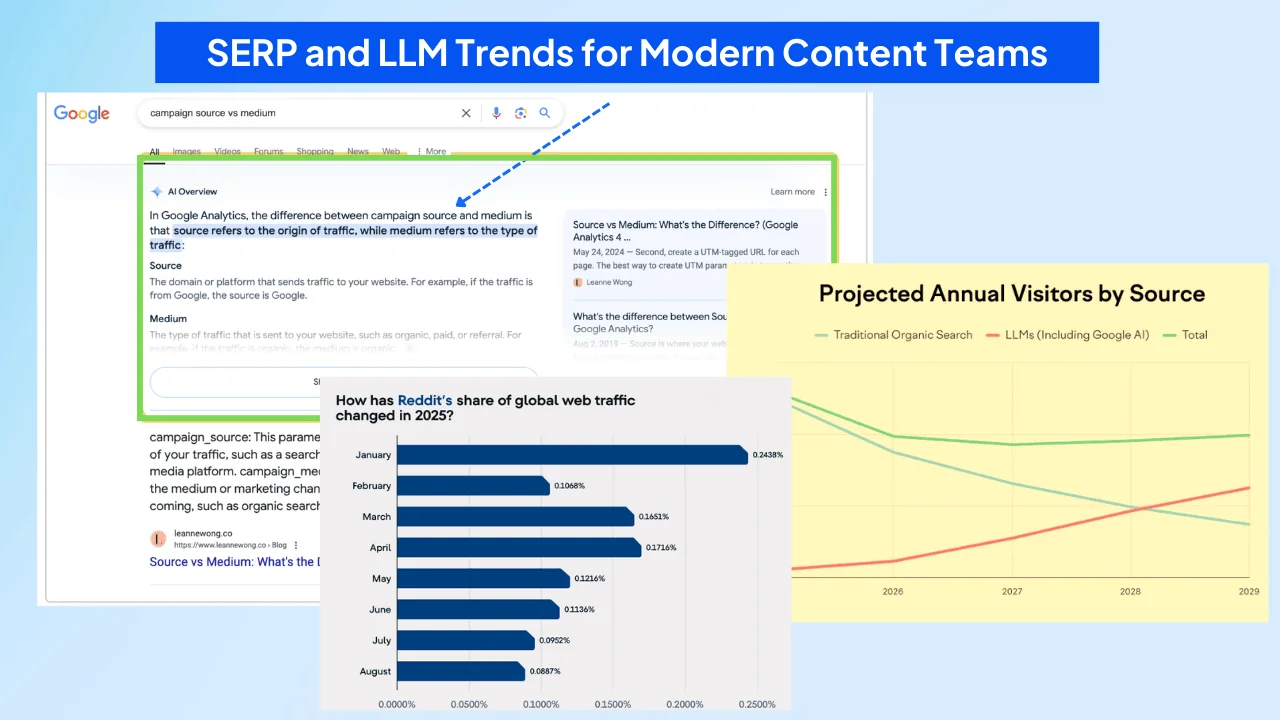

LLMs prioritize authentic, context-rich insights—leading to a surge in visibility for UGC platforms. Verified analytics show Reddit’s organic traffic increased by 603% and Quora’s by 379% as AI models began citing community-driven responses more frequently (MTSOLN, 2024).

Search Is No Longer a Single Platform

With LLMs powering answers across search engines, chatbots, browsers, and third-party tools, users are no longer confined to Google for discovery. This diversification means brands must build visibility across multiple platforms. A Google-only strategy now risks missing large portions of user intent and behavior.

AI-driven search is redefining how content gains visibility. To stay competitive, teams must adopt LLMO and GEO principles, incorporate UGC signals, and expand visibility beyond traditional search engines. Content built for both SERP ranking and LLM extraction will shape the next era of digital discovery.

How To Rank For Conversational Queries In Google SERP?

Google increasingly interprets search the way people naturally speak—including full questions, long-tail phrasing, and voice-driven prompts.

To rank for these queries, content must align with conversational language, match user intent, and follow extractable formats Google can surface in featured snippets, AI Overviews, and People Also Ask boxes.

Strong performance for conversational queries comes from using question-based keywords, building FAQ sections, applying structured data, optimizing for snippets, ensuring mobile readiness, and aligning your content with user intent.

1. Focus on Long-Tail, Question-Based Keywords

- Identify conversational questions using tools like AnswerThePublic and Semrush.

- Write in natural, spoken-style language that mirrors how users phrase queries.

Example: Instead of “best SEO practices,” optimize for “What are the best SEO practices for conversational queries?”

2. Develop Clear, Concise FAQ Sections

- Use real questions from support tickets, customer calls, and social media.

- Keep answers short (under 280 characters) to increase snippet eligibility.

3. Use Structured Data and Schema Markup

- Apply FAQ schema for question-based sections.

- Use HowTo, Product, or LocalBusiness schema when relevant.
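As a rough illustration of the FAQ schema mentioned above, the Python sketch below builds FAQPage JSON-LD from question and answer pairs. The function name `build_faq_jsonld` and the sample question are made up for this example; the `FAQPage`, `Question`, and `Answer` types and the `mainEntity` and `acceptedAnswer` properties come from schema.org.

```python
import json

def build_faq_jsonld(faqs):
    """Return a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

# Hypothetical sample content, not taken from a real page.
faqs = [
    ("What is a SERP?",
     "A SERP is the page of results a search engine shows for a query."),
]
print(build_faq_jsonld(faqs))
```

The printed JSON is typically placed inside a `<script type="application/ld+json">` tag on the page that shows the same questions and answers to readers.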

4. Optimize for Featured Snippets

- Lead with a one-sentence answer, then add brief explanation.

- Use bullets, numbering, and tables to improve SERP extraction.

5. Ensure Mobile-Friendly Performance

- Use responsive layouts to support mobile and voice-driven searches.

- Improve page load speed to strengthen ranking signals.

6. Align Content With User Intent

- Answer informational queries thoroughly.

- Guide users clearly for navigational intent.

- Use strong CTAs for transactional queries.

How Does Conversational Search Impact Featured Snippets on Google?

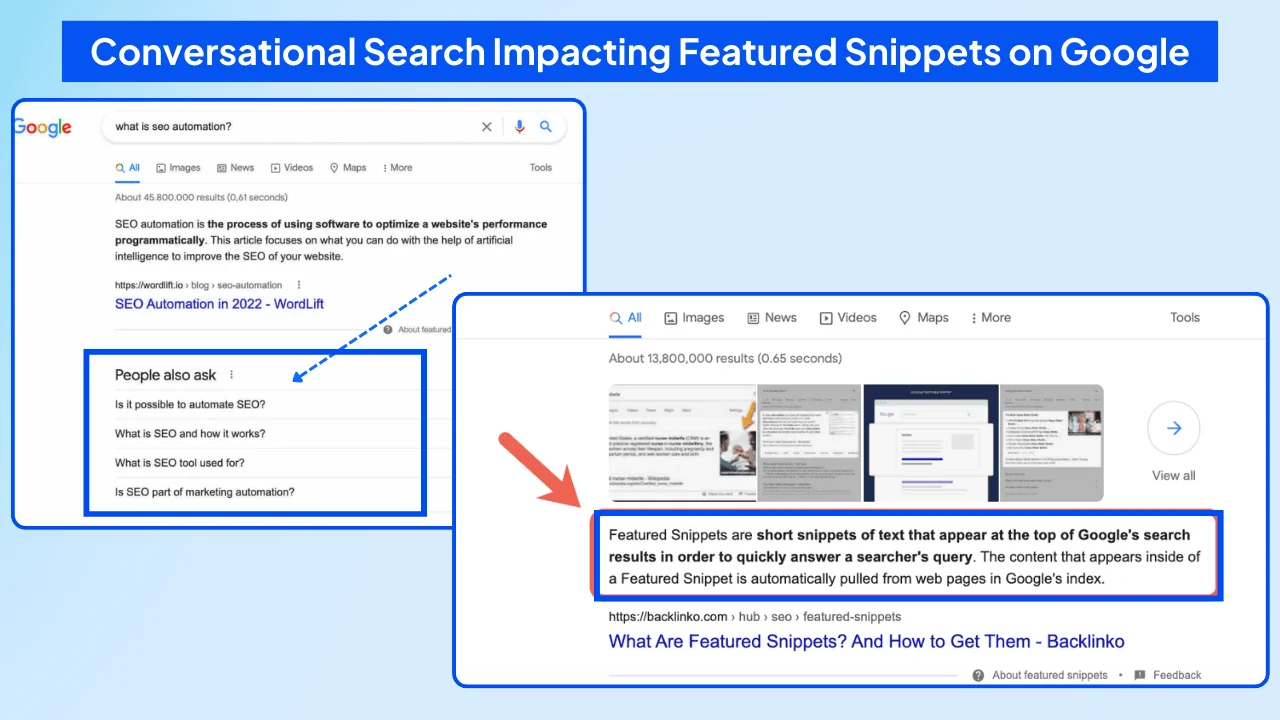

Conversational search has reshaped how Google selects and displays featured snippets. As users increasingly ask natural, question-based queries, Google responds with richer, AI-supported formats designed to answer those questions instantly—even before a user clicks anything.

This shift makes it essential to create content for SERP and LLM that is structured, clear, and ready for direct extraction.

AI Overviews Are Replacing Traditional Snippets

Google’s newer AI Overviews pull information from multiple trusted sources to create full, conversational answers. This has reduced the frequency of classic single-source snippets and shifted visibility toward multi-layered responses that feel closer to human dialogue.

As a result, mastering AI Overviews Optimization has become a primary objective for teams who want to maintain their share of voice in a search environment that increasingly favors synthesized, multi-source summaries over traditional blue links.

Zero-Click Behavior Is Growing

Because AI Overviews often answer the question completely, users are less likely to click through to a website. This rise in zero-click searches changes how content teams approach visibility and reinforces the need to structure information so it can be surfaced directly in Google’s answer formats.

Authority and Structure Matter More Than Ever

Pages that demonstrate high authority, offer clean structure, and present direct answers are more likely to be included in AI-driven summaries. Google now favors content that is factually strong, clearly organized, and easy for its systems to extract at a passage level.

How to Align Content With Conversational Snippet Behavior

- Use schema markup such as FAQ and HowTo to help Google understand your page structure.

- Write in a conversational tone that reflects how people naturally speak and search.

- Answer specific questions directly at the start of each section to increase extractability.

To adapt to these shifts, creators need to build content that works for both snippets and AI Overviews. A helpful guide is the Wellows breakdown on optimizing for featured snippets, which explains how page structure, clarity, and formatting influence search visibility.

These same principles apply inside generative models. Tests like Wellows’ ChatGPT visibility experiment show how conversational formatting and direct-answer structure increase the likelihood that an LLM will extract and reuse your content.

The closer your writing matches real conversational intent, the more likely it is to surface across both SERPs and AI-generated answers.

What Strategies Can I Use to Optimize for SERP and LLM Visibility?

To optimize your website for both traditional SERP and large language models, you need a balanced strategy that supports ranking signals while making your content easy for LLMs to understand, extract, and cite. This dual approach strengthens visibility across Google’s results and AI-generated answers.

1. Develop High-Quality, Authoritative Content

LLMs and search engines reward depth, accuracy, and expertise. Create content that demonstrates authority through original data, examples, case studies, and clear explanations. High-quality, well-researched content is more likely to rank and be selected for AI summaries.

2. Implement Structured Data and Schema Markup

Use schema markup such as FAQ, HowTo, Article, and Product schema to help both Google and LLMs understand your content’s structure. Schema improves SERP appearance and increases your chances of being reused in AI-generated responses.

3. Optimize for Conversational Queries

LLMs rely heavily on natural-language patterns. Use question-based subheadings, answer-first formatting, and concise explanations to align with how users phrase their queries in voice search and chat interfaces.

4. Strengthen Internal Linking and Content Clusters

Build topic clusters around core themes and support them with strategic internal linking. This signals topical authority to Google and helps AI systems understand contextual relationships across your site.

5. Improve User Experience and Technical SEO

Fast loading speeds, mobile-friendly layouts, clean code, and strong Core Web Vitals (LCP, INP, CLS) improve rankings and help LLMs parse stable, predictable content. Technical health is foundational for both search engines and AI-driven crawlers.
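For a quick sanity check on Core Web Vitals, the sketch below compares measured values against the "good" thresholds Google publishes (LCP at or under 2.5 seconds, INP at or under 200 milliseconds, CLS at or under 0.1). Note that INP replaced FID as a Core Web Vital in March 2024. The metric key names and the `failing_vitals` helper are assumptions for this example, and the measurements are invented.

```python
# "Good" thresholds from Google's Core Web Vitals guidance.
# Key names (lcp_s, inp_ms, cls) are illustrative, not a standard format.
GOOD_THRESHOLDS = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}

def failing_vitals(measured):
    """Return the metrics that exceed the 'good' threshold.

    A metric missing from `measured` is treated as failing.
    """
    return [
        name
        for name, limit in GOOD_THRESHOLDS.items()
        if measured.get(name, float("inf")) > limit
    ]

# Hypothetical field data for one page.
page = {"lcp_s": 2.1, "inp_ms": 350, "cls": 0.05}
print(failing_vitals(page))
```

Treating a missing metric as failing is a deliberate choice here: it keeps pages with incomplete measurements from silently passing.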

6. Maintain Consistent and Updated Content

7. Use Semantic SEO Practices

Write the way people think, search, and speak. Focus on related phrases, contextual signals, and natural language rather than repeating exact-match keywords. Semantic richness helps your content perform better in both SERPs and AI-generated answers.

8. Monitor and Adapt to AI Search Trends

Track how your brand appears in AI-generated responses and adjust your strategy accordingly. Tools like Otterly.ai show how LLMs interpret your content, allowing you to refine structure, clarity, and entity coverage.
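If you save AI responses to your target prompts yourself, a simple count of brand mentions per model is one way to start tracking. The sketch below assumes you already have the response text stored by model name; the `brand_mentions` helper and the sample responses are hypothetical and do not reflect how any particular monitoring tool works.

```python
import re

def brand_mentions(responses_by_model, brand):
    """Count case-insensitive whole-word mentions of `brand` per model."""
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    return {
        model: sum(len(pattern.findall(text)) for text in texts)
        for model, texts in responses_by_model.items()
    }

# Invented sample responses, for illustration only.
responses = {
    "chatgpt": ["Wellows and Semrush are options.", "Try wellows for briefs."],
    "gemini": ["No relevant brand is named here."],
}
print(brand_mentions(responses, "Wellows"))
```

Running the same prompts on a schedule and comparing the counts over time gives a rough trend line, though it says nothing about sentiment or citation links.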

When your website aligns with both SERP fundamentals and LLM-friendly structure, you build a durable visibility foundation across every platform where users search, ask questions, or compare information.

How Do I Map User Intent From Conversational Queries to Actionable Content?

Mapping user intent from conversational queries starts with understanding what people are truly trying to accomplish when they ask natural, voice-style questions.

Instead of focusing on keywords alone, you interpret the underlying goal of the query and turn it into content that answers clearly, directly, and in the right format.

Identify the Intent Behind Conversational Queries

Conversational questions make intent easier to recognize because they reveal exactly what the user wants. By studying how these questions are phrased, you can separate them into informational, commercial investigation, or transactional intent.

This clarity helps you see whether someone is exploring a topic, comparing options, or preparing to take action.

- Informational intent: “How does X work?” or “Why does this happen?”

- Commercial investigation: “Best tools for…” or “X vs. Y for my needs”

- Transactional intent: “Buy…,” “Download…,” or “Sign up for…”
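The three intent categories above can be approximated with a few keyword rules. This is a minimal sketch, not a production classifier: the cue patterns and the `classify_intent` name are assumptions, and real query data will need a richer rule set or a trained model.

```python
import re

# Hypothetical cue patterns based on the example phrasings above.
# Order matters: the first matching intent wins.
INTENT_CUES = {
    "transactional": [r"\bbuy\b", r"\bdownload\b", r"\bsign up\b"],
    "commercial": [r"\bbest\b", r"\bvs\b", r"\bcompare\b"],
    "informational": [r"^how\b", r"^why\b", r"^what\b"],
}

def classify_intent(query):
    """Return the first intent whose cue pattern matches the query."""
    text = query.lower().strip()
    for intent, patterns in INTENT_CUES.items():
        if any(re.search(pattern, text) for pattern in patterns):
            return intent
    return "unclassified"

for q in ["How does schema markup work?", "Best tools for keyword research",
          "Sign up for a free trial"]:
    print(q, "->", classify_intent(q))
```

Transactional and commercial cues are checked before informational ones so that a query like "What is the best CRM?" lands in commercial investigation rather than informational.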

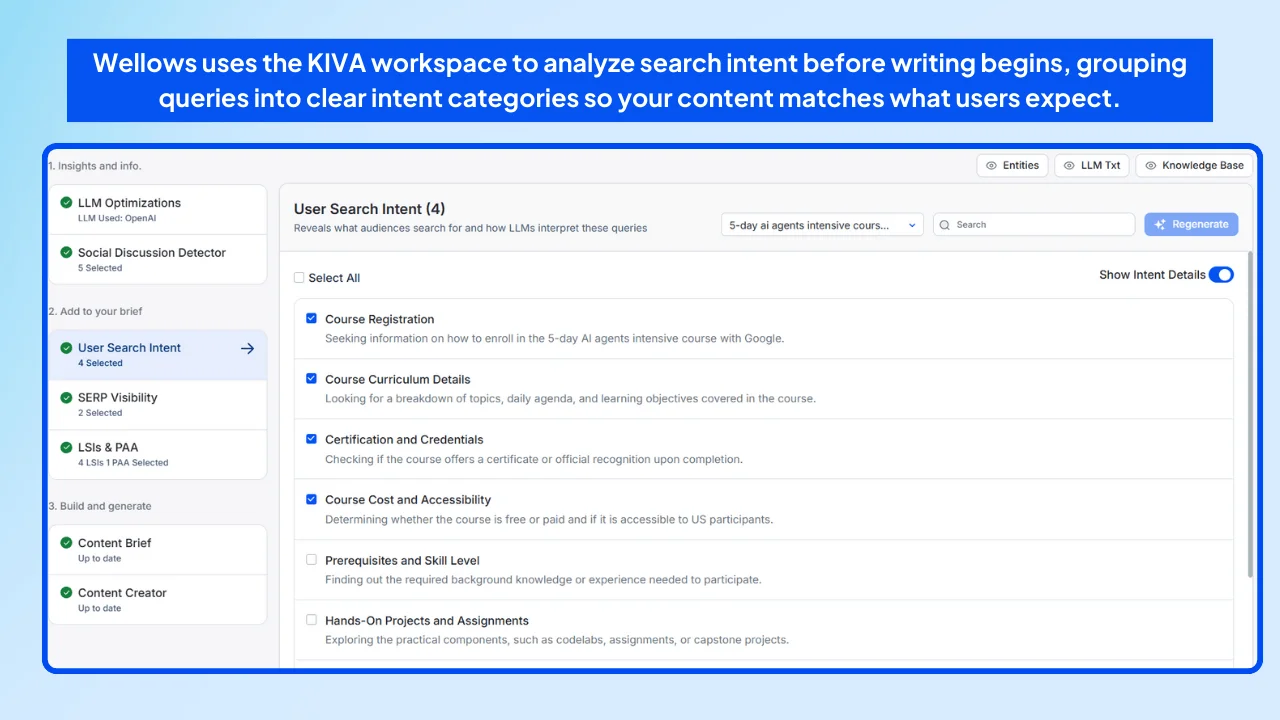

Tools that categorize intent automatically can speed up this process. For example, Wellows uses the KIVA workspace for User Intent Analysis before writing begins, grouping queries into clear intent categories so your content matches what users expect.

Create Content That Matches Real User Intent

Once you know the intent behind a query, build content that meets it without forcing the reader to hunt for answers. Informational intent needs explainers, tutorials, and FAQs.

Commercial investigation benefits from comparisons, lists, and reviews. Transactional intent performs best when supported by simple product pages and clean, high-clarity CTAs.

Real data also helps you understand intent at scale. Reviewing phrasing patterns inside Google Search Console often reveals what people actually asked for—not what you assumed they queried.

The Wellows guide on GSC keyword analysis is useful here, showing how query clusters can uncover intent shifts you might otherwise miss.

Use Intent-to-Answer Mapping to Make Content Discoverable

Intent-to-answer mapping connects common conversational prompts with the content formats that satisfy them best. When someone asks a natural-language question, your content should already exist in the structure Google or an LLM prefers—answer first, then expand.

- Match prompts to the right content type—FAQ, blog, comparison, or product page.

- Lead with a direct answer that an AI model can extract instantly.

- Use mirrored headings (e.g., “How does…?”) to help match the search query.
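The mapping steps above can be sketched as a small lookup that turns a query and its intent into a starter brief. The dictionary, the `outline_for` name, and the fallback format are illustrative assumptions; the intent-to-format pairings follow the ones described in this section.

```python
# Pairings follow the text above; names and fallback are illustrative.
FORMAT_BY_INTENT = {
    "informational": "FAQ or explainer",
    "commercial": "comparison or list",
    "transactional": "product page with a clear CTA",
}

def outline_for(query, intent):
    """Return a tiny brief: content format, mirrored heading, answer slot."""
    text = query.strip()
    return {
        "format": FORMAT_BY_INTENT.get(intent, "blog post"),
        # Mirror the user's phrasing, capitalizing only the first letter.
        "heading": text[:1].upper() + text[1:],
        "first_line": "<one-sentence direct answer>",
    }

print(outline_for("how does FAQ schema work?", "informational"))
```

Keeping the heading identical to the query wording, apart from capitalization, is what makes it a mirrored heading.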

Use Conversational Patterns from AI Systems

Language models reveal predictable patterns in how they interpret and answer questions. Studying these patterns helps you shape content that feels “AI-readable”—clean structure, tight paragraphs, and clear entities.

Content that aligns with these patterns tends to perform better across SERPs, AI Overviews, and generative engines.

Refine Content as Intent Signals Evolve

User intent changes as new questions emerge. Monitoring conversational trends, SERP shifts, and LLM outputs helps you adjust content structure, depth, and clarity so your pages stay aligned with what people are actually asking today.

When mapped correctly, conversational queries become precise content opportunities that work across Google, AI Overviews, and LLM-driven experiences—turning intent into visibility at every stage of discovery.

How SERP Structure Shapes Visibility Across AI Overviews and LLMs

SERP feature optimization has evolved beyond blue links as Google blends featured snippets, AI Overviews, and People Also Ask boxes with generative interfaces—making it essential to create content for SERP and LLM that can be extracted, summarized, and surfaced across both search and AI environments.

Visibility now requires designing content tailored for Google’s SERP structure while also aligning with how large language models analyze, extract, and summarize information. This blending of traditional results with generative interfaces means content must be both search-friendly and AI-friendly.

What Does ‘Create for SERP’ Mean?

- Add meta tags and markup that signal relevance.

- Write keyword-aligned content that reflects real user phrasing.

- Use schema markup to help Google understand context.

Creating For SERP and Large Language Models

Modern optimization requires designing content that works for both search engines and LLMs like ChatGPT, Claude, and Gemini. Structuring content into clear sections, using question-based headers, and presenting information in extractable formats supports visibility across both systems.

SERP Visibility Goes Beyond Ranking

Winning formats follow predictable patterns used in conversational and AI-driven search:

- How-to guides dominate instructional intent.

- Listicles and comparisons win for commercial investigation.

- UGC and forums perform well for trust-based searches.

Ignoring these formats leads to broader LLM visibility issues, where content fails across both SERPs and AI summaries.

Analyze SERPs to Extract Content Opportunities

SERP tools like SEOTesting, Semrush SERP Features, Ahrefs SERP Overview, BrightEdge, and SE Ranking reveal structural trends across top-ranking pages.

Look for:

- Repeated winning formats

- Authority gaps you can fill

- Use of multimedia, FAQs, or structured markup

- If “AI writing tools” shows roundups, follow that style.

- If forums dominate a query, use UGC-style insights.

To scale implementation, explore 5 Tips to Triple Content Output Using AI Writing Assistants, which shows how AI workflows make SERP-aligned drafting faster.

Turn SERP Visibility Into Content Briefs

Before writing, shape your outline based on SERP patterns—format, structure, and depth.

- Choose the right format for the intent.

- Match the depth of pages already winning the SERP.

- Use headers and subheaders that mirror dominant hierarchy.

For real examples, see how to create an AI content brief using the Wellows workspace.

According to Semrush’s 2025 R&D study, 88.1% of searches that triggered AI-generated answers also displayed featured snippets or PAA panels on page one.

Why Large Language Models Extract Content Through Semantic Analysis

AI content selection patterns reveal unique behaviors. Large language models like ChatGPT-4, Claude-3, and Gemini Pro don’t rank pages like traditional search algorithms.

Instead, they use transformer architectures and attention mechanisms. These models employ semantic chunking, passage retrieval, and contextual analysis to identify and remix content blocks.

These systems prioritize semantic relevance, clear structure, and trusted citations. Unlike Google, LLMs don’t rely on traditional ranking signals like backlinks or keyword density. Instead, they extract information based on clarity, contextual fit, and answer quality.

To better understand how these AI-driven citations compare to traditional SEO link-building, see how LLM citations differ, where we break down the shifting role of trust and authority in generative search.

LLMs Focus on Passage-Level Accuracy and Context

- LLMs retrieve content from specific passages that directly answer user prompts.

- They value well-defined, self-contained content blocks over long-form narrative.

- Semantic chunking ensures retrievability by aligning with user intent. For example, well-structured Q&A blocks often get cited in generative responses.

Citation Bias and Trust Signals in LLM Outputs

LLMs tend to cite high-trust sources like Wikipedia, Reddit threads, and reputable publishers.

An internal analysis by Wellows used controlled research methodology. The study analyzed 7,785 LLM-generated queries across 12 industry verticals. These included healthcare, finance, e-commerce, SaaS, manufacturing, and legal services.

Results showed 48% of citations came from high-authority domains. These domains included news publishers, educational institutions, and government databases with domain authority scores above 70.

For commercial searches, the same Wellows study found that 66% of citations referenced product specifications or expert reviews. This shows that clear, detailed, and informative content is far more likely to be cited, especially when it helps answer specific, product-driven questions.

Semrush has also reported that nearly 90% of ChatGPT citations come from content beyond the top 20 Google results. This suggests that structure and clarity may outweigh traditional rankings when it comes to AI citation.

Each LLM shows its own preference. For example, 47% of Perplexity’s citations come from Reddit, highlighting the value of peer-generated insights.

To explore this further, check out Why Generative Engines Love Reddit? for a breakdown of why forums dominate AI citation logic and how you can adapt your strategy to benefit from similar formats.

Structuring Content for LLM Visibility

To target LLM visibility effectively:

- Break content into small, labeled chunks (e.g., “Step 1: Research SERP Trends”).

- Embed clear signal cues like FAQs or TL;DR summaries.

- Incorporate cited facts, data, and credible sources that align with each model’s citation behavior.

This modular approach improves extractability, increasing the chance that an AI model will pick and cite your content.
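To see what "small, labeled chunks" looks like in practice, the sketch below splits a Markdown draft into one chunk per heading. It assumes the draft uses `#`-style Markdown headings, which is an assumption about your drafting format; the `split_into_chunks` name is hypothetical, and this says nothing about how any specific LLM chunks pages internally.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")

def split_into_chunks(markdown_text):
    """Split Markdown into (heading, body) chunks, one per heading.

    Text before the first heading is labeled "Introduction".
    """
    chunks = []
    heading, body = None, []

    def flush():
        # Only emit a chunk if we have a heading or some non-blank text.
        if heading is not None or any(line.strip() for line in body):
            chunks.append((heading or "Introduction", "\n".join(body).strip()))

    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            heading, body = match.group(2).strip(), []
        else:
            body.append(line)
    flush()
    return chunks

draft = """Intro text

## Step 1: Research SERP Trends
Look at the formats that win.

## Step 2: Outline
Plan answer-first sections."""

for title, text in split_into_chunks(draft):
    print(f"[{title}] {text}")
```

Reading each chunk on its own is a quick test of extractability: if a chunk does not make sense without its neighbors, it probably needs a clearer label or a named entity.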

To apply this approach consistently, explore our guide on Chunk optimization for AI SERPs, where we break down how to label, format, and structure content blocks for maximum visibility across both search and AI interfaces.

How Content Alignment Maximizes SERP and LLM Visibility

Multi-platform content visibility requires semantic long-form content creation strategies that align with both SERP optimization and LLM citation best practices through a methodical approach.

Each article must create content for SERP and LLM by matching how people search and how platforms select, display, and summarize information.

A step-by-step workflow ensures that each part of the article meets the expectations of Google Search and LLM tools like ChatGPT or Gemini.

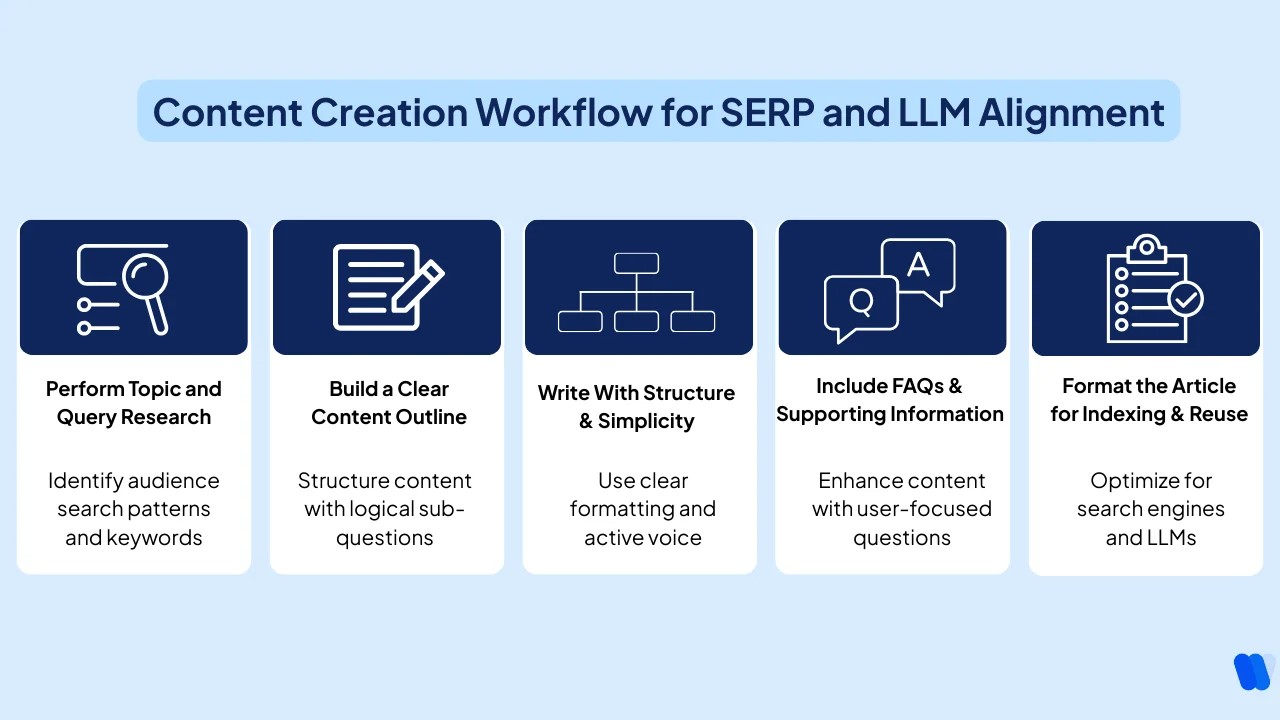

Step 1 – Perform Topic and Query Research

Effective research begins by identifying what the audience is already searching for. Query-based research helps structure content around real demand rather than assumptions.

AI keyword research platforms reveal important patterns. Tools like Wellows, Semrush, Ahrefs, and AlsoAsked identify phrasing patterns and snippet formats. Content optimization tools like Clearscope, MarketMuse, and SurferSEO analyze ranking content structures. Analytics platforms including Google Search Console and Adobe Analytics provide performance data.

To guide research:

- Use the “Questions” filter in keyword research tools to extract real search queries

- Prioritize keywords that trigger featured snippets or FAQ blocks in Google Search

- Study the structure of top-ranking articles to identify formatting patterns

Matching keyword intent and question phrasing improves discoverability across both SERPs and LLM outputs, especially when guided by AI SEO agents using SERP visibility, which surface query patterns and content structures that rank in search and get cited in AI responses.

Step 2 – Build a Clear Content Outline

A clear content outline helps structure the article into answer-first sections. Google Search and LLM tools both favor writing that solves a problem upfront and supports the answer with details.

To create a strong outline:

- Choose one main idea or question per article

- Break the topic into logical sub-questions that become H2 or H3 headings

- Arrange sections to mirror the typical order of user discovery: definition → how-to → tips → FAQs

A structured outline often begins with a definition of AI content, then moves into use cases, workflow integration, tool selection, and measurable outcomes, as shown in How Marketers Use AI Content in Their Workflow.

This structure helps guide both readers and search engines through the topic in a logical, intent-aligned flow.

Step 3 – Write With Structure and Simplicity

Google Search prioritizes content that is easy to scan and understand. LLM tools extract content from pages that lead with the answer and minimize ambiguity.

Writers should use formatting that signals clarity.

To improve structure:

- Begin each section with a one-sentence answer, followed by supporting explanation

- Keep paragraph length between 2–4 lines

- Use numbered lists or bullets to break down instructions

Writing should rely on the active voice, neutral tone, and sentence lengths between 15–20 words for consistent readability. Overuse of transitional phrases or introductory filler should be avoided.
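The 15–20 word sentence guideline is easy to check automatically. The sketch below uses a deliberately naive sentence splitter (it breaks after a period, exclamation mark, or question mark followed by whitespace), so abbreviations such as "e.g." will be miscounted. Function names and the sample text are made up for this example.

```python
import re

def split_sentences(text):
    """Naive splitter: breaks after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def long_sentences(text, max_words=20):
    """Return sentences with more than `max_words` words."""
    return [s for s in split_sentences(text) if len(s.split()) > max_words]

def average_sentence_length(text):
    """Return the mean number of words per sentence (0.0 for empty text)."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    return sum(len(s.split()) for s in sentences) / len(sentences)

sample = ("Schema markup helps search engines understand a page. "
          "Lead with the answer, then add supporting detail.")
print(average_sentence_length(sample))
print(long_sentences(sample))
```

A check like this works best as a drafting aid: flagged sentences are candidates for splitting, not automatic failures.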

Step 4 – Include FAQs and Supporting Information

Frequently asked questions improve SERP presence and help language models understand the full scope of the topic.

These sections often appear as AI-generated answers, Google’s “People Also Ask” boxes, or FAQ schema-enhanced listings.

To build an effective FAQ section:

- Select 3–5 questions based on actual user queries from keyword tools

- Label the section clearly with headings like “FAQs,” “Common Questions,” or “Related Topics”

- Answer each question in 40–60 words using complete sentences
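The FAQ guidelines above translate into a simple checklist. This sketch flags sections with too few or too many questions, answers outside the 40–60 word range, and questions that do not end with a question mark. The `check_faq` helper and the placeholder answers are hypothetical.

```python
def check_faq(faqs, min_words=40, max_words=60):
    """Return a list of issues for a list of (question, answer) pairs."""
    issues = []
    if not 3 <= len(faqs) <= 5:
        issues.append(f"expected 3-5 questions, found {len(faqs)}")
    for question, answer in faqs:
        word_count = len(answer.split())
        if not min_words <= word_count <= max_words:
            issues.append(f"{question!r}: answer has {word_count} words")
        if not question.strip().endswith("?"):
            issues.append(f"{question!r}: not phrased as a question")
    return issues

# Placeholder answers used only to exercise the word-count rule.
faqs = [
    ("What is a SERP?", "A SERP is a results page."),
    ("How do LLMs pick sources?", " ".join(["word"] * 45)),
    ("Schema basics", " ".join(["word"] * 50)),
]
for issue in check_faq(faqs):
    print(issue)
```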

To create high-impact FAQ sections, it’s important to align with real user intent. One effective approach is using People Also Ask data, which provides actual search queries you can turn into precise, snippet-ready answers.

According to Google’s Search Central (Webmaster Trends Team, 2023), properly marked-up FAQ sections using FAQPage schema may be displayed as rich results in search listings or Google Assistant, helping users find answers directly in search.

Step 5 – Format the Article for Indexing and Reuse

Content formatting affects how Google Search ranks the article and how LLM tools extract and display information.

Articles must be built for scanning, understanding, and retrieval at both page-level and section-level. Using clear headers, structured data, and modular sections makes content more reusable and visible.

To improve formatting:

- Use consistent heading levels (H1 for the title, H2 for questions, H3 for supporting points)

- Keep paragraphs between 2–4 lines

- Insert internal links that match related topics using clear anchor phrases (e.g. “SEO topic clusters” instead of “click here”)

- Apply FAQPage schema if the article includes a dedicated Q&A section

- Repeat the main topic keywords naturally across sections without keyword stuffing

Formatting should make every section function as a self-contained answer. When the reader (or a machine) lands in the middle of the article, the section should still make sense without scrolling—especially when you create content for SERP and LLM where extractability and clarity directly influence visibility.

How Wellows Maps Visibility Across Search Engines and LLMs

Modern content strategy requires more than keyword targeting — you need to understand how both search engines and LLMs interpret, structure, and surface information. Inside the Wellows visibility stack, this becomes a single connected workflow instead of a fragmented process.

The visibility workspace (powered by the legacy KIVA module) helps teams move beyond isolated SEO tasks and build AI-aligned content with clarity and structure.

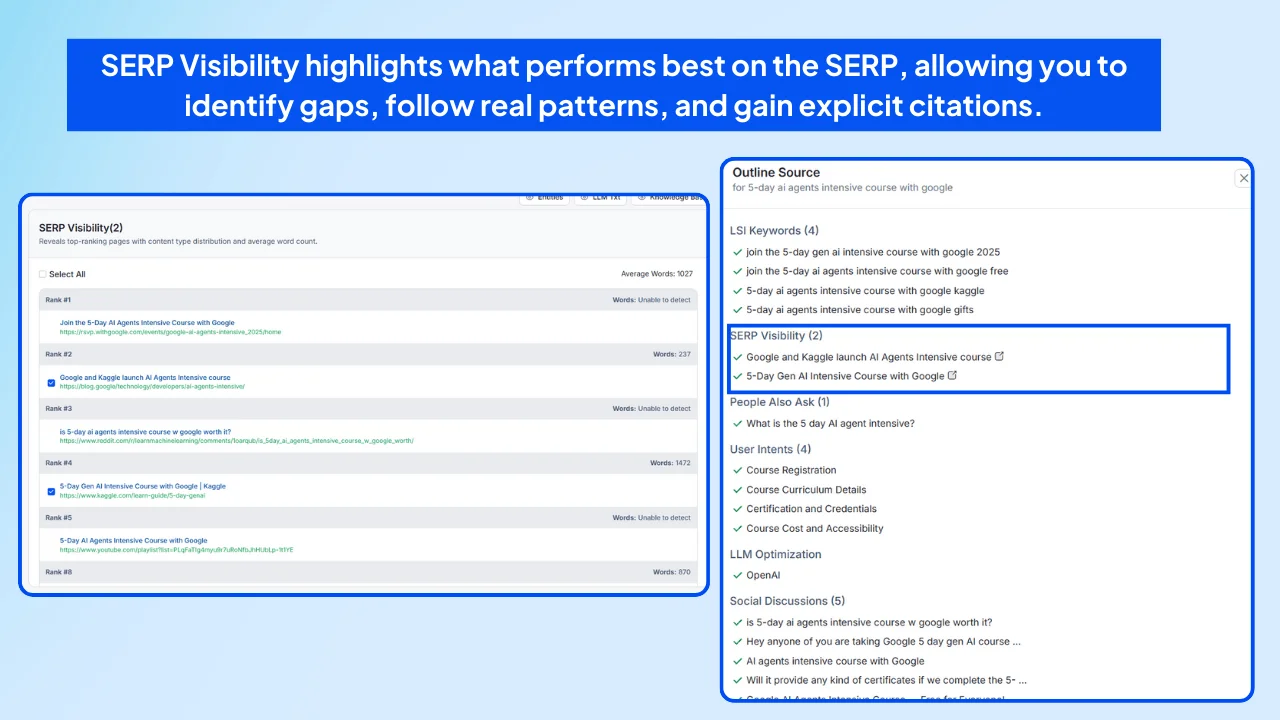

One key capability is the SERP Visibility feature, which shows the dominant content formats Google ranks — from how-to guides and UGC to product roundups and videos — helping you structure briefs around real SERP patterns.

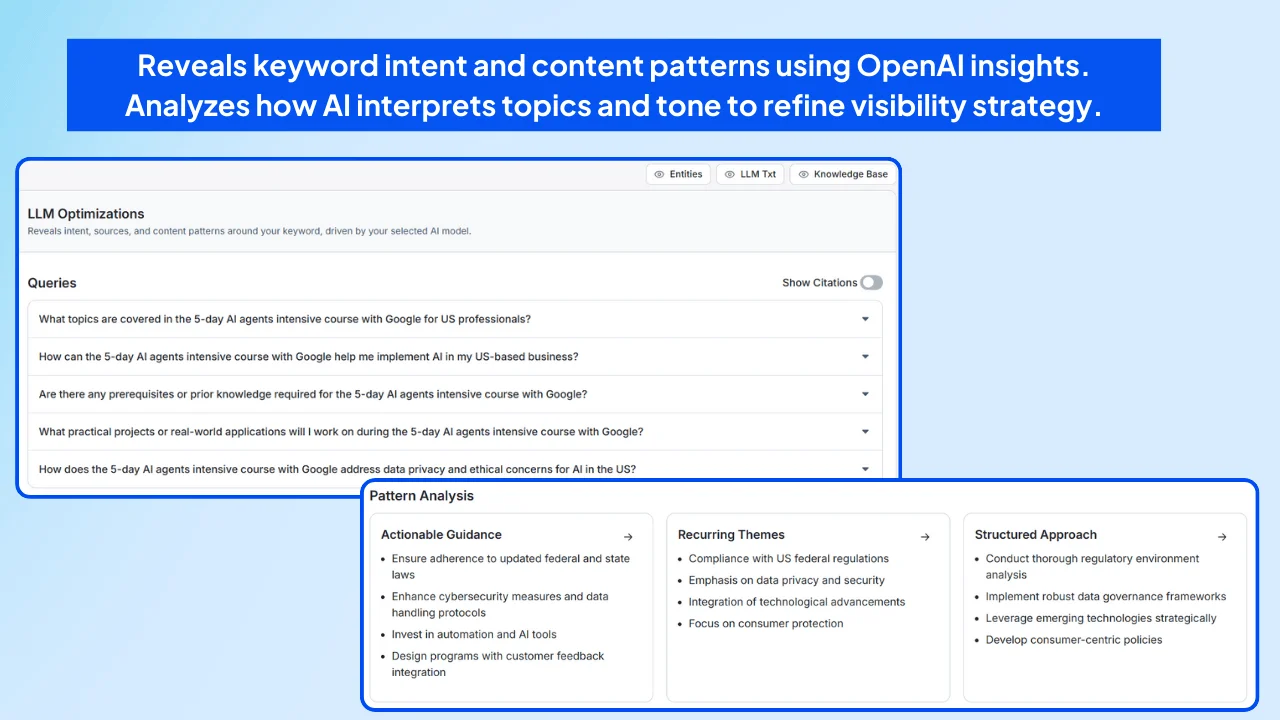

Another is the LLM Visibility feature, which reveals how models like ChatGPT, Claude, and Gemini interpret your topic, what phrasing they prefer, and which sources they consistently surface or cite.

For teams scaling toward automated execution, the guide on Agentic AI Marketing explains how Wellows connects SERP and LLM visibility into one unified framework.

Step 1: Analyze SERP Behavior

The SERP Visibility layer inside Wellows shows you the top-performing pages, formats, and structures in real time, helping you spot visibility opportunities, align with proven search patterns, and create content that earns explicit citations.

- Identify which formats win (guides, UGC, reviews, roundups)

- See visual elements such as featured snippets, videos, and PAA

- Compare competitors and structural gaps instantly

- Use interactive “View” panels to pull SERP structure into briefs

This lets teams create briefs aligned with what Google rewards — so content meets user expectations and matches current search behavior.

Step 2: Understand LLM Citation Logic

Search shows what users click — LLMs show what gets cited, summarized, or trusted. The LLM Visibility feature analyzes how leading models interpret your topic, revealing phrasing patterns, structural expectations, and source preferences across ChatGPT, Claude, Gemini, and DeepSeek.

- Discover the queries models generate around your topic

- See which domains LLMs cite most often — and why

- Reveal preferred answer formats (lists, steps, comparisons)

- Measure brand mentions across models

- Extract structural cues for future content briefs

This gives you model-specific snapshots that highlight where visibility is strong, where it’s weak, and what content opportunities exist.

- ✔ Identify what ranks in search and what appears in AI answers

- ✔ Structure content using proven SERP and LLM patterns

- ✔ Benchmark brand visibility across engines and models

- ✔ Build briefs faster with clearer, pattern-aligned insights

By combining SERP data with LLM citation behavior, Wellows helps you build content that aligns with both search algorithms and generative engines — resulting in pages that are easier to find, reuse, and trust.

Why LLM Tools Prioritize Structured Content Selection

AI tools such as ChatGPT, Perplexity, and Google’s AI Overviews select content based on clarity, structure, and how easily a section can answer a user’s question. They scan public content, identify cleanly formatted passages, and extract blocks that follow predictable heading and paragraph patterns.

Articles that use logical hierarchies, answer-first formatting, and concise language are consistently more likely to be chosen, summarized, or cited inside AI responses, especially when you create content for SERP and LLM that is structured for easy extraction.

LLM Tools Prefer Question-Based Sections and Predictable Structure

Most LLMs are trained to answer natural-language questions. When your H2s and H3s are phrased as complete questions — such as “How do search engines identify structured content?” — the model immediately understands what the section is about and how to repackage it.

LLMs scan the header first, then evaluate whether the paragraph directly answers the question. The simpler and more direct the answer, the better the extraction quality.

- Write H2s and H3s as real questions

- Place the answer in the first 2–3 lines

- Use simple definitions for technical terms

For example, an H2 like “What Is a Semantic Keyword Cluster?” followed by “A semantic keyword cluster is a group of related search terms…” signals answer-first clarity that LLMs prefer.
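A heading audit along these lines can be scripted in a few lines. The sketch below treats a heading as question-based when it starts with a common question word and ends with a question mark. The word list and the `is_question_heading` name are assumptions for illustration, and the rule is a heuristic rather than a grammar check.

```python
# Hypothetical list of opening words that usually signal a question.
QUESTION_WORDS = {
    "what", "how", "why", "when", "where", "which", "who",
    "can", "do", "does", "is", "are", "should",
}

def is_question_heading(heading):
    """Return True if the heading opens with a question word and ends with '?'."""
    text = heading.strip().lower()
    words = text.split()
    return bool(words) and text.endswith("?") and words[0] in QUESTION_WORDS

headings = [
    "What Is a Semantic Keyword Cluster?",
    "Semantic Keyword Clusters",
]
for h in headings:
    print(h, "->", is_question_heading(h))
```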

Inside Wellows, the legacy KIVA workspace maps these structural patterns directly into content briefs — showing how question-based headers, short paragraphs, and clean formatting improve the chances of being included in AI summaries.

Clarity, Simplicity, and Sentence Structure Affect Extraction Quality

Sections chosen by LLM tools usually share consistent traits: 15–20 word sentences, active voice, and clearly named entities. LLMs need supporting facts, not filler, so vague intros and long, wandering sentences reduce extraction potential.

- Clear entities (“Semrush is a keyword analysis platform…”)

- Short, factual lines with minimal filler

- Answer-first paragraphs with tight structure
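To make the sentence-length guideline easy to check, here is a minimal Python sketch that counts words per sentence and flags lines above a limit. The 20-word ceiling comes from the range mentioned above. The function names and the simple punctuation-based sentence splitter are assumptions for illustration, and the splitter will miscount around abbreviations.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, in order."""
    return [len(s.split()) for s in split_sentences(text)]


def long_sentences(text: str, max_words: int = 20) -> list[str]:
    """Sentences that exceed the word limit and may need tightening."""
    return [s for s in split_sentences(text) if len(s.split()) > max_words]


sample = "Semrush is a keyword analysis platform. It reports search volume and ranking data."
print(sentence_lengths(sample))
print(long_sentences(sample))
```

Running this on a draft section gives a quick list of sentences to shorten before publishing.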

Inside Wellows, KIVA’s LLM Visibility feature highlights these patterns by showing how ChatGPT favors clean, answer-driven passages with strong attribution and minimal promotional language.

According to seoClarity’s 2025 study, 99.5% of AI Overview summaries reference content that appears within Google’s top 10 results.

LLM Tools Use Content Blocks, Not Whole Pages

LLMs rarely summarize entire articles. Instead, they extract specific, self-contained blocks — usually the text under each H2 or H3 — that answer a single query without requiring additional context.

- Each section must work independently

- Name entities directly instead of using pronouns

- Avoid references like “the section above” or “as mentioned earlier”
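The points above can be sketched as a simple standalone check for one section body. This is a hedged illustration, not a description of how any AI tool evaluates content: the phrase list extends the two examples above with assumed variants ("as noted above", "see above"), and the pronoun list and function name are hypothetical.

```python
import re

# Phrases that point outside the section. The first two come from the list above;
# the rest are assumed variants added for illustration.
CONTEXT_PHRASES = ("the section above", "as mentioned earlier", "as noted above", "see above")
# Assumed set of pronouns that leave the subject unnamed when they open a section.
LEADING_PRONOUNS = {"it", "this", "that", "these", "those", "they"}


def standalone_issues(body: str) -> list[str]:
    """Return reasons why a section body may not make sense on its own."""
    issues = []
    lowered = body.lower()
    for phrase in CONTEXT_PHRASES:
        if phrase in lowered:
            issues.append(f"refers to other content: '{phrase}'")
    first_word = re.match(r"\W*(\w+)", body)
    if first_word and first_word.group(1).lower() in LEADING_PRONOUNS:
        issues.append(f"opens with a pronoun: '{first_word.group(1)}'")
    return issues


print(standalone_issues("It groups related search terms."))
print(standalone_issues("A semantic keyword cluster is a group of related search terms."))
```

An empty list means the section passed these two checks. It does not guarantee the block is complete enough to be quoted on its own.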

Within Wellows, KIVA’s visibility snapshots show how Google AI Overview, Gemini, Perplexity, and AI Mode interpret these block structures, helping teams format content so each section stands alone and is eligible for direct inclusion in AI-generated insights.

This also guides how ChatGPT — the only model used inside the Wellows writing workspace — extracts answer-ready blocks during drafting, refinement, and rewriting.

How Should Different Business Types Tailor Their Implementation Strategies?

While the core of SERP and LLM visibility remains universal, how you implement it shifts based on team size, workflow, and stakeholder expectations.

Agencies, consultants, startups, and freelancers each face distinct visibility challenges, and each needs a scalable way to create content for SERP and LLM visibility quickly without losing clarity or performance.

For Agencies – Scale Visibility Insights Across Clients

Agencies manage multiple clients across different industries while adapting to changing SERP formats and LLM behaviors. To keep performance consistent, they need workflows that merge SERP signals with LLM visibility patterns and deliver briefs at scale.

Agency action points:

- Use content planning platforms that integrate real-time SERP snapshots and LLM response analysis.

- Create modular brief templates that combine keyword intent with model-preferred phrasing.

- Report cross-channel visibility using tools like AlsoAsked or LLM coverage tracking.

For Consultants – Turn Visibility Signals into Strategy

Independent consultants must deliver clear, strategic insight with limited resources. A structured visibility matrix—similar to the approach shared in SEO for solo consultants—helps them turn SERP and LLM data into client-friendly direction.

Consultant action points:

- Evaluate SERP types and AI citations before recommending content topics.

- Shape deliverables around AI-friendly structure while maintaining human value.

- Use modular brief components that fit into any brand or editorial workflow.

This builds trust with clients who increasingly expect clear LLM-ready content structures. It also shows that SEO without a team can work when it is guided by intentional visibility insights.

For Startups – Move Quickly With Reliable Signals

Startups need momentum but often lack the bandwidth for deep research. Using AI for visibility helps startups close this gap and avoid guesswork by relying on hybrid SERP + LLM insights.

Startup action points:

- Use combined SERP and LLM research to uncover “low-effort, high-impact” opportunities.

- Build repeatable content formats for clusters, FAQs, comparisons, and how-tos.

- Repurpose structured content across social, email, and product education.

Startups benefit most from an AI search visibility platform like Wellows, which distills SERP patterns and LLM behavior into simple, actionable content structures. Content teams can see what search engines rank and what AI models cite without manual audits or fragmented tooling.

That is also why many startup teams increasingly rely on content-specific AI agents. See the 10 reasons writers are turning to AI to simplify execution without sacrificing clarity or structure.

For Freelancers – Deliver High-Impact Work With Limited Time

Freelancers juggle multiple clients alone. They need fast, dependable signals that guide their writing without spending hours manually checking Google or testing LLM responses.

Freelancer action points:

- Use SERP + LLM visibility data to choose topics that drive results quickly.

- Adopt consistent content formats that speed up delivery while keeping quality high.

- Turn visibility insights into clear client explanations to build trust and authority.

Freelancers who rely on structured visibility data produce stronger work in less time and avoid the guesswork that usually slows down solo creators.

FAQs

The main benefit of writing for both SERPs and LLMs is visibility across search engines and AI models. Content built for both can rank well in Google and is more likely to be selected by LLMs like ChatGPT or Gemini. This dual optimization can drive more clicks, citations, and user trust.

SERPs focus on ranking factors like backlinks and structured data, while LLMs extract content through semantic analysis. To cover both, brands must design for search engines and language models, balancing keyword signals for Google with structured, answer-first writing for AI models.

Writers need SEO skills like keyword research and schema markup, and they must also know how to write for both search results and LLMs. That means structuring answers clearly, citing trusted sources, and formatting content in modular chunks that machines can extract.

Optimization improves visibility across platforms. Content written for both search results and AI models has a better chance of appearing in featured snippets, People Also Ask boxes, and AI summaries. Without optimization, content risks being overlooked by both.

LLMs have expanded SEO beyond Google rankings. They favor clarity, chunked answers, and trusted sources. That is why it is crucial to design for both search results pages and language models, so your content performs in SERPs and AI-driven environments alike.

AI powers SERP features like AI Overviews and influences how LLMs summarize content. When you design for search engines and language models, your articles stay readable by humans and extractable by machines, giving your brand broader reach.

Conversational AI shifts users from typing short keywords to asking full, natural questions. Instead of scanning long result pages, people now expect direct, instant answers. This increases zero-click behavior, pushes Google to surface AI-generated summaries, and rewards content that is clear, structured, and answer-first.

Final Thought: Get Found Where It Counts

Today’s visibility is no longer just about ranking. It’s about relevance across every discovery moment.

Whether a user types into Google or prompts an AI assistant, your content needs to show up clearly, confidently, and consistently. That requires purposeful structure, alignment with real search behavior, and the ability to create content for SERP and LLM visibility that speaks to both humans and machines.

The creators and brands who master this balance will be the ones who rise above the noise.

Key Takeaways:

- Break content into scannable, well-labeled sections

- Include verifiable data, clear answers, and trusted citations

- Match the tone, length, and format found in AI responses

- Use TL;DRs, lists, and FAQs for easy extraction

- Monitor both SERP and LLM performance to refine your strategy