Search is being rewritten by AI. With 45% of people now using AI platforms weekly and AI-driven queries up 300% year-over-year, getting found isn’t enough anymore; you need to get quoted. That’s where LLM SEO comes in. This marks a revolutionary approach to search engine optimization for the era of generative AI and large language models.

This guide breaks down exactly how to optimize LLM content for AI citations. You’ll get the frameworks, tactics, and steps that leading brands use to dominate AI-generated answers, so your expertise shows up where attention is moving.

Whether you’re implementing LLM content optimization, mastering prompt engineering, building a comprehensive AI content strategy, or improving SERP visibility, this definitive LLM SEO guide covers everything from keyword research and semantic search to natural language processing (NLP).

Ready to stop competing for clicks and start winning citations?

Let’s go.

What is LLM SEO?

LLM SEO (also known as large language model SEO, AI SEO, generative AI SEO, or LLM-driven SEO) is the process of optimizing digital content so large language models can accurately interpret, cite, and recommend it as authoritative answers in AI-generated search results. This represents a fundamental evolution in search engine optimization, where ranking in LLM-generated content and being cited by AI platforms becomes as important as Google Search rankings.

This involves refining your content structure, semantic clarity, and technical SEO accessibility to ensure AI platforms recognize your pages as trusted sources. It often starts with an LLM audit for SEO to pinpoint what’s being cited and what’s missing, a step many brands handle internally or with guidance from specialized Content Marketing Agencies familiar with AI-driven search visibility.

The process requires balancing traditional SEO fundamentals with new AI content generation considerations and optimizing content for search engines.

Unlike legacy methods, LLM SEO focuses on making pages accessible and reference-worthy to artificial intelligence systems, ensuring visibility within large language model search output. This shift represents the convergence of traditional SEO with cutting-edge AI technology.

For a wider foundation beyond citations, AI SEO explains how AI-driven signals affect research, structure, and optimization decisions across search environments.

The core objective of LLM SEO is to raise your website’s visibility not only in search listings but most importantly inside AI-powered answers. Achieving this requires structuring your content for clarity, trustworthiness, and easy citation, qualities vital for being cited by large language models during search and answer generation.

LLM SEO stands out by prioritizing natural language structure and reliable sourcing. As AI responses gradually overtake conventional keyword-based techniques, achieving prominent placement depends on semantic organization and demonstrable subject matter authority.

Adapting to this evolution is necessary for brands seeking to stay relevant.

Businesses implementing LLM-powered SEO strategies now are positioning themselves for generative content indexing and establishing domain adaptation strategies before competitors catch up.

Multi-Platform LLM Visibility

LLM SEO extends beyond a single platform. Your content needs to rank across multiple AI search engines including Perplexity, Gemini, Claude, and emerging platforms like Google AI Mode and Meta AI.

Each platform has unique indexing patterns, but they share core preferences for structured, authoritative content. Multi-engine optimization differs from traditional Google SEO by prioritizing answer extraction over click-throughs.

LLMs pull content from their training data and real-time indexes, making visibility dependent on semantic clarity, structured markup, and third-party validation, rather than backlink volume alone.

The Library Analogy: If traditional SEO is like getting your book on the library shelf where people can find it, LLM SEO is like having librarians memorize and quote your book when answering questions.

You want to be the source they reach for first when someone asks about your topic. That is why teams often start by running an LLM visibility audit, sometimes supported by a ChatGPT Visibility Tracker, to see where they’re already cited and where they’re missing.

- Platform Diversity: Content optimized for one LLM often performs well across others due to shared quality signals.

- Citation Mechanics: Each platform cites sources differently: some link directly, others mention the brand by name.

- Real-Time vs. Training Data: Platforms like Perplexity prioritize fresh web content, while others rely more on training data.

- Tracking Requirements: Monitor visibility across all major LLMs separately to identify platform-specific gaps.

LLM SEO vs. LLMO vs. GEO vs. AEO

It’s important to differentiate LLM SEO from related concepts:

- LLM SEO (Large Language Model SEO): As defined above, optimizing content for AI-powered search.

- LLMO (Large Language Model Optimization): A broader term covering brand presence across AI platforms, including multi-modal content and knowledge databases, rather than citation in AI search results alone.

- GEO (Generative Engine Optimization): Focuses specifically on optimizing content for generative AI models like ChatGPT to ensure the AI uses your content as a source.

- AEO (Answer Engine Optimization): Focuses on optimizing content to directly answer user questions and appear in featured snippets.

What’s the Difference Between LLM SEO and LLMO?

LLM SEO targets citation visibility in AI-generated search results, while LLMO (Large Language Model Optimization) encompasses broader AI platform presence including multi-modal content and knowledge databases.

Both techniques complement each other within the AI search optimization field but serve distinct strategic goals, especially as content strategy increasingly shifts from keyword targeting to entity and intent-driven structuring outlined in modern LLM content creation approaches.

The comparison below clarifies each approach:

| LLM SEO | LLMO (LLM Optimization) |
|---|---|
| Targets increased site visibility in AI-generated search results from large language models. | Covers a wider range of AI platforms, including Perplexity, Gemini, and Claude, beyond simple text search environments. |
| Focuses on structured content, exact language, and connected sources for easier AI citation. | Incorporates entity alignment, multi-format content (text, video, code), and appearances in structured knowledge databases. |
| Can work with traditional optimization tools such as Bing Webmaster and schema updates. | Involves embedding contextual cues and optimizing for multi-modal outputs and AI assistants. |
| Measures success primarily by mention frequency in AI search engines. | Monitors the full scope of AI-triggered brand occurrences. |

Both are needed: LLM SEO provides tactical groundwork, while LLMO serves as the strategic layer for broad AI recognition.

Understanding these distinctions helps clarify where LLM SEO fits within your overall digital strategy as AI-driven discovery continues to reshape how visibility is earned.

How Does LLM SEO Differ from Traditional SEO?

LLM SEO prioritizes being cited in AI-generated answers over ranking for clicks, focusing on semantic clarity and structured data rather than backlinks and keyword density.

Traditional SEO aims to drive traffic through search engine rankings, while LLM SEO succeeds when AI platforms quote your content as authoritative, even if users never click through.

Traditional SEO:

- Focuses on rankings in search engine results and driving traffic through keywords and backlinks.

- Tracks rank, visitor numbers, and conversions to measure results.

- Prioritizes meta tags, site speed, and mobile usability to assist search engine crawlers.

- Emphasizes link-building.

LLM SEO:

- Aims primarily to be cited within AI-generated answers, even if users don’t click through.

- Uses citation frequency, source mentions, and contextual references within LLM-driven platforms as key metrics.

- Highlights well-structured content, E-E-A-T, and clarity to support machine reading and citation.

- Values semantic context and comprehensive answers.

Success today is less about first-page rankings and more about becoming the AI’s definitive source. This shift in measurement and outcomes leads to an important question: why should this matter to your brand right now? It also changes how you build and prioritize queries, which is why a keyword strategy checklist built for LLM-era search can help keep your content aligned with how people actually ask questions.

Understanding How LLMs Read and Process Content

LLMs like those used by Google and Bing are trained on massive datasets. When processing content, they look for:

- Semantic Meaning: Understanding context and intent.

- Entity Recognition: Identifying key entities (people, places, things).

- Topical Authority: Assessing depth of coverage.

- Factual Accuracy: Verifying information against reliable sources.

- Coherence and Clarity: Evaluating logical flow.

Why Is LLM SEO Important?

LLM SEO is critical because over 45% of people now use AI tools weekly for research, bypassing traditional search entirely. As AI answers blend with classic rankings, AI vs traditional optimization becomes the new baseline for staying visible, and brands not optimized for citations risk disappearing as behavior shifts toward LLM-powered platforms.

- User Behavior Shift: Over 45% of people use GenAI tools every week for research, bypassing traditional click paths.

- Immediate Answers: AI-driven solutions beyond Google now deliver immediate, context-aware responses for users.

- Competitive Defense: LLM SEO enables your expertise to appear as a referenced source, protecting your brand from competitors taking the AI mention.

- Priority on Quality: Clear, credible content is prioritized by LLMs, making structured optimization a leading advantage.

- Risk of Obscurity: Delaying adoption leads to ongoing diminishing visibility in evolving digital search settings.

- Industry Impact: Industries built on credibility (healthcare, finance, legal services) are hit hardest by failing to embrace LLM SEO.

- Perception: Citations now impact user perception more than keyword presence alone.

With the stakes this high, understanding not just why LLM SEO matters but how to implement it becomes critical. The framework below breaks down the path to LLM visibility into actionable steps.

A unique framework for thinking about LLM SEO success:

- Layer 1 – Technical Accessibility: Can LLMs read and parse your content? (Schema, crawlability, structure)

- Layer 2 – Semantic Clarity: Do LLMs understand what your content is about? (Clear language, entities, context)

- Layer 3 – Authority Signals: Do LLMs trust your content enough to cite it? (E-E-A-T, mentions, freshness)

Most sites fail at Layer 1. Fix all three layers to dominate LLM citations in your niche.

The 8-step framework that follows is organized around this stack:

- Steps 1-3 build Technical Accessibility (Bing Webmaster Tools, schema, best practices)

- Steps 4-6 strengthen Semantic Clarity (answering questions, freshness, original content)

- Steps 7-8 establish Authority Signals (citations, branded search)

Each step reinforces one or more layers. Think of them as building blocks that work together to make your content citation-worthy.

How Do I Rank in Large Language Models?

To rank in LLMs, implement these eight essential steps: set up Bing Webmaster Tools, add comprehensive schema markup, write in conversational language, answer autocomplete questions, keep content fresh, avoid AI-generated text, earn third-party citations, and grow branded search volume.

This framework builds the technical accessibility, semantic clarity, and authority signals that LLMs require for citation, and it’s why many teams now pair these fundamentals with an llms.txt file to make key pages and entities easier for models to discover.

Step 1: Set Up Bing Webmaster Tools

Bing Webmaster Tools are instrumental for effective LLM SEO. Connecting your website here gives you a direct path to influencing your site’s presence across LLM-driven tools.

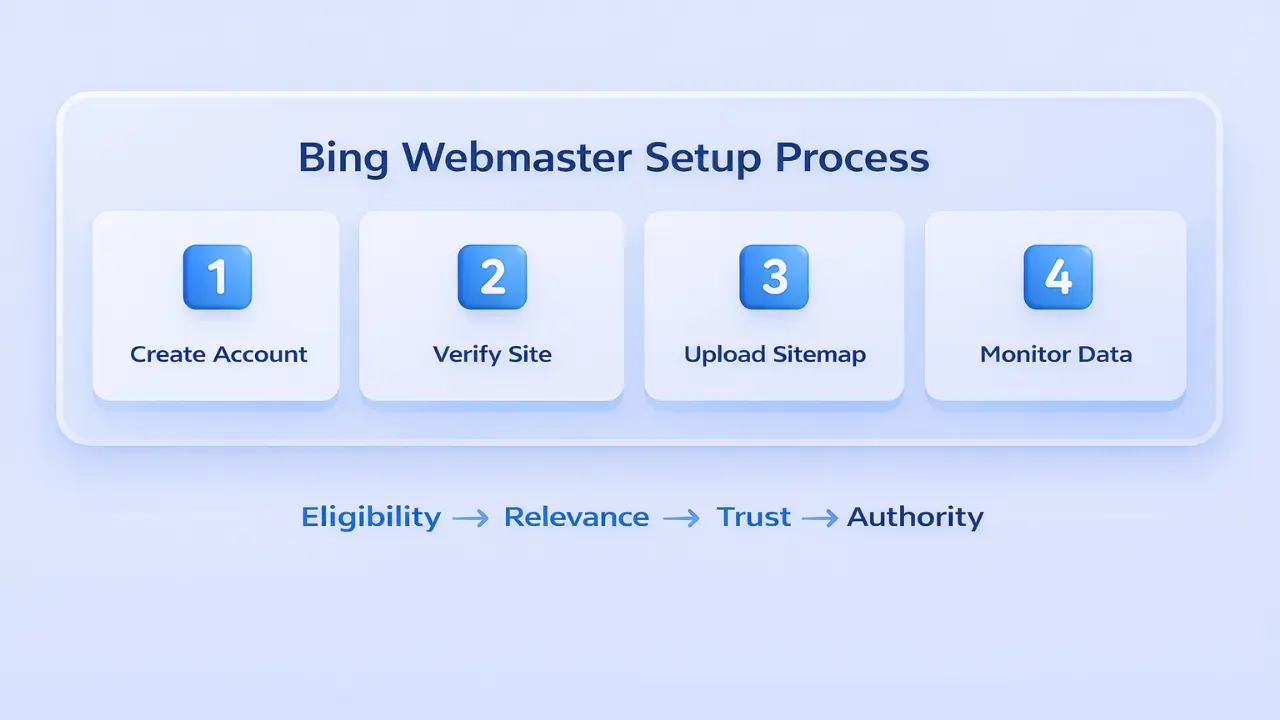

- Create an account and verify your website on Bing Webmaster Tools to access visibility data.

- Upload a clear, structured sitemap to Bing to make all sections of your site available for LLM indexing.

- Review Bing-specific performance to align your content updates with LLM ranking factors.

- Check for crawl issues and delays to maintain seamless communication between your site and Bing-based LLMs.
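The sitemap step above can be sketched in a few lines of Python. This is a minimal illustration, not a complete generator: the `build_sitemap` helper and the `example.com` URLs are assumptions made for the example, and a real site would pull URLs and dates from its CMS.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Return sitemap XML for a list of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod is written as a YYYY-MM-DD date string
        ET.SubElement(entry, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical pages; replace with your own URLs and update dates.
pages = [
    ("https://example.com/", "2026-01-10"),
    ("https://example.com/guides/llm-seo", "2026-01-05"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Save the output as `sitemap.xml` at your site root, then submit its URL in Bing Webmaster Tools.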

Why Bing Rankings Impact Multi-LLM Visibility

Bing’s index powers multiple LLM platforms. Strong Bing performance increases your chances of appearing in Perplexity, Copilot, and other AI search results.

Bing prioritizes technical health, content recency, and structured data, all critical for LLM citation.

- Bing’s algorithm emphasizes semantic clarity and E-E-A-T signals that LLMs value.

- Pages ranking well on Bing typically see higher citation rates across multiple AI platforms.

- Use Bing Webmaster Tools to identify crawl errors that block LLM indexing.

- Monitor mobile-first indexing status, as LLMs increasingly favor mobile-optimized content.

Step 2: Add Schema Markup to Your Site

Schema markup is essential for structuring data within the LLM SEO framework, allowing large language models to clearly identify and re-use crucial information, a pattern reinforced in LLM citation trends.

- Include JSON-LD code on your homepage and main landing pages to clarify your site’s purpose.

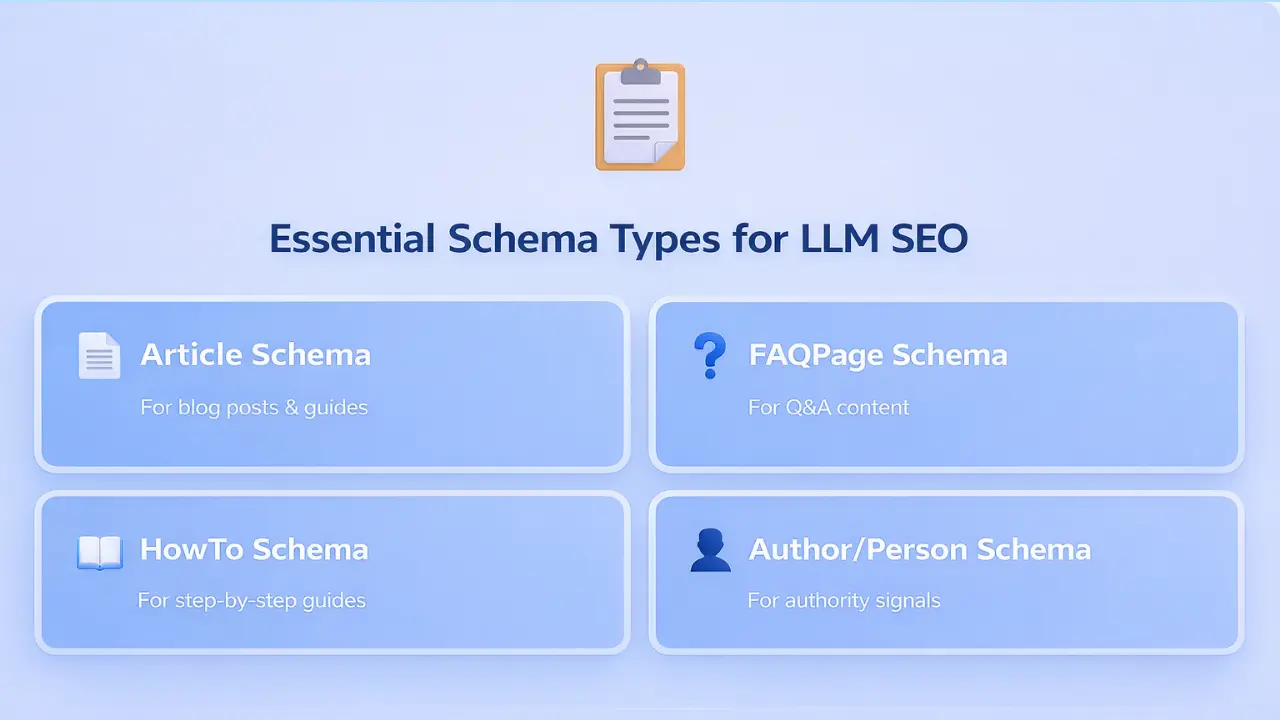

- Focus on Article, FAQPage, and Product markup for maximum AI benefit in both search and conversational settings.

- Update schema with correct publish and revision dates to reinforce recency for AI platforms.

Observation: Pages most frequently cited in LLMs nearly always have thorough schema implemented.
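To make the schema step concrete, here is a small Python sketch that builds Article markup as JSON-LD, including publish and revision dates. The `article_jsonld` helper and all sample values are hypothetical; the property names (`headline`, `author`, `datePublished`, `dateModified`) come from schema.org.

```python
import json

def article_jsonld(headline, author, published, modified, url):
    """Build a schema.org Article object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)

# Hypothetical article details, for illustration only.
snippet = article_jsonld(
    headline="What is LLM SEO?",
    author="Jane Doe",
    published="2025-06-01",
    modified="2026-01-05",
    url="https://example.com/guides/llm-seo",
)
# JSON-LD is embedded in the page inside a script tag of this type.
html = f'<script type="application/ld+json">\n{snippet}\n</script>'
print(html)
```

Generating the markup from the same source as the visible page dates helps keep the two in sync.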

Step 3: Write for Google and Bing Best Practices

LLM SEO works best when built on strong fundamentals. Writing with best practices favored by Google and Bing not only prepares your content for today but sets it up for future AI needs.

- Use clear headings, well-organized sections, and direct language for easier LLM reading.

- Have your content answer the real questions users ask, and build topical authority by covering topics thoroughly.

- Support your statements with links to reliable third-party sources for added credibility.

- Build internal topic clusters that interlink related articles for added semantic reinforcement.

Writing in Natural Conversational Language

LLMs respond best to content written the way people actually speak. Semantic SEO, optimizing for meaning and context rather than exact keywords, improves citation rates across Perplexity, Gemini, and other AI platforms, and consistent patterns like these are easier to validate with an LLM pattern analysis checklist.

- Write as if answering a question someone asked you directly.

- Use question-based headings that mirror actual user queries (e.g., “How do I track LLM citations?”), and expand them into related variations using Query Fan-Out.

- Enrich content with synonyms and related terms to improve semantic matching.

- Structure sentences to be clear and complete, avoiding ambiguity that confuses AI interpretation.

- Adopt a conversational tone that matches how users phrase queries to AI assistants.

Step 4: Find and Answer Autocomplete Questions

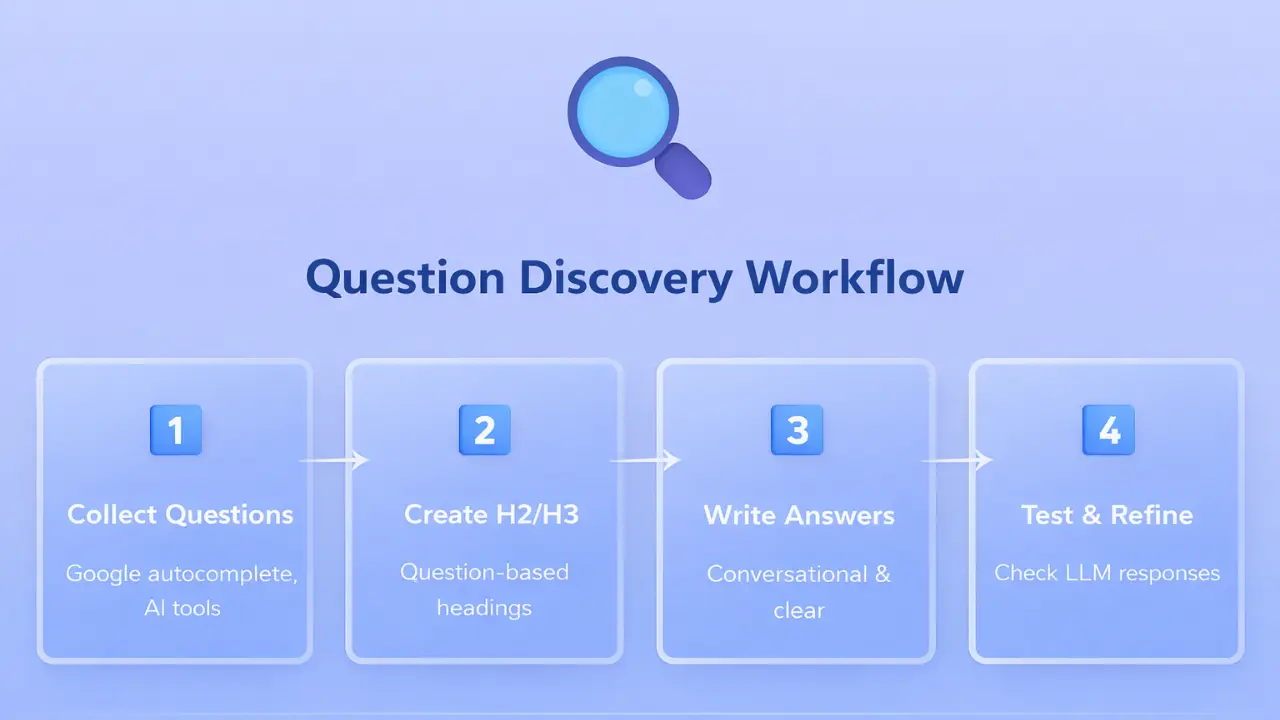

Powerful LLM SEO strategies include identifying and targeting autocomplete-based questions: high-frequency, intent-driven queries surfaced by both search engines and AI platforms.

- Use autocomplete in Google, chat.com, and other AI tools to collect current, NLP-ready user questions.

- Create new H2 or H3 sub-sections for each real-world question, letting LLMs easily find direct answers.

- Write answers in formats that match LLM preferences: straightforward, conversational, and context-driven.

- Include word variations and synonyms to broaden opportunities for inclusion by LLMs.

Using LLMs for Keyword and Query Research

Use AI platforms to identify questions and long-tail queries your audience asks. This reveals question patterns and semantic clusters that traditional keyword tools miss, helping you target actual user intent, while keeping expectations realistic about how citations differ from backlinks in AI-driven discovery.

- Query multiple LLMs: “What questions do people ask about [your topic]?”

- Request keyword groupings by intent to build topical authority.

- Generate long-tail variations matching natural conversational search patterns.

- Identify semantic relationships between related terms for comprehensive coverage.

- Test your target queries across Perplexity and Gemini to see current answer gaps.

Step 5: Keep Content and Publish Dates Fresh

Keeping content recent is a strong signal for LLM SEO because AI models give priority to up-to-date sources.

- Set a regular review interval and refresh top pages every 3–6 months for ongoing accuracy.

- Show the latest publish or update date on both the visible page and schema tags.

- Remove any obsolete information and add new, relevant case examples.

- Outline what you’ve changed using summary or changelog blocks for greater transparency.

Update the modification date in your schema markup (the `dateModified` property in schema.org) whenever a page changes.

Pages updated within the last 6 months get cited 2.5x more often than older content, even when the older content ranks higher in Google.
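A review interval is easier to keep when stale pages are flagged automatically. The sketch below assumes you can export each page’s last-updated date; the `stale_pages` helper, the paths, and the 180-day threshold (roughly six months) are illustrative choices, not a standard.

```python
from datetime import date, timedelta

def stale_pages(pages, today, max_age_days=180):
    """Return URLs whose last update is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [url for url, modified in pages.items() if modified < cutoff]

# Hypothetical pages mapped to their last-updated dates.
pages = {
    "/guides/llm-seo": date(2026, 1, 5),
    "/blog/schema-basics": date(2025, 3, 12),
    "/faq": date(2025, 9, 30),
}
to_refresh = stale_pages(pages, today=date(2026, 1, 15))
print(to_refresh)
```

With these sample dates, only `/blog/schema-basics` falls outside the window and is flagged for a refresh.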

Step 6: Avoid Relying on AI-Generated Content

Maintaining genuine authority in LLM SEO demands original, people-written content; overuse of AI-generated text lowers your chances of being cited.

- Do not publish AI-created content as originally produced; unique insights score better for LLM references.

- Share detailed expertise, stories, and facts that cannot be found in typical AI-generated text.

- Double-check stats and statements to prevent errors created by AI outputs.

- Use case studies, your own data, or unique stories to boost E-E-A-T.

Case study result: Within 90 days, Perplexity and Gemini began citing a small law firm for estate planning queries in its metro area, despite competition from national legal sites with thousands of pages. The firm didn’t gain Google rankings, but citation volume drove 40% more qualified consultation requests. This suggests small businesses can win in LLM environments when execution is precise.

Step 7: Earn Brand Mentions and Citations

Establishing authority in LLM SEO depends on authentic third-party mentions and citations, a critical aspect of off-page optimization. A practical way to accelerate this is through LLM seeding and AI-driven link building, earning presence in the sources models already rely on for answers. These off-page SEO strategies complement your on-site optimization efforts.

Build these connections to increase your site’s selection in LLM-driven searches.

- Find relevant blogs and industry outlets for natural reference opportunities.

- Develop solid relationships with journalists and digital leaders, prioritizing real mentions over paid placements.

- Monitor citations within AI responses and adjust outreach based on real data.

- Offer insightful data and commentary via podcasts, interviews, or community sessions.

Getting Listed on Affiliate, News Sites, and Reddit

Third-party mentions on reputable affiliate sites, news outlets, Reddit discussions, and industry directories signal authority to LLMs. Many practitioners discuss LLM SEO on Reddit, providing insights on how it is shaping search in the generative era. LLM-based SEO provides a roadmap for brands to increase visibility across AI search engines like ChatGPT, Perplexity, Gemini, Google AI Overviews, and AI Mode.

Platforms like Perplexity and Gemini increasingly cite user-generated content from Reddit and forums, making these mentions valuable for multi-platform visibility.

- Identify affiliate programs or review sites relevant to your industry.

- Contribute expert insights to news articles and industry reports.

- Participate authentically in relevant Reddit communities and forums.

- Get featured in comparison articles, roundups, and “best of” lists.

- Ensure your brand information is consistent across all third-party mentions.

Step 8: Grow Branded Search Volume

Boosting branded search directly increases recognition and citation in AI systems, strengthening your overall authority with large language models.

- Motivate returning visitors and fans to look up your brand name together with priority topics.

- Generate brand exposure through event partnerships or collaborations with key creators.

- Build a consistent brand identity, because LLMs are more likely to cite well-recognized names.

- Track shifts in branded search volume as a way to measure your standing in LLM and AI search.

What Are the Key LLM SEO Optimization Techniques?

The key LLM SEO techniques include raising content clarity standards, utilizing chunked formatting with answer-first structure, demonstrating authority through E-E-A-T signals, optimizing for AI summarization, and implementing comprehensive schema markup.

These tactics make your content easy for both people and AI models to understand, extract, and reference, which matters because LLMs need context to interpret meaning correctly and cite reliably.

Raise Content Quality and Clarity Standards

Clear communication is fundamental to LLM SEO. Avoid buzzwords. Instead, describe topics in plain terms as if explaining to a peer.

- Example: Rather than “innovative tool,” use “AI-based system that instantly summarizes lengthy reports.”

- Direct, exact language improves comprehension for both humans and machines.

Utilize Structure and Chunked Formatting

Breaking information into segments helps large language models process data more easily, and the formats that work best are consistent with effective LLM citation strategies. Well-organized content not only improves user experience but also signals clarity to AI models.

- Use H2 and H3 headings for every subtopic.

- Add bullet points for instructions, comparisons, or checklists.

- Keep paragraphs short, focusing on a single point per paragraph.

- Use tables and fact blocks for summarizing important information.

- Place FAQs after main content sections.

Creating Citable Content for LLM Extraction

Citable content is structured so LLMs like Perplexity and Gemini can extract and cite specific sections without additional context. Use the inverted pyramid structure, placing critical information first, to maximize extraction rates across AI platforms, especially in cases where rankings and citations don’t align.

- Start each section with a direct, complete answer (answer-first intros).

- Use descriptive headings that include the question or topic (e.g., “How to Track LLM Citations”).

- Break complex topics into numbered steps or bulleted lists to make them easier to isolate.

- Keep paragraphs under 3–4 sentences for better AI parsing and lifting.

- Use bold text to highlight key terms and concepts within paragraphs.

- Include tables and structured data blocks that LLMs can extract as standalone answers.
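The paragraph-length guideline above can be checked with a rough script. This sketch assumes paragraphs are separated by blank lines and uses a naive sentence splitter, so treat its output as a prompt for manual review; `long_paragraphs` is a hypothetical helper name.

```python
import re

def long_paragraphs(text, max_sentences=4):
    """Return (paragraph_index, sentence_count) for paragraphs over the limit."""
    flagged = []
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    for index, paragraph in enumerate(paragraphs):
        # Naive split: a sentence ends at ., !, or ? followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        if len(sentences) > max_sentences:
            flagged.append((index, len(sentences)))
    return flagged

# Hypothetical draft with one short and one overlong paragraph.
draft = (
    "LLM SEO is about citations. It rewards clear answers.\n\n"
    "Start with the answer. Add context next. Give an example. "
    "Link a source. Then summarize the point."
)
print(long_paragraphs(draft))
```

Abbreviations and decimals will trip the splitter, which is acceptable for a quick editorial pass.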

Demonstrate Authority and Transparency for LLMs

Signals of authority and E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) encourage LLMs to cite your pages. Highlighting your background and sourcing transparently strengthens the machine learning model’s confidence in your site.

- Show credentials by listing author bios, backgrounds, and credible references.

- Provide direct links for claims, statistics, or benchmarks you mention.

- Include personal experience or first-hand knowledge where relevant.

Optimize for LLM Summarization and Snippets

To increase the likelihood of AI citation, structure your content so sections can be easily quoted as stand-alone summaries.

- Place essential information in the first sentence or two of each main section.

- Highlight major facts using bullet points for rapid reading.

- Conclude complex explanations with a brief summary paragraph.

- Essential Reminder: Start every section with a statement that can be extracted as a quick answer by AI.

Leverage Schema for Enhanced LLM SEO

Schema markup improves how well large language models understand your content and enhances the structure presented in search results.

- Apply Article schema to each blog or guide post.

- Add FAQPage schema for quick answer extraction where appropriate.

- Use HowTo schema for instructional content.

- Include Author and Person schema to signal trust and authority.
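As an illustration of the FAQPage item above, the following Python sketch turns question and answer pairs into JSON-LD. The `faq_jsonld` helper and the sample Q&A text are assumptions; the structure (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`) follows schema.org.

```python
import json

def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)

# Hypothetical FAQ content; use the questions your page actually answers.
faq = faq_jsonld([
    ("What is LLM SEO?",
     "LLM SEO is the process of optimizing content so large language "
     "models can interpret, cite, and recommend it."),
    ("How do I track LLM citations?",
     "Test target prompts across AI platforms and record which URLs are cited."),
])
print(faq)
```

The questions and answers in the markup should match the visible FAQ text on the page.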

What Advanced Tactics Boost LLM Visibility?

Advanced LLM visibility tactics include using explicit entity context, implementing conversational FAQ patterns with a dual-format schema, configuring the llms.txt and robots.txt files for AI crawlers, and strengthening technical foundations such as HTTPS, page speed, and mobile-first design.

These extra layers maximize your content’s accessibility and citation potential across all major AI platforms. Once you’ve mastered the essential steps, these advanced techniques give you an edge in competitive niches.

Use Explicit Context and Clear Entities

LLMs depend on fully explained references for accurate citation. Apply explicit context to all parts of your content by following these steps:

- Spell out product, service, or industry names with full terms on initial use.

- Introduce all acronyms, such as large language model (LLM), before abbreviating.

- Repeat your primary term or entity name in key supporting sentences for entity linking.

- Avoid phrases like “click here”; use clear, descriptive link labels pointing to specific resources.
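The link-label advice lends itself to a quick automated check. The sketch below scans Markdown source for links whose text is a generic phrase; the `generic_links` helper and the phrase list are illustrative assumptions you would adapt to your own content.

```python
import re

# Assumed list of vague labels; extend it to suit your style guide.
GENERIC_LABELS = {"click here", "here", "read more", "learn more", "this link"}

def generic_links(markdown):
    """Return (label, url) pairs whose link text is a generic phrase."""
    links = re.findall(r"\[([^\]]+)\]\(([^)]+)\)", markdown)
    return [
        (label, url)
        for label, url in links
        if label.strip().lower() in GENERIC_LABELS
    ]

# Hypothetical page copy with one vague and one descriptive link.
copy = (
    "To see the report, [click here](https://example.com/report). "
    "Or read the [LLM citation tracking guide](https://example.com/guide)."
)
print(generic_links(copy))
```

Each flagged link is a candidate for a label that names the destination, such as the guide title.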

Use FAQs and Conversational Patterns

Including well-constructed FAQs and conversational Q&A formats is a powerful method for LLM SEO. This approach allows both people and large language models to quickly and accurately extract and cite your information.

Guide AI Crawlers with llms.txt and Robots.txt

The llms.txt file, a proposed convention rather than an enforced standard, tells AI systems which pages you most want them to read, while robots.txt determines which AI crawlers may crawl your content. Configure both to maximize visibility across Perplexity, Gemini, Claude, and emerging AI platforms.

- Place a plain-text llms.txt file at your site root listing URLs for AI to access and cite.

- Include the homepage, primary guides, FAQ hubs, and key product pages.

- Update robots.txt to allow AI crawlers like PerplexityBot, GoogleOther, and ClaudeBot.

- Avoid blocking legitimate AI crawlers; visibility depends on crawlability.

- Combine llms.txt with comprehensive schema markup for dual visibility.
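Before relying on a robots.txt change, it helps to confirm that the crawlers named above are actually allowed. This sketch uses Python’s standard `urllib.robotparser` against a sample robots.txt; the rules, the `crawler_access` helper, and the `example.com` URLs are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: AI crawlers allowed, /admin/ blocked for everyone else.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Disallow: /admin/
"""

AI_CRAWLERS = ["PerplexityBot", "ClaudeBot", "GoogleOther"]

def crawler_access(robots_txt, url, agents):
    """Return {agent: allowed} for a URL under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

access = crawler_access(
    ROBOTS_TXT, "https://example.com/guides/llm-seo", AI_CRAWLERS
)
print(access)
```

GoogleOther has no dedicated rule in this sample, so it falls back to the `*` group and is allowed everywhere except `/admin/`.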

Technical Foundations for LLM Optimization

Strong technical SEO is mandatory for LLM visibility. Page speed, mobile-first design, HTTPS security, and structured data all impact how LLMs crawl and cite your content.

- HTTPS is Mandatory: AI platforms prioritize secure sites for trust and citation.

- Page Speed Matters: Faster sites get crawled more frequently by AI bots, improving indexing rates.

- Mobile-First Design: LLMs increasingly favor mobile-optimized content as mobile queries dominate.

- Structured Data: Implement JSON-LD schema across all key pages for better AI interpretation.

- Clean URL Structure: Use descriptive, hierarchical URLs that clearly indicate content topics.

- Internal Linking: Strong internal link architecture helps LLMs understand topical relationships and content depth.

How Do I Track and Measure LLM SEO Performance?

Tracking AI impact and LLM SEO performance requires specialized monitoring tools, because traditional analytics platforms like Google Analytics and Search Console do not capture AI citations, prompt-based visibility, or LLM-generated influence.

Measurement needs to happen at the platform level. For example, when we want to see how one LLM handles citations, we track Perplexity results directly, looking at which queries trigger citations, which URLs are used, and how visibility changes over time via the Perplexity Visibility Tracker.

Instead of measuring rankings and clicks alone, LLM SEO focuses on whether AI systems cite, summarize, or recommend your content across platforms such as ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

To measure this accurately, brands rely on monitoring citation frequency, platform distribution, and query-level visibility, metrics that reflect influence, not just traffic.

Forward-thinking teams are also addressing hallucination detection concerns and algorithmic bias mitigation to ensure their content remains trustworthy to AI systems. These advanced tactics represent the cutting edge of SEO with LLMs and position brands to significantly improve marketing ROI.

What Are LLM SEO Tracking Tools?

LLM SEO tracking tools are platforms that help you monitor how your brand, pages, and content appear inside AI-generated answers across tools like ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

Instead of only measuring rankings and clicks, these tools track citation frequency, brand mentions, query coverage, and source attribution, so you can understand whether AI systems are actually using your content when users ask questions.

In simple terms, they act like a visibility layer for AI search, because standard SEO tools typically can’t show when an LLM summarizes you, recommends you, or cites your pages.

Essential LLM SEO Tools For 2026

As of January 2026, several tools have emerged to monitor and optimize visibility in large language models (LLMs). Here are some notable options:

Wellows: Tracks AI search visibility by measuring where your brand (and competitors) appear inside AI-generated answers, including citations/mentions and the sources AI systems rely on. Useful for monitoring “share of AI voice” and closing visibility gaps across LLM-driven discovery.

AIclicks: Tracks AI citations and visibility across LLM platforms (including Gemini and Perplexity) so you can confirm where your pages appear inside AI answers.

Profound: Monitors brand mentions, tone, and source attribution across ChatGPT, Claude, Gemini, and Google AI Overviews. Useful for reputation + competitive tracking in AI search.

Fibr AI: Helps with visibility + attribution by showing which AI platforms contribute to awareness and downstream engagement (helpful when GA4 alone can’t explain “AI influence”).

SEMrush AI Features: Adds early AI visibility signals alongside traditional keyword/SERP tracking, giving a combined view of classic SEO + emerging AI search performance.

Manual Prompt Testing: Test target prompts across ChatGPT, Claude, Gemini, and Perplexity to validate real outputs, confirm citations, and spot competitor takeovers (still one of the most reliable QA steps).

Nightwatch: Combines traditional rank tracking with LLM monitoring; also shows the web searches AI systems run for real-time answers. Useful for tracking prompts, citations, and local AI results in one dashboard.

Keyword.com: Extends rank tracking into AI environments by tracking citations/mentions for keywords across platforms like ChatGPT, Claude, and Gemini, with reporting workflows for teams and clients.

Otterly AI: Tracks brand representation in AI search and reports “share of AI voice” style visibility, plus GEO-type audits to diagnose why your brand is (or isn’t) appearing.

Peec AI: Focuses on LLM brand visibility with sentiment + competitive benchmarking across major AI platforms, best when you need monitoring rather than full SEO tooling.

First Answer: Compares AI responses against approved/official brand documentation to flag inaccuracies, especially valuable for regulated industries or high-risk messaging.

Key Metrics to Monitor in LLM SEO

A single citation in Perplexity or Gemini can reach thousands of users who never visit your site but now know your brand as the authoritative source.

- Citation Frequency: How often your site is referenced in LLM citations for target queries.

- Platform Distribution: Which AI platforms cite you most (Perplexity, Gemini, Claude, etc.).

- Query Coverage: Percentage of target queries where your content appears in AI responses.

- Competitor Citations: Track competitor mentions to identify gaps in your LLM strategy.

- LLM Traffic Sources: Use GA4 referral data and UTM parameters to separate AI-driven traffic from traditional search.

- Prompt Cluster Analytics: Identify which question patterns trigger your citations most frequently.
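
Several of the metrics above can be computed from a plain log of manual prompt tests. The following is a minimal Python sketch, not a tracking tool's API: the `PromptTest` record, the `citation_metrics` function, and the sample domains are hypothetical names and invented data used only for illustration. It assumes citation frequency means the share of test runs that cite your domain, and query coverage means the share of distinct queries with at least one citation.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTest:
    """One manual prompt test: a single query run on a single AI platform."""
    query: str
    platform: str
    cited_domains: tuple[str, ...]  # domains the answer cited, recorded by hand


def citation_metrics(tests: list[PromptTest], own_domain: str) -> dict:
    """Summarize citation frequency, platform distribution, and query coverage."""
    own_hits = [t for t in tests if own_domain in t.cited_domains]
    all_queries = {t.query for t in tests}
    covered_queries = {t.query for t in own_hits}
    # Count every citation that went to a domain other than ours.
    competitor_counts = Counter(
        d for t in tests for d in t.cited_domains if d != own_domain
    )
    return {
        "citation_frequency": len(own_hits) / len(tests) if tests else 0.0,
        "platform_distribution": dict(Counter(t.platform for t in own_hits)),
        "query_coverage": (
            len(covered_queries) / len(all_queries) if all_queries else 0.0
        ),
        "competitor_citations": dict(competitor_counts),
    }


# Hypothetical sample log (invented data for illustration only).
SAMPLE_LOG = [
    PromptTest("what is llm seo", "Perplexity", ("example.com", "rival.test")),
    PromptTest("what is llm seo", "Gemini", ("rival.test",)),
    PromptTest("best llm seo tools", "Perplexity", ("rival.test",)),
    PromptTest("best llm seo tools", "Claude", ()),
]

if __name__ == "__main__":
    for name, value in citation_metrics(SAMPLE_LOG, "example.com").items():
        print(f"{name}: {value}")
```

Keeping the log as one record per query and platform makes it easy to rerun the same summary each month and compare the numbers over time.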

How to Audit Your Pages for LLM Inclusion

Regular audits identify which pages perform well across AI platforms and which need optimization. This process reveals technical issues, content gaps, and platform-specific visibility problems.

Following a structured brand visibility audit framework helps identify exactly where your content stands across AI platforms.

- Test 20–30 core queries across Perplexity, Gemini, and Claude monthly.

- Document which pages get cited and which queries return competitor results.

- Check schema implementation on underperforming pages using Google’s Rich Results Test.

- Review pages with low LLM visibility for semantic clarity and answer-first structure.

- Use Bing Webmaster Tools to identify crawl errors blocking LLM indexing.

- Compare LLM visibility against Google rankings to identify platform-specific issues.
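
As a quick local pre-check before running Google’s Rich Results Test, the schema step of this audit could be sketched in Python as below. This is an illustrative sketch using only the standard library: the class and function names (`JsonLdCollector`, `schema_types`) and the sample HTML are hypothetical, and the script only lists which schema.org `@type` values a page declares in JSON-LD. It does not validate the markup.

```python
from __future__ import annotations

import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def _collect_types(node, found: set[str]) -> None:
    """Walk parsed JSON-LD recursively and record every @type value."""
    if isinstance(node, dict):
        declared = node.get("@type")
        if isinstance(declared, str):
            found.add(declared)
        elif isinstance(declared, list):
            found.update(t for t in declared if isinstance(t, str))
        for value in node.values():
            _collect_types(value, found)
    elif isinstance(node, list):
        for item in node:
            _collect_types(item, found)


def schema_types(html: str) -> set[str]:
    """Return the set of schema.org types declared in a page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    found: set[str] = set()
    for raw in collector.blocks:
        try:
            _collect_types(json.loads(raw), found)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is skipped here; treat it as an audit finding
    return found


# Hypothetical page source, for illustration only.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "FAQPage",
 "mainEntity": [{"@type": "Question", "name": "What is LLM SEO?",
   "acceptedAnswer": {"@type": "Answer", "text": "..."}}]}
</script>
</head><body></body></html>
"""

if __name__ == "__main__":
    wanted = {"Article", "FAQPage", "HowTo"}
    found = schema_types(SAMPLE_HTML)
    print("declared:", sorted(found))
    print("missing:", sorted(wanted - found))
```

Running it against the saved HTML of each underperforming page gives a fast list of pages that declare no Article, FAQPage, or HowTo markup at all, which can then go through the full validator.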

LLM SEO Implementation Checklist: Steps for Success

Here is a detailed LLM SEO implementation checklist:

Common LLM SEO Pitfalls That Limit AI Visibility

Top LLM SEO Mistakes to Avoid

- Relying on Outdated SEO Tactics

- Ignoring Conversational Search Language

- Missing Citations and Creator Signals

- Skipping FAQs and Summaries

- Allowing Content to Become Outdated

- Failing to Conduct Regular Audits

- Limiting Content Formats

- Using Ambiguous Language or Excessive Jargon

- Inconsistent Branding Signals

- Neglecting Technical SEO Foundations

- Overvaluing Backlinks Over Authority

- Ignoring AI-Native Traffic Sources

What’s Next for LLM SEO and AI Search?

LLM SEO will continue to develop as language models advance in capability, citation practices become widely adopted, and AI-enhanced search replaces more traditional listings. Upcoming AI trends point to expanded multimodal search that blends text, image, and video, so optimization will need to cover every available format.

Technical schema and integration with knowledge graphs will take on increased significance, with technical accuracy and fact-checking becoming central for sustainable visibility in LLM-driven environments.

Innovations in search may soon provide advanced tracking for model references and improved correction of errors or misattribution on a large scale.

The next chapter in SEO focuses on meeting the needs of AI-driven answers, quickly adapting strategies, and evaluating presence as AI search continues to redefine established practices.

FAQs

What is LLM SEO?

LLM SEO involves tailoring content so that large language models like Gemini, Perplexity, and Claude can easily interpret and display it in their outputs. It focuses on semantic clarity, structured data, and answer-first formatting to maximize citation across AI platforms.

How does LLM SEO differ from traditional SEO?

While classic SEO focuses on backlinks and click-throughs, LLM SEO prioritizes clarity, structured layouts such as lists and FAQs, and explicit sourcing. Traditional SEO serves crawlers, while LLM SEO serves language models. Relying solely on legacy SEO may reduce visibility as information discovery increasingly shifts to AI responses.

What is the difference between LLM SEO and LLMO?

LLM SEO aims at being visible in AI-driven search results, while LLMO (large language model optimization) covers wider brand presence in any context where large language models generate answers. LLM SEO is rooted in SEO basics but adapts to how LLMs find and present information.

Will LLMs replace search engines like Google?

LLMs likely won’t eliminate search engines like Google, but they may make conventional search less essential. Google will remain but may become less central as users shift to direct answers.

A recent SEOFOMO survey found 39% of SEOs worry about AI Overviews, and 34% consider LLMs a challenge to their consulting work. This suggests that focusing on LLM SEO is vital for staying relevant in changing search habits.

How do I track LLM SEO performance?

Use dedicated LLM tracking tools like AIclicks, Profound, and Fibr AI to monitor citations across Perplexity, Gemini, and other AI platforms. Track citation frequency, query coverage, and competitor mentions separately from traditional Google Analytics. Manual testing across multiple LLMs provides qualitative insights into answer quality and positioning.

How does structured data help with LLM SEO?

Structured data using JSON-LD schema helps LLMs accurately extract and understand your content. Schema markup identifies key entities, content types, and relationships that LLMs use to determine citation relevance. Pages with comprehensive schema (Article, FAQPage, HowTo) get cited significantly more often across AI platforms.
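
To make the JSON-LD point concrete, here is a minimal Python sketch that builds FAQPage markup from question and answer pairs. The helper names (`faq_jsonld`, `script_tag`) and the sample pair are hypothetical and exist only for this illustration. The structure follows the schema.org FAQPage, Question, and Answer types, but the output should still be checked with a validator before publishing.

```python
from __future__ import annotations

import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage document from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element that goes in the page's HTML."""
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape for "/".
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


# Hypothetical sample pair, for illustration only.
SAMPLE_FAQ = [
    (
        "What is LLM SEO?",
        "LLM SEO is the process of optimizing content so large language "
        "models can interpret, cite, and recommend it.",
    ),
]

if __name__ == "__main__":
    print(script_tag(faq_jsonld(SAMPLE_FAQ)))
```

Generating the markup from the same source as the visible FAQ text keeps the two in sync, which matters because the structured data is meant to describe content that actually appears on the page.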

Can content be cited by AI platforms even if it doesn’t rank highly on Google?

Yes. LLM citation criteria differ from traditional Google ranking factors. AI platforms prioritize semantic relevance, answer completeness, and structured formatting over backlink profiles. Content optimized for AI Overviews can appear in AI search results even if it ranks lower in traditional search results.

What is the difference between LLM SEO and GEO?

LLM SEO focuses on optimizing for citation in AI-generated answers across platforms like Perplexity and Gemini. Generative Engine Optimization (GEO) is a broader term covering optimization for all generative AI outputs, including image generation, code assistants, and conversational interfaces. LLM SEO is a subset of GEO focused specifically on text-based AI search visibility.

Can small businesses compete in LLM SEO?

Absolutely. Small businesses often compete better in LLM environments because AI platforms prioritize content quality and relevance over domain authority. Focused topical expertise, clear answer-first content, and proper schema implementation can help small sites get cited alongside larger competitors in AI responses.

Final Thoughts

The path forward requires shifting focus from rankings to citations, emphasizing the clarity and structure that make AI platforms trust your expertise enough to quote you. Start by optimizing one priority page with the 3-Layer Visibility Stack and measure results within 30 days.

As AI-driven platforms become central to information discovery, building a strong LLM SEO framework is critical for sustained digital visibility. By emphasizing clarity, credibility, and a structure accessible to both people and large language models, your content is more likely to be referenced and included in AI-powered responses.

The focus now shifts from rankings and backlinking to being selected and trusted by AI search platforms. Adjusting your strategy today secures a lead as user habits increasingly prioritize LLM-driven search tools.

Take your first step by updating a priority content page for LLM SEO: add FAQ sections, incorporate structured data, and answer users’ main questions upfront. This not only enhances your visibility but also establishes your authority in the rapidly evolving era of AI search optimization.