When was the last time you asked ChatGPT, Gemini, or Perplexity about a brand—maybe even your own—just to audit brand visibility on LLMs?

Chances are, the way these large language models (LLMs) describe your company will influence how customers, investors, and even competitors perceive you. Unlike search engines, where you can track rankings and clicks, LLMs generate direct answers. This marks a shift from SEO to GEO, where the goal is to optimize how generative engines understand and present your brand.

That means your brand visibility is no longer just about appearing on page one of Google—it’s about how accurately and prominently you show up in AI-generated responses.

When brands don’t actively audit how they appear in AI-generated answers, they often lose visibility without realizing it—one of the most common reasons websites are ignored by AI search even though they still perform well in traditional SEO.

According to a Salesforce survey, 62% of consumers now consult AI assistants before making a purchase decision. If your brand isn’t being recognized—or worse, is being misrepresented—you’re losing authority, trust, and business opportunities. That’s where auditing your brand visibility on LLMs becomes essential.

In this guide, you’ll learn what an LLM brand audit is, why it matters, and how to conduct one step by step. We’ll explore the tools, metrics, and strategies you need to not only measure your presence but also improve how LLMs talk about your brand.

- Brand visibility on LLMs is about how often, how accurately, and in what context your brand appears in AI-generated answers.

- LLMs like ChatGPT, Gemini, and Perplexity now shape discovery, trust, and purchase decisions—often before users visit a website.

- Auditing brand visibility on LLMs means testing real prompts to see how AI systems mention, frame, and compare your brand.

- Visibility is driven by mentions, sentiment, accuracy, and context—not rankings or clicks.

- Brand visibility differs from brand awareness: visibility creates presence; awareness shapes perception.

- Without audits, brands risk misinformation, poor framing, and competitors dominating AI responses.

- A structured audit includes prompt testing, competitor benchmarking, source analysis, and sentiment review.

- Key metrics include mention frequency, response position, sentiment, and share of voice.

- Explicit and implicit mentions both matter for true AI visibility.

- Wellows enables continuous tracking and optimization across major LLMs.

- Regular monitoring helps brands protect authority, fix inaccuracies, and improve AI-driven visibility over time.

What Does Brand Visibility Mean in the Context of LLMs?

Brand visibility on large language models (LLMs) means how often your brand shows up in AI-generated answers, how accurately it’s described, and how it’s positioned compared to alternatives.

Because LLMs generate direct responses (not ranked links), visibility depends on whether the model understands your entity, trusts the sources it pulls from, and consistently frames your brand in the right context.

Brand visibility on large language models is defined by four core dimensions:

- Mentions: Whether your brand appears when users ask relevant questions inside AI assistants.

- Sentiment: The tone applied when your brand is mentioned and whether it builds trust or creates doubt.

- Accuracy: Whether details about your brand are correct, current, and aligned with your official positioning.

- Context: How your brand is framed compared to alternatives or competitors inside AI-generated answers.

Together, these factors show how well your brand performs in AI-generated answers. Strong brand visibility on large language models ensures your company is consistently present, factually represented, and placed in authority-driven contexts across key prompts.

These dimensions are commonly tracked through Brand Performance Metrics in AI Search, which quantify mentions, sentiment, accuracy, and contextual placement across LLMs.

Wellows provides a ChatGPT Visibility Tracker that shows how your brand appears across ChatGPT, Gemini, Perplexity, and Google AI surfaces, including explicit mentions, implicit mentions, and competitor comparisons.

How is Brand Visibility different from Brand Awareness on LLMs?

Brand visibility and brand awareness are closely related but distinct concepts—especially when applied to large language models (LLMs) like ChatGPT, Gemini, and Perplexity.

Brand Visibility refers to how often and how prominently a brand appears across digital surfaces, including AI-generated answers, search results, and other online touchpoints.

In the context of LLMs, visibility is about whether a brand is surfaced, cited, or positioned within responses generated by AI systems. A brand with strong visibility is consistently included in relevant answers, comparisons, and recommendations when users ask questions.

Brand Awareness measures how familiar users are with a brand and how easily they can recognize or recall it.

Within LLM environments, awareness reflects whether a brand is generally known or understood by users and whether its name, purpose, or reputation is recognizable when encountered in AI-driven content—even if it is not always actively mentioned or recommended.

In LLM-driven discovery, brand visibility determines whether a brand appears in AI-generated responses at all, while brand awareness influences how users perceive and interpret that brand once they encounter it. Visibility creates presence and opportunity; awareness shapes recognition and trust. Together, they ensure a brand is both discoverable within AI systems and correctly understood by the people who rely on them.

How Do I Check If My Brand Is Showing Up in ChatGPT and Other AI Chatbots?

To check whether your brand shows up in ChatGPT and other AI chatbots, combine direct prompt testing with structured tracking that measures visibility beyond isolated prompts.

1. Start with direct prompts inside ChatGPT, Gemini, and Perplexity

Begin by asking each AI system natural queries that mirror how real users speak. This is the simplest way to see brand visibility in ChatGPT and detect early LLM mentions of company or product names.

- What is [Brand Name]?

- Is [Brand Name] good for [use case]?

- Why does [Brand Name] show up in AI chatbots?

- Which tools compete with [Brand Name]?

These checks help you see whether your brand appears, how it is described, and whether the information is fresh, accurate, and aligned with your positioning.
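To make the "does my brand appear" check repeatable, a small helper can scan each saved answer for the brand name. This is a minimal sketch, assuming you paste responses in by hand; the function name `mentions_brand`, the brand "Acme Analytics", and the sample answers are all hypothetical.

```python
import re

def mentions_brand(response: str, brand: str, aliases: tuple[str, ...] = ()) -> bool:
    """Return True if the brand or any alias appears as a whole word or phrase."""
    names = (brand, *aliases)
    pattern = "|".join(re.escape(name) for name in names)
    # The lookarounds stop "Acme" from matching inside a longer word such as "Acmeist".
    match = re.search(rf"(?<!\w)(?:{pattern})(?!\w)", response, flags=re.IGNORECASE)
    return match is not None

# Hypothetical answers copied from a manual test session.
answers = {
    "What is Acme Analytics?": "Acme Analytics is a reporting tool for small teams.",
    "Which tools compete with Acme Analytics?": "Popular options include ToolOne and ToolTwo.",
}

for prompt, answer in answers.items():
    print(prompt, "->", mentions_brand(answer, "Acme Analytics", aliases=("Acme",)))
```

A name match only tells you the brand was mentioned. Whether the description is fresh and accurate still needs a human read.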

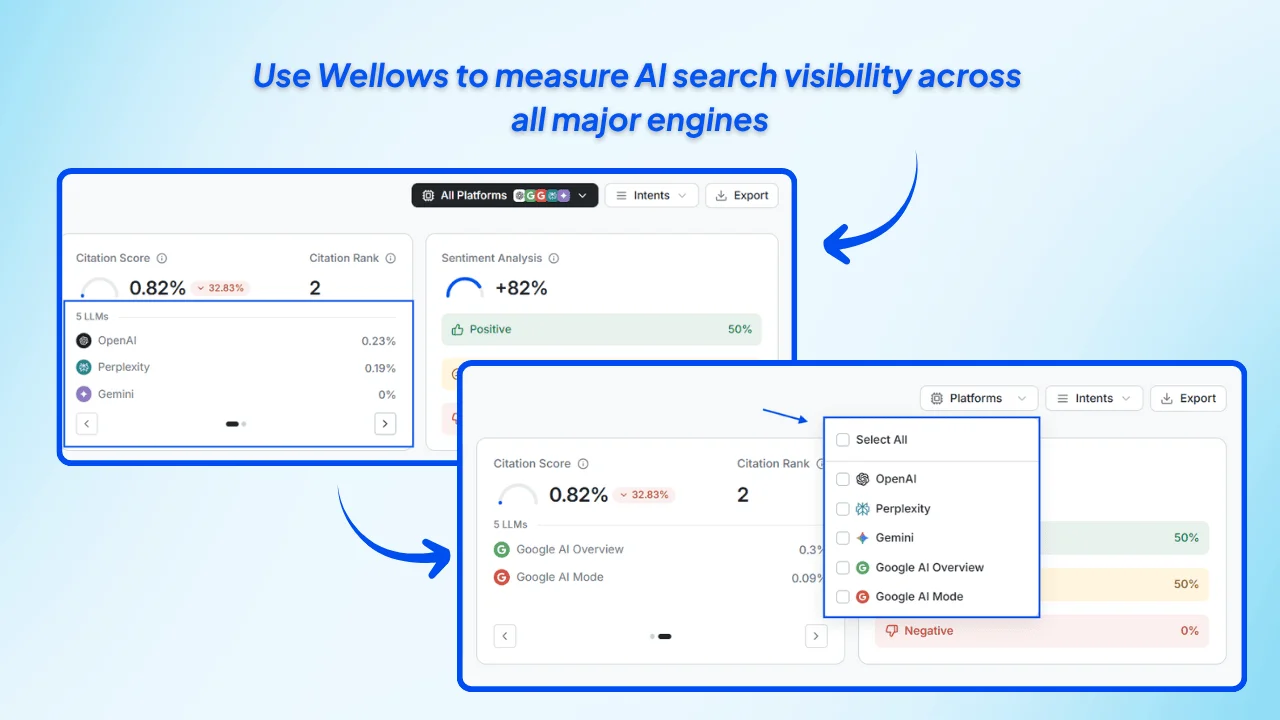

2. Use Wellows to measure AI search visibility across all major engines

Manual testing only shows fragments. Wellows gives the complete picture. As an AI search visibility and autonomous marketing platform, Wellows shows whether your content appears inside ChatGPT answers, Gemini summaries, Perplexity citations, AI Overviews, and AI Mode.

- Check if your blogs, guides, and product pages appear when users ask brand-related questions.

- See where your brand name shows up across chat-based answers and AI-generated summaries.

- Identify which prompts surface your brand and which prompts overlook it.

- Compare search visibility and AI visibility inside one unified dashboard.

Wellows analyzes extractable passages, schema signals, directory consistency, and off-site mentions so you can see exactly how AI systems understand your content.

3. Use insights to improve how ChatGPT and other AI chatbots describe your brand

Once Wellows reveals where your brand is showing up, you can strengthen your pages so AI systems reference them more consistently.

- Update pages that already appear with clearer definitions, stronger context, and correct facts.

- Fix inaccurate or outdated details that AI systems may surface.

- Create answer-ready passages so AI systems can extract clean, factual summaries.

With Wellows, you can check if your brand is showing up in ChatGPT and other AI chatbots and turn these insights into ongoing AI search visibility growth.

What Does It Mean to Audit Brand Visibility on Large Language Models?

Auditing brand visibility on large language models means running a structured review of how AI systems like ChatGPT, Gemini, and Perplexity mention, describe, and position your brand across real user prompts.

This structured process is commonly referred to as an LLM visibility audit, and it helps brands understand whether they are being surfaced accurately, consistently, and competitively in AI-generated answers.

At its core, an LLM visibility audit reveals how LLMs see your brand—what information they rely on, how they frame your authority, and where gaps or misinterpretations exist.

If you’re wondering how to evaluate brand presence on LLMs, the key is to test real prompts and score visibility across mentions, sentiment, accuracy, and competitor context.

The goal is to measure whether your brand appears consistently, is represented accurately, and is framed in the right context—especially compared to competitors.

To analyze brand visibility on LLMs, you need to track how often your brand appears, how it’s framed, and whether AI systems position it as an authority or an alternative across different prompts.

What are the Key Factors in Auditing Brand Visibility on LLMs?

Because LLMs rely on external sources to ground their answers, auditing visibility also requires understanding how AI selects sites to cite, since citation eligibility determines which pages are used as factual references when models generate brand-related responses.

Key factors to consider in auditing brand visibility on LLMs include:

- Brand Recall: Measure how frequently and how visibly your brand appears in LLM responses to relevant prompts. Strong recall indicates that the model consistently recognizes and surfaces your brand when it is contextually relevant.

- Accuracy of Representation: Verify that details shared by LLMs about your brand are correct, current, and aligned with official messaging. Inaccurate or outdated information can misinform users and weaken trust.

- Entity Recognition: Confirm that LLMs correctly identify your brand, products, and leadership without confusing them with similarly named entities. Clear entity recognition is critical for maintaining authority and credibility.

- Comparative Positioning: Review how your brand is framed alongside competitors in AI-generated answers. Identify whether it appears as a leader, an alternative, or a secondary option—and adjust positioning accordingly.

- Tone and Sentiment: Evaluate the emotional tone used when your brand is mentioned. Positive or confident framing supports trust, while neutral or negative language may reduce perceived reliability.

- Content Sources and Citations: Examine which external sources LLMs rely on when referencing your brand. Prioritize accuracy by ensuring those sources are authoritative, consistent, and up to date.

- Data Consistency: Maintain uniform brand information across your website, social profiles, and third-party listings. Inconsistent data can confuse both LLMs and users and reduce visibility.

- Structured Data and Schema Markup: Use clear schema markup to help LLMs interpret and categorize your brand information accurately, improving extractability and representation.

- External Mentions and Digital PR: Strengthen your presence on trusted third-party sites, industry publications, and directories to reinforce credibility and improve recognition by LLMs.

- Continuous Monitoring and Optimization: Track how your brand appears across multiple LLMs over time and refine content, sources, and structure as models and datasets evolve.

By systematically reviewing these factors, you can improve how LLMs surface, describe, and position your brand—ensuring visibility is both accurate and authoritative.

Because large language models now influence real buying decisions, a brand visibility audit is essential. It ensures your brand is represented accurately and helps you strengthen authority across AI-generated answers.

With Wellows, you can run a complete brand visibility audit across ChatGPT, Gemini, Perplexity, AI Overviews, and AI Mode. As an AI search visibility and autonomous marketing platform, Wellows shows how your pages appear in answers, which prompts surface your brand, and where inaccuracies or gaps exist so you can take action before misinformation spreads.

Why Should You Audit Brand Visibility on LLMs?

AI assistants and large language models (LLMs) like ChatGPT, Gemini, Claude, and Perplexity are increasingly influencing how people discover products and make purchase decisions — which makes it essential to audit brand visibility on LLMs. Auditing your brand’s visibility on these platforms is no longer optional — it’s a requirement for protecting trust, accuracy, and competitive positioning.

A recent survey by Commerce and Future Commerce (2025) found that about 1 in 3 Gen Z consumers and 1 in 4 Millennials now turn to AI platforms instead of traditional channels when seeking shopping advice or discovering products.

Risks of Not Auditing Your Brand Visibility

Even if your brand appears in LLM responses, it doesn’t guarantee accurate or favorable representation. Failing to run regular audits can expose you to serious risks that weaken credibility, erode trust, and give competitors an advantage—especially as LLMs increasingly rely on brand signals to decide which companies to highlight.

The table below highlights the most common risks of not auditing your brand visibility.

| Risk | Description / Consequence |
| --- | --- |
| Misinformation or Outdated Information | If LLMs rely on stale content, your brand might be described incorrectly (e.g., old leadership, outdated product lines, wrong features), harming credibility. |
| Poor Sentiment or Context | You might appear in responses that position your brand negatively or as secondary, which can influence perception and trust even if you’re mentioned. |
| Competitors Dominate | Competitors who do audit and optimize will show up first in LLM prompts, in favorable authority contexts, making your brand less visible. |
| Missed Opportunities | Without auditing, you can’t see where your gaps are (e.g., source weaknesses, missing content, lack of citations), so you miss chances to improve. |
| Customer Confusion | If responses to users are inconsistent, vague, or wrong, users may form wrong expectations or distrust your brand. |

Many of these risks are accelerated by content decay, where older pages, mentions, or sources lose accuracy, freshness, or contextual relevance—causing LLMs to surface outdated or weaker brand narratives over time.

An audit helps you see where you currently stand across mentions, sentiment, accuracy, and context, and gives you the baseline to improve.

It’s worth noting that some discussions online also cover how to audit brand visibility on Learning Management Systems (LMS) or auditing brand visibility on LMS platforms. These are separate processes: LMS audits measure course visibility inside e-learning tools, while LLM audits focus on brand perception in AI-generated responses.

How Do You Prepare for a Brand Visibility Audit?

Before you start testing prompts and collecting answers, you need a clear preparation framework. A structured setup ensures your audit produces measurable, repeatable insights rather than scattered observations.

1. Define Your Objectives

Not every brand audit has the same goal. Decide whether you want to focus on:

- Visibility: Are you being mentioned when users ask relevant questions?

- Accuracy: Is the information about your brand factually correct and up to date?

- Sentiment: Are mentions framed in a positive, neutral, or negative tone?

- Competitive Positioning: How do you compare when your brand is mentioned alongside competitors?

Having clear objectives helps you prioritize what to measure and where to act.

2. Select Priority LLMs

Different industries lean on different platforms. For example:

- ChatGPT (OpenAI) – widely adopted by general consumers for product discovery.

- Google Gemini – integrated into the Google ecosystem, important for search-driven visibility.

- Anthropic Claude – growing adoption in enterprise and professional settings.

- Perplexity AI – popular among researchers, students, and professionals seeking cited sources.

Choose 2–3 platforms most relevant to your audience, region, and vertical instead of spreading your efforts too thin.

3. Build a Keyword & Prompt List

Think like your audience. What kinds of questions would they ask an AI assistant when researching your brand or your industry? Start by aligning prompts with user intent instead of just keywords.

Develop prompts around:

- Branded Queries: “What is [Brand]?”, “[Brand] reviews”, “[Brand] vs. [Competitor]”

- Category Queries: “Best [product/service] providers in [industry]”

- Problem-Solution Queries: “How can I solve [pain point]?” where your brand should appear as a solution.

This ensures you capture both direct and indirect visibility opportunities. Need a ready prompt set for lean teams? See AI-Visible Marketing for Startups for examples you can adapt in minutes.
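The three prompt groups above can be expanded from templates so every audit run uses the same wording. This is a sketch under stated assumptions: the template strings mirror the examples in the list, while `build_prompts` and all brand, competitor, and pain-point values are hypothetical placeholders.

```python
TEMPLATES = {
    "branded": ["What is {brand}?", "{brand} reviews", "{brand} vs. {competitor}"],
    "category": ["Best {product} providers in {industry}"],
    "problem_solution": ["How can I solve {pain_point}?"],
}

def build_prompts(brand, competitors, product, industry, pain_points):
    """Expand each template into (category, prompt) rows, de-duplicated in order."""
    rows = []
    for category, templates in TEMPLATES.items():
        for template in templates:
            for competitor in competitors:
                for pain_point in pain_points:
                    # str.format ignores keyword arguments a template does not use.
                    prompt = template.format(
                        brand=brand,
                        competitor=competitor,
                        product=product,
                        industry=industry,
                        pain_point=pain_point,
                    )
                    if (category, prompt) not in rows:
                        rows.append((category, prompt))
    return rows

prompts = build_prompts(
    brand="Acme",
    competitors=["RivalCo", "OtherCo"],
    product="analytics",
    industry="retail",
    pain_points=["slow reporting"],
)
for category, prompt in prompts:
    print(f"{category}: {prompt}")
```

Note that the nested loops need at least one competitor and one pain point to produce any rows, so pass placeholder values if a list would otherwise be empty.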

4. Set Up a Recording Sheet

To make your audit measurable and repeatable:

- Use a spreadsheet or tracking template to log prompts, responses, sources, sentiment, and accuracy.

- Include columns for date, platform, prompt, response summary, and visibility score.

- This allows you to compare results over time and spot improvements or declines.
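If a spreadsheet feels too manual, the same recording sheet can live in a CSV file that a script appends to. The sketch below assumes the columns listed above; the `AuditRow` field names, the 0 to 5 visibility scale, and the sample row are illustrative choices, not a fixed standard.

```python
import csv
import tempfile
from dataclasses import asdict, dataclass, fields
from pathlib import Path

@dataclass
class AuditRow:
    run_date: str           # ISO date of the test, e.g. "2025-01-15"
    platform: str
    prompt: str
    response_summary: str
    sources: str
    sentiment: str          # "positive", "neutral", or "negative"
    accurate: bool
    visibility_score: int   # hypothetical 0-5 scale

COLUMNS = [field.name for field in fields(AuditRow)]

def append_rows(path, rows):
    """Append rows to the CSV log, writing the header only for a new file."""
    path = Path(path)
    is_new = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=COLUMNS)
        if is_new:
            writer.writeheader()
        for row in rows:
            writer.writerow(asdict(row))

# Demo: write one row into a temporary folder and read it back.
with tempfile.TemporaryDirectory() as folder:
    log = Path(folder) / "llm_audit_log.csv"
    sample = AuditRow("2025-01-15", "ChatGPT", "What is Acme?",
                      "Described as a reporting tool", "acme.example",
                      "positive", True, 4)
    append_rows(log, [sample])
    with log.open(newline="", encoding="utf-8") as handle:
        print(list(csv.DictReader(handle)))
```

Appending rather than overwriting keeps every run in one file, which is what makes later comparisons over time possible.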

With objectives, platforms, prompts, and tracking in place, you’re ready to move into the actual audit process.

Quick GEO Audit Summary (5 steps)

This quick summary outlines the core stages of a GEO visibility audit, giving you a high-level view of how brands assess and improve their presence across AI-powered search engines.

- Map where your brand appears

- Audit schema + extractable passages

- Improve clarity for AI readability

- Benchmark competitors

- Monitor over time

If you want the detailed, repeatable workflow agencies use, follow the 7-step audit below.

What Steps Should You Follow to Audit Brand Visibility on LLMs?

Conducting an audit doesn’t have to be complicated, but it should be systematic. When agencies audit brand visibility on LLMs, a structured process helps them see where the brand stands today, how AI systems currently describe it, and what actions to take next.

Step 1: Run Basic Prompts

Start with the obvious. Ask questions like:

- “What is [Brand]?”

- “Is [Brand] reliable?”

- “[Brand] vs. [Competitor]”

This gives you a baseline of whether your brand appears in responses at all and how prominently it is positioned.

Step 2: Run Advanced Prompts

Go beyond brand-specific queries to test visibility in broader industry or problem-solving contexts. Examples include:

- “Best solutions for [pain point]”

- “Top providers in [industry]”

- “Which companies offer [service/product]?”

These prompts reveal whether your brand shows up organically when users don’t explicitly name you.

FinTech startups often surface in these broader contexts, whether users are asking about budgeting apps, investment tools, or crypto wallets, so it is essential to test both branded and non-branded AI prompts.

Step 3: Analyze Sources

LLMs rely on external data to shape their responses. Identify where information about your brand is coming from, such as:

- Wikipedia and knowledge graph entries

- Business directories and review sites

- News coverage or blogs

- Your own website

This step highlights which sources are helping or hurting your visibility. Where you control a source, reinforce it with structured data.

Step 4: Audit Your Website & Content Structure

Make sure your own digital properties are optimized for AI consumption by following an on-page content checklist that covers schema, FAQs, and factual accuracy.

Check for:

- Schema markup (FAQ, product, article)

- Clear factual pages (About Us, Team, Product details)

- Updated FAQs that directly answer user questions

- Consistent use of brand terms and positioning statements

Well-structured, authoritative content increases the chance that LLMs reference your site correctly.
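As one illustration of the schema item in the checklist, the snippet below builds a schema.org `Organization` object as JSON-LD. It is a minimal sketch: the helper name `organization_jsonld` and every value passed to it (company name, URLs, description) are placeholders to replace with your own details.

```python
import json

def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs lists other official profiles that refer to the same entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)

# Placeholder values for illustration only.
print(organization_jsonld(
    name="Acme Analytics",
    url="https://www.example.com",
    description="Reporting software for small retail teams.",
    same_as=["https://www.example.com/about", "https://social.example.com/acme"],
))
```

On a web page, this JSON normally sits inside a `script` element with the type `application/ld+json`. Keeping the name and description identical to your About page supports the consistency point above.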

Step 5: Measure Sentiment and Accuracy

When your brand appears, note:

- Is the tone positive, neutral, or negative?

- Are product details, leadership, and facts correct?

- Are your products mentioned correctly, or are the details outdated?

This helps separate simple mentions from meaningful visibility.

Step 6: Benchmark Against Competitors

Repeat the same set of prompts for 2–3 key competitors. Record their:

- Mention frequency

- Source quality and diversity

- Sentiment framing

- Accuracy of details

Competitor benchmarking gives you context for whether you’re ahead, behind, or on par—similar to how pattern recognition in GEO helps uncover visibility gaps across AI-driven platforms.

Step 7: Document Findings & Set a Re-Audit Routine

Finally, log your results in a spreadsheet or tracking tool with columns for:

- Prompt used

- Platform (ChatGPT, Gemini, Claude, Perplexity)

- Response summary

- Visibility score

- Sentiment rating

Schedule regular audits (monthly or quarterly) to run a consistent brand visibility LLM audit, monitor shifts in visibility, track improvements, and catch new issues before they escalate.
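A re-audit is most useful when it is compared against the previous run. The following sketch assumes each logged row records whether the brand was mentioned; `compare_runs`, the `mentioned` field, and the two sample runs are hypothetical.

```python
def compare_runs(previous, current):
    """Return (gained, lost) lists of (platform, prompt) keys between two runs."""
    before = {(row["platform"], row["prompt"]): row["mentioned"] for row in previous}
    gained, lost = [], []
    for row in current:
        key = (row["platform"], row["prompt"])
        if key not in before:
            continue  # new prompt, so there is no baseline to compare against
        if row["mentioned"] and not before[key]:
            gained.append(key)
        elif before[key] and not row["mentioned"]:
            lost.append(key)
    return gained, lost

# Hypothetical results from two quarterly audits.
january = [
    {"platform": "ChatGPT", "prompt": "Top providers in analytics", "mentioned": False},
    {"platform": "Perplexity", "prompt": "Top providers in analytics", "mentioned": True},
]
april = [
    {"platform": "ChatGPT", "prompt": "Top providers in analytics", "mentioned": True},
    {"platform": "Perplexity", "prompt": "Top providers in analytics", "mentioned": False},
]

gained, lost = compare_runs(january, april)
print("Gained:", gained)
print("Lost:", lost)
```

Lost mentions are usually the first thing to investigate, since they point to prompts where a competitor or a stale source may have displaced you.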

This step-by-step approach ensures you’re not just checking if your brand appears, but also evaluating how it’s positioned, how accurate the information is, and how you compare against competitors.

What Metrics Should You Track During an LLM Audit?

When auditing brand visibility on LLMs, including as part of LLM SEO work, it’s not enough to simply note whether your name appears. You need clear, measurable metrics that show how often you’re mentioned, how you’re framed, and how you compare to competitors.

Tracking the following indicators will give you a structured way to evaluate performance and spot opportunities for improvement.

1. Brand Mention Frequency

- Count how often your brand appears across all tested prompts to calculate an LLM visibility score and benchmark brand presence in LLMs.

- Example: Brand shows up in 8 of 20 prompts → 40% mention frequency.
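The arithmetic in the example can be written as a short function. This is a sketch that assumes each tested prompt has already been marked as mentioned or not; the name `mention_frequency` is illustrative.

```python
def mention_frequency(results):
    """Percentage of tested prompts in which the brand was mentioned."""
    if not results:
        return 0.0
    return 100 * sum(results) / len(results)

# 8 prompts with a mention, 12 without, matching the example above.
results = [True] * 8 + [False] * 12
print(f"{mention_frequency(results):.0f}% mention frequency")  # prints "40% mention frequency"
```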

2. Sentiment Scores

- Categorize mentions as positive, neutral, or negative.

- Reveals whether LLMs position your brand as credible, generic, or untrustworthy.

3. Response Position

- Track where your brand appears in answers:

  - First mention → strong authority.

  - Buried mention → weaker influence.

  - Excluded → major visibility gap.
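One rough way to label response position is to compare where your brand name first appears against where competitor names first appear. The sketch below is a simple heuristic under that assumption; `response_position` and the company names are hypothetical, and a brand named alone counts as "first".

```python
import re

def response_position(response, brand, competitors):
    """Classify a response as 'first', 'buried', or 'excluded' for the brand."""
    text = response.lower()

    def first_index(name):
        match = re.search(rf"(?<!\w){re.escape(name.lower())}(?!\w)", text)
        return match.start() if match else None

    brand_at = first_index(brand)
    if brand_at is None:
        return "excluded"
    rival_positions = [
        index for index in (first_index(name) for name in competitors)
        if index is not None
    ]
    # "first" means the brand is named before every competitor that appears.
    return "first" if all(brand_at < index for index in rival_positions) else "buried"

rivals = ["RivalCo", "OtherCo"]
print(response_position("Acme leads, followed by RivalCo.", "Acme", rivals))
print(response_position("Top picks: RivalCo, OtherCo, and Acme.", "Acme", rivals))
print(response_position("RivalCo is the usual choice.", "Acme", rivals))
```

Name order is only a proxy for prominence. A brand can be named first and still be framed as a weaker option, so pair this with the sentiment review.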

4. Source Diversity and Authority

- Check how many different sources the LLM uses when citing your brand.

- Prioritize high-authority domains (news sites, directories, knowledge bases) over low-quality sources.

5. Share of Voice vs. Competitors

- Compare your mention frequency, sentiment, and positioning against key competitors.

- Identify whether competitors dominate AI-generated results.
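Share of voice can be approximated by counting how many responses mention each tracked brand and dividing by the total across all brands. This definition is an assumption made for the sketch, as are the function name `share_of_voice` and the sample responses. It uses plain substring matching, so very short brand names may over-count.

```python
from collections import Counter

def share_of_voice(responses, brands):
    """Each brand's share of all brand mentions across the given responses."""
    counts = Counter()
    for response in responses:
        text = response.lower()
        for brand in brands:
            if brand.lower() in text:
                counts[brand] += 1  # count at most one mention per response
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

# Hypothetical answers to four category prompts.
responses = [
    "Acme and RivalCo are both popular choices.",
    "RivalCo is the most widely used option.",
    "Consider RivalCo or OtherCo for larger teams.",
    "Acme is a good fit for small retailers.",
]

for brand, share in share_of_voice(responses, ["Acme", "RivalCo", "OtherCo"]).items():
    print(f"{brand}: {share:.0%}")
```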

Can I See How Often My Company Is Mentioned in AI-Generated Responses?

Yes, you can see how often your company is mentioned in AI-generated responses, and the most complete way to measure this is through Wellows. Checking answers manually inside ChatGPT, Gemini, or Perplexity only reveals isolated moments.

Wellows gives you a full view of how your company appears across generative engines at scale, helping you track brand mentions in large language models instead of relying on one-off checks.

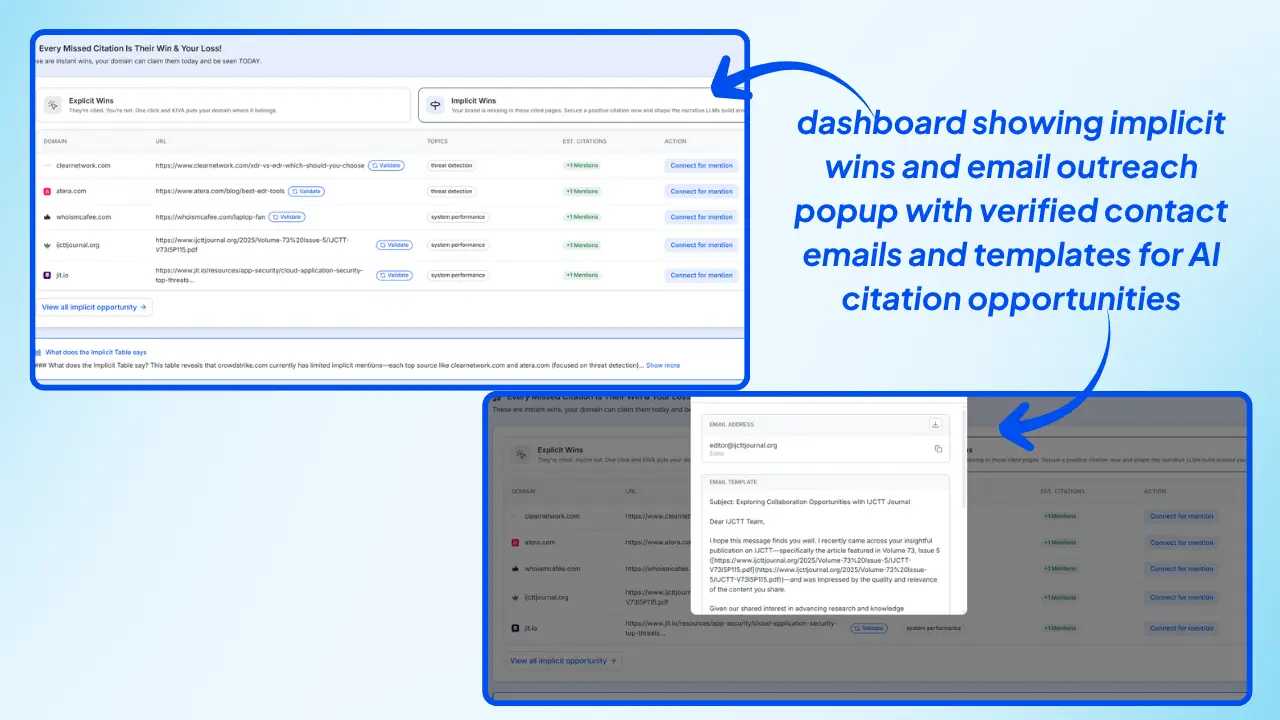

As an AI search visibility and autonomous marketing platform, Wellows tracks both explicit mentions and implicit mentions, functioning as a dedicated LLM visibility checking tool for modern brands.

This shows how often your company is mentioned and how consistently AI systems surface your brand across different types of questions.

How Wellows Measures Explicit and Implicit Mentions

Explicit mentions occur when your company name is stated directly in an AI-generated response. Wellows detects when:

- your company name appears in direct answers,

- your product names show up in feature explanations,

- AI systems list your brand inside comparisons or recommendations.

Implicit mentions occur when AI systems reference your brand without naming it. Wellows identifies when:

- your category description matches your offering (e.g., “the platform that automates SEO workflows”),

- your capabilities appear as answer elements without attribution,

- your market positioning shows up inside competitor comparisons where your name is implied but not stated.

By tracking explicit and implicit mentions together, Wellows reveals your full AI search visibility footprint — including the hidden visibility that most brands miss when they rely only on manual testing.
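For manual audits, the explicit versus implicit distinction can be approximated with a keyword check. To be clear, this is not how Wellows detects mentions. It is a simple illustrative heuristic, and `classify_mention`, the brand name, and the descriptor phrases are assumptions you would supply yourself.

```python
def classify_mention(response, brand_names, descriptor_phrases):
    """Label a response 'explicit', 'implicit', or 'none' for one brand."""
    text = response.lower()
    # Explicit: the brand or a product name is stated directly.
    if any(name.lower() in text for name in brand_names):
        return "explicit"
    # Implicit: a phrase that describes your offering appears without the name.
    if any(phrase.lower() in text for phrase in descriptor_phrases):
        return "implicit"
    return "none"

names = ["Acme"]
descriptors = ["platform that automates SEO workflows"]

print(classify_mention("Acme is a solid choice for agencies.", names, descriptors))
print(classify_mention("Look for a platform that automates SEO workflows.", names, descriptors))
print(classify_mention("Spreadsheets are enough for most teams.", names, descriptors))
```

Exact phrase matching will miss paraphrases, so treat "none" results as candidates for a manual read and not as proof of absence.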

Why It Matters for AI Search Visibility

Explicit mentions show direct authority. Implicit mentions reveal influence. When you measure both, you can understand how AI engines truly perceive your company and take action to strengthen the passages, schema signals, and off-site factors that shape AI-generated responses.

With Wellows, you can see how often your company is mentioned in AI-generated responses — explicitly and implicitly — and use those insights to grow visibility across ChatGPT, Gemini, Perplexity, AI Overviews, and AI Mode from a single dashboard.

If you’re aligning this work with client expectations, it helps to understand why agencies can’t guarantee AI results. That framing reflects the reality: you can’t promise certainty in AI, but you can prove progress with the right measurement.

How Do I Audit My Brand’s Citation Frequency in ChatGPT and Perplexity?

Auditing your brand’s citation frequency in ChatGPT and Perplexity helps you understand how often your company appears in AI-generated answers, how it is framed, and how it compares to competitors.

Start with manual prompts such as “What is [Brand]?”, “Best tools for [problem]”, or “Top companies in [industry]” to see if your brand is mentioned and how it is described. Then move to structured tracking so you are not relying on one-off checks.

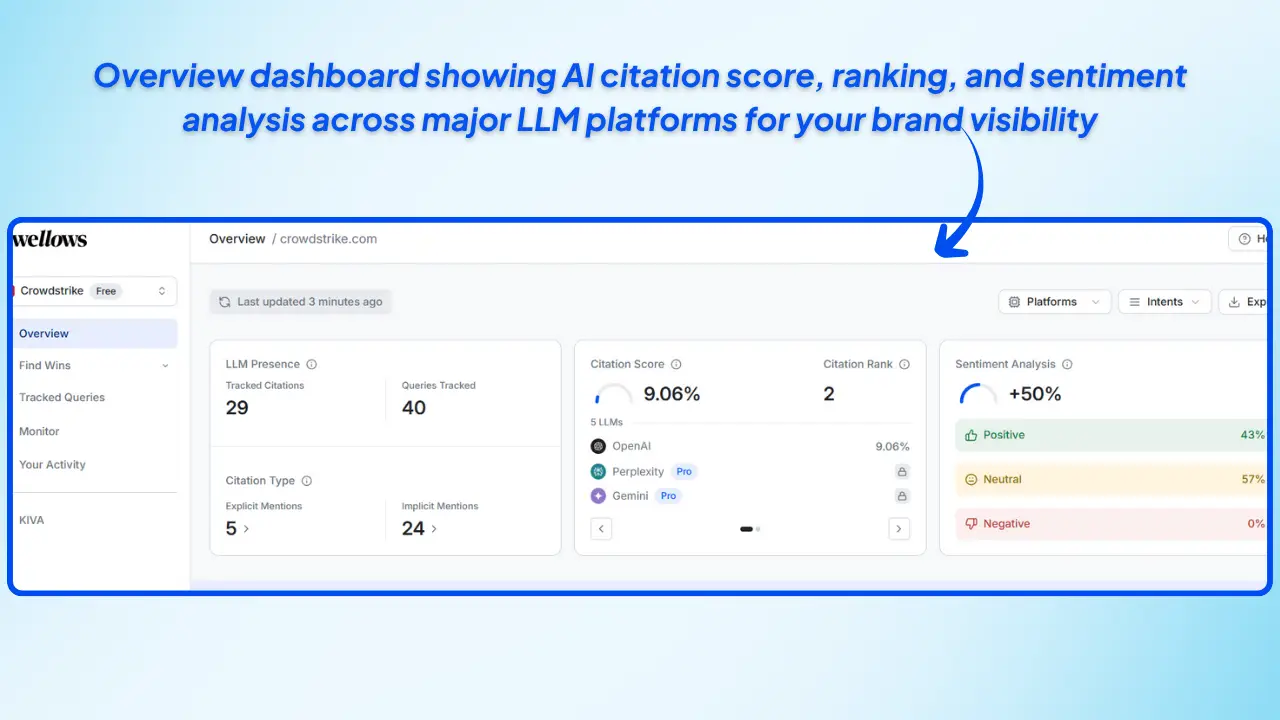

With Wellows, you can see citation scores and AI search visibility in a single view, including:

- LLM presence across ChatGPT, Perplexity, Gemini, and other engines.

- Tracked citations for your brand across all monitored queries.

- Queries tracked so you know which questions surface your brand.

- Citation type broken into explicit mentions (direct brand name) and implicit mentions (category or feature-level references).

- Citation score and rank that show how visible your brand is compared to competitors.

- Sentiment analysis across positive, neutral, and negative responses.

These insights make it easier to audit citation frequency in ChatGPT and Perplexity, close gaps in AI search visibility, and prioritize pages and topics that need stronger authority signals.

Why Is It Important to Monitor How AI Systems Represent My Brand?

Monitoring how AI systems represent your brand is important because large language models now act as primary discovery channels, which makes it necessary to track LLM brand visibility over time. When users ask ChatGPT, Gemini, or Perplexity about a company or product category, the answers they generate shape trust, credibility, and buying decisions.

If those answers are inaccurate or outdated, users form the wrong impression before ever visiting your site.

Consistent monitoring helps you catch misinformation early, identify weak authority signals, and ensure AI descriptions match your real products and positioning. It also protects reputation as more people depend on generative engines instead of traditional search.

How Wellows Supports This as an AI Search Visibility Platform

This is where Wellows becomes essential. As an AI search visibility and autonomous marketing platform, Wellows shows exactly how your brand appears across ChatGPT answers, Gemini summaries, Perplexity citations, AI Overviews, and AI Mode.

It tracks both explicit mentions (direct references to your brand) and implicit mentions (when AI describes your category or features without naming you), giving you a complete view of your AI visibility.

1. Compare citation scores and brand reach

Wellows measures how frequently your brand appears across major AI engines and compares your visibility with competitors. Citation Score, explicit mentions, and implicit mentions help you understand performance and authority across AI-driven discovery.

2. Analyze industry trends and shifting market context

Wellows highlights rising brands, emerging trends, and category movements. This shows where your brand is gaining traction, where it is losing ground, and where opportunities are opening in the AI ecosystem.

3. Track historical visibility for continuous optimization

Monitoring records daily changes in your Citation Score and visibility signals. This time-series data helps teams identify improvements, correct declines early, and maintain consistent brand authority as AI models update.

By combining monitoring with Wellows, brands gain clarity across all major AI systems and the insights needed to strengthen accuracy, protect reputation, and improve visibility across generative engines.

How Often Should I Monitor My Brand’s Presence in Perplexity AI Results?

Monitoring your brand’s presence in Perplexity AI is essential because Perplexity updates its citations, answer structures, and source preferences frequently. These shifts affect how often your brand appears, how it’s described, and whether you’re being recommended over competitors—making tools like a Perplexity Visibility Tracker critical for detecting changes before visibility gaps widen.

To stay ahead, you should monitor visibility on a predictable cadence.

Recommended Monitoring Frequency

Weekly Checks

Perplexity refreshes data and source citations rapidly, so reviewing your presence once a week helps you catch changes early — including new mentions, lost mentions, sentiment shifts, or emerging competitors.

Monthly Trend Review

A deeper monthly review helps you analyze patterns over time, evaluate improvements, and measure the impact of updates to your content, schema, or off-site signals.

How Wellows Makes Perplexity Monitoring Easier

As an AI search visibility and autonomous marketing platform, Wellows tracks how your brand appears inside Perplexity answers — including:

- Explicit mentions (direct brand citations)

- Implicit mentions (capabilities or features mapped to your brand)

- Citation Score and ranking

- Sentiment framing across answer types

- Comparisons vs. competitors inside Perplexity results

- Historical trends to see gains or declines over time

With centralized monitoring across ChatGPT, Gemini, Perplexity, AI Overviews, and AI Mode — powered by tools like the AI Overview Visibility Tracker — Wellows ensures you always know when visibility changes and why.

In short: weekly checks help you catch changes in how Perplexity presents your brand before visibility gaps grow. Monthly reviews reveal long-term trends. Wellows keeps both automated and accurate so you never miss a change in Perplexity AI visibility.

How Do I Compare My Brand’s Visibility Against Competitors in ChatGPT and Gemini?

Comparing your brand’s visibility against competitors in ChatGPT and Gemini is now essential because users rely on AI systems to recommend companies, products, and solutions. Instead of ranking links, these models generate direct answers — which means you’re competing for mention frequency, accuracy, authority, and sentiment inside AI-generated responses.

Here’s how to compare your visibility effectively:

- Check how often each brand appears when users ask category, comparison, or solution-based questions.

- Review the framing and sentiment to see whether your brand or competitors are positioned as leaders, safe options, or secondary mentions.

- Analyze source patterns such as structured data, directories, and authoritative coverage that AI systems rely on to build responses.

- Look for implicit visibility — cases where AI describes a capability or category but names only your competitors.

- Evaluate content strength to understand why a competitor appears more often in ChatGPT or why Gemini prioritizes certain structured pages.
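The first step in the list, checking how often each brand appears, can be approximated with a simple mention-rate calculation. This is a rough sketch, assuming you have already collected answer texts for a fixed prompt set; the sample responses and brand names are invented, and whole-word matching will miss misspellings and implicit mentions.

```python
import re


def mention_rate(responses, brands):
    """Return the fraction of responses that name each brand at least once."""
    total = len(responses)
    rates = {}
    for brand in brands:
        # Case-insensitive whole-word match; brand names with punctuation
        # at their edges may need a custom pattern.
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        hits = sum(1 for text in responses if pattern.search(text))
        rates[brand] = hits / total if total else 0.0
    return rates


# Hypothetical answers gathered for four category prompts.
responses = [
    "For small teams, Acme and Rival are both solid choices.",
    "Rival is the most widely recommended option.",
    "Popular tools include Rival and NewCo.",
    "Acme offers a generous free tier.",
]
rates = mention_rate(responses, ["Acme", "Rival", "NewCo"])
print(rates)
```

Running the same calculation separately on ChatGPT and Gemini answers shows where a competitor leads on one engine but not the other.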

How Wellows Helps You Compare Visibility Accurately

Wellows makes this process measurable by showing how your brand and competitors appear across ChatGPT answers, Gemini summaries, Perplexity citations, AI Overviews, and AI Mode — all in one dashboard.

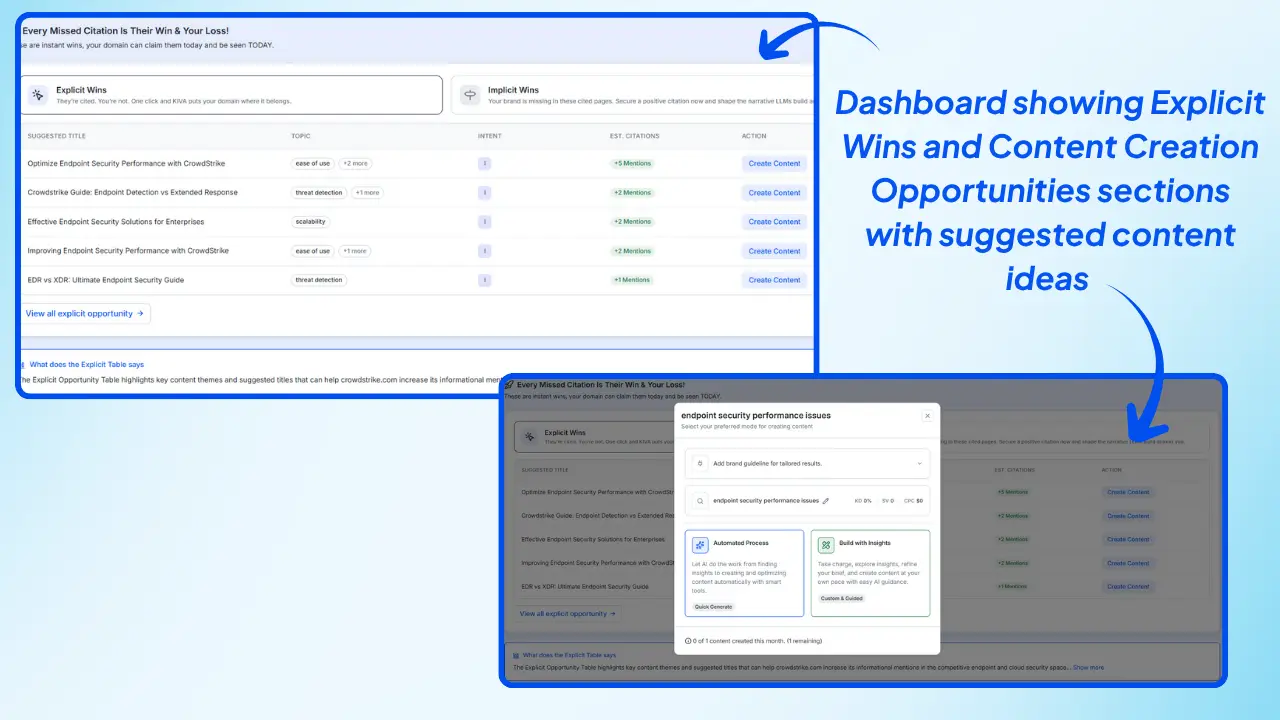

- Tracks explicit mentions and implicit mentions to show where competitors win visibility you don’t.

- Benchmarks Citation Scores across all major AI engines so you can see who has stronger authority signals.

- Reveals source and schema factors that boost competitor visibility inside Gemini and ChatGPT.

- Shows market-wide visibility shifts including rising brands, declining brands, and new competitive threats.

With a full view of competitive visibility, you can understand where you stand today, why certain competitors appear more often, and what actions will strengthen your presence across AI-generated answers.

What Are the Common Pitfalls in Auditing Brand Visibility?

Even a well-planned audit can fall short if you overlook key factors. To get a complete picture of how LLMs present your brand, avoid these common mistakes:

Common Mistakes

- Only Testing One LLM – Auditing just one model (like ChatGPT) gives an incomplete view since each LLM uses different data sources.

- Ignoring Negative or Misleading Results – Overlooking harmful or incorrect responses can damage brand trust.

- Not Benchmarking Competitors – Without comparing visibility against competitors, you can’t measure your real position.

- Tracking Only Mentions, Not Sentiment or Context – A mention alone isn’t enough; tone and accuracy matter too.

- Using Inconsistent Terminology – Different descriptions across sources confuse LLMs and weaken brand authority.

How Do You Improve Online Brand Visibility After an Audit?

An audit is only valuable if it leads to action, and repeatable LLM visibility tracking is what lets you confirm that each fix worked. Once you’ve identified gaps in how LLMs represent your brand, the next step is to fix inaccuracies, strengthen authority signals, and improve your presence across platforms.

Here’s how:

Update or Correct External Sources

- Ensure that business directories, Wikipedia entries, knowledge panels, and review sites contain up-to-date information.

- Correct outdated product details, leadership names, or service descriptions that LLMs may pull into responses.

Optimize On-Site Content with Schema, FAQs, and Factual Accuracy

- Add structured data (FAQ, Product, Article schema) to make content machine-readable.

- Create or update FAQ pages that directly answer user queries.

- Keep “About Us” and product/service pages factually precise and consistent.
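As one way to add FAQ structured data, the sketch below builds schema.org `FAQPage` markup as JSON-LD from a list of question and answer pairs. The `faq_jsonld` helper and the sample FAQ text are illustrative assumptions; the property names (`mainEntity`, `Question`, `acceptedAnswer`, `Answer`) come from the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ content; use the same wording that appears on the page.
faqs = [
    ("What does Acme do?", "Acme is a hypothetical CRM for small teams."),
    ("Does Acme offer a free plan?", "Yes, for up to three users."),
]
markup = json.dumps(faq_jsonld(faqs), indent=2)

# Embed the JSON-LD in the page inside a script tag.
script_tag = f'<script type="application/ld+json">\n{markup}\n</script>'
print(script_tag)
```

Generating the markup from the same source as the visible FAQ text helps keep the two factually consistent.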

Publish Expert Content and Comparisons

- Develop thought leadership articles, white papers, and industry comparison guides, ensuring they’re backed by strong digital PR and authoritative external mentions that LLMs can reference.

- Cover not just branded queries but also problem-solution content where your brand should appear as a recommended option.

Strengthen Off-Site Authority

- Secure reviews on trusted platforms, industry directories, and verified marketplaces.

- Build digital PR through guest articles, interviews, and media mentions.

- High-quality external signals increase the likelihood that LLMs cite your brand.

Track Improvements in Recurring Audits

- Schedule monthly or quarterly re-audits to measure changes in visibility, sentiment, and share of voice.

- Use consistent prompts and scoring frameworks to compare results over time.

- Continuous tracking ensures you stay ahead as LLM algorithms evolve.
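A consistent scoring framework can be as simple as recording the same fields for every prompt in every audit and summarizing them per audit date. The sketch below is one possible layout, not a standard: the `AuditRow` fields, the reviewer-assigned sentiment scale (-1, 0, 1), and the sample rows are all assumptions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class AuditRow:
    audit_date: str  # ISO date of the audit run
    prompt: str      # must stay identical between audits
    mentioned: bool  # did the answer name your brand?
    sentiment: int   # reviewer-assigned: -1 negative, 0 neutral, 1 positive
    accurate: bool   # were the stated facts about your brand correct?


def summarize(rows):
    """Aggregate rows into per-audit mention, sentiment, and accuracy scores."""
    by_date = {}
    for row in rows:
        by_date.setdefault(row.audit_date, []).append(row)
    summary = {}
    for audit_date, group in sorted(by_date.items()):
        hits = [r for r in group if r.mentioned]
        summary[audit_date] = {
            "mention_rate": len(hits) / len(group),
            # Sentiment and accuracy only make sense where the brand appears.
            "avg_sentiment": mean(r.sentiment for r in hits) if hits else None,
            "accuracy_rate": sum(r.accurate for r in hits) / len(hits) if hits else None,
        }
    return summary


# Hypothetical results from two monthly audits using the same two prompts.
rows = [
    AuditRow("2025-01-01", "best crm for startups", False, 0, False),
    AuditRow("2025-01-01", "what is acme", True, 0, False),
    AuditRow("2025-02-01", "best crm for startups", True, 1, True),
    AuditRow("2025-02-01", "what is acme", True, 1, True),
]
summary = summarize(rows)
for audit_date, scores in summary.items():
    print(audit_date, scores)
```

Because the prompts and fields are fixed, a change in the scores between dates reflects a change in the answers rather than a change in how you measured them.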

What Tools Are Best for Auditing Brand Visibility on LLMs?

Auditing your brand’s visibility across large language models (LLMs) helps you understand how AI systems represent your company and where visibility gaps exist.

To properly audit and improve AI presence, brands increasingly rely on tools for tracking LLM brand visibility that measure mentions, sentiment, citations, and competitive positioning across generative engines.

The following tools measure brand visibility in LLMs:

- Wellows: A platform focused on measuring how brands appear across AI-generated answers in systems like ChatGPT, Gemini, and Perplexity. Wellows tracks direct and indirect brand mentions, sentiment, citation presence, and competitor visibility to help teams understand how LLMs interpret and surface their brand.

- LLM.co: Provides structured brand positioning audits that evaluate how LLMs describe your brand, including sentiment analysis, entity recognition accuracy, and comparative positioning across competitors.

- Total Authority: Offers AI visibility audits that assess how often and where your brand appears in AI-generated answers, with insights into competitor dominance, content strengths, and authority gaps.

- LLM Visibility: Delivers an all-in-one AI visibility management platform that tracks brand mentions, competitor presence, and content performance within AI-powered search environments.

- Riff Analytics: Includes an LLM Brand Visibility Tracker that monitors brand mentions across major AI models, helping teams understand positioning, visibility trends, and areas that need optimization.

- Scrunch AI: Focuses on persona-driven AI visibility, analyzing how different audience segments encounter your brand in AI-powered discovery and providing insights to refine content and positioning strategies.

Can I Use Python Scripts to Systematically Check My Brand Mentions in LLMs?

Yes — you can use Python scripts to systematically check your brand mentions in large language models (LLMs). Python allows you to send automated prompts to AI systems like ChatGPT, Gemini, Claude, or Perplexity and then review the responses for explicit or implicit references to your brand.

- Automated Prompting: Send structured prompts at scale to different LLM APIs.

- Response Collection: Store and review answers to see when and how your brand appears.

- Text Analysis: Use simple keyword matching or NLP libraries to detect brand mentions.
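The three steps above can be sketched as a small audit loop. To stay vendor-neutral, the example takes an `ask` function as a parameter; in practice you would pass a wrapper around whichever LLM API client you use. The `run_audit` helper, the `fake_llm` stand-in, and its canned answers are all hypothetical and exist only so the sketch runs without network access.

```python
import re
from typing import Callable, Iterable


def run_audit(ask: Callable[[str], str], prompts: Iterable[str], brands: Iterable[str]):
    """Send each prompt, store the answer, and list the brands it names."""
    brands = list(brands)
    results = []
    for prompt in prompts:
        answer = ask(prompt)  # automated prompting
        found = [
            brand
            for brand in brands
            # Simple keyword matching: case-insensitive, whole word.
            if re.search(rf"\b{re.escape(brand)}\b", answer, re.IGNORECASE)
        ]
        # Response collection: keep the raw answer for later review.
        results.append({"prompt": prompt, "answer": answer, "mentions": found})
    return results


def fake_llm(prompt: str) -> str:
    """Stand-in for a real API call; returns canned text for the demo."""
    canned = {
        "best crm for startups": "Rival and NewCo are popular picks for early-stage teams.",
        "alternatives to Rival": "Consider Acme or NewCo as alternatives.",
        "what is Acme": "Acme is a CRM platform aimed at small businesses.",
    }
    return canned.get(prompt, "")


prompts = ["best crm for startups", "alternatives to Rival", "what is Acme"]
results = run_audit(fake_llm, prompts, ["Acme", "Rival", "NewCo"])

# Prompts where your brand (here, the hypothetical "Acme") is absent.
missing = [r["prompt"] for r in results if "Acme" not in r["mentions"]]
print(missing)
```

Passing the model call in as a function lets you run the same prompt set against several LLMs and compare the results side by side.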

This method is useful for small-scale audits, prototype testing, or checking how different prompts influence brand visibility. It also helps you understand whether LLMs use accurate information, how they frame your brand, and whether competitors appear more frequently in similar contexts.

However, Python scripts have limitations. API costs can scale quickly, and rate limits restrict large audits. A basic script also does not provide sentiment scoring, competitor benchmarking, historical tracking, or implicit-mention detection out of the box; each of these has to be built and maintained separately. For full visibility, many teams pair scripting with automated monitoring tools.
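Rate limits are usually handled by retrying with increasing delays. The sketch below shows one common pattern, exponential backoff, under stated assumptions: `RateLimited` is a hypothetical exception that your own API wrapper would raise when a request is throttled, and the `flaky` function simulates two throttled calls before a success.

```python
import time


class RateLimited(Exception):
    """Hypothetical error raised by your API wrapper when throttled."""


def call_with_backoff(fn, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fn, doubling the wait after each throttled attempt."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # give up after the final attempt
            sleep(base_delay * 2 ** attempt)


# Demo: record the delays instead of actually sleeping.
delays = []
calls = {"count": 0}


def flaky():
    calls["count"] += 1
    if calls["count"] < 3:
        raise RateLimited()
    return "ok"


result = call_with_backoff(flaky, sleep=delays.append)
print(result, delays)
```

Backoff keeps a script running through temporary throttling, but it does not reduce API cost, so large prompt sets still need a budget.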

Python scripts are effective for controlled tests, but they are not a complete solution for large-scale LLM visibility tracking.

Conclusion

Auditing brand visibility on LLMs is no longer a nice-to-have—it’s a necessity. As consumers increasingly rely on AI assistants like ChatGPT, Gemini, Claude, and Perplexity to shape their opinions and guide their decisions, your brand’s presence in these answers directly impacts trust, credibility, and conversions.

A structured audit ensures you know not only if your brand is being mentioned, but also how it’s being represented—accurately, positively, and competitively.

When you audit brand visibility on LLMs using a consistent framework, you can track key metrics such as mentions, sentiment, accuracy, and share of voice to protect and grow your authority in the AI-driven landscape.

Now is the time to take action. Start with simple prompts, log your results, and build a repeatable framework for monitoring visibility—just as you would follow a keyword strategy checklist in traditional SEO. Treat it as an ongoing process—regular checks and updates will keep your brand positioned correctly as LLMs evolve.

The sooner you begin auditing, the sooner you can close gaps, correct misinformation, and strengthen your competitive edge.