According to Statista (2024), a majority of users now rely on AI systems for complex information discovery, which helps explain why traffic can fall even when SERP rankings remain stable.

The core difference lies in retrieval. Google indexing only confirms that a page exists, while LLM systems decide whether that page is reliable enough to reuse in answers. ChatGPT (OpenAI’s LLM answering engine) and Perplexity AI (a citation-first AI search engine) apply stricter confidence checks before reusing a source, which is why a strong Google ranking does not guarantee visibility in ChatGPT.

AI answer engines also weigh consistency, not just freshness. Google AI Overviews favors sources that repeatedly align with known entities and stable explanations, even when newer pages are available. This behavior creates a clear disconnect between Google rankings and LLM citations.

Understanding AI Search Dynamics: How AI Engines Discover, Select, and Exclude Websites

Understanding why websites are ignored by AI search requires looking beyond rankings to how AI systems select sources. The same lens applies if you want to learn how to rank in Perplexity, where visibility means being cited.

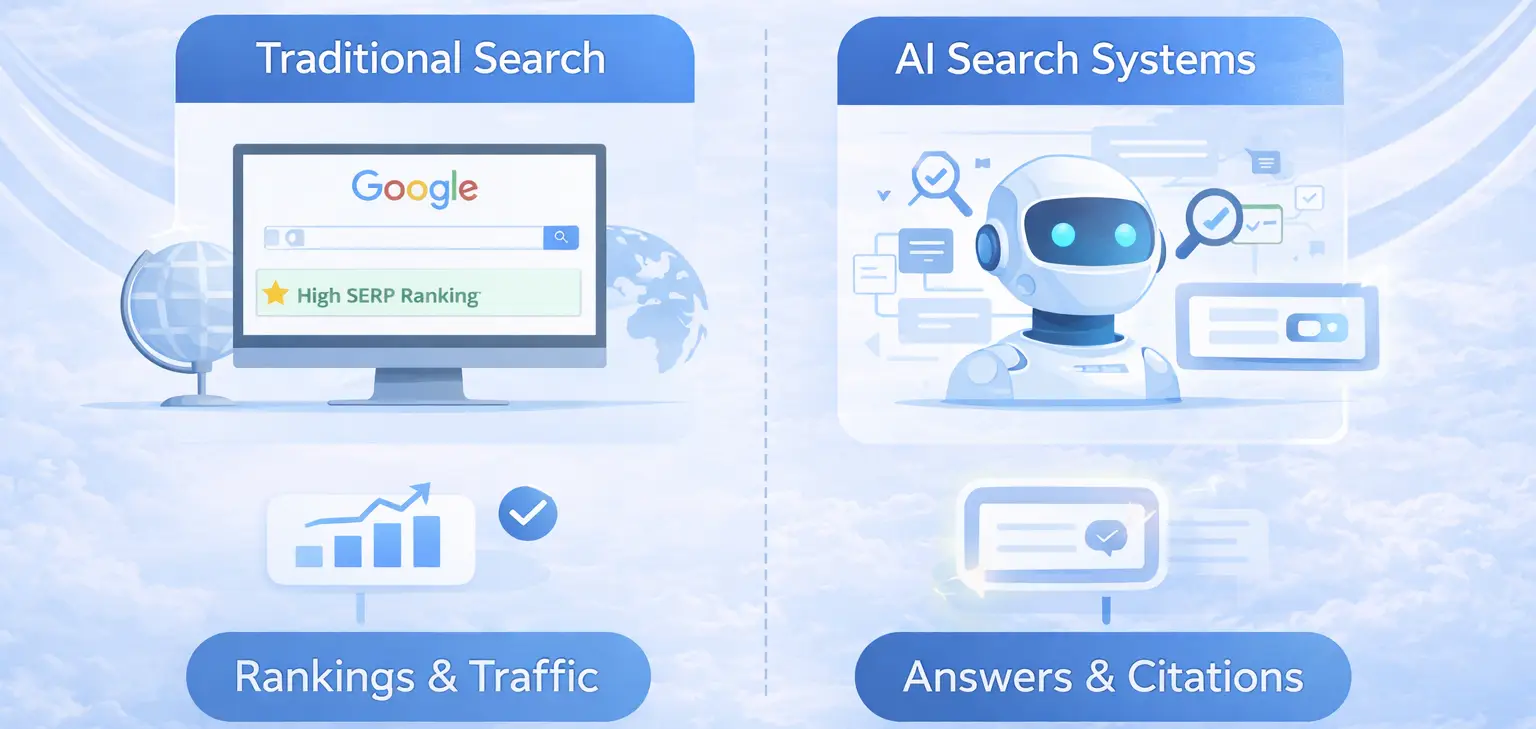

Compared to traditional search, AI search engines do not work on a simple crawl–rank–click model. They discover content first, then decide whether it is reliable enough to be reused in generated answers. This separation is why many sites are visible to crawlers but still face AI exclusion.

That reuse logic is the core of how to rank high on ChatGPT: visibility depends on being selected as a dependable source, not simply on being indexed.

Discovery does not guarantee usage. AI systems may access a page but still reject it if confidence signals are weak or inconsistent across sources.

Selection is trust-driven. Only sources that demonstrate stable explanations and strong entity signals are selected for answers.

| Traditional Search | AI Search Systems |
|---|---|
| Focuses on crawling and indexing pages | Focuses on retrieving trusted information units |
| Ranks pages using links and keywords | Evaluates entity trust and synthesis quality |
| Returns a list of results | Generates direct answers |

AI search products built on LLMs (Large Language Models), such as Google Gemini, typically use Retrieval-Augmented Generation (RAG), where content is retrieved, validated, and combined before it is used in an answer. This process explains how AI selects sites to cite, rather than relying on rankings alone.
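To make the retrieve, validate, and combine steps concrete, here is a toy Python sketch. It is not how Gemini or any production engine works: retrieval is naive word overlap, and validation is a hypothetical rule that keeps a passage only if at least `min_support` other sources make the same claim. The names `Passage`, `retrieve`, `validate`, `combine`, and `min_support` are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical site identifier
    text: str    # passage text the engine can read
    claim: str   # normalized claim, assumed to be extracted upstream


def tokens(s: str) -> set[str]:
    # Lowercased bag of words; real retrievers use embeddings or BM25.
    return set(s.lower().split())


def retrieve(query: str, corpus: list[Passage], k: int = 3) -> list[Passage]:
    """Pull the top-k passages by word overlap with the query."""
    q = tokens(query)
    ranked = sorted(corpus, key=lambda p: len(q & tokens(p.text)), reverse=True)
    return [p for p in ranked[:k] if q & tokens(p.text)]


def validate(passages: list[Passage], min_support: int = 1) -> list[Passage]:
    """Keep a passage only if at least `min_support` other sources make the same claim."""
    kept = []
    for p in passages:
        support = sum(1 for o in passages if o.source != p.source and o.claim == p.claim)
        if support >= min_support:
            kept.append(p)
    return kept


def combine(passages: list[Passage]) -> dict:
    """Merge validated passages into an answer draft plus a deduplicated citation list."""
    return {
        "answer": "; ".join(sorted({p.claim for p in passages})),
        "citations": sorted({p.source for p in passages}),
    }


corpus = [
    Passage("site-a", "rag retrieves documents before generation", "rag retrieves first"),
    Passage("site-b", "rag systems retrieve documents then generate", "rag retrieves first"),
    Passage("site-c", "rag documents are ranked only by backlinks", "rag uses backlinks"),
]
retrieved = retrieve("how does rag retrieve documents", corpus)
result = combine(validate(retrieved))
print(result)  # {'answer': 'rag retrieves first', 'citations': ['site-a', 'site-b']}
```

In this run, site-c is retrieved but never cited because no other source backs its claim, which mirrors the point that discovery does not guarantee usage. Real engines weigh far more signals; the support threshold is only a stand-in.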

AI systems also expand a single question into multiple related variations through query fan-out. Each variation is checked independently, and only sources that remain consistent across them are selected. This behavior highlights the structural gap between generative systems and keyword-based retrieval, the core difference in ChatGPT vs traditional search.
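The selection rule described above can be sketched as a toy in Python. This is an assumption-laden illustration, not any engine's actual logic: `fan_out` uses fixed templates where real systems generate variations with a model, and `MOCK_INDEX` is hypothetical stand-in data for per-variation search results (source mapped to the answer it gives).

```python
def fan_out(question: str) -> list[str]:
    """Expand one question into related variations.

    Hypothetical: real engines generate variations with a model, not fixed templates.
    """
    topic = question.rstrip("?")
    return [topic, f"{topic} explained", f"{topic} examples"]


def consistent_sources(results_per_variation: list[dict[str, str]]) -> set[str]:
    """Select sources that answer every variation and give the same answer each time."""
    if not results_per_variation:
        return set()
    # A source must appear in the results for every variation...
    common = set.intersection(*(set(r) for r in results_per_variation))
    # ...and its answer must not change from one variation to the next.
    return {s for s in common if len({r[s] for r in results_per_variation}) == 1}


# Hypothetical per-variation results (source -> answer); a real engine would query an index.
MOCK_INDEX = {
    "what is query fan-out": {
        "site-a": "parallel sub-queries",
        "site-b": "parallel sub-queries",
        "site-c": "a ranking factor",
    },
    "what is query fan-out explained": {
        "site-a": "parallel sub-queries",
        "site-b": "parallel sub-queries",
    },
    "what is query fan-out examples": {
        "site-a": "parallel sub-queries",
        "site-b": "a ranking factor",
        "site-c": "a ranking factor",
    },
}

variations = fan_out("what is query fan-out?")
results = [MOCK_INDEX.get(v, {}) for v in variations]
selected = consistent_sources(results)
print(sorted(selected))  # ['site-a']
```

site-c drops out because it is missing from one variation, and site-b drops out because its answer changes between variations; only the source that stays consistent everywhere survives.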

Reasons AI Search Overlooks Websites

The reasons websites are ignored by AI search are often mistaken for technical SEO problems. In practice, they are usually meaning and authority issues, and the cost of ignoring AI search becomes visible when AI systems cannot clearly connect your content with expertise, intent, and relevance.

Weak topical authority. When content only covers a topic at a surface level, AI systems cannot confirm expertise or depth. This weakens signals tied to the E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) and reduces selection confidence, one of the key generative engine visibility factors.

Repetitive SEO content. Pages that reuse the same keyword-focused patterns without adding original insight fail AI synthesis checks. Large language models detect repetition quickly and deprioritize content that does not expand understanding.

Poor entity clarity. When brand entities, topics, and relationships are not clearly defined, AI systems struggle to place the content within knowledge graphs. This lack of clarity lowers trust and reduces the chance of being retrieved for AI-generated answers.

Challenges in AI Search Algorithms (and Why Websites Can’t Control Them Fully)

AI search systems are built to reduce uncertainty, not to include every possible source. Many visibility gaps happen because of system-level limits rather than publisher mistakes.

This is another reason why websites are ignored by AI search even when no clear SEO mistakes exist.

Conservative citation behavior. AI models prefer a small, repeatedly validated set of sources instead of expanding coverage. This limits risk but excludes many relevant pages, especially when outputs change between sessions due to AI answer variability.

Hallucination avoidance. To prevent incorrect answers, models apply strict filters before reusing content. If information cannot be verified across multiple signals, it is skipped, even when the content itself is accurate.

Model variance. Different systems interpret the same content differently. ChatGPT-4o and Claude use distinct safety rules and confidence thresholds, which helps explain why source selection differs between systems and can shift between model versions, as seen after the ChatGPT-4o prompt leak.

Issues with Website Indexing in AI Systems

Indexing in AI systems works differently from traditional search. Being indexed does not guarantee usage. Indexing only confirms that content exists, while retrievability determines whether it can be used in AI-generated answers. This gap explains why many sites appear healthy but still experience AI exclusion.


How Content Quality Influences AI Search Results

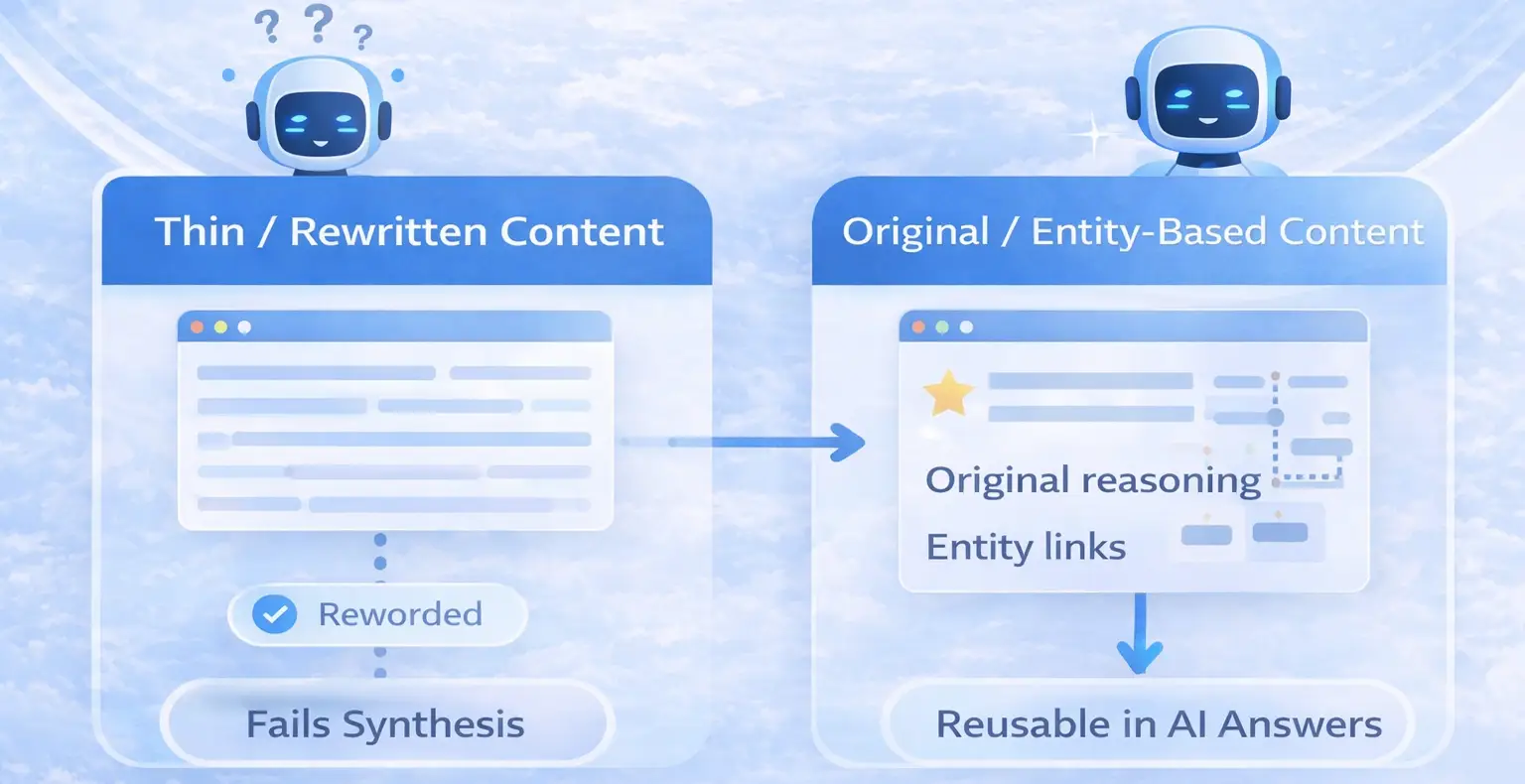

AI search engines assess content based on depth, clarity, and reasoning. Thin pages or lightly reworded SEO content fail because they do not provide original analysis that AI systems can confidently reuse.

- Original analysis enables synthesis. AI systems favor entity-based content that explains relationships, causes, and outcomes instead of restating known facts. When content builds topic authority through clear reasoning, it becomes easier for models to reuse it across answers, which is why entity-based content stands out in LLMs.

- Reasoning matters more than repetition. AI models respond to how users phrase prompts, which rewards structured thinking over keyword density. Content aligned with how questions are asked and answered performs better than rewritten SEO pages, reinforcing why prompts matter more than keywords in generative engines.

Technical SEO Issues That Lead to AI Search Neglect

AI search systems fail to use content when structure and meaning are unclear. The issue is not speed or performance but interpretability: whether AI systems can clearly read, separate, and prioritize information.

How Website Design Affects AI Search Visibility

Website design influences how clearly information is interpreted by AI systems. For AI search, design is not about visual appeal or conversion optimization, but about structural clarity and context.

- Why content hierarchy affects AI comprehension: Website design signals meaning through structure. Clear content hierarchy helps AI systems identify what matters most on a page and how ideas relate to each other. When sections are logically ordered and consistently formatted, AI models can extract information more reliably. This is why planning content structure in advance, supported by structured SEO briefs for AI search success, improves AI visibility.

- How context windows shape AI interpretation: AI systems process content within limited context windows, not full pages at once. When related information is scattered or separated by unrelated elements, meaning is lost. Strong information architecture keeps connected ideas close together, improving interpretability. This explains why context matters in the age of LLMs for AI-driven search visibility.
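The effect of scattered context can be illustrated with a toy Python sketch. It assumes a page is split into fixed-size windows (`max_words`, a hypothetical parameter; real systems chunk by tokens, usually with overlap and far larger windows) and checks whether a claim and its qualifier land in the same window. The helper names and sample strings are illustrative only.

```python
def chunk_words(text: str, max_words: int) -> list[str]:
    """Split text into consecutive windows of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def same_chunk(chunks: list[str], a: str, b: str) -> bool:
    """True if one chunk contains both phrases, so the related ideas are read together."""
    return any(a in c and b in c for c in chunks)


claim = "the refund window is thirty days"
qualifier = "for unopened items only"
filler = "sign up for our newsletter and see related posts in the sidebar today"

cohesive = f"{claim} {qualifier} {filler}"   # related ideas kept together
scattered = f"{claim} {filler} {qualifier}"  # an unrelated block separates them

cohesive_ok = same_chunk(chunk_words(cohesive, 12), claim, qualifier)
scattered_ok = same_chunk(chunk_words(scattered, 12), claim, qualifier)
print(cohesive_ok)   # True
print(scattered_ok)  # False
```

With identical words, only the ordering changes, yet in the scattered layout no single window carries the claim together with its qualifier, so a system reading one window at a time sees an incomplete statement.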

Why Outdated Websites Are Ignored by AI Searches

AI search engines deprioritize outdated websites not only because content is old, but because entity signals weaken over time. This is a core reason why websites are ignored by AI search even when pages still exist and rank. When information stops evolving, AI systems lose confidence in its relevance and accuracy.

| Issue | How AI Interprets It | Impact on Visibility |
|---|---|---|
| Content decay | AI detects outdated explanations, examples, and data points | Lower entity freshness and reduced temporal relevance aligned with current GEO stats and trends |
| Stagnant brand entities | Entities show no progression or adaptation to new search behavior | Loss of trust signals as AI systems favor evolving sources over legacy approaches, reinforcing how traditional SEO practices evolve under GEO |

Impact of SEO on AI Search Engine Recognition

SEO still matters for AI search, but its role has changed. It now works as a supporting layer that helps content get discovered, not as a guarantee that content will be selected or cited by AI systems.

| | Then: Traditional SEO Focus | Now: AI Search + GEO Era |
|---|---|---|
| Success measured through | Positions and traffic | LLM mentions and usage |
| Visibility driven by | Keywords and backlinks | Entity clarity and synthesis |

SEO helps establish baseline credibility, but AI systems rely on different signals to evaluate content. This explains why websites are ignored by AI search despite strong traditional SEO performance. As visibility moves from SERPs to LLM-generated answers, the difference between SEO and GEO becomes more apparent.

For consistent AI recognition, SEO must work alongside GEO rather than operate alone. When both are aligned, brands improve retrievability and trust across systems, which is why many teams now focus on combining SEO and GEO for AI visibility.

How Can Websites Be Optimized for AI Search Recognition?

Optimizing for AI search requires a shift from page-level tactics to system-level thinking. That change is largely why content optimization feels way harder than SEO for most teams; once you see exactly where the friction lives, the fixes become far more obvious.

AI recognition depends on how consistently a brand can be retrieved, trusted, and reused across different queries and answer formats.

What Can Be Done to Improve Website Visibility in AI Searches?

Improving visibility in AI search requires ongoing measurement and controlled iteration. AI systems respond to consistency, clarity, and governance, not one-time optimizations.

AI visibility with and without structured execution

This comparison shows how governance and measurement affect whether content is reused by AI systems.

Sustained visibility improves when teams treat AI search as a governed channel. Content governance helps maintain consistency across updates (see content governance for GEO teams).

As an AI search visibility platform, Wellows supports audits, brand monitoring, and iteration through measurable signals such as Citation Score.

FAQs

Websites are often excluded because their content lacks clear entity signals, original reasoning, or consistent explanations. AI systems avoid reusing sources that feel generic, repetitive, or risky, even if those pages perform well in traditional search.

Optimization for AI search focuses on retrieval and reuse rather than rankings. Content performs better when it demonstrates topic authority, clear intent coverage, and explanations that AI systems can confidently synthesize across multiple queries.

Website design affects how clearly AI systems understand structure and importance. Poor content hierarchy or scattered context makes it harder for AI models to identify key information and reuse it in generated answers.

Outdated websites experience entity decay. When explanations, data, or brand signals stop evolving, AI systems reduce trust and deprioritize those sources in favor of content that reflects current understanding.

This means the content is accessible but not retrievable. AI systems may be aware of a page but still exclude it during answer generation if confidence, consistency, or authority signals are weak.

Conclusion

Websites ignored by AI search no longer have a ranking problem. They have a retrieval and trust problem. Content must be selected, trusted, and reused by AI systems to remain visible.

Retrieval matters more than rankings. AI visibility depends on whether content is reused inside generated answers, not where it appears in traditional SERPs.

Authority outweighs optimization tricks. AI systems favor sources that show consistent expertise and reliable explanations across multiple queries.

Visibility matters more than traffic. Being present inside AI-generated answers shapes brand recognition long before users visit a website.