Search authority is no longer defined by rankings alone. In 2026, visibility depends on whether large language models recognize and cite your brand inside AI-generated answers.

Users now turn to tools like ChatGPT, Gemini, and Google’s AI Overviews for instant responses, shifting discovery away from traditional search pages.

At the center of this change are LLM citations, the sources AI systems use to justify their answers. This blog explains what LLM citations are, how they work, how to earn and monitor them, and how Wellows helps you get cited, along with the strategies that work best.

- LLM citations reward content that is easy to extract, verify, and reuse, not content written only for rankings or persuasion.

- Citations are more valuable than mentions alone, especially when your brand name and source link appear together in an AI answer.

- Freshness strongly influences citation selection, with recently published or regularly updated pages cited more often for evolving topics.

- Semantic precision matters more than broad coverage, as LLMs favor pages that directly answer specific questions.

- Unlinked mentions and expert quotes still shape AI visibility, even without traditional backlinks.

- Citation performance must be tracked across models, since ChatGPT, Gemini, and others surface different sources for the same query.

What are LLM Citations?

LLM citations are source references shown by AI assistants when they generate an answer and want to show where specific facts, definitions, or instructions came from.

In practice, this means your page is used as supporting material behind an AI response, and the model exposes that support as a linked source. Assistants often favor pages that pass the structural and relevance checks an On Page SEO Checker identifies.

What’s the value of being cited by an LLM vs mentioned? Being cited by an LLM drives direct trust, click potential, and authority, while a mention mainly boosts brand awareness without guaranteed traffic or attribution.

Let’s see how AI citations vs mentions differ in visibility, traffic impact, trust signals, and long-term authority building:

Citations (linked sources): A citation signals trust and authority because the AI explicitly references your content as a source. Your page appears in a “Sources” area, footnote-style links, or a reference panel. This is most common for stats, step-by-steps, definitions, and time-sensitive facts.

Mentions (brand inclusion): A mention improves brand recall but offers less attribution and is harder to measure. Your brand or product name is included in the generated text, often in recommendation-style answers, even if there’s no link.

Do citations or mentions drive more awareness in answer engine search? Citations drive stronger, actionable awareness through visible attribution and clicks. Mentions mainly support passive brand recall with limited traffic impact.

The most valuable scenario is when you get both: your brand is named in the answer, and your page is also included as a source. That combination drives recall and can also drive clicks, depending on the query and interface.

How to Get LLM Citations? [8 Key Strategies]

LLM citations don’t happen by accident. They’re driven by clarity, structure, accessibility, and original value. These same principles are central to ranking high on ChatGPT, where citation-ready content directly influences AI visibility. To earn citations from tools like ChatGPT, Gemini, or Claude, content must be created in a way machines can easily parse, trust, and reuse.

Here are 8 strategies for getting cited by LLMs:

1. Analyze What LLMs Already Cite in Your Niche

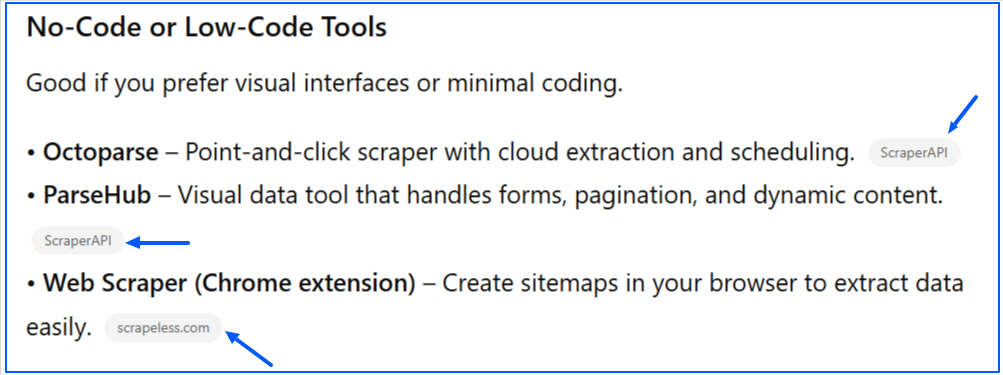

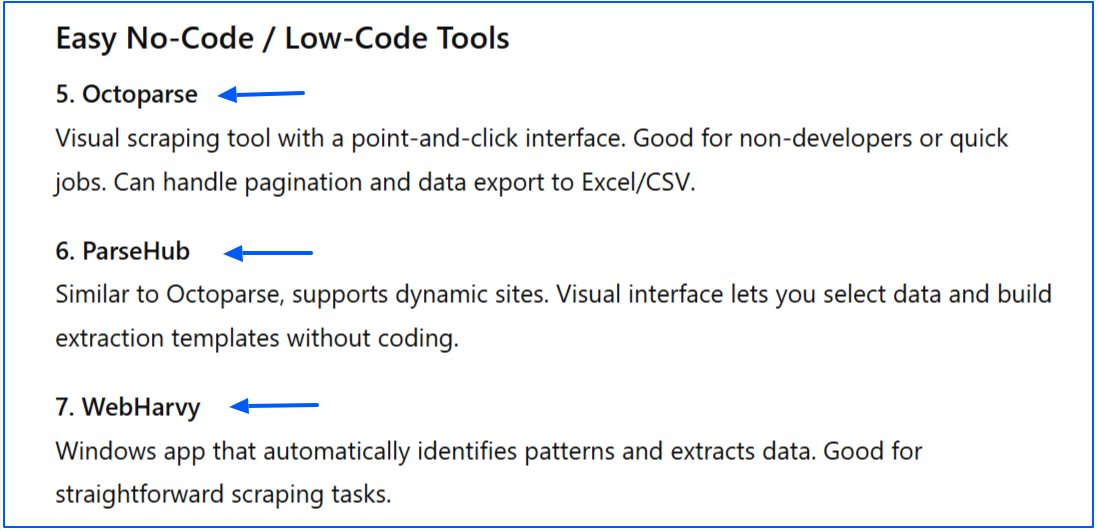

Before creating new content, identify which topics, formats, and pages AI systems already reference in your industry. Patterns often emerge around data pages, tools, glossaries, and research-driven content.

You can ask multiple AI tools the same high-intent questions from your niche and record which sources appear repeatedly, a process often handled at scale by generative engine optimization agencies to identify consistent citation patterns across models. Focus on the domains, page types, and content formats that show up most often.

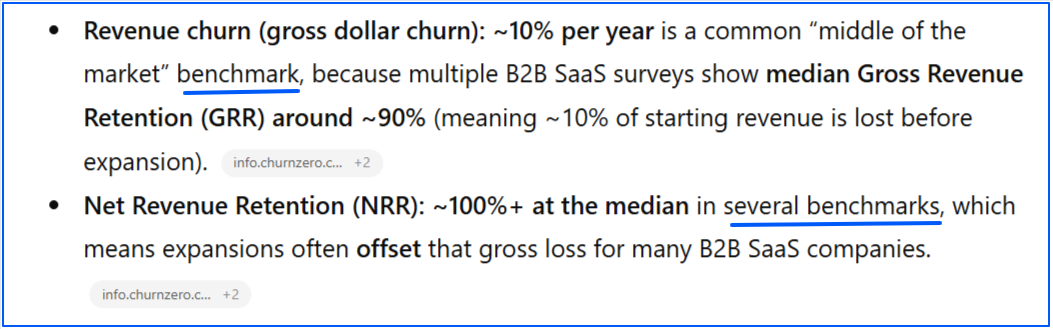

For example, asking “What is the average churn rate for B2B SaaS?” often surfaces benchmark reports and data studies rather than opinion blogs, signaling a strong preference for research-backed content.
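The recording step above can be kept in a small script. This is a minimal sketch, assuming you have copied the source URLs from each AI answer by hand; the `observations` data, the `.example` domains, and the function names are all hypothetical and exist only for illustration.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical log: one entry per AI answer, with the source URLs it displayed.
observations = [
    {"tool": "ChatGPT", "sources": [
        "https://www.benchmarks.example/saas-churn",
        "https://blog.opinions.example/churn",
    ]},
    {"tool": "Gemini", "sources": [
        "https://benchmarks.example/saas-churn",
        "https://research.example/retention-study",
    ]},
    {"tool": "Perplexity", "sources": [
        "https://www.benchmarks.example/saas-churn",
        "https://research.example/retention-study",
        "https://research.example/appendix",
    ]},
]


def domain_of(url):
    """Return the host of a URL, lowercased and without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def count_cited_domains(entries):
    """Count how many answers cited each domain (once per answer)."""
    counts = Counter()
    for entry in entries:
        counts.update({domain_of(url) for url in entry["sources"]})
    return counts


counts = count_cited_domains(observations)
for domain, answers in counts.most_common():
    print(f"{domain}: cited in {answers} answer(s)")
```

Counting each domain once per answer keeps a single answer with several links to the same site from inflating the pattern.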

2. Find and Fill Citation Gaps

Look for queries where competitors are cited but your brand is not, or where existing answers are outdated or unclear. These gaps are opportunities to publish the definitive resource LLMs are missing.

Wellows helps you see exactly where your brand and competitors are cited across AI-generated answers for specific topics or queries. This makes it easy to spot topics where competitors are referenced with outdated information or incomplete explanations.

Publishing fresher, more accurate content around those queries gives LLMs a better source to cite.

3. Structure Content With Clear Hierarchy

Use H1 for the main topic, H2 for major sections, and H3 for supporting points. A clean hierarchy helps LLMs understand topic boundaries and follow your content’s logic.

An article titled “How Health Insurance Works” can use this structure:

- H1: How Health Insurance Works
  - H2: Types of Health Insurance
    - H3: Employer-Sponsored Plans
    - H3: Individual Health Plans
  - H2: What Health Insurance Covers

Here, the H1 is the main heading (or title), the H2s sit under the H1, and each H3 sits under its relevant H2.
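If you want to check an existing page against this kind of outline, a short script can flag skipped levels. This is a rough sketch using only Python's standard library; the class and function names are made up for illustration, and the two rules it applies (exactly one H1, no skipped levels) are a simplification of the guidance above rather than an official standard.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collects (level, text) for every h1-h6 element in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None


def find_hierarchy_problems(html):
    """Return human-readable problems found in the heading structure."""
    parser = HeadingOutline()
    parser.feed(html)
    problems = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected exactly one H1, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        if level > previous + 1:
            problems.append(f"H{level} '{text}' appears without a parent H{level - 1}")
        previous = level
    return problems


page = """
<h1>How Health Insurance Works</h1>
<h2>Types of Health Insurance</h2>
<h3>Employer-Sponsored Plans</h3>
<h3>Individual Health Plans</h3>
<h2>What Health Insurance Covers</h2>
"""
print(find_hierarchy_problems(page))  # prints []
```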

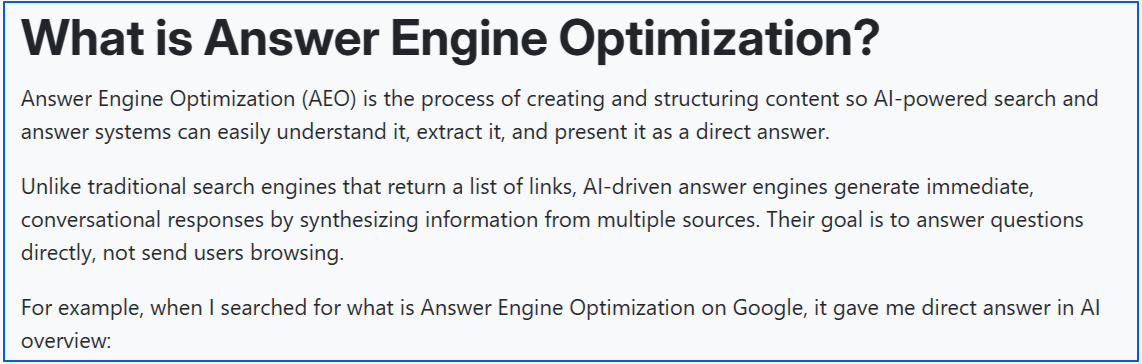

4. Lead With Definitive Answers

State definitions, explanations, or conclusions upfront. Avoid fancy tone or language. Clear, confident answers are easier for LLMs to extract and cite.

5. Use Structured Formatting

Lists, tables, and FAQ-style sections make key information easy to identify. These formats closely match LLM training patterns and improve citation likelihood.

Here is an example of how you can add FAQs in your content:

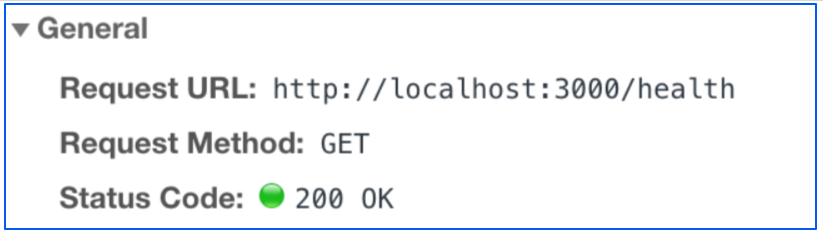
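One optional way to make FAQ pairs machine-readable is schema.org `FAQPage` markup. This article does not claim that such markup is required for citations, so treat the sketch below as an assumption about one possible implementation. The function names are hypothetical, and the sample question reuses the definition given earlier in this post.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap the JSON-LD object in the script tag that goes into the page HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


faq = faq_jsonld([
    (
        "What are LLM citations?",
        "LLM citations are source references shown by AI assistants when they "
        "generate an answer.",
    ),
])
print(to_script_tag(faq))
```

The visible question-and-answer text on the page should match what the markup says, so the two do not drift apart.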

6. Ensure Accessibility and Crawlability

Content must be publicly accessible and indexable. If AI systems can’t fetch your page, they can’t cite it. Here are some examples of what to check:

- The page is not gated behind logins, paywalls, or email forms.

- Important content is rendered in HTML, not hidden behind heavy JavaScript.

- The page is included in your XML sitemap and discoverable through internal links.

- The page returns a 200 status code and isn’t blocked by robots.txt or noindex tags.
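Some of these checks can be automated against the raw HTML a crawler would receive. The sketch below is a simplified illustration, not a full audit: the function names and the `MIN_TEXT_CHARS` threshold are arbitrary assumptions, and you supply the status code and HTML yourself (for example from your own HTTP client).

```python
from html.parser import HTMLParser

MIN_TEXT_CHARS = 200  # hypothetical threshold for "enough text in the raw HTML"


class PageAudit(HTMLParser):
    """Detects a robots noindex meta tag and counts text outside script/style."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.text_chars = 0
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            if "noindex" in (attributes.get("content") or "").lower():
                self.noindex = True

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.text_chars += len(data.strip())


def audit_page(status_code, html):
    """Return a list of issues that could keep a page from being fetched or cited."""
    parser = PageAudit()
    parser.feed(html)
    issues = []
    if status_code != 200:
        issues.append(f"status code is {status_code}, expected 200")
    if parser.noindex:
        issues.append("page carries a robots noindex meta tag")
    if parser.text_chars < MIN_TEXT_CHARS:
        issues.append("little text in raw HTML; content may depend on JavaScript")
    return issues


static_page = "<html><body><p>" + "word " * 100 + "</p></body></html>"
js_shell = (
    '<html><head><meta name="robots" content="noindex, follow"></head>'
    '<body><div id="app"></div><script>render()</script></body></html>'
)
print(audit_page(200, static_page))  # prints []
print(len(audit_page(200, js_shell)))  # prints 2
```

This only inspects the HTML you pass in. Login walls, sitemap inclusion, and robots.txt rules still need their own checks.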

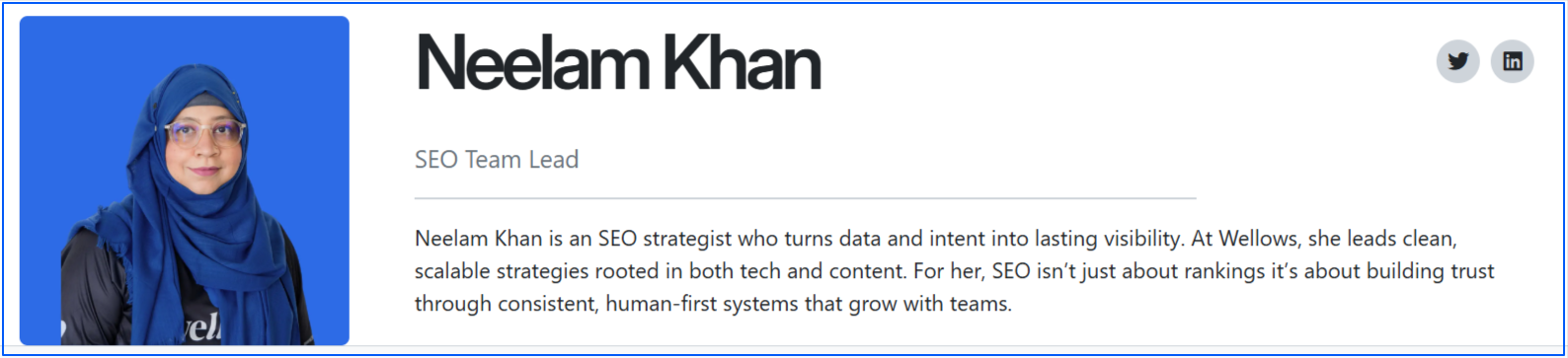

7. Reinforce E-E-A-T Signals

LLMs rely on retrieval systems that inherit search engine trust signals. Clear author attribution, transparent sourcing, and demonstrated expertise increase the chance your content is surfaced.

8. Prioritize Original, Verifiable Insights

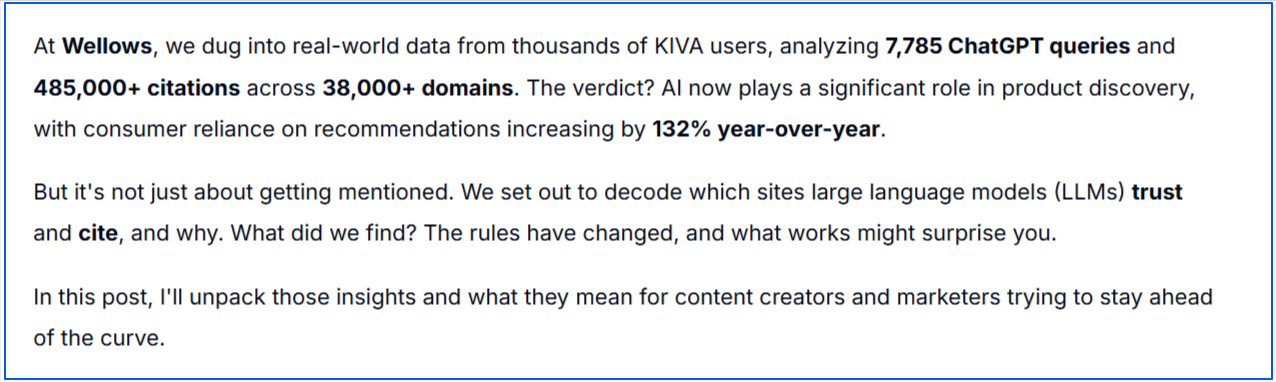

Proprietary data, first-hand research, surveys, and case studies outperform generic summaries. Original information gives LLMs something unique to reference.

Takeaway: When content is researched first, structured clearly, and backed by real expertise, it becomes a reliable input for AI-generated answers and a consistent source of LLM citations.

How Wellows Helps Brands Earn LLM Citations?

Wellows helps you check and improve how AI systems reference your brand across major AI answers.

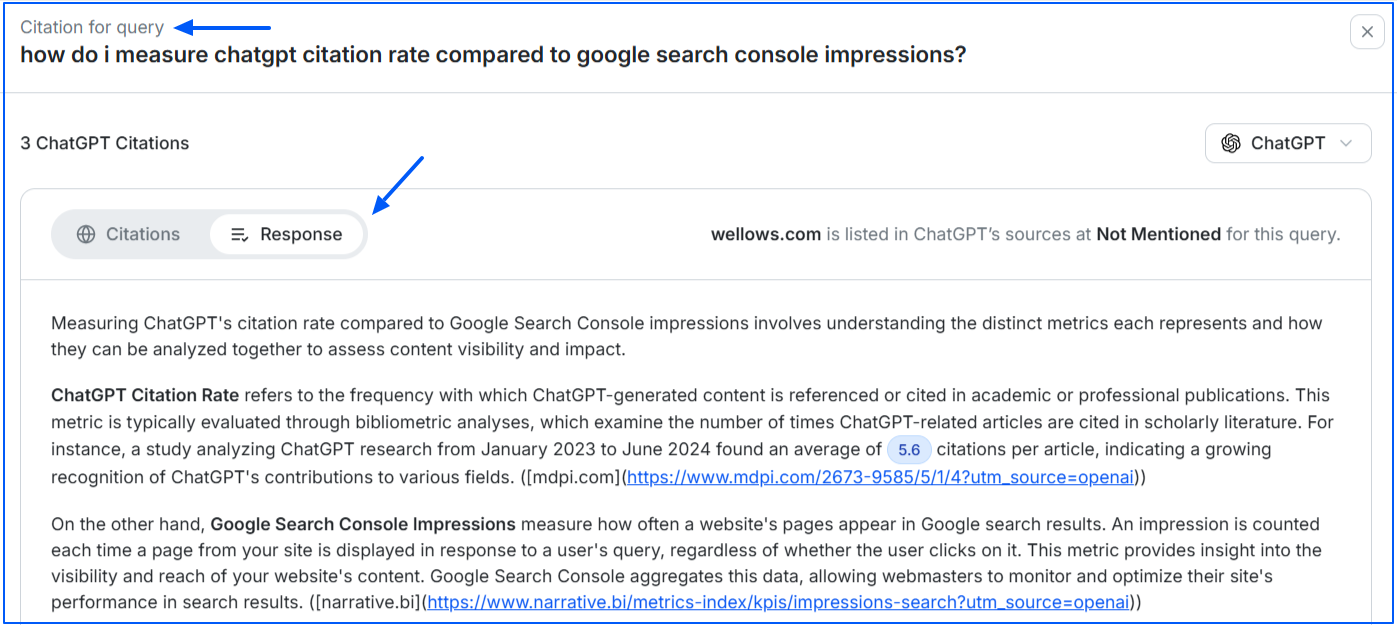

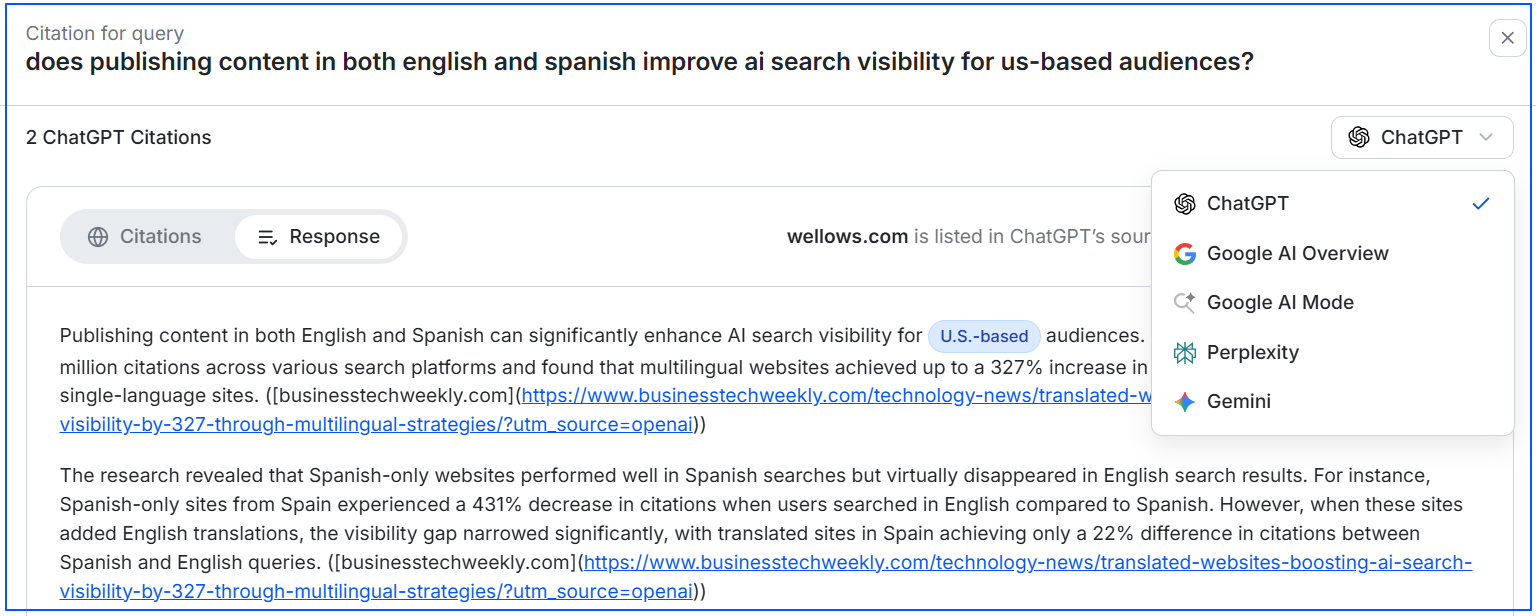

Instead of guessing why a competitor shows up, it shows you where you are cited, where you are only mentioned, and where you are missing entirely across platforms like ChatGPT, Gemini, Perplexity, Google AI Overviews, and AI Mode.

Here is how you can use it to strategically earn LLM citations:

1. Check Visibility and Citations Across AI Platforms

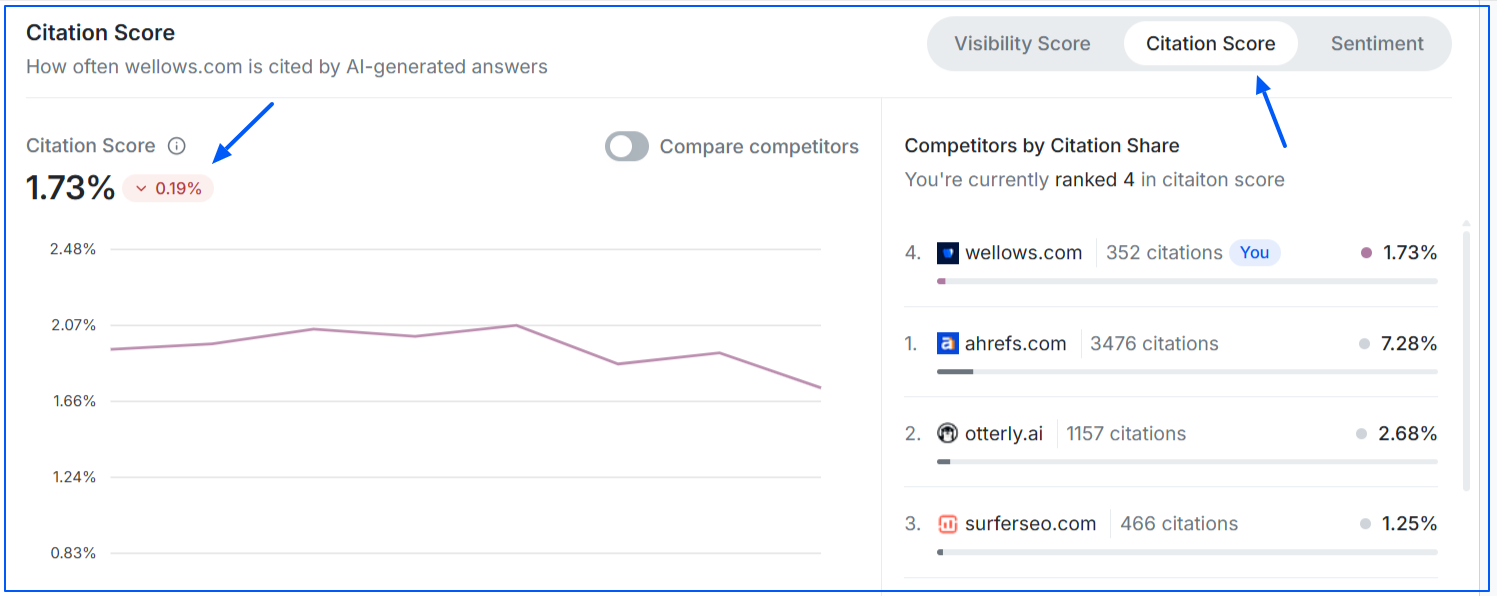

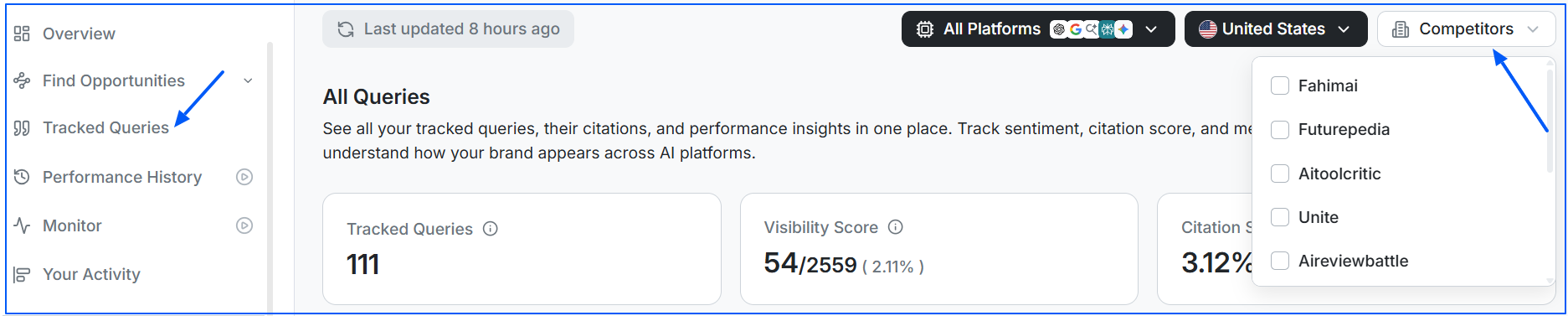

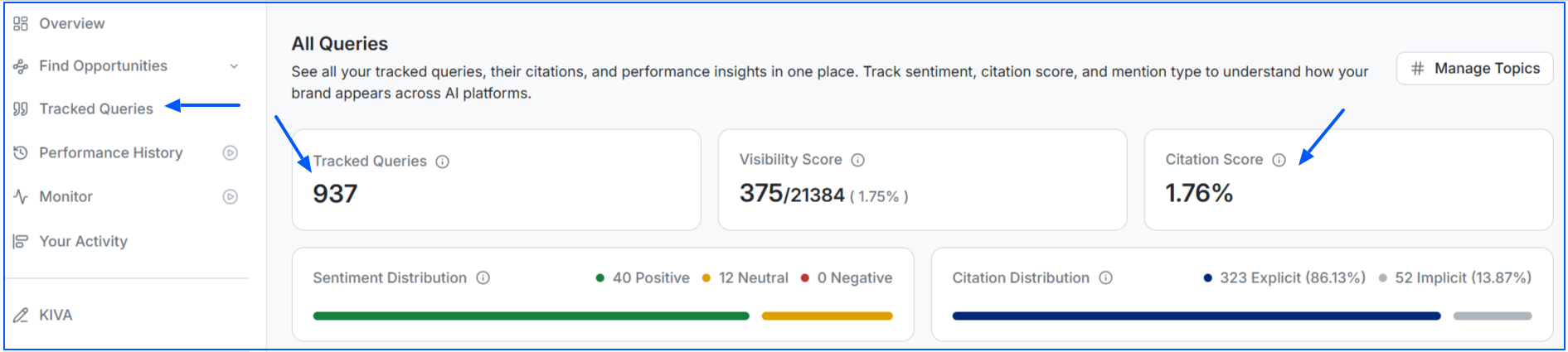

Wellows provides a Visibility Score that shows how often your brand appears in AI answers, and a Citation Score that reflects how often your brand is cited as a source by LLMs.

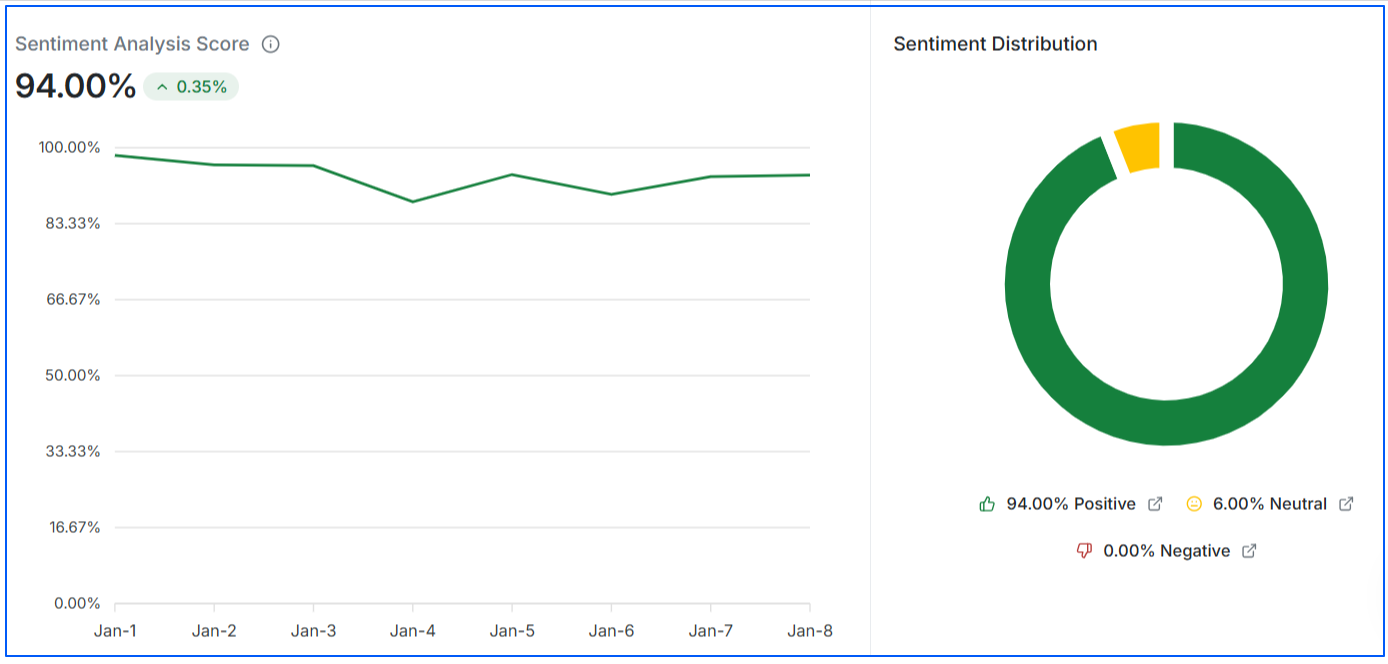

To understand how your brand is represented, you can also review sentiment analysis, which indicates whether AI platforms describe your brand in a positive, neutral, or negative context.

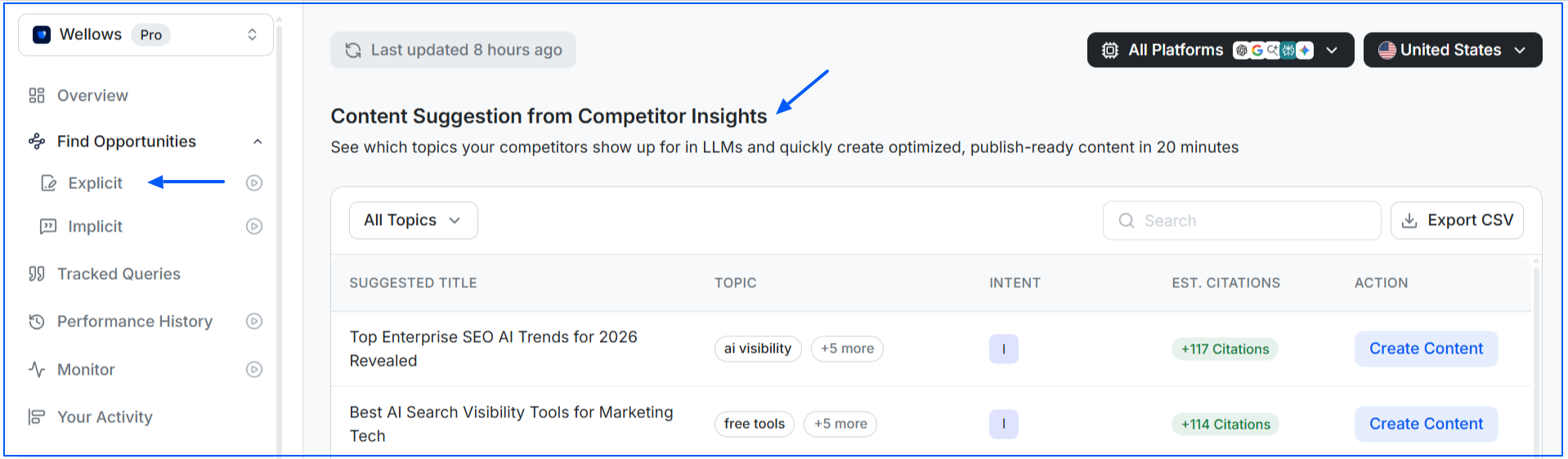

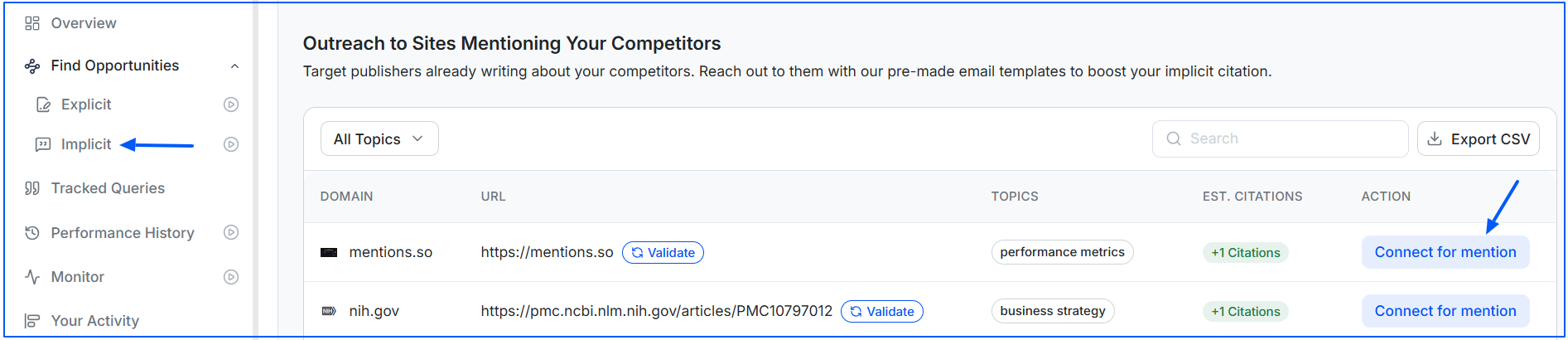

2. Find Implicit and Explicit Opportunities

This feature helps you understand whether your brand is mentioned directly (explicit citations) or indirectly (implicit mentions) by AI platforms and also helps you identify content gaps.

Explicit opportunities highlight cases where AI engines cite competitors but not your brand, broken down by keyword, platform, and intent, so you know exactly what content to create or improve.

Implicit opportunities show where AI mentions your brand contextually without a clear citation. These signals help you strengthen content so the association becomes clear and citation-worthy.

3. Perform Competitor Citation Analysis

Wellows lets you track citation, visibility, and sentiment scores for competitors alongside your own brand.

Using the Tracked Queries view, you can select a competitor and explore the Topics section to identify content gaps they are covering that you are not.

You can go a step further by reviewing individual AI responses, which reveal exactly which competitor pages are cited. This helps you understand what type of content AI systems prefer and what you need to outperform.

4. Outreach for Missed Mentions

Wellows helps identify verified publisher and author contacts connected to missed or unlinked mentions surfaced in AI responses.

For each opportunity, it provides ready-to-use outreach emails so you can quickly request proper attribution or updated citations without starting from scratch.

This makes it easier to turn existing references into citations that strengthen brand credibility.

5. Analyze Performance History

As you work on improving your citation presence, Wellows allows you to track changes in your Citation Score over time.

You can compare performance on a daily, weekly, or monthly basis and monitor the same metrics for competitors, making it easier to use LLM citation tracking to validate whether your content efforts are working.

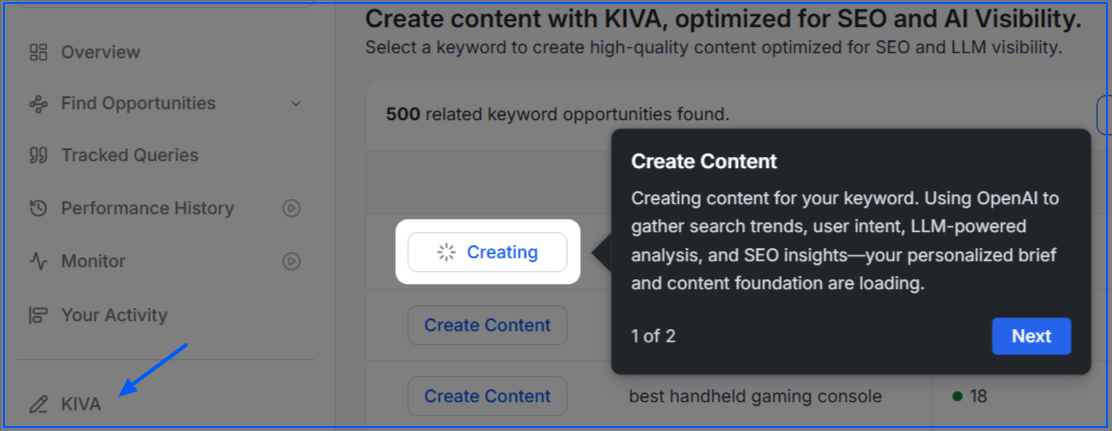

6. Move From Insight to Execution

To help you act faster, Wellows includes content briefs, content scoring, and a content creation workflow.

It is designed to turn citation insights into publish-ready content aligned with AI visibility signals.

How to Track LLM Citations Using Wellows?

Once you start optimizing for LLM citations, you need a way to track whether your efforts are driving results. A ChatGPT Visibility Tracker helps you see how often your brand appears in AI answers over time, and Wellows makes it easy to compare and monitor how your citation presence changes across platforms without manually testing hundreds of prompts.

To track LLM citations in Wellows, follow these simple steps:

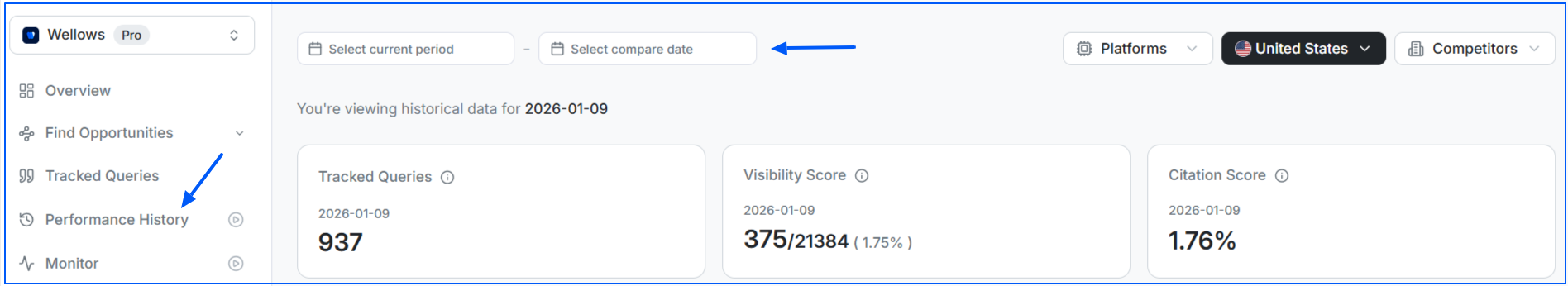

- In the Tracked Queries option, you can find the total number of queries you are tracking on Wellows for your website and your citation score against those queries.

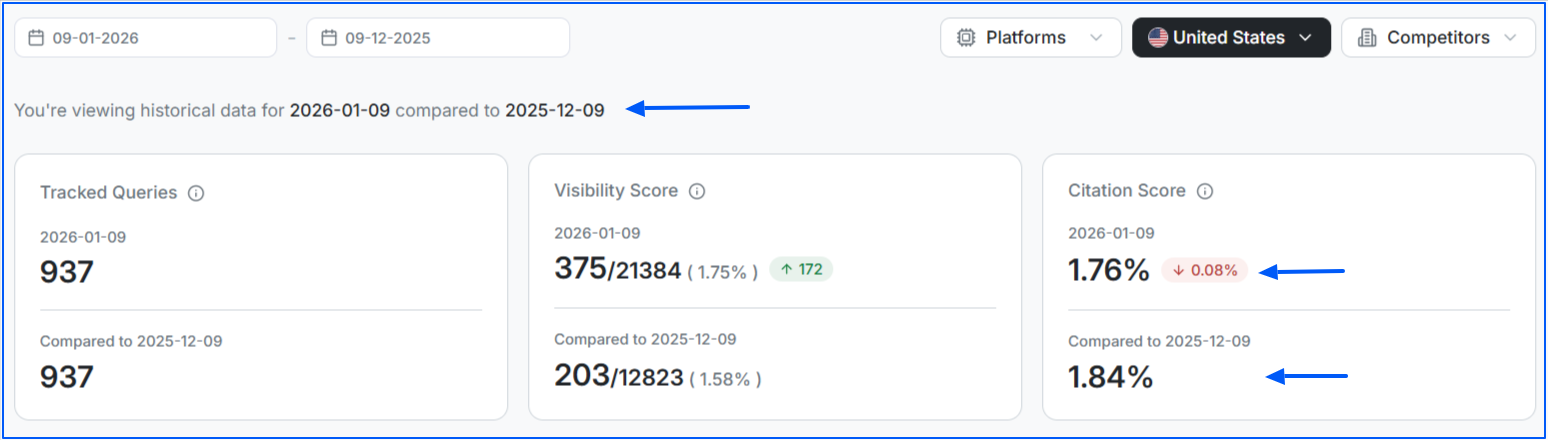

- To see exactly whether your citation score is improving over time, go to the Performance History option. Choose the date range for which you want to compare the data.

- Wellows will then show the citation score for your selected date range, along with the percentage by which it has increased or decreased.

- If you observe that your citation score is decreasing, update your content with fresh insights, data, case studies, etc.
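If you export scores and want to sanity-check a trend yourself, the percentage movement between two periods is a simple relative change. The article does not document how Wellows computes its own figure, so the function below is a generic sketch with hypothetical names and made-up scores.

```python
def percent_change(previous, current):
    """Relative change from previous to current, in percent.

    Returns None when the previous score is 0, because the change is undefined.
    """
    if previous == 0:
        return None
    return (current - previous) * 100 / previous


# Hypothetical citation scores for two comparison periods.
print(percent_change(40, 46))  # prints 15.0
print(percent_change(50, 45))  # prints -10.0
```

A negative value is the signal described above: time to refresh the affected pages with new data or case studies.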

Which AI Citation Types Drive Maximum Visibility?

Not all citations in LLMs deliver the same impact. Some place your brand front and center, while others quietly reinforce trust in the background. Yet, each one plays a vital role if you want language models to notice and remember you.

Here are the types of AI citations that actively move the needle:

1. Name-Drop Mentions Drive Brand Visibility

When AI directly mentions your brand or product in its response, such as in a recommendation or a “best of” list, you instantly gain visibility. Users see your name without needing to scroll, making this the most direct form of exposure.

2. Source References Build Credibility Signals

Think of this as the “works cited” section in AI outputs. Gemini, Perplexity, or Google AI Overviews may include your URL at the bottom or behind a dropdown. While you may not appear in the main response, you still earn credibility by being part of the trust framework.

3. Quoted Passages Establish Expert Authority

When AI lifts your words word-for-word and attributes them to you, it establishes you as the expert. This type of citation places you in high-authority real estate, signaling not just recognition but leadership in your niche.

4. Synthesized Mentions Shape Brand Narrative

At times, AI blends your insights into its own language without naming your brand or linking back. Even though it’s harder to trace, your content still shapes the narrative, reinforcing brand recall and positioning you as part of the bigger conversation.

Each type serves a different purpose, but together they transform visibility and strengthen your generative engine optimization strategy.

Can I Compare LLM Citation Behavior Across Different Models for the Same Query?

Yes, you can compare LLM citation behavior across different models (ChatGPT vs Gemini vs Claude) for the same query, which is where approaches similar to a Perplexity Visibility Tracker become useful for identifying how sources shift between engines.

If you manually run the same query across ChatGPT, Gemini, and Claude, you can compare three things: whether they retrieve from the web, which sources they cite, and how consistently they mention your brand.

Just keep in mind results can change based on model settings (web browsing on/off), location, timing, and prompt phrasing, and some models may not show citations for every answer even when they used external information.

For a cleaner comparison of citations in LLMs, use the same exact prompt, run multiple tests over a few days, and track the cited domains and whether your page appears.
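The manual comparison above can be summarized with two simple numbers: how often your domain appears per model, and how much the models' cited domains overlap. This is a minimal sketch with made-up data; `runs`, the `.example` domains, and the function names are hypothetical, and Jaccard overlap is just one reasonable similarity measure, not something the platforms themselves report.

```python
from itertools import combinations

# Hypothetical results: for each model, the set of cited domains in each repeated run.
runs = {
    "ChatGPT": [
        {"yourbrand.example", "a.example"},
        {"a.example"},
        {"yourbrand.example", "b.example"},
    ],
    "Gemini": [
        {"a.example", "c.example"},
        {"a.example"},
        {"a.example", "c.example"},
    ],
    "Claude": [set(), {"yourbrand.example"}, set()],
}


def appearance_rate(model_runs, domain):
    """Share of runs in which the domain was cited."""
    return sum(domain in cited for cited in model_runs) / len(model_runs)


def all_domains(model_runs):
    """Every domain the model cited in at least one run."""
    return set().union(*model_runs)


def jaccard(a, b):
    """Overlap of two sets: size of intersection divided by size of union."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


for model, model_runs in runs.items():
    rate = appearance_rate(model_runs, "yourbrand.example")
    print(f"{model}: cited in {rate:.0%} of runs")

for left, right in combinations(runs, 2):
    overlap = jaccard(all_domains(runs[left]), all_domains(runs[right]))
    print(f"{left} vs {right}: {overlap:.2f} overlap")
```

An empty set records a run where the model showed no citations at all, which matters because some models do not cite sources for every answer.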

Instead of manually testing ChatGPT, Gemini, Perplexity, etc. one by one, Wellows shows how each model cites sources, mentions brands, and changes over time.

This allows you to compare which platforms surface your content, which favor competitors, and where citation patterns differ for the same question, all from a single view. Checking the source links behind each citation helps you understand how to optimize your content.

How AI Citations Transform Brand Narrative in LLMs?

AI citations transform a brand’s narrative in LLMs by deciding whether the brand appears at all and how it is framed inside the answer, not just where its page ranks.

In traditional search, backlinks helped a page earn visibility by passing authority between sites. In LLM-driven experiences, citations work differently. LLM citations control source inclusion, context, and credibility inside AI-generated responses.

Before people Google, they prompt. From ChatGPT to Gemini to Perplexity, AI tools now shape buying decisions, brand impressions, and user behavior. If your brand doesn’t appear in these answers, you’re absent from the conversation.

You don’t need a link to earn trust anymore. When AI repeatedly includes your brand in answers, users start to see you as credible. That recognition lasts even without a click. Traditionally, the role of backlinks in digital marketing was to boost rankings and referral traffic. By contrast, LLM citations shape perception directly inside generative engines.

While most content focuses on conversion, citations in LLMs insert your brand earlier at the discovery stage. You’re not just a product; you’re part of the answer itself.

Users no longer search with keywords alone; they ask questions. The content that gets cited in LLMs is structured, clear, and designed to answer those prompts directly.

If you notice your competitors in LLM citations, it’s no accident. They’ve optimized for it, often through structured workflows where agencies deliver AI search visibility using citations, mentions, and prompt-aligned content. LLM citations are seeded strategically, and the brands doing it today are the ones users will remember tomorrow.

You could build millions of links and still stay invisible in generative answers without PR for generative engine visibility that earns the brand mentions LLMs actually cite. AI tools prioritize clarity, structure, and topical relevance over sheer link volume. They summarize; they don’t rank.

Without regular updates, even clear and well-structured content can fade from AI answers — a pattern known as content decay, where relevance signals weaken over time despite strong backlinks.

What Types of Content are Most Likely to be Cited by LLMs as Authoritative Sources?

LLMs don’t cite content randomly. They favor formats that are clear, factual, structured, and easy to reuse when answering specific questions.

Certain content types consistently perform better because they reduce ambiguity and provide information in a way AI systems can reliably extract.

Here are the content types that help you get cited in LLMs and why they work.

Content based on original data, surveys, benchmarks, or large datasets is highly citable. LLMs prefer primary sources because they offer information that cannot be easily replicated elsewhere.

Examples include industry benchmarks, annual reports, usage statistics, or proprietary research pages. These pages often become default references for numeric or comparative questions.

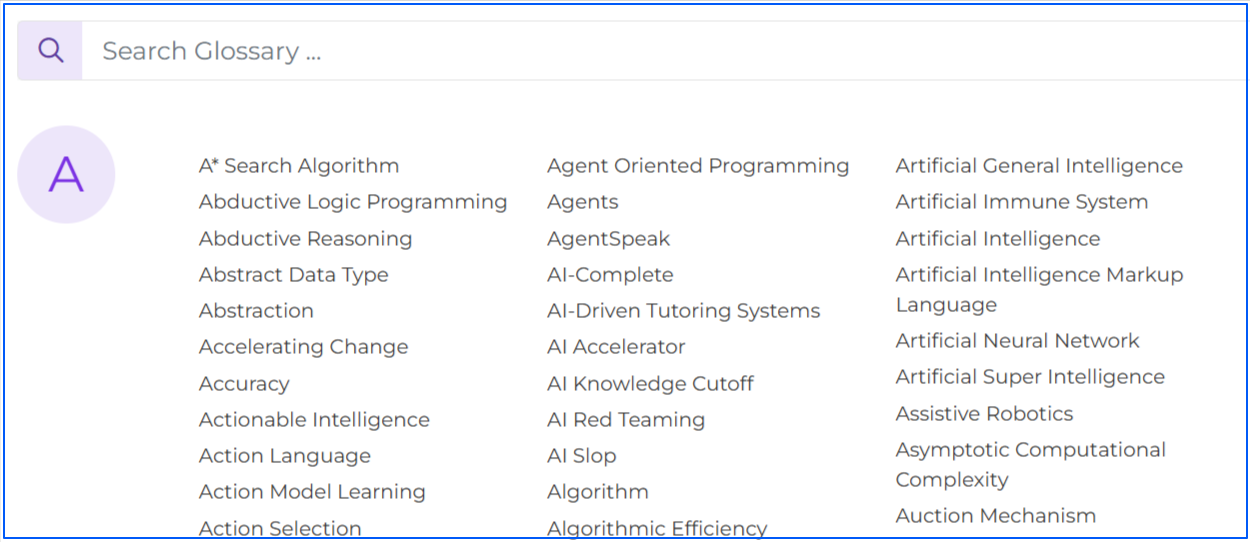

Glossary-style content works well because it provides concise, unambiguous explanations of specific terms. LLMs often cite these pages when users ask “what is” or “define” type questions.

Well-written glossary pages typically focus on one concept per page, use direct language, and avoid unnecessary context. Here is an example list of glossary blogs on a website that writes about AI:

Structured guides that explain how something works or how to do something are frequently cited, especially when they follow a logical sequence.

Numbered steps, clear headings, and short explanatory sections make this content easy for LLMs to reuse in instructional answers. Here is an example of covering how-to topics:

LLMs often surface comparison content when users are deciding between options. Pages that objectively compare tools, products, approaches, or methodologies are useful because they organize information side by side.

Tables, feature breakdowns, and clear criteria improve citation likelihood. Below is an example of comparison topics:

FAQ sections align closely with how users ask questions and how LLMs generate answers. Each question-and-answer pair acts as a self-contained unit that AI systems can reference independently.

This format works particularly well for support, pricing, policies, and product usage topics. Here is an example of adding FAQs in your blogs:

Pages that serve as long-term reference material tend to earn repeated citations over time. These include foundational guides, explainer pages, and resource hubs that stay relevant even as details evolve.

Keeping these pages updated helps maintain citation visibility as AI systems prioritize accuracy and freshness.

Here is an example of evergreen resources (something users can always refer back to):

What tone of voice do LLMs reward in citations? LLMs tend to reward a clear, neutral, and authoritative tone when selecting content to cite. Content that is factual, direct, and confident, without hype or opinionated language, is easier for models to trust and reuse.

Explanatory writing that prioritizes accuracy over persuasion is more likely to be cited than content that sounds promotional or speculative.

Takeaway: LLMs prefer content that is factual, structured, and reusable. When information is easy to extract, verify, and present clearly, it becomes far more likely to be cited in AI-generated answers.

What is the Role of Expert Quotes in Earning LLM Citations?

Citations aren’t just about structure; they’re also about trust. Large Language Models like ChatGPT and Gemini tend to favor content that reflects authority and credibility. One of the most effective ways to signal both is by incorporating expert quotes into your content.

Enhancing Credibility and Authority

Expert insights add depth to your content and demonstrate that it’s informed by real-world practitioners. LLMs prioritize authoritative sources, and featuring credible voices increases the chance of being cited.

Providing Unique and Verifiable Information

Direct quotes give LLMs specific, attributable snippets they can reuse in answers. This makes your content more valuable, verifiable, and citable.

Improving Structure and Clarity

Well-placed expert commentary breaks up content, adds readability, and reinforces key points. Clear, structured insights are more easily parsed by AI systems.

Aligning with E-E-A-T Principles

Google’s Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) framework is echoed in generative engines. Featuring credible experts strengthens these signals, improving both SEO and GEO performance.

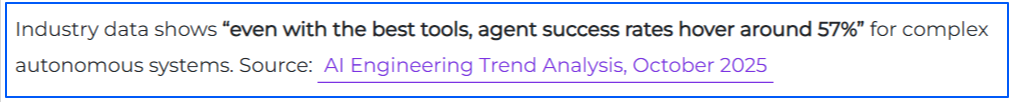

Content that integrates expert quotes has a higher chance of being cited in AI answers because it provides verifiable, quotable insights that models can confidently surface. Here is an example of how you can add an expert quote or industry data in your blogs:

How does quote attribution in news articles impact LLM mentions? Quote attribution in news articles helps LLMs associate statements with a specific brand or expert, increasing the chance of consistent mentions in AI-generated answers.

When quotes are clearly attributed and repeated across sources, LLMs are more likely to recognize the speaker as an authoritative voice on that topic.

What LLMs Look for When Citing Sources?

When you ask an AI assistant a question, it expands the prompt into multiple related queries and uses retrieval-augmented generation (RAG) to fetch relevant content. Citations are selected from this retrieval pool based on a few consistent signals.

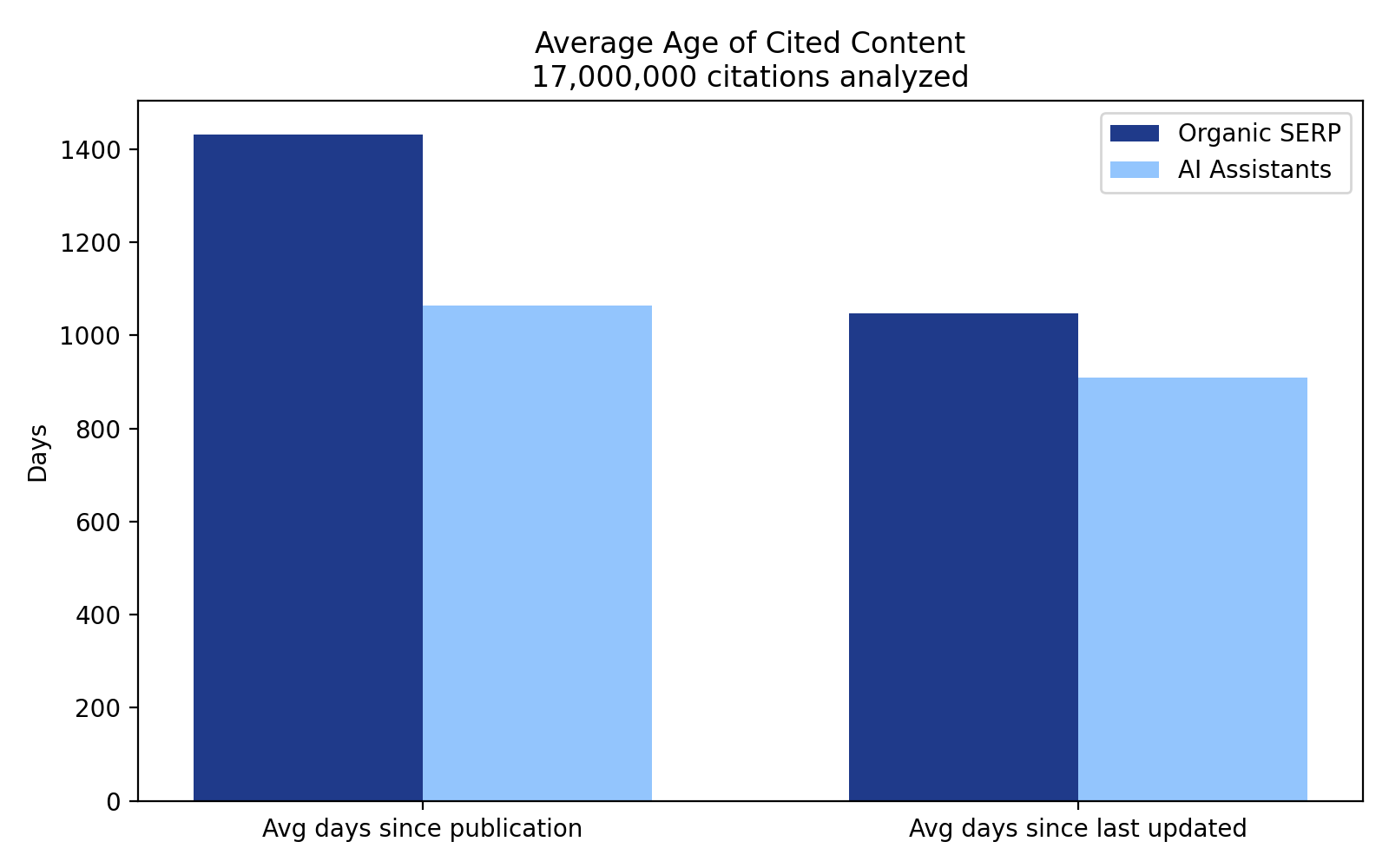

Freshness is heavily weighted in AI platform citation patterns. An Ahrefs analysis shows that LLMs favor recently published or regularly updated pages, especially for time-sensitive topics, reflecting a clear recency bias in source selection.

Domain authority matters indirectly. AI systems tend to cite high-authority domains, not because they measure authority directly, but because those sites rank well for the expanded queries used during retrieval.

Semantic relevance is critical. Content is cited when it closely matches the specific intent of the query, often containing passages that directly answer the question being asked.

Structured, extractable formatting helps. Pages with clear structure, consistent context, and clean formatting — reinforced by strong on-page SEO foundations — are easier for LLMs to interpret and extract, increasing the likelihood they are cited.

What are the factors for URL citation in LLMs? High topical relevance, clear entity alignment, factual clarity, and strong source authority matter most. Structured content, up-to-date information, and consistent mentions across trusted sites also increase the chance of being cited.

Do Unlinked Mentions Impact AI Citations or Just Brand Recall?

Unlinked mentions, where your brand is referenced without a hyperlink, are often overlooked in traditional SEO. In AI search, however, the difference between brand mentions and citations can decide whether you get surfaced even without a backlink. In the era of GenAI visibility, their impact is twofold: shaping how AI engines cite brands and how audiences remember them.

Impact on AI Citations

Large language models (LLMs) like ChatGPT, Claude, and Gemini synthesize data from across the web, not just from clickable backlinks. This means unlinked mentions can still signal authority and relevance.

If your brand is consistently discussed, even without a hyperlink, it increases the likelihood that an AI will surface your name in responses. Research has shown that what matters most is how often your brand is talked about and the context of those mentions, underscoring their role in AI visibility.

Impact on Brand Recall

Beyond AI systems, unlinked mentions boost brand awareness among human audiences. Exposure to your name across articles, forums, or social feeds, even without a direct link, increases the chance that users will search for you directly.

It works much like traditional advertising: repeated exposure builds familiarity and trust over time, which can translate into direct traffic and long-term recognition.

Why Unlinked Mentions Matter

- Contribute to brand authority signals picked up by LLMs

- Strengthen AI citation potential across ChatGPT, Claude, Gemini

- Increase direct brand searches and organic recall

- Build familiarity even without SEO link equity

Limitations of Unlinked Mentions

- Don’t pass PageRank or SEO value like backlinks

- Harder to measure and track compared to traditional links

- May require complementary strategies (backlinks, structured content) to maximize impact

Bottom line: While unlinked mentions won’t replace backlinks, they are now an essential signal for both AI-driven citations and human brand recall. In the GEO era, being talked about, linked or not, can make the difference between being visible in AI answers and being invisible when it matters most.

Why Citations Matter for Your Brand?

LLM citations matter because they put your brand inside the answer, at the exact moment someone is researching a problem you solve. Instead of competing for a click, you’re competing to be the source an AI system trusts enough to reference.

They also create measurable, attributable traffic, even if volumes are still small. In practice, LLM-referred visits represent a small share of total traffic, but those visitors tend to arrive with higher intent, having already consumed an AI-generated summary and clicked through for deeper context.

What’s the impact of LLM citations on brand trust? Citations build authority and brand recognition in a new discovery channel.

A citation acts like a public trust signal: the model is effectively saying your page is reliable enough to support its response, even when users never click through. Over time, repeated citations can turn your brand into the “default” source LLMs reach for in your niche.

How do Large Language Models like ChatGPT and Gemini Decide Which Sources to Include in their Citations?

LLM citations usually appear when an AI assistant retrieves information from the live web, rather than relying only on what it learned during training.

This happens most often through retrieval-augmented generation (RAG), where the model searches for relevant pages and selects sources to support its answer.

If you are wondering how LLM citations work, the short answer is that LLMs rely on two different knowledge paths:

Training data refers to built-in knowledge learned before the model was released. This knowledge helps shape general answers, but it rarely produces clickable citations because no live sources are being fetched.

Web retrieval (RAG) is used when accuracy or freshness matters. In this mode, the model actively searches the web, evaluates relevant pages, and may include some of them as citations in its response.

LLMs are more likely to trigger web retrieval, and therefore show citations, when a question requires:

- Fresh information, such as news, current pricing, or recent changes

- Niche or emerging topics not well covered in training data

- Specific statistics or numbers that need verification

- High-stakes topics like health, finance, or legal guidance

- Explicit instructions such as “search the web” or “what’s the latest”

Once retrieval is triggered, models decide which sources to include based on relevance, clarity, credibility, and alignment with the question intent. Pages that clearly answer the query, present structured information, and come from trusted domains are more likely to be selected.

Citation results can still vary, even for the same question. LLM outputs are probabilistic, meaning factors like phrasing, timing, model updates, location, and generation settings can influence which sources are retrieved and displayed.

Can we track LLM content citations the way we track backlinks? Not directly. Most LLMs do not expose backlink-style attribution. You can infer visibility using referral traffic, brand mentions, and AI answer monitoring tools, but there is no native citation report like Google Search Console.

What is the Difference between Traditional Backlinks in SEO and LLM Citations in AI-generated Answers?

Traditional backlinks help search engines rank pages by signaling authority and relevance, while LLM SEO focuses on whether a brand or page is referenced directly inside AI-generated answers.

Brand citations do not replace backlinks. Backlinks influence where a page appears in search results, but LLM citations influence whether the answer itself includes your content as a source.

Here are the key differences between LLM citations and backlinks:

| Aspect | Backlinks | LLM Citations |
|---|---|---|
| What They Are | Hyperlinks from one website to another, long used as a ranking factor. | Mentions or references in content that AI models read, with or without a link. |
| Visibility | Mostly hidden to users unless they check the source code or anchor. | Front-facing, often shown as a visible source in AI-generated answers. |
| Trust Impact | Influences authority indirectly via higher rankings. | Directly signals credibility by being cited in answers or summaries. |
| Selection Factors | Depends on domain authority, anchor text, and relevance. | Focuses on clarity, structure, originality, and usefulness of content. |
| Examples | A food blog links to your recipe page in a roundup post. | Google AI Overviews or ChatGPT referencing your brand in an answer. |
| SEO Focus | Earn quality links from reputable sites to improve ranking. | Create content LLMs can easily understand, summarize, and cite. |
| Effect | Improves rankings and organic traffic over time. | Drives brand recall and visibility directly in AI search responses. |

How do backlinks influence AI-generated citations? Backlinks influence AI-generated citations by serving as a core trust and authority signal for the underlying search algorithms and language models.

Traditional SEO alone doesn’t guarantee ChatGPT visibility. While AI models may not count links directly in real time, strong backlink profiles increase a website’s organic search rankings, making that content more likely to be discovered, trusted, and cited by AI systems.

Is There a Way for Website Owners to Opt Out of Being Used as Sources or Citations by LLMs?

There isn’t a single, universal way for website owners to opt out of being used as sources or citations by large language models.

How and whether a page is used depends on how the model accesses information, whether through live web retrieval, traditional search infrastructure, or pre-existing training data. As a result, opt-out options are fragmented and often come with trade-offs.

- For search-based AI systems (including AI Overviews): the most reliable control is the same one used for traditional search. Blocking crawling or indexing through robots.txt, noindex tags, paywalls, or login requirements reduces the chance your pages are retrieved and cited. This also removes or limits your visibility in standard search results.

- For AI-specific crawlers: some model providers operate their own crawlers and honor user-agent rules in robots.txt. Blocking these crawlers can prevent live retrieval, though coverage varies by platform.

- For training data usage: opting out is less straightforward. Many LLM citations come from live retrieval rather than training data, and training opt-out mechanisms differ by provider, if they exist at all.
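To see how per-crawler rules behave before you deploy them, you can test a draft robots.txt locally with Python's standard `urllib.robotparser`. The sketch below uses `GPTBot`, a user-agent token documented by OpenAI, as one example. Tokens differ by provider and by purpose (training versus live retrieval), so check each provider's documentation before relying on any name; the URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Draft rules: block one AI-specific crawler, leave everything else open.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

page = "https://www.example.com/pricing"  # placeholder URL
for agent in ("GPTBot", "Googlebot"):
    verdict = "allowed" if parser.can_fetch(agent, page) else "blocked"
    print(f"{agent}: {verdict}")
# prints:
# GPTBot: blocked
# Googlebot: allowed
```

This only shows what the rules say. Whether a given crawler honors them is up to the provider, which is why coverage varies by platform.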

Practical takeaway: Avoiding LLM citations usually means limiting public access or crawlability, but there’s no guaranteed, platform-wide opt-out without also reducing visibility in traditional search.

FAQs

Citations place your content directly inside AI-generated answers, signaling trust and authority. They influence visibility at the answer level, not just rankings, where users increasingly get information.

No. Citations don’t replace backlinks, but they complement them. Backlinks influence discoverability and authority, while citations influence whether your content is referenced inside AI answers.

Backlink freshness is not directly related to LLM citation frequency. LLMs prioritize content relevance, clarity, and recency over link freshness, though fresh backlinks can help improve visibility in retrieval systems that feed AI models.

Yes, pull quotes increase LLM citations when they clearly summarize key facts or insights. Well-attributed, standalone quotes make it easier for LLMs to extract and reuse specific statements.

Yes. LLMs can cite data studies even without backlinks if the information is clear, original, and verifiable. Primary data is often referenced regardless of link structure.

Yes, site authority helps you get cited in LLMs, but it’s not the only factor. Trusted domains are more likely to be retrieved, but clear, well-structured pages can still earn citations even from less established sites.

URLs with clear answers, structured formatting, original information, and strong topical focus are easier for LLMs to extract and cite during retrieval.

Internal linking helps AI systems discover and understand page relationships. Strong internal links improve crawlability and context, increasing the chance a page is retrieved and cited.

To improve your chances of being cited by ChatGPT, keep your content consistent and reinforce trust signals. ChatGPT favors sites with accurate data, credible sourcing, and a clear, professional writing style.

Final Thoughts

LLM citations are quickly becoming a key signal of visibility and trust in AI-driven search. Being cited places your content inside the answer itself, while mentions mainly support awareness.

As discovery shifts from rankings to responses, brands that focus on clarity, credibility, and measurable citation visibility will be better positioned to stay discoverable in the age of AI.