AI search is changing what “visibility” means. In classic SEO, success is often measured by rankings, clicks, and traffic. In AI-driven search experiences, visibility is increasingly tied to whether your brand is mentioned, cited, or recommended inside generated answers—often before the user ever clicks a website.

Critical Context: AI Overviews now appear in 15.69% of all Google queries as of November 2025, with commercial query coverage growing from 8.15% to 18.57% in just one year (Semrush AI Overviews Study, December 2025). That makes AI competitive benchmarking for SEO a necessary discipline for teams that want to understand where they stand, how competitors are winning, and what visibility gaps are costing them.

This guide focuses on measurement and benchmarking, not generic optimization advice.

You’ll learn what AI competitive benchmarking is, which visibility signals matter most, how to capture reliable data across platforms, and how to turn benchmarking insights into clear content decisions—without overlapping with traditional competitor analysis posts.

- AI competitive benchmarking measures brand presence inside AI-generated answers, not SERP rankings.

- Boost loop: Benchmark → find missing prompts → update the best page → re-test until CFR/CSOV improve.

- Citation frequency, share of voice, and visibility quality capture AI visibility more directly than traffic-based metrics.

- AI visibility data reveals missed demand, emerging competitors, and authority gaps earlier than traditional SEO tools.

- Benchmarking enables smarter prioritization across content, PR, and optimization efforts.

- Ongoing measurement protects against sudden visibility loss caused by AI model or data shifts.

What Is AI Competitive Benchmarking (and What It Is Not)

AI competitive benchmarking is the process of measuring and comparing how brands appear in AI-generated responses across generative search systems. Instead of tracking URLs and rankings, it evaluates citations, mentions, and contextual placement inside AI answers.

AI competitive benchmarking boosts visibility by turning AI mentions into a measurable system: measure your current presence, identify “missing prompt” gaps where competitors appear and you don’t, update the strongest page to close those gaps, then re-test on the same prompt set.

To keep that loop repeatable, teams typically operationalize it with an AI visibility reporting checklist for agencies so the same prompt library, scoring, and deltas are tracked every cycle.

Over time, this loop lifts citation frequency and share of voice because AI systems repeatedly select clearer, better-supported sources.

AI competitive benchmarking is:

- Measuring how often your brand is cited by AI systems

- Comparing your visibility against competitors for the same queries

- Evaluating the quality, context, and sentiment of AI mentions

It is not:

- Traditional SEO rank tracking

- Page-level optimization audits

- Traffic or click forecasting

This distinction matters because AI systems increasingly answer questions directly, reducing the role of clicks while amplifying the importance of trusted sources.

Limitations of Traditional SEO Metrics in the AI Era

Traditional SEO metrics still matter, but they don’t fully explain performance in AI-driven discovery. Rankings can stay stable while your brand disappears from AI-generated answers. Traffic can also fall even when content improves, because AI experiences often satisfy intent without a click.

- Rankings don’t reflect AI mentions/citations

- Traffic undercounts “seen but not clicked” impact

- Keyword reports don’t capture entity/trust signals: AI systems weigh credibility, depth, and authority more than exact-match keywords

Zero-click reality: About 60% of searches end without a click (SEO.com, 2025), and 80% of consumers use AI summaries for at least 40% of searches (Semrush, 2025). This shifts value from “who ranked” to “who was referenced.”

Real-World Business Impact of AI Visibility

Search engine visibility affects how quickly people discover your brand, how much they trust it, and whether they consider it during decision-making. When AI systems mention or cite a brand in response to a high-intent query, that brand gains “default authority” in the user’s mind. Even without a click, the recommendation can influence brand recall, shortlist decisions, and eventual conversion paths.

Economic Impact: AI-driven SEO tools have been shown to boost organic traffic by up to 45% and increase ecommerce conversions by 38% (DemandSage, 2025). Additionally, 65% of businesses reported better SEO results after AI tool adoption (DemandSage, 2025).

Benchmarking AI visibility also improves strategic efficiency. Instead of spreading effort across dozens of updates, teams can identify where they’re losing visibility to competitors on valuable topics and focus resources accordingly.

It also reduces risk: when visibility drops suddenly, benchmarking helps confirm whether the cause is competitor movement, content freshness issues, or platform volatility—so teams respond with targeted action instead of guesswork.

Key Metrics for AI Competitive Benchmarking

The section above showed why traditional SEO metrics don’t fully capture AI-driven discovery. The metrics below fix that gap by measuring what actually matters in generative search: whether your brand is cited, how often you’re included vs competitors, and how your mention is framed inside the answer.

| Metric | What it measures | Formula | Why it matters |
|---|---|---|---|
| Citation Frequency Rate (CFR) | Percentage of AI queries where your brand appears in the answer | (Brand citations ÷ Total AI queries tested) × 100 | Shows baseline AI visibility even when rankings and traffic don’t change |
| Response Position Index (RPI) | How prominently your brand appears within AI-generated answers | Σ(position score per query) ÷ total queries (e.g., 10 pts first mention → 1 pt last) | Distinguishes top recommendations from low-impact mentions |
| Competitive Share of Voice (CSOV) | Your share of total brand mentions across you and competitors | Your mentions ÷ (Your mentions + competitor mentions) × 100 | Reveals who AI systems consistently prefer inside answers |
| AI Visibility Score | Composite score summarizing overall AI search presence | Weighted index of CFR, CSOV, RPI, and mention quality | Provides a single KPI to track competitive progress over time |
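The three formula-based metrics in the table can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the brand names and prompts are made up, and the RPI scoring rule (10 points for the first mention, one point less per later slot, 0 when absent) is an assumption based on the table's example.

```python
from collections import Counter

# Hypothetical benchmark results: for each tested prompt, the brands named
# in the AI answer, in order of first mention. All names are illustrative.
answers = {
    "best ai visibility tools": ["RivalOne", "OurBrand", "RivalTwo"],
    "how to track ai citations": ["OurBrand"],
    "seo automation platforms": ["RivalTwo", "RivalOne"],
    "free ai seo tools": ["RivalOne"],
}


def citation_frequency_rate(answers, brand):
    """CFR = (prompts where the brand appears / total prompts tested) * 100."""
    hits = sum(1 for brands in answers.values() if brand in brands)
    return 100 * hits / len(answers)


def share_of_voice(answers, brand):
    """CSOV = brand mentions / (brand + competitor mentions) * 100."""
    counts = Counter(b for brands in answers.values() for b in brands)
    total = sum(counts.values())
    return 100 * counts[brand] / total if total else 0.0


def response_position_index(answers, brand, max_points=10):
    """RPI = mean position score across all prompts.

    Assumed scoring: first mention earns max_points, each later slot earns
    one point less (never below 1), and an absent brand scores 0.
    """
    scores = []
    for brands in answers.values():
        if brand in brands:
            scores.append(max(max_points - brands.index(brand), 1))
        else:
            scores.append(0)
    return sum(scores) / len(scores)


cfr = citation_frequency_rate(answers, "OurBrand")
csov = share_of_voice(answers, "OurBrand")
rpi = response_position_index(answers, "OurBrand")
print(f"CFR={cfr:.1f}%  CSOV={csov:.1f}%  RPI={rpi:.2f}")
```

With this sample data, the brand appears in two of four prompts (CFR 50%), owns two of seven total mentions (CSOV about 28.6%), and averages 4.75 position points per prompt. A composite AI Visibility Score would need weights your team chooses, so it is left out here.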

How Wellows Makes These Metrics Actionable

Once these metrics are defined, the next step is turning them into clear competitive insight. That’s where Wellows helps: it visualizes citation performance by topic and competitor so teams can see where they’re winning, where they’re missing, and which rivals are setting the citation standard.

- Brand vs Competitors by Topic: A topic-level view of share of voice. It shows how your citation presence compares across query clusters (e.g., AI visibility, SEO automation, free tools), making gaps obvious at a glance.

- Citation Volume by Competitor: A competitor-level view of citation volume across the tracked query set. It surfaces which domains AI systems cite most often and where your brand is underrepresented.

In practice, this turns benchmarking from a static report into a decision tool: identify the topic clusters where competitors dominate, then prioritize updates or new content where citation gaps are largest and business value is highest.

What Types of AI Visibility Data You Need for Competitive Benchmarking

AI competitive benchmarking only works when the data you collect is structured, repeatable, and comparable across brands. Because AI answers can shift by platform, phrasing, and time, the goal isn’t to capture one “perfect” response—it’s to collect the right data types so patterns are measurable.

- 1. Brand Mentions & Citation Presence:

The foundational data point is whether—and how often—your brand appears inside AI-generated answers. This includes direct citations (linked sources), named mentions, and implied references. Benchmarking matters because it measures presence against competitors for the same prompts, which shows where AI systems choose your brand versus alternatives—not general brand awareness.

- 2. Citation Context & Placement:

Visibility isn’t just “in or out.” You need data on how your brand appears: as a primary recommendation, a supporting reference, or a passing mention. Context also matters—whether you’re framed as authoritative, neutral, optional, or an alternative. This is the difference between being the default answer and being listed as one of many.

- 3. Share of Voice Across AI Responses:

Share of voice captures how much visibility your brand owns relative to competitors across the tested query set. It’s a comparative signal: dominance, parity, or underexposure. This data is what turns benchmarking into competitive insight, because it shows who AI systems consistently prefer when multiple brands could plausibly be included.

- 4. Query-Level Visibility Patterns:

AI visibility data has to be tied to query types—informational, commercial, branded, and problem-based—because performance often varies by intent. When visibility is mapped at the query level, gaps become actionable: you can see where competitors win specific intents, which topic clusters you’re missing from, and where a visibility gap represents real demand loss.

- 5. Consistency & Volatility Signals:

Finally, competitive benchmarking requires tracking whether visibility is stable or fluctuating over time. AI systems change responses frequently, so decisions should be based on patterns across repeated runs, not one-off appearances. Volatility signals help teams separate noise from real competitive movement and catch early drops in visibility before they become performance losses.
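One way to keep these five data types structured and comparable is to store every captured answer as a fixed record. The sketch below is an assumed schema, not a standard: the field names and label vocabularies are illustrative and should follow your own measurement rules.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class Observation:
    """One brand's appearance (or absence) in one captured AI answer."""
    run_at: datetime   # capture time, used for consistency and volatility tracking
    platform: str      # e.g. "perplexity"
    prompt: str        # exact prompt text from the locked query set
    intent: str        # informational | commercial | branded | problem
    brand: str         # canonical brand name
    cited: bool        # linked as a source
    mentioned: bool    # named in the answer text
    placement: str     # primary | supporting | passing | absent
    framing: str       # recommended | neutral | alternative | n/a


# Illustrative record; every value here is made up.
obs = Observation(
    run_at=datetime(2025, 11, 3, 9, 0, tzinfo=timezone.utc),
    platform="perplexity",
    prompt="best ai visibility tools",
    intent="commercial",
    brand="OurBrand",
    cited=True,
    mentioned=True,
    placement="primary",
    framing="recommended",
)

row = asdict(obs)  # plain dict, ready to append to a CSV or table
print(row["platform"], row["placement"], row["framing"])
```

Recording absence explicitly (placement "absent") matters as much as recording presence, because share of voice and missing-prompt analysis both depend on knowing which prompts were tested without a mention.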

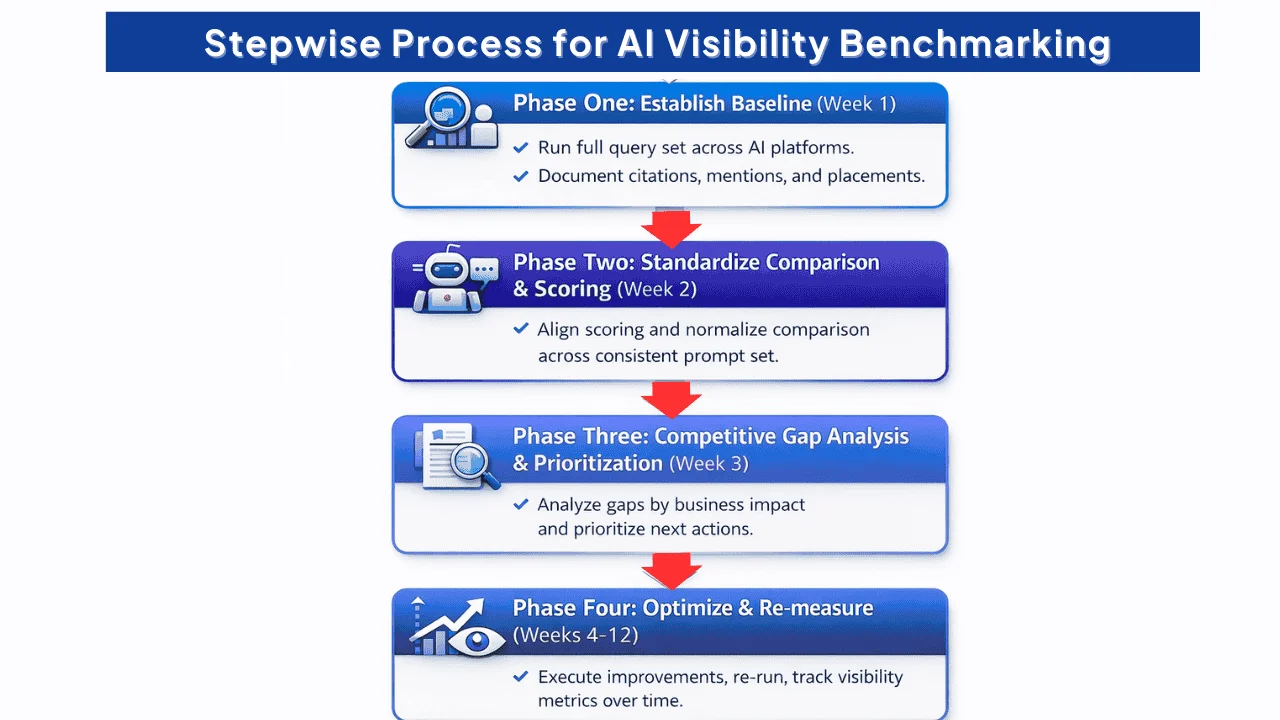

Stepwise Process for AI Visibility Benchmarking

A stepwise process keeps AI visibility benchmarking consistent and repeatable. Measure first, compare second, prioritize third, and only then optimize—so decisions reflect patterns over time, not one-off AI outputs.

Checklist: What to Do Before You Begin

- Lock the query set (prompt library): Build a stable list that reflects real demand across informational, comparison, and transactional intent. Keep prompts identical across runs so results remain comparable.

- Define the competitor set: Include direct competitors plus brand and product variants (abbreviations, common misspellings, and alternative names) so mentions aren’t missed during analysis.

- Set measurement rules: Standardize what counts as a citation vs. a mention, how you label placement (primary vs supporting), and how you tag context or sentiment.

- Decide your primary KPI: Choose one main benchmark metric (citation frequency rate, share of voice, or visibility quality) and define what “improvement” looks like before reporting starts.

- Document your capture method: Use the same collection approach every cycle (same geography, same timing window, same format), so shifts reflect reality—not inconsistent sampling.
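The competitor-set step above is easy to get wrong in practice, because a brand can appear under several spellings. A rough sketch of variant-aware mention detection follows; the brand names and aliases are hypothetical, and real answers may need fuzzier matching than a regular expression provides.

```python
import re

# Hypothetical competitor set: canonical name -> known variants.
BRAND_VARIANTS = {
    "OurBrand": ["OurBrand", "Our Brand", "OB Analytics"],
    "RivalOne": ["RivalOne", "Rival One", "Rival1"],
}


def compile_patterns(variants):
    """Build one case-insensitive, word-bounded regex per canonical brand."""
    patterns = {}
    for brand, names in variants.items():
        # Longest alias first so a longer variant wins over a shorter overlap.
        ordered = sorted(names, key=len, reverse=True)
        alternatives = "|".join(re.escape(name) for name in ordered)
        patterns[brand] = re.compile(
            rf"(?<!\w)(?:{alternatives})(?!\w)", re.IGNORECASE
        )
    return patterns


def brands_in_order(answer_text, patterns):
    """Return canonical brands found in the answer, ordered by first mention."""
    first_seen = {}
    for brand, pattern in patterns.items():
        match = pattern.search(answer_text)
        if match:
            first_seen[brand] = match.start()
    return sorted(first_seen, key=first_seen.get)


answer = "For most teams, rival one is a solid pick; Our Brand suits agencies."
found = brands_in_order(answer, compile_patterns(BRAND_VARIANTS))
print(found)
```

Mapping every variant back to one canonical name keeps counts comparable across runs, and ordering by first mention gives you the raw input for placement scoring.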

Phase One: Establish Baseline Visibility (Week 1)

Start by running your full query set across your priority AI platforms and recording citations, mentions, placement, and framing. As you capture results, note which competitors repeatedly appear alongside you or instead of you—this becomes your baseline citation frequency rate and baseline share of voice.

Phase Two: Standardize Comparison & Scoring (Week 2)

Compare brands across the same prompt set and normalize how results are scored. Track how often competitors appear as the primary source, how frequently they are recommended, and how consistently they show up across query clusters. This creates a scorecard-ready view you can report month over month.

Phase Three: Competitive Gap Analysis & Prioritization (Week 3)

Translate benchmarking into decisions. Identify query clusters where competitors dominate and your brand is absent, then prioritize content gaps by business value and effort. The output should be a short action list: what to refresh, expand, consolidate, or create.
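As a rough illustration of this phase, the sketch below finds prompts where competitors appear and your brand does not, then ranks them by a value-to-effort ratio. The data, the 1 to 5 scoring scale, and the ratio itself are assumptions; teams may weight value and effort differently.

```python
# Hypothetical inputs: brands appearing per prompt, plus a per-prompt
# business value (1-5) and estimated effort (1-5) assigned by the team.
appearances = {
    "best ai visibility tools": {"RivalOne", "RivalTwo"},
    "how to track ai citations": {"OurBrand", "RivalOne"},
    "seo automation platforms": {"RivalOne"},
    "what is share of voice": set(),
}
scores = {
    "best ai visibility tools": {"value": 5, "effort": 2},
    "how to track ai citations": {"value": 3, "effort": 1},
    "seo automation platforms": {"value": 4, "effort": 4},
    "what is share of voice": {"value": 2, "effort": 1},
}


def missing_prompts(appearances, brand):
    """Prompts where at least one competitor appears and the brand does not."""
    return [
        prompt
        for prompt, brands in appearances.items()
        if brands and brand not in brands
    ]


def prioritize(prompts, scores):
    """Rank gaps by value-to-effort ratio, highest first."""
    return sorted(
        prompts,
        key=lambda p: scores[p]["value"] / scores[p]["effort"],
        reverse=True,
    )


gaps = missing_prompts(appearances, "OurBrand")
action_list = prioritize(gaps, scores)
print(action_list)
```

Prompts where no tracked brand appears are excluded on purpose: they are not competitive gaps, although they may still be worth reviewing as open opportunities.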

Phase Four: Content Optimization & Growth (Weeks 4–12)

Execute focused updates that improve cite-worthiness: tighten definitions, add evidence, update outdated claims, improve structure, and strengthen supporting references. Re-run the same query set on a consistent cadence and track whether visibility metrics and mention quality improve over time.

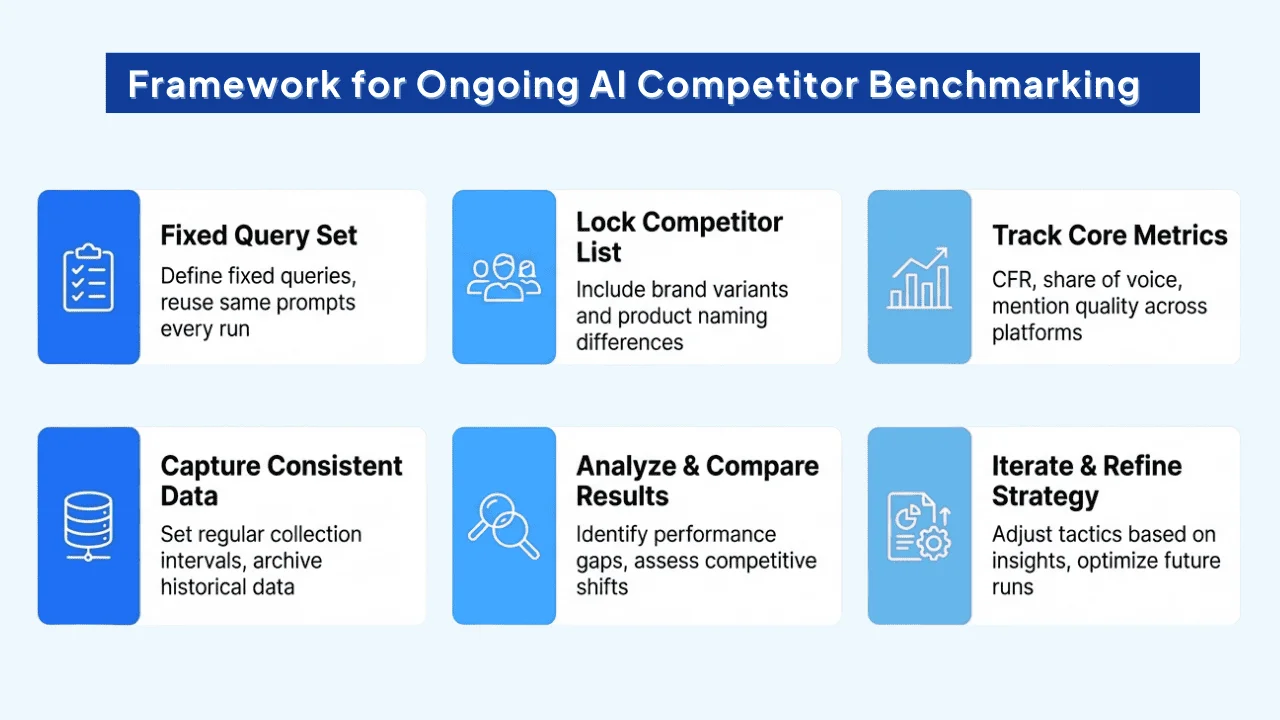

Framework for Ongoing AI Competitor Benchmarking

AI visibility shifts quickly, so benchmarking must be a routine system—not a one-time report. A sustainable framework keeps a fixed query set, scheduled measurement, consistent reporting, and clear ownership, so teams can spot competitive movement early and act before visibility loss turns into revenue loss.

Market Context: The AI search industry is scaling fast. 86% of enterprise SEO professionals have integrated AI into their strategy (SeoProfy, 2025), and 56% of marketers now use generative AI for SEO workflows (DemandSage, 2025). That shift makes ongoing competitive benchmarking table stakes.

The 6-Step Ongoing AI Competitor Benchmarking Loop

1. Define a fixed query set and reuse the same prompts every run.

2. Lock your competitor list, including brand variants and product naming differences.

3. Track core metrics across platforms: CFR, share of voice, and mention quality (placement + sentiment).

4. Capture results consistently: platform tested, timestamped outputs, and recorded context (not just yes/no).

5. Set a cadence: weekly monitoring for early shifts, monthly deep dives for trend decisions.

6. Turn gaps into actions: refresh, expand, consolidate, or create content based on what the data shows.

This loop enables early detection. If a competitor gains share of voice or your citations drop across multiple query clusters, you can respond while the shift is still small—before it turns into a traffic or pipeline issue.
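Early detection can be as simple as comparing each run with the previous one. The following sketch flags query clusters whose CFR fell by a chosen number of points; the 10-point threshold and the sample figures are assumptions, and a real setup would confirm a drop across repeated runs before acting.

```python
def flag_drops(previous, current, threshold=10.0):
    """Return clusters whose CFR fell by at least `threshold` points."""
    drops = {}
    for cluster, before in previous.items():
        after = current.get(cluster)
        # Skip clusters that were not measured in the current run.
        if after is not None and before - after >= threshold:
            drops[cluster] = round(before - after, 1)
    return drops


# Illustrative CFR values (percent) per query cluster.
last_week = {"ai visibility": 62.5, "seo automation": 40.0, "free tools": 25.0}
this_week = {"ai visibility": 50.0, "seo automation": 38.0, "free tools": 10.0}

alerts = flag_drops(last_week, this_week)
print(alerts)
```

Here two clusters cross the threshold while a 2-point dip is treated as noise. The same comparison works for share of voice if you pass CSOV values instead.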

How to Benchmark AI Visibility Across Platforms and Identify Competitor Gaps

AI platforms behave differently, so competitive benchmarking must account for platform-specific behavior. Some systems summarize broadly, others emphasize explicit citations, and some rely more on internal model knowledge than live web retrieval.

As a result, the same query can produce different visibility outcomes depending on the platform.

Platform-Specific Growth: Navigational AI Overviews (branded searches) increased from 0.74% to 10.33% of all queries between January and October 2025 (Semrush, December 2025). This makes platform-level segmentation mandatory—avoid reporting “AI visibility” as a single blended metric.

Benchmarking also reveals visibility gaps that traditional SEO tools often miss. You may rank well but still not be cited, or appear without being positioned as the recommended source.

The most valuable gaps are often missing prompts—queries where competitors appear consistently and your brand does not.

| AI Platform | How It Surfaces Information | Primary Visibility Signals | What to Benchmark |
|---|---|---|---|
| Google AI Overviews | Summarizes multiple sources with selective citations | Source citations, SERP integration, freshness | Citation frequency, cited domains, missing prompts, schema presence |
| ChatGPT (with browsing) | Generates answers using retrieved web sources | Authority signals, content clarity, recency | Brand mentions, citation placement, mention sentiment |
| Perplexity | Answer-first interface with explicit source links | Source attribution, topical authority | Linked citations, primary source frequency, competitor source overlap |
| Gemini | Model-driven responses with selective web grounding | Entity understanding, content trust signals | Brand mentions, entity consistency, framing quality |

Segmenting by platform shows where you can win visibility fastest and where content needs different proof/structure.
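To see why a blended number can mislead, consider a small sketch that computes CFR per platform and compares it with the blended figure. The platform keys and observations are illustrative, not real measurements.

```python
from collections import defaultdict

# Hypothetical observations: (platform, prompt, brand_appeared)
observations = [
    ("google_ai_overviews", "best ai visibility tools", True),
    ("google_ai_overviews", "seo automation platforms", False),
    ("perplexity", "best ai visibility tools", True),
    ("perplexity", "seo automation platforms", True),
    ("chatgpt", "best ai visibility tools", False),
    ("chatgpt", "seo automation platforms", False),
]


def cfr_by_platform(observations):
    """Citation frequency rate (percent) computed separately per platform."""
    tested = defaultdict(int)
    hits = defaultdict(int)
    for platform, _prompt, appeared in observations:
        tested[platform] += 1
        hits[platform] += int(appeared)
    return {platform: 100 * hits[platform] / tested[platform] for platform in tested}


per_platform = cfr_by_platform(observations)
blended = 100 * sum(appeared for _, _, appeared in observations) / len(observations)

print(per_platform)
print(f"blended CFR: {blended:.1f}%")
```

In this sample the blended CFR is 50%, which hides full coverage on one platform and none on another. That spread is what tells you where different proof or structure is needed.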

To make this data actionable, teams typically organize results in two formats. A competitor intelligence matrix maps topics and prompts against competitors to show where visibility is concentrated or missing.

A competitor visibility dashboard tracks citation frequency, share of voice, and mention quality over time—segmented by platform—so shifts are detected early and acted on quickly.

Together, platform-level benchmarking and structured reporting turn AI visibility data into a repeatable competitive system, not a one-off analysis.
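A competitor intelligence matrix of the kind described above can be built from a simple citation log. This is a minimal sketch with made-up topics and brands; a production version would likely live in a spreadsheet or BI tool.

```python
from collections import Counter

# Hypothetical citation log: (topic cluster, brand cited)
citations = [
    ("ai visibility", "OurBrand"),
    ("ai visibility", "RivalOne"),
    ("ai visibility", "RivalOne"),
    ("seo automation", "RivalTwo"),
    ("seo automation", "RivalOne"),
    ("free tools", "RivalTwo"),
]
brands = ["OurBrand", "RivalOne", "RivalTwo"]


def build_matrix(citations, brands):
    """Map each topic to a {brand: citation count} row, including zeros."""
    counts = Counter(citations)
    topics = sorted({topic for topic, _ in citations})
    return {topic: {b: counts[(topic, b)] for b in brands} for topic in topics}


matrix = build_matrix(citations, brands)

# Print a compact text grid: topics as rows, brands as columns.
print(f"{'topic':<16}" + "".join(f"{b:>10}" for b in brands))
for topic, row in matrix.items():
    print(f"{topic:<16}" + "".join(f"{row[b]:>10}" for b in brands))
```

Zeros are kept in every row so that gaps are visible at a glance: a topic where your column reads 0 and a rival's does not is a candidate missing-prompt cluster.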

How Competitive Benchmarking Guides Content Strategy and Optimization

Competitive benchmarking makes content decisions measurable. By comparing how your brand and competitors appear inside AI-generated answers, you can identify where you’re missing coverage, what competitors are being rewarded for, and which changes are most likely to improve visibility.

It helps teams act with precision: refresh the right pages, deepen the right topics, consolidate overlapping assets, and create new content only when a true gap exists. This reduces content sprawl and concentrates authority into the pages AI systems are most likely to cite.

- Content gaps: Prompts/topics where competitors appear and you don’t.

- Visibility drivers: Patterns in cited competitor content (structure, clarity, evidence, entity coverage).

- Targets: Baselines for CFR, share of voice, and primary vs. supporting mentions.

- Differentiation: How competitors are framed (recommended vs neutral) so you can adjust positioning without copying.

- Prioritization: Missing-prompt clusters turned into an editorial plan (refresh first, create second).

- Maintenance triggers: Visibility drops, framing shifts, and competitor surges used to time updates.

Structural Patterns Found in High-Visibility AI Content

Content that performs well in AI answers usually answers the core question early, uses descriptive headings, stays concise, and supports key claims with evidence. Definitions are explicit, and phrasing closely matches how users ask the question, making it easier for AI systems to extract and cite.

Benchmark Signals That Trigger Updates

Update decisions should be triggered by competitive signals, not intuition. Repeated competitor citations on topics you already cover, declining share of voice across priority queries, or shifts in how your brand is framed (recommended → neutral) are strong indicators that visibility is weakening and action is justified.
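These signals can be written down as explicit rules so that update decisions are reproducible. The sketch below is one possible encoding; the field names, the 5-point share-of-voice threshold, and the sample snapshots are all assumptions.

```python
def update_triggers(prev, curr, sov_drop=5.0):
    """Compare two snapshots of one query cluster and list the reasons to update."""
    reasons = []
    # Declining share of voice across priority queries.
    if prev["csov"] - curr["csov"] >= sov_drop:
        reasons.append("share of voice declined")
    # Framing shift away from "recommended".
    if prev["framing"] == "recommended" and curr["framing"] != "recommended":
        reasons.append("framing downgraded")
    # Competitors gaining citations on a topic you already cover.
    if curr["covered"] and curr["competitor_citations"] > prev["competitor_citations"]:
        reasons.append("competitor citations rising on a covered topic")
    return reasons


# Illustrative snapshots for a single cluster.
previous = {"csov": 30.0, "framing": "recommended", "competitor_citations": 4, "covered": True}
current = {"csov": 22.0, "framing": "neutral", "competitor_citations": 7, "covered": True}

triggers = update_triggers(previous, current)
print(triggers)
```

An empty list means no rule fired and the page can be left alone. Writing the rules this way also makes it easy to review thresholds when they prove too sensitive or too lax.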

How to Interpret Results Across AI Platforms

AI platforms behave differently, so the same visibility signal can mean different things depending on where it appears. Keep measurement rules consistent across runs, but interpret results platform by platform so you don’t mistake platform volatility for a true competitive shift.

This is why the next step is structuring your findings into dashboards and recurring reporting—so competitive movement is detected early and acted on systematically.

AI Visibility Tracking, Reporting, and KPI Dashboards That Drive Decisions

Tracking and reporting should be built for decisions, not vanity. KPIs should show whether your AI visibility is improving relative to competitors—and whether that visibility is high-quality (recommended, cited, and trusted).

Reporting matters because benchmarking without it becomes a one-time exercise. When KPIs are tied to actions (refresh, expand, consolidate), benchmarking becomes an ongoing system.

Tools like Wellows support this by tracking AI visibility over time and making competitive movement easier to spot.

Weekly tracking is for early signal detection. Monitor a smaller priority query set to catch sudden shifts fast, validate them across multiple prompts, and flag meaningful changes for deeper review. Wellows helps surface ongoing changes in visibility patterns.

Monthly reporting is where trends are interpreted. Re-run the full query set, update competitor comparisons, and convert findings into an action plan: what to refresh, consolidate, expand, or create next. To keep reporting simple, teams often summarize citation performance into a single benchmark signal alongside CFR and share of voice. For example, Wellows’ Citation Score helps track progress over time while preserving competitive context.

A KPI dashboard should be easy to scan. Track citation frequency, share of voice, and mention quality trends over time, segmented by platform. Add annotations when major changes occur (competitor campaigns, content refreshes, or platform shifts) so teams can interpret trends correctly.

How to Resolve Common Issues in AI Competitive Benchmarking for SEO

Resolving issues in AI competitive benchmarking requires accurate measurement, human oversight, and a strong foundation in SEO fundamentals. Most problems are not strategic failures—they’re data, methodology, or interpretation issues that can be corrected with clearer rules and better context.

1. Data Accuracy and Consistency Problems

AI benchmarking depends on clean, comparable data. Inconsistent inputs—such as mixing platforms, date ranges, locations, or device types—often lead to false conclusions.

How to fix it: Normalize your data. Use the same query sets, platforms, time windows, geographies, and capture rules across all competitors. Focus reporting on a small, stable set of KPIs (citation frequency, share of voice, and mention quality) instead of vanity metrics that don’t reflect real visibility impact.
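A quick consistency check before comparing runs can catch mixed inputs early. The sketch below reports which capture settings differ across runs; the field names and run metadata are hypothetical.

```python
def check_run_consistency(runs, fields=("geography", "time_window", "prompt_library")):
    """Return the capture settings whose values differ across runs."""
    mismatched = {}
    for field in fields:
        values = {run[field] for run in runs}
        if len(values) > 1:
            mismatched[field] = sorted(values)
    return mismatched


# Illustrative run metadata; the third run changed two settings.
runs = [
    {"run_id": "2025-W44", "geography": "US", "time_window": "Mon 09:00-11:00 UTC", "prompt_library": "v3"},
    {"run_id": "2025-W45", "geography": "US", "time_window": "Mon 09:00-11:00 UTC", "prompt_library": "v3"},
    {"run_id": "2025-W46", "geography": "UK", "time_window": "Mon 09:00-11:00 UTC", "prompt_library": "v4"},
]

mismatches = check_run_consistency(runs)
print(mismatches)
```

If the result is not empty, the runs are not directly comparable, and any visibility change between them may reflect sampling differences instead of competitive movement.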

2. Over-Reliance on AI Without Human Judgment

AI tools are excellent for collecting and summarizing data, but they cannot replace strategic interpretation. Blindly following AI-generated recommendations can lead to generic content and missed nuance.

How to fix it: Use AI for measurement and pattern detection, then apply human judgment to decide what to change. Ground AI insights in brand context, historical performance, and audience expectations. Benchmarking should inform decisions—not automate them.

3. Benchmarking Against the Wrong Competitors

A common mistake is benchmarking against business competitors rather than AI visibility competitors. The brands AI systems cite most often are not always the biggest players in your industry.

How to fix it: Identify competitors based on who appears in AI-generated answers for your target prompts. Prioritize brands with comparable authority and resources so benchmarks are realistic and actionable.

4. Technical Accessibility and Crawlability Issues

If AI systems or underlying crawlers can’t access or understand your content, it won’t be cited—regardless of quality.

How to fix it: Maintain strong technical SEO hygiene. Ensure key pages aren’t blocked by robots.txt, login walls, or performance issues. Use clear structure, schema where appropriate, and fast-loading, mobile-friendly pages so AI systems can reliably retrieve and summarize your content.
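The robots.txt part of this check can be scripted with Python's standard library. The sketch below parses an inline example file and reports which crawlers are blocked from a page. The robots.txt content and URL are illustrative, and the user-agent list is an assumption: confirm the current crawler names in each platform's documentation.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt content; in practice, read your site's own file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /account/
"""


def blocked_agents(robots_txt, url, agents):
    """Return the user agents that robots.txt disallows from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Example crawler names; treat this list as an assumption to verify.
agents = ["GPTBot", "PerplexityBot", "Googlebot"]
blocked = blocked_agents(
    ROBOTS_TXT, "https://example.com/guides/ai-benchmarking", agents
)
print(blocked)
```

This only covers robots.txt rules. Login walls, rendering problems, and slow responses need separate checks, since a page can be allowed by robots.txt and still be unreachable in practice.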

5. Adapting to Platform and Algorithm Changes

AI search behavior changes frequently as models, retrieval methods, and interfaces evolve. Strategies that worked a few months ago can quickly lose effectiveness.

How to fix it: Treat benchmarking as an ongoing system. Monitor visibility regularly, review performance monthly, and realign strategy quarterly. Prioritize durable signals—clear answers, strong evidence, structured content, and genuine topical authority—that remain valuable even as platforms change.

When handled correctly, AI competitive benchmarking becomes resilient. Instead of reacting to noise, teams build a repeatable system that adapts to change while maintaining consistent visibility advantage.

FAQs

What does AI competitive benchmarking measure?

It measures how often your brand appears in AI-generated answers and compares that presence against competitors using a consistent query set.

Which metrics matter most?

Citation frequency, share of voice, and visibility quality (context, sentiment, placement) are the most reliable signals.

How is it different from traditional competitor analysis?

Traditional analysis focuses on rankings, clicks, and traffic. AI benchmarking focuses on mentions and citations inside AI answers where users may not click.

How often should you benchmark?

Weekly checks catch sudden shifts early. Monthly deep dives help interpret trends and plan updates with confidence.

Do results differ by AI platform?

Yes. Each platform retrieves and cites sources differently. Track results separately by platform to avoid misleading blended reporting.

Conclusion

AI competitive benchmarking helps teams measure what “visibility” means in AI-driven search: whether your brand is cited, mentioned, and positioned as a trusted choice inside generated answers.

By tracking citation frequency, share of voice, and mention quality across platforms, you can identify where competitors dominate, where you’re missing, and which topics need action.

Most importantly, benchmarking works best as a system—not a one-time audit. With consistent prompts, clear KPIs, and regular reporting, teams can prioritize smarter updates, respond faster to competitive shifts, and build durable authority in AI discovery.