Your analytics dashboard doesn’t lie: traffic is down, and it’s happening more often lately. If you’re trying to figure out why your website traffic is dropping, you’re not alone.

Search has changed quickly. A growing share of queries get answered directly on Google now, and 60% of searches end without a click (Digital Bloom, 2025). That can reduce clicks even when your rankings look stable.

So the right question isn’t only “Did my rankings drop?” It’s whether the decline is coming from tracking issues, channel shifts, technical SEO problems, content decay, AI/zero-click behavior, or competitors improving faster. (If you’re planning timelines, see How Long Does It Take to Rank Your Website on Google.)

AI-driven results can lead to significant traffic losses, depending on the industry and query mix.

This guide helps you confirm the drop is real, identify the cause (gradual vs. sudden), and prioritize fixes that bring traffic back without guessing or overhauling everything at once.

Confirm the Traffic Drop Before Taking Action

Before making changes, confirm the decline is real and not caused by faulty tracking. Analytics errors create false alarms more often than most teams realize.



How Website Traffic Is Measured

Platforms like GA4 track activity using a site-wide script that records sessions, pageviews, traffic sources, and engagement signals such as time on page and conversions. If that script breaks on even a few templates, reported traffic can fall without any real audience loss.

1. Common Tracking Issues That Create False Drops

Most false traffic declines come from a small set of problems:

Tracking tag issues: analytics scripts removed or broken after CMS, theme, or plugin updates.

Filtering mistakes: bot filters or IP exclusions unintentionally remove real users.

Domain or URL changes: HTTPS migrations, www changes, or parameter updates breaking attribution.

Consent updates: stricter GDPR/CCPA settings reducing measurable sessions.

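Quick validation: Compare GA4 organic sessions with Google Search Console clicks. If Search Console clicks are stable but GA4 sessions drop, the problem is likely tracking, not visibility. Don't use impressions for this check: stable impressions with falling clicks usually signal zero-click behavior instead.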
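A minimal sketch of that comparison in Python, assuming you have exported the same weeks of GA4 organic sessions and Search Console clicks into two lists (oldest week first). The two tools never report identical numbers, so the sketch compares their ratio over time rather than the raw counts. The 4-week windows, the 10% click tolerance, and the 0.8 cutoff are illustrative assumptions, not standards.

```python
from statistics import mean


def session_to_click_ratio_change(ga4_sessions, gsc_clicks, window=4):
    """Recent GA4/GSC ratio divided by the baseline ratio (1.0 = unchanged)."""
    if len(ga4_sessions) != len(gsc_clicks) or len(gsc_clicks) < 2 * window:
        raise ValueError("need two equal-length weekly series covering both windows")
    if min(gsc_clicks) <= 0:
        raise ValueError("Search Console clicks must be positive every week")
    ratios = [s / c for s, c in zip(ga4_sessions, gsc_clicks)]
    return mean(ratios[-window:]) / mean(ratios[:window])


def looks_like_tracking_issue(ga4_sessions, gsc_clicks, window=4, max_ratio=0.8):
    """True when Search Console clicks held steady but GA4 sessions fell away."""
    clicks_change = mean(gsc_clicks[-window:]) / mean(gsc_clicks[:window])
    clicks_stable = clicks_change >= 0.9  # assumed tolerance: clicks down less than 10%
    ratio_change = session_to_click_ratio_change(ga4_sessions, gsc_clicks, window)
    return clicks_stable and ratio_change < max_ratio


# Illustrative numbers: clicks hold, recorded sessions collapse in the last four weeks.
clicks = [1000, 1020, 990, 1010, 1005, 995, 1000, 1015]
sessions = [1100, 1120, 1090, 1110, 700, 690, 710, 705]
print(looks_like_tracking_issue(sessions, clicks))  # True
```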

2. Identify Which Channels Are Actually Declining

Next, break traffic down by channel to narrow the cause:

Only organic search declines: SEO issues, algorithm changes, or AI-driven zero-click impact.

All channels decline together: tracking failure or a site-wide technical issue.

Impressions hold but clicks fall: AI Overviews or SERP features absorbing demand.

This step prevents unnecessary optimization and keeps your recovery focused on the real issue.
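The first two rules above can be sketched as a small triage function, assuming you export sessions per channel for two equal periods (for example, the 28 days before and after the drop). The channel names and the 10% decline threshold are hypothetical. The third pattern needs Search Console impressions and clicks, so this sketch does not cover it.

```python
def diagnose_channels(before, after, drop_threshold=-0.10):
    """before/after map channel name -> sessions for two equal-length periods."""
    changes = {
        channel: (after.get(channel, 0) - sessions) / sessions
        for channel, sessions in before.items()
        if sessions > 0
    }
    declining = {ch for ch, change in changes.items() if change <= drop_threshold}
    if declining and declining == set(changes):
        return "all channels down: check tracking or a site-wide technical issue"
    if declining == {"organic"}:
        return "only organic down: check SEO, algorithm updates, or zero-click impact"
    if declining:
        return "some channels down: " + ", ".join(sorted(declining))
    return "no channel declined past the threshold"


# Hypothetical channel totals for the periods before and after the drop.
before = {"organic": 1000, "direct": 500, "referral": 200}
after = {"organic": 700, "direct": 495, "referral": 205}
print(diagnose_channels(before, after))
```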

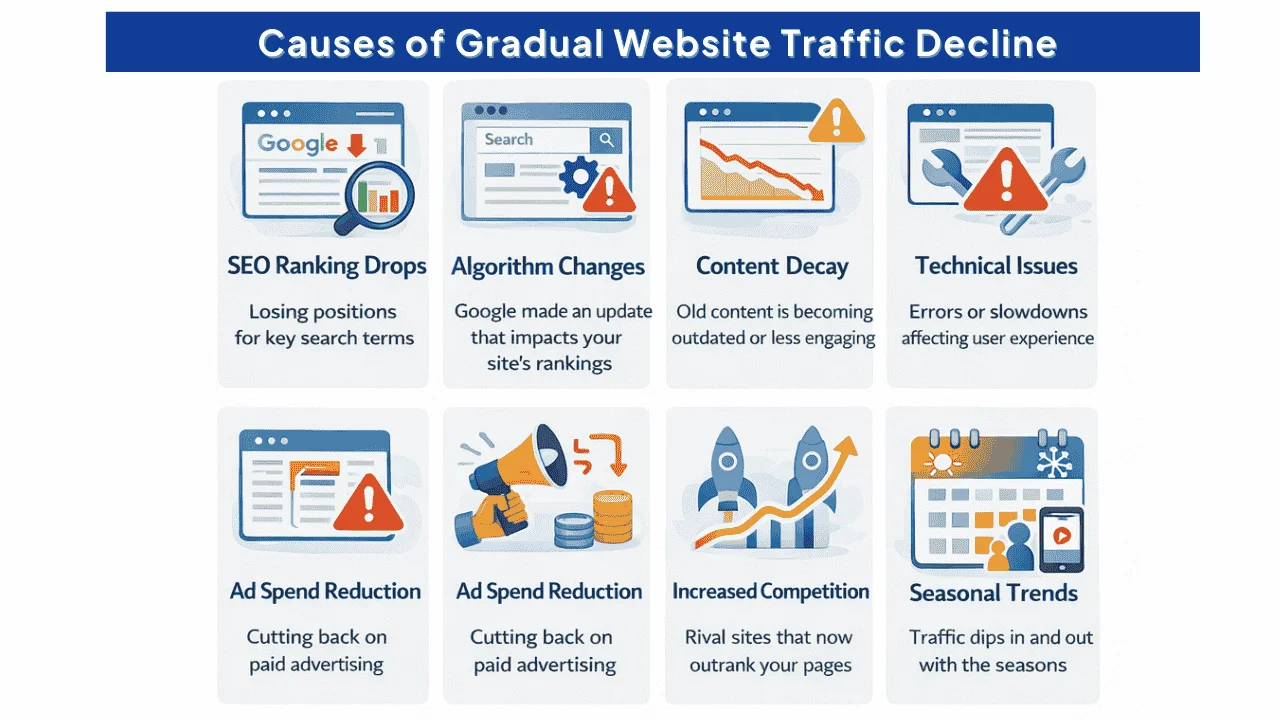

Causes of Gradual Website Traffic Decline

When traffic declines slowly, often 5–15% per quarter, the cause is usually cumulative rather than sudden. Small issues compound over time.

1. Content Aging and Reduced Relevance (Content Decay)

Search intent evolves. Content that performed well 12–18 months ago can lose relevance as competitors publish fresher resources, data becomes outdated, and user expectations shift.

Typical signs of content decay include:

- Gradual ranking drift across many pages

- Declining CTR even when rankings appear stable

On average, unrefreshed content can drop from ~2,500 monthly visits to ~1,600 within six months. Page-one content in competitive spaces is usually updated within the last two years (Siege Media, 2025).
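One way to shortlist decaying pages is to combine two signals: traffic loss and time since the last update. The sketch below assumes you can export monthly visits from six months ago and today, plus a last-updated date per URL. The `PageStats` structure is hypothetical, and the 20% drop and 365-day cutoffs are assumptions to tune. For reference, the 2,500 to 1,600 example above is a 36% decline.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PageStats:
    url: str
    visits_6_months_ago: int
    visits_now: int
    last_updated: date


def decay_candidates(pages, today, min_drop=0.2, stale_after_days=365):
    """Return (url, relative_drop, days_since_update) for stale pages losing traffic."""
    flagged = []
    for page in pages:
        if page.visits_6_months_ago == 0:
            continue  # no baseline to compare against
        drop = 1 - page.visits_now / page.visits_6_months_ago
        age_days = (today - page.last_updated).days
        if drop >= min_drop and age_days >= stale_after_days:
            flagged.append((page.url, round(drop, 2), age_days))
    # Largest relative losses first
    return sorted(flagged, key=lambda row: row[1], reverse=True)


pages = [
    PageStats("/guide", 2500, 1600, date(2024, 1, 10)),   # stale and declining
    PageStats("/pricing", 1000, 950, date(2025, 5, 1)),   # recently updated, stable
]
print(decay_candidates(pages, today=date(2025, 6, 1)))
```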


Key takeaway: Content decay is reversible if addressed early. After long periods without updates, recovery becomes significantly harder.

2. Slow Loss of Keyword Rankings

Minor ranking drops across many pages compound into significant traffic losses. This often happens when:

- Competitors strengthen their content with better depth, data, or UX.

- Your pages no longer match search intent (for example, users now expect tools or templates—not just articles).

- Technical debt builds up (slow load times, broken links, weak mobile experience).

- Your backlink profile weakens as valuable links are lost or devalued.


Example: Losing just 2–3 ranking positions across 100 pages can eliminate 30–40% of organic traffic—even though no single page “crashed.”
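To see how small slips compound, you can model expected clicks with a CTR-by-position curve. The curve below is a made-up illustration, not measured data (real curves vary by industry, device, and SERP features), so the percentage it produces will not necessarily match the 30–40% range above. The point is the mechanism: every page loses a little, and the losses add up.

```python
# Hypothetical CTR-by-position curve for illustration only.
CTR_BY_POSITION = {1: 0.28, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05,
                   6: 0.04, 7: 0.03, 8: 0.025, 9: 0.02, 10: 0.018}
BEYOND_PAGE_ONE_CTR = 0.01  # assumed flat CTR for positions below 10


def expected_clicks(impressions, position):
    return impressions * CTR_BY_POSITION.get(position, BEYOND_PAGE_ONE_CTR)


def traffic_loss(pages, positions_lost):
    """pages: list of (monthly_impressions, current_position). Returns fraction of clicks lost."""
    before = sum(expected_clicks(imp, pos) for imp, pos in pages)
    after = sum(expected_clicks(imp, pos + positions_lost) for imp, pos in pages)
    return 1 - after / before


# 100 hypothetical pages spread evenly across positions 1-10, 1,000 impressions each.
pages = [(1000, 1 + i % 10) for i in range(100)]
print(f"{traffic_loss(pages, 2):.0%} of expected clicks lost")
```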

The SEO playbook from 2023 doesn’t work heading into 2026. The gap between what used to rank and what now earns visibility is exactly why content optimization feels way harder than SEO. Once you see the specific reasons, the recovery path becomes far clearer.

Back then, many sites could rank with short posts targeting exact-match keywords. Now, AI Overviews appear on 15.69% of queries (Semrush, December 2025), and they tend to reward content that’s clearly structured, defines entities, and cites trustworthy sources.

If you’re still optimizing like it’s 2023, you’re competing with outdated rules.

Reasons for a Sudden Drop in Website Traffic

Sudden traffic drops—often 30% or more within days or weeks—usually have a clear trigger. These declines are sharp, noticeable, and require immediate investigation.
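The rules of thumb in this guide (roughly 5–15% per quarter for gradual declines, 30% or more within days or weeks for sudden ones) can be turned into a rough triage rule over weekly sessions. This is a sketch: the window sizes are assumptions, and any quarter-over-quarter decline of 5% or more without a sharp recent break is treated as gradual.

```python
from statistics import mean


def pct_change(old, new):
    return (new - old) / old


def classify_decline(weekly_sessions):
    """weekly_sessions: oldest first, at least 26 weeks. Returns 'sudden', 'gradual', or 'stable'."""
    if len(weekly_sessions) < 26:
        raise ValueError("need at least 26 weeks of data (oldest first)")
    # Sudden: last 2 weeks are 30%+ below the 4 weeks before them.
    recent = mean(weekly_sessions[-2:])
    prior = mean(weekly_sessions[-6:-2])
    if pct_change(prior, recent) <= -0.30:
        return "sudden"
    # Gradual: this quarter (13 weeks) is 5%+ below the previous quarter.
    this_quarter = mean(weekly_sessions[-13:])
    last_quarter = mean(weekly_sessions[-26:-13])
    if pct_change(last_quarter, this_quarter) <= -0.05:
        return "gradual"
    return "stable"


print(classify_decline([1000] * 13 + [900] * 13))
```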

1. Did You Recently Change Your Website?

Website changes are the most common cause of sudden traffic crashes. Even well-intentioned updates can disrupt how Google understands and ranks your site.


Tip: During the audit, review linking density too. This guide on how many internal links per page for better SEO helps you spot pages that are overlinked or underlinked.

Site redesigns or migrations: URL changes without proper 301 redirects, removed pages without mapping, broken internal links, or templates stripping schema and headings.

Large-scale content updates: deleting or merging pages, changing titles/meta and lowering CTR, removing ranking-related content, or over-optimizing and triggering quality filters.

URL structure changes: HTTPS migrations, domain changes, or moving content from subdomains to subfolders without correct canonicals.

Technical implementations: JavaScript framework changes, lazy loading blocking indexing, or CDN rules accidentally blocking Googlebot.

When these changes happen, Google suddenly encounters missing pages, redirects to unrelated content, or inconsistent signals. Rankings drop quickly, and traffic follows.


What to do: Open Google Search Console → Indexing (Coverage/Pages). Look for spikes in errors, excluded URLs, or sudden drops in indexed pages around the date traffic declined. Fix broken redirects and restore lost URLs first.
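After a migration, it helps to check every old URL against your redirect map rather than spot-checking. Here is a minimal sketch using Python's standard library, assuming `redirect_map` is your own old-to-new URL mapping (the example.com URLs are placeholders). `http.client` does not follow redirects, so the sketch checks the first response's status and `Location` header, treating 301 and 308 as permanent. If a server rejects HEAD requests, switch the method to GET.

```python
import http.client
from urllib.parse import urljoin, urlsplit

PERMANENT = {301, 308}


def head_status(url):
    """Send one HEAD request without following redirects; return (status, Location)."""
    parts = urlsplit(url)
    conn_cls = http.client.HTTPSConnection if parts.scheme == "https" else http.client.HTTPConnection
    conn = conn_cls(parts.netloc, timeout=10)
    path = (parts.path or "/") + (f"?{parts.query}" if parts.query else "")
    try:
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def audit_redirects(redirect_map, fetch=head_status):
    """Return (old_url, problem) pairs for URLs that don't permanently redirect as mapped."""
    problems = []
    for old_url, expected in redirect_map.items():
        status, location = fetch(old_url)
        target = urljoin(old_url, location) if location else None  # resolve relative Location
        if status not in PERMANENT:
            problems.append((old_url, f"expected 301/308, got {status}"))
        elif target != expected:
            problems.append((old_url, f"redirects to {target}, expected {expected}"))
    return problems
```

Call `audit_redirects(redirect_map)` against the live site. Passing a stub as `fetch` lets you test the logic offline.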

2. Algorithm Updates Hit Harder Now

Google releases several major algorithm updates each year, and their impact has become more pronounced as quality and trust signals are weighted more heavily.

The December 2025 Core Update showed this clearly: 23% of sites gained traffic, while 77% either stayed flat or declined (ALM Corp, December 2025).

How to confirm an algorithm hit:


- Match the traffic drop date against Google’s known algorithm update history (the Google Search Status Dashboard lists confirmed updates).

- Identify which pages and keywords lost visibility.

- Check industry reports to see if similar sites were affected.
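Matching dates is easy to script once you have copied rollout windows from Google's Search Status Dashboard. The update names and dates below are placeholders, not real rollouts. The three-day slack on either side is an assumption that allows for delayed impact.

```python
from datetime import date, timedelta


def updates_near_drop(drop_date, rollouts, slack_days=3):
    """rollouts: (name, start, end) tuples. Returns names whose window (+/- slack) covers drop_date."""
    slack = timedelta(days=slack_days)
    return [name for name, start, end in rollouts if start - slack <= drop_date <= end + slack]


# Placeholder rollouts; replace with real dates copied from the dashboard.
rollouts = [
    ("Update A", date(2025, 1, 6), date(2025, 1, 20)),
    ("Update B", date(2025, 4, 1), date(2025, 4, 14)),
]
print(updates_near_drop(date(2025, 4, 16), rollouts))
```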

Algorithm updates can’t be “fixed” directly. Recovery comes from improving the signals Google now prioritizes—content depth, clarity, trust, and technical stability.

3. Manual Penalties (Rare but Severe)

Manual penalties occur when Google’s reviewers find guideline violations. These usually cause immediate and dramatic losses—often 70–100% overnight.


- Common causes include: paid or manipulative links, thin or auto-generated content, and deceptive redirects or cloaking.

- How to check: Google Search Console → Security & Manual Actions → Manual Actions. If there’s no message, you do not have a manual penalty.

- Recovery: fully resolve the violation, document your fixes, submit a reconsideration request, and wait. Most legitimate fixes are reviewed within a few weeks.

Why Did My AI Search Visibility Suddenly Drop This Month?

AI-driven search has created a new kind of visibility loss that traditional SEO tools often miss. In many cases, rankings stay stable—but clicks drop because answers are being delivered directly inside AI results.

This is the core purpose of AI SEO: optimizing content so it’s not only ranking in classic SERPs, but also being selected, summarized, and cited inside AI-driven answers.

1. Changes in AI Overviews and AI-Driven Results

AI Overviews now appear in 15.69% of Google queries as of November 2025 (Semrush, 2025). When AI Overviews show up, click behavior shifts sharply.

- Organic CTR fell from 1.76% to 0.61% on queries with AI Overviews, a relative drop of about 65% (Dataslayer, 2025).

- Paid CTR fell 68% on the same queries.

- Even queries without AI Overviews saw organic CTR decline 41% as users click less overall.


Why this matters: Even if you rank #1, an AI Overview can reduce traffic by 50–70%. Your rankings may not have dropped—user click behavior changed.

2. How to Tell If AI Visibility Is Your Problem

Traditional rank tracking often won’t detect AI-driven losses. Use Google Search Console to spot patterns like:

- Impressions staying steady (or increasing) while clicks fall.

- Pages ranking in positions 1–3 but showing unusually low CTR.

If impressions are flat/up but clicks are down, AI Overviews or other zero-click features are likely absorbing demand.
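Both patterns can be flagged from a query-level Search Console export covering two comparable periods. This is a minimal sketch. The field names are hypothetical, so rename them to match your export. The 20% click drop, 5% impression tolerance, and 5% CTR floor for top-3 positions are assumptions, not benchmarks.

```python
def zero_click_suspects(rows, click_drop=-0.20, impression_floor=-0.05, low_ctr=0.05):
    """Return queries whose clicks fell while impressions held, or that rank top 3 with low CTR."""
    suspects = []
    for r in rows:
        if min(r["impressions_before"], r["clicks_before"], r["impressions_after"]) <= 0:
            continue  # not enough data to compute changes
        impression_change = (r["impressions_after"] - r["impressions_before"]) / r["impressions_before"]
        click_change = (r["clicks_after"] - r["clicks_before"]) / r["clicks_before"]
        ctr_after = r["clicks_after"] / r["impressions_after"]
        clicks_fell_impressions_held = impression_change >= impression_floor and click_change <= click_drop
        top3_low_ctr = r["position_after"] <= 3 and ctr_after < low_ctr
        if clicks_fell_impressions_held or top3_low_ctr:
            suspects.append(r["query"])
    return suspects


rows = [  # hypothetical export rows
    {"query": "what is crawl budget", "clicks_before": 200, "clicks_after": 90,
     "impressions_before": 5000, "impressions_after": 5200, "position_after": 2.1},
    {"query": "buy running shoes", "clicks_before": 300, "clicks_after": 290,
     "impressions_before": 4000, "impressions_after": 3900, "position_after": 4.0},
    {"query": "seo audit checklist", "clicks_before": 100, "clicks_after": 100,
     "impressions_before": 2500, "impressions_after": 2500, "position_after": 1.5},
]
print(zero_click_suspects(rows))
```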


Platform-specific visibility: Tools like Wellows can track whether your brand appears in ChatGPT answers, Google Gemini responses, Perplexity citations, and AI Overviews—so you can measure visibility beyond classic clicks.

3. What Makes Content AI-Citation-Worthy?

AI systems tend to cite content that’s clear, current, structured, and trustworthy:

- Clear definitions: Define key terms explicitly so AI doesn’t have to infer meaning.

- Fresh updates: 76.4% of ChatGPT citations come from content updated in the last 30 days (Passionfruit, 2025).

- Structured data: 66% of Google AI Overviews reward pages with schema markup (Prerender.io, 2025).

- Authority signals: Expert authorship, credible references, and strong trust cues.
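Several of these signals (authorship, freshness, sources) can be exposed as schema.org structured data. Below is a minimal sketch that builds an `Article` JSON-LD snippet using standard schema.org properties (`headline`, `author`, `datePublished`, `dateModified`, `citation`). The author and URLs are hypothetical. Markup is likely to help only if it matches what is visible on the page.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, author_url, published, modified, citations=()):
    """Build a schema.org Article JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    if citations:
        data["citation"] = list(citations)  # sources the article references
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = article_jsonld(
    headline="Why Is Your Website Traffic Dropping?",
    author_name="Jane Doe",  # hypothetical author
    author_url="https://example.com/authors/jane-doe",
    published=date(2025, 1, 15),
    modified=date(2025, 11, 3),
    citations=["https://example.com/research/ctr-study"],
)
print(snippet)
```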

Shifts Toward Zero-Click Search Experiences

The share of searches ending without a click reached 60% in 2025 (Digital Bloom, 2025). Zero-click results include AI Overviews, featured snippets, Knowledge Panels, People Also Ask, and Local Packs.

The paradox: Your content can be more visible than ever—yet generate fewer visits than before. Strategic shift required: Optimize for search engine visibility (citations, mentions, impressions), not clicks alone.

Diagnosing What Went Wrong with AI Search Visibility

Search engine visibility loss requires a different diagnostic framework than traditional SEO. Rankings alone won’t explain what happened—because visibility is now distributed across AI Overviews, SERP features, and third-party AI platforms.

1. Monitoring AI Impressions and Visibility Signals

Instead of focusing only on positions, monitor where and how your brand is being surfaced:

- Whether your target queries now trigger AI Overviews.

- If your pages are cited inside AI Overviews, and whether you appear as a primary source or a supporting reference.

- Your presence in featured snippets, People Also Ask (PAA), and Knowledge Panels.

- Mentions or citations across ChatGPT, Google Gemini, and Perplexity. Pair mentions with sentiment signals using AI Brand Sentiment Tracking.


Key metric: If impressions rise while clicks fall, that often signals zero-click visibility—not necessarily performance failure. Your content may still be “winning,” but users are consuming answers without visiting your site.

2. Entity Coverage and Topical Authority Gaps

AI systems rely on entities and relationships, not keywords alone. If your content lacks clear entity signals, you can lose AI citations even when rankings remain stable.

- Define concepts explicitly so the topic and terms are unambiguous.

- Use consistent terminology across related pages to strengthen understanding.

- Build topical clusters by linking related pages together with purposeful internal links.

- Connect entities to authoritative sources where relevant (studies, standards, official docs).


In short: vague language weakens AI comprehension, while precise definitions and strong internal structure improve relevance.
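Gaps in a topical cluster are easy to miss by eye. This sketch assumes you already have a crawl export mapping each page to the internal URLs it links to (the paths are hypothetical), plus the set of pages you consider one cluster. It reports cluster pages that receive no links from their siblings and pages that link to no sibling.

```python
def cluster_link_gaps(links, cluster):
    """links: page -> set of internal URLs it links to; cluster: set of pages in one topic.

    Returns (pages with no inbound links from other cluster pages,
             pages that link to no other cluster page).
    """
    inbound = {page: 0 for page in cluster}
    no_outbound = []
    for page in sorted(cluster):
        targets = (links.get(page, set()) & cluster) - {page}  # sibling links only
        if not targets:
            no_outbound.append(page)
        for target in targets:
            inbound[target] += 1
    no_inbound = sorted(page for page, count in inbound.items() if count == 0)
    return no_inbound, no_outbound


cluster = {"/seo-guide", "/crawl-budget", "/internal-links", "/core-web-vitals"}
links = {
    "/seo-guide": {"/crawl-budget", "/internal-links", "/pricing"},
    "/crawl-budget": {"/seo-guide"},
    "/internal-links": {"/seo-guide", "/crawl-budget"},
    "/core-web-vitals": {"/pricing"},
}
print(cluster_link_gaps(links, cluster))
```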

3. Trust, Credibility, and E-E-A-T Signals

AI citation heavily favors content with strong E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). Strengthen the signals AI systems use to evaluate whether you’re safe to cite.

- Experience: original research, testing notes, case studies, and real-world examples.

- Expertise: clear author bios, credentials, and accountable authorship.

- Authority: quality backlinks, brand mentions, and recognition in your industry.

- Trust: transparent sourcing, HTTPS, clean UX, and clear company/contact information.


Impact: Sites with strong E-E-A-T signals are often 3–5× more likely to be selected for AI Overviews—even when traditional rankings look similar.

Technical SEO Issues That Reduce Visibility

Technical problems can quietly reduce traffic from both traditional search and AI-driven results. If Google can’t crawl, index, or load your pages properly, visibility drops—no matter how strong your content is.

1. Crawling and Indexing Barriers

If Google can’t crawl and index your pages, you’re invisible. These issues often appear after migrations, CMS updates, or template changes.

- Blocked crawling: robots.txt rules or server errors prevent Googlebot from accessing key pages.

- Indexing signals misconfigured: noindex tags, missing/incorrect canonicals, or duplicate URL versions confuse what should rank.

- Discovery problems: broken internal links or sitemap mistakes stop Google from finding and prioritizing important pages.


- If discovery problems or crawl traps are part of the drop, this Crawl Budget SEO guide helps you fix crawl waste and get priority pages crawled sooner.


How to check: Google Search Console → Indexing (Coverage/Pages). Review “Excluded” URLs and compare indexed pages to your expected page count.

Fix priority: Resolve crawl/index issues first—until Google can access your pages, other optimizations won’t matter.
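For a quick first pass outside Search Console, Python's standard library can test a URL against robots.txt rules and detect `noindex` in a robots meta tag or an `X-Robots-Tag` header value. This is a sketch under one stated limitation: `urllib.robotparser` does simple prefix matching and does not implement Google's `*` and `$` wildcards, so confirm anything surprising with Search Console's URL Inspection tool.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def blocked_by_robots(robots_txt, url, user_agent="Googlebot"):
    """True if the given robots.txt text disallows url for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            self.directives.append((attributes.get("content") or "").lower())


def has_noindex(html, x_robots_tag=""):
    """True if a robots meta tag or the X-Robots-Tag header value contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return any("noindex" in value for value in parser.directives + [x_robots_tag.lower()])


robots = "User-agent: *\nDisallow: /private/\n"
print(blocked_by_robots(robots, "https://example.com/private/report"))
```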

2. Page Performance and Core Web Vitals

Slow pages reduce rankings, hurt engagement, and weaken trust signals used when AI systems select sources.

- LCP: aim for ≤ 2.5s

- INP: aim for ≤ 200ms (Interaction to Next Paint replaced FID as a Core Web Vital in March 2024)

- CLS: aim for ≤ 0.1
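Field data is easier to triage when each value is bucketed the way Google's reports do it. The boundaries below are Google's published Core Web Vitals thresholds, assessed at the 75th percentile of page loads. INP (Interaction to Next Paint) replaced FID in March 2024, which is why it appears here. The dictionary layout is just one way to organize them.

```python
# (good_upper_bound, poor_lower_bound) per metric
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate(metric, value):
    """Classify a 75th-percentile value as good / needs improvement / poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


for metric, value in [("LCP", 2.1), ("INP", 350), ("CLS", 0.3)]:
    print(metric, value, rate(metric, value))
```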


Why it matters: 88.5% of visitors leave a site due to slow loading (Email Vendor Selection, December 2025).

Quick fixes: compress images (WebP), enable caching, minify CSS/JS, use a CDN, and reduce heavy third-party scripts.

Test: Google PageSpeed Insights or GTmetrix.

3. Mobile Usability and Accessibility Problems

With mobile-first indexing, mobile issues affect visibility across all devices. Accessibility gaps can also weaken page clarity and user signals.

- Mobile usability: unreadable text, cramped tap targets, layout overflow, or intrusive popups.

- Accessibility basics: missing alt text, poor heading hierarchy, low contrast, or unlabeled interactive elements.


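Where to check: Google retired the Search Console Mobile Usability report in late 2023, so run Lighthouse (in Chrome DevTools or PageSpeed Insights) on your key templates instead. Fix recurring issues at the template level so improvements apply sitewide.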

Content Problems You Can’t Ignore

Even with perfect technical SEO, weak or misaligned content will struggle to rank. Content quality affects both traditional search performance and AI-driven visibility.

1. Keyword Targeting and Search Intent Mismatch

One of the most common reasons traffic drops is simple: you target the right keyword, but answer the wrong question. When users don’t get what they came for, they leave—and Google adjusts visibility based on that behavior.

- Know the intent: informational (learn), navigational (find a site), commercial (compare options), transactional (take action).

- Common mismatch: sending users to a landing page when they expect a guide, or publishing a blog post when users want a comparison or tool.

- How to diagnose: Google your target keyword and review the top results. If they’re mostly lists/tools/videos and yours is a different format, intent mismatch is likely.
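Before checking SERPs by hand, a rough keyword-modifier heuristic can pre-sort a long keyword list into the four intent types above. This is a sketch, not a classifier: the modifier lists and brand terms are hypothetical, and it does not replace looking at the live results the step above recommends.

```python
# Hypothetical modifier lists; extend them for your niche. Checked in this order.
INTENT_MODIFIERS = {
    "transactional": ("buy", "price", "pricing", "discount", "coupon", "order", "download"),
    "commercial": ("best", "vs", "versus", "review", "reviews", "compare", "alternatives", "top"),
    "informational": ("how", "what", "why", "guide", "tutorial", "examples", "ideas"),
}


def guess_intent(query, brand_terms=()):
    words = query.lower().split()
    # Short queries containing a single-word brand term are treated as navigational.
    if len(words) <= 2 and any(b.lower() in words for b in brand_terms):
        return "navigational"
    for intent, modifiers in INTENT_MODIFIERS.items():
        if any(m in words for m in modifiers):
            return intent
    return "unclear: check the live SERP"


for q in ["acme login", "best running shoes", "how to fix crawl errors", "running shoes"]:
    print(q, "->", guess_intent(q, brand_terms=("acme",)))
```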


2. Thin, Duplicate, or Over-Optimized Content

Search engines deprioritize pages that add little value, repeat what’s already on the site, or force keywords at the expense of clarity.

- Thin content: shallow pages with few examples, no unique insights, and little supporting detail (often 300–500 words on topics that need depth).

- Duplicate content: similar text across multiple URLs (repeated descriptions, boilerplate pages, HTTP/HTTPS or printer versions both indexed).

- Over-optimization: unnatural keyword stuffing in headings, anchors, or meta text that hurts readability.

- Recovery approach: consolidate overlaps with redirects, expand pages that deserve to exist, and remove pages that don’t serve a real user purpose.
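Before consolidating, you can shortlist near-duplicate pages by comparing overlapping word sequences (shingles) with Jaccard similarity. This is a minimal sketch that assumes you have the main body text of each URL. The 5-word shingles and 0.8 threshold are assumptions. Pairwise comparison only scales to a few thousand pages; larger sites usually switch to MinHash.

```python
import re
from itertools import combinations


def shingles(text, k=5):
    """Set of overlapping k-word sequences from lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a, b):
    return len(a & b) / len(a | b) if a | b else 0.0


def near_duplicates(pages, threshold=0.8, k=5):
    """pages: url -> body text. Returns (url1, url2, similarity) pairs at or above threshold."""
    sets = {url: shingles(text, k) for url, text in pages.items()}
    pairs = []
    for u1, u2 in combinations(sorted(sets), 2):
        score = jaccard(sets[u1], sets[u2])
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    return pairs


pages = {
    "/a": "our blue widget ships free worldwide and includes a two year warranty",
    "/b": "our blue widget ships free worldwide and includes a two year warranty",
    "/c": "completely different text about crawl budget and server logs for large sites",
}
print(near_duplicates(pages))
```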


Low-Value or Unhelpful AI-Generated Content

AI-assisted content can help—but only when used correctly. Only 6% of marketers publish fully AI-written content without major human editing (HubSpot, 2024), because unedited AI content rarely performs well long term.

AI content hurts rankings when it’s generic, repetitive, lacks real expertise, or shows obvious machine patterns. Content that simply rephrases top-ranking pages without adding value is especially vulnerable.

AI content performs best when it’s used for structure or research, then refined by humans with original data, real examples, expert quotes, and fact-checking. When paired with uniqueness and editing, AI-assisted content has shown 31% higher engagement despite only minimal perceived quality differences (ColorWhistle, September 2025).

The issue isn’t using AI—it’s publishing content that lacks originality, depth, and expertise.

Authority and Competition Challenges

Sometimes traffic drops aren’t caused by internal problems. They happen because your authority weakens, competitors improve faster, or your brand isn’t strong enough to earn clicks and AI citations.

1. Declining Backlink Quality or Quantity

When strong backlinks disappear, your authority signals fade—and rankings often slide gradually.

- Why links disappear: source sites redesign, pages get deleted, editors remove links, or low-quality domains get deindexed.

- How to spot it: use Ahrefs or Semrush → open the Lost Backlinks report → prioritize recovering links from high-authority, relevant sites.
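If you keep periodic backlink exports, you can also diff two of them yourself. This sketch assumes CSV exports with `source_url`, `target_url`, and `authority` columns. Those column names are hypothetical, so rename them to match your tool. The minimum authority of 30 is an arbitrary cutoff for deciding which lost links are worth chasing.

```python
import csv
import io


def read_export(csv_text):
    """Parse a backlink export into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def lost_links(previous_rows, current_rows, min_authority=30):
    """Links present in the previous export but missing now, highest authority first."""
    current = {(r["source_url"], r["target_url"]) for r in current_rows}
    lost = [
        r for r in previous_rows
        if (r["source_url"], r["target_url"]) not in current
        and float(r["authority"]) >= min_authority
    ]
    return sorted(lost, key=lambda r: float(r["authority"]), reverse=True)


previous = read_export(
    "source_url,target_url,authority\n"
    "https://news.example/a,https://mysite.example/guide,72\n"
    "https://blog.example/b,https://mysite.example/guide,18\n"
    "https://mag.example/c,https://mysite.example/tools,55\n"
)
current = read_export(
    "source_url,target_url,authority\n"
    "https://mag.example/c,https://mysite.example/tools,55\n"
)
for row in lost_links(previous, current):
    print(row["source_url"], "->", row["target_url"], row["authority"])
```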


2. Competitors Improving Faster Than You

SEO is relative. Even if your site stays the same, rankings shift when competitors publish better content, improve UX, or earn stronger authority signals.

- What to compare: content depth and freshness, page experience (speed/clarity), and backlink quality.

- How to check: use Semrush Organic Research or Ahrefs Competing Domains to see who replaced you on your key queries.


3. Weak Brand and Credibility Signals

Brand strength influences trust, click-through rates, and whether AI systems consider you “safe” to cite.

- Signals that matter: branded searches, mentions (even without links), reviews, and media/community visibility.

- Why this matters now: AI results frequently pull from forums and Q&A sources, so community presence can support visibility.


How to Recover Lost Website Traffic: The 4-Step Framework

Once you know what caused the drop, the fastest recoveries come from fixing things in the right order—protect what’s working, repair what’s broken, then rebuild growth.

- Step 1: Protect Your Winners First:

Start with the pages already driving the most traffic and leads. These are your quickest wins and your biggest risk if they decline further.

- Find them: Google Analytics → Engagement → Pages and screens.

- Stabilize them: fix obvious technical issues, refresh outdated sections, and strengthen internal links pointing to them.
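A simple way to decide how many "winners" to protect is to take the smallest set of top pages that together drive most of your sessions. This sketch assumes a mapping of page path to sessions exported from the Pages and screens report. The 80% share is an arbitrary starting point, and you may prefer to rank by conversions instead.

```python
def protect_list(page_sessions, share=0.8):
    """Smallest set of top pages that together drive `share` of all sessions."""
    total = sum(page_sessions.values())
    picked, running = [], 0
    for page, sessions in sorted(page_sessions.items(), key=lambda kv: kv[1], reverse=True):
        if running >= share * total:
            break
        picked.append(page)
        running += sessions
    return picked


sessions = {"/a": 500, "/b": 300, "/c": 100, "/d": 60, "/e": 40}  # hypothetical export
print(protect_list(sessions))
```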

- Step 2: Refresh Your Best Content:

Refreshing existing pages usually beats publishing new ones because the page already has ranking history and backlinks.

- Update outdated stats, examples, and screenshots.

- Add sections users now expect (based on current top-ranking pages).

- Improve structure for scanning (clear headings, shorter paragraphs).

Cadence: high-value pages every 3–6 months; evergreen pages every 6–12 months; seasonal pages as needed.
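That cadence can be turned into a refresh queue. This sketch uses the lower end of each range (90 days for high-value pages, 180 days for evergreen pages), which is an assumption; use the upper ends if your team has less capacity. Seasonal pages are skipped because they follow their own calendar, and the `page_type` labels are hypothetical.

```python
from datetime import date, timedelta

# Lower ends of the cadence ranges, in days (assumed).
REFRESH_EVERY = {"high_value": timedelta(days=90), "evergreen": timedelta(days=180)}


def overdue_pages(pages, today):
    """pages: (url, page_type, last_refreshed) tuples. Returns (url, due_date), oldest due first."""
    overdue = []
    for url, page_type, last_refreshed in pages:
        interval = REFRESH_EVERY.get(page_type)
        if interval is None:
            continue  # seasonal or unknown types are handled separately
        due = last_refreshed + interval
        if due <= today:
            overdue.append((url, due))
    return sorted(overdue, key=lambda item: item[1])


pages = [
    ("/pricing-guide", "high_value", date(2025, 8, 1)),
    ("/seo-basics", "evergreen", date(2025, 7, 1)),
    ("/black-friday", "seasonal", date(2024, 11, 1)),
]
print(overdue_pages(pages, today=date(2025, 12, 1)))
```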

- Step 3: Fix Technical Issues:

Technical issues block recovery. If Google can’t crawl, index, or load pages properly, content updates won’t land.

| Priority | What to fix | Timeline |
|---|---|---|
| Critical | Indexing blocks, broken tracking | Immediately |
| High | Core Web Vitals, mobile usability | Week 1–2 |
| Medium | Broken links, redirect chains | Week 2–3 |
| Nice to have | Schema improvements, internal linking refinements | Week 3–4 |

- Step 4: Build Authority Over Time:

To regain and keep rankings, you need stronger authority signals—especially in competitive spaces.

- Earn authority: original research, expert contributions, PR mentions, and high-quality backlinks.

- Strengthen trust: clear author bios, credible references, reviews, and transparent company info.

Authority work usually takes 3–6 months to show clear gains, but it’s what makes recovery stick.

How Long Website Traffic Recovery Takes

Recovery time depends on what caused the drop. Most sites stabilize first, then grow.

| Timeframe | What you should focus on | What results you’ll typically see | What to track |
|---|---|---|---|
| 2–8 weeks | Fix technical errors, refresh top pages, improve speed and mobile UX | Traffic stabilizes; key pages start to recover | Search Console impressions, average position, CTR, Core Web Vitals |
| 3–6 months | Build authority, improve content depth, strengthen topical clusters | Broader ranking growth and steady traffic gains | Organic sessions, conversions, backlink growth, brand searches |
| 6–12+ months | Recover from penalties, fix site-wide quality issues, rebuild brand trust | Full recovery in competitive or heavily impacted cases | Organic revenue, visibility share vs competitors, AI citations |

Realistic expectations: many sites stabilize in 4–6 weeks and see measurable improvement in 8–12 weeks. Full recovery to previous levels (or beyond) often takes 3–6 months.

How to know it’s working: rising impressions with stable or improving CTR is usually the earliest sign that traffic recovery is underway—even before sessions fully return.


FAQs

Yes. CMS updates, theme/plugin changes, and consent banner edits can break analytics tracking. Compare Google Search Console clicks with analytics organic sessions: if clicks are stable but sessions drop sharply, tracking is likely the problem.

Updates don’t help if they don’t improve usefulness or match current search intent. Traffic can also drop when AI Overviews satisfy the query without a click, or when edits remove signals Google associated with rankings (sections, internal links, headings, or structure).

Core updates can reshuffle rankings based on quality, trust, and user satisfaction. Traffic often falls when competitors align better with what the update rewards, or when Google favors different formats (tools, comparisons, fresher guides).

This usually points to zero-click behavior. Your pages are still showing up, but users are getting answers through AI Overviews, featured snippets, or other SERP features—so they don’t click through as often.

Yes. Indexing problems, slow performance, Core Web Vitals failures, and poor mobile usability can suppress rankings and engagement. Since Google uses mobile-first indexing, mobile issues can impact visibility across both mobile and desktop.

It depends on the cause. Technical fixes may show movement in 2–4 weeks, content improvements often take 4–8 weeks, and authority building typically takes 3–6 months. Stabilization usually comes before growth.

The Bottom Line

Traffic drops heading into 2026 look different than they did even two years ago. AI Overviews are changing click behavior, zero-click searches are common, and algorithm updates reward quality and trust more aggressively than before.

If you’re still asking why your website traffic is dropping, the good news is this: most declines are fixable, but only when you diagnose the real cause and respond in the right order.

- Verify the drop is real (rule out tracking errors).

- Diagnose the cause (gradual vs. sudden, channel impact, what changed).

- Prioritize fixes (protect top pages, resolve technical issues, refresh content).

- Build long-term stability (authority signals, AI visibility, consistent updates).

The “set it and forget it” era is over. The sites winning now treat SEO as ongoing maintenance—where content, technical performance, and credibility work together to protect visibility and keep traffic growing.